Jiandong Xie

A Unified Replay-based Continuous Learning Framework for Spatio-Temporal Prediction on Streaming Data

Apr 23, 2024

Abstract:The widespread deployment of wireless and mobile devices results in a proliferation of spatio-temporal data that is used in applications, e.g., traffic prediction, human mobility mining, and air quality prediction, where spatio-temporal prediction is often essential to enable safety, predictability, or reliability. Many recent proposals that target deep learning for spatio-temporal prediction suffer from so-called catastrophic forgetting, where previously learned knowledge is entirely forgotten when new data arrives. Such proposals may experience deteriorating prediction performance when applied in settings where data streams into the system. To enable spatio-temporal prediction on streaming data, we propose a unified replay-based continuous learning framework. The framework includes a replay buffer of previously learned samples that are fused with training data using a spatio-temporal mixup mechanism in order to preserve historical knowledge effectively, thus avoiding catastrophic forgetting. To enable holistic representation preservation, the framework also integrates a general spatio-temporal autoencoder with a carefully designed spatio-temporal simple siamese (STSimSiam) network that aims to ensure prediction accuracy and avoid holistic feature loss by means of mutual information maximization. The framework further encompasses five spatio-temporal data augmentation methods to enhance the performance of STSimSiam. Extensive experiments on real data offer insight into the effectiveness of the proposed framework.

A Pattern Discovery Approach to Multivariate Time Series Forecasting

Dec 20, 2022

Abstract:Multivariate time series forecasting constitutes important functionality in cyber-physical systems, whose prediction accuracy can be improved significantly by capturing temporal and multivariate correlations among multiple time series. State-of-the-art deep learning methods fail to construct models for full time series because model complexity grows exponentially with time series length. Rather, these methods construct local temporal and multivariate correlations within subsequences, but fail to capture correlations among subsequences, which significantly affect their forecasting accuracy. To capture the temporal and multivariate correlations among subsequences, we design a pattern discovery model, that constructs correlations via diverse pattern functions. While the traditional pattern discovery method uses shared and fixed pattern functions that ignore the diversity across time series. We propose a novel pattern discovery method that can automatically capture diverse and complex time series patterns. We also propose a learnable correlation matrix, that enables the model to capture distinct correlations among multiple time series. Extensive experiments show that our model achieves state-of-the-art prediction accuracy.

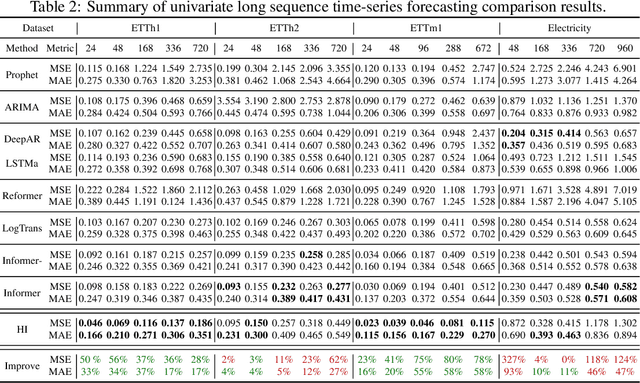

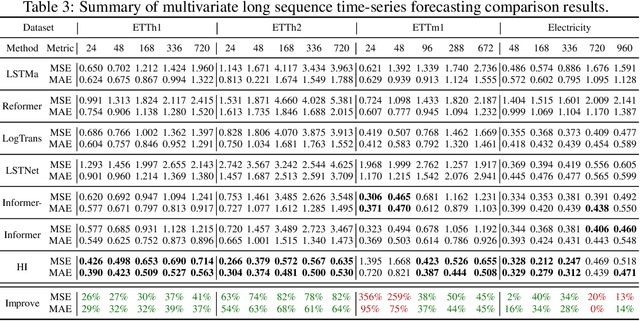

Historical Inertia: An Ignored but Powerful Baseline for Long Sequence Time-series Forecasting

Mar 31, 2021

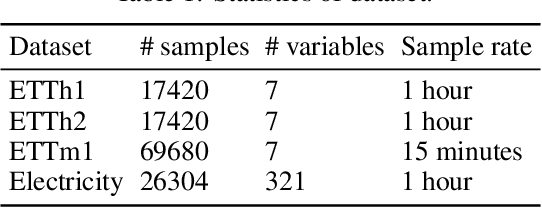

Abstract:Long sequence time-series forecasting (LSTF) has become increasingly popular for its wide range of applications. Though superior models have been proposed to enhance the prediction effectiveness and efficiency, it is reckless to ignore or underestimate one of the most natural and basic temporal properties of time-series, the historical inertia (HI), which refers to the most recent data-points in the input time series. In this paper, we experimentally evaluate the power of historical inertia on four public real-word datasets. The results demonstrate that up to 82% relative improvement over state-of-the-art works can be achieved even by adopting HI directly as output.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge