Igor Udovichenko

Inverting Black-Box Face Recognition Systems via Zero-Order Optimization in Eigenface Space

Jun 11, 2025

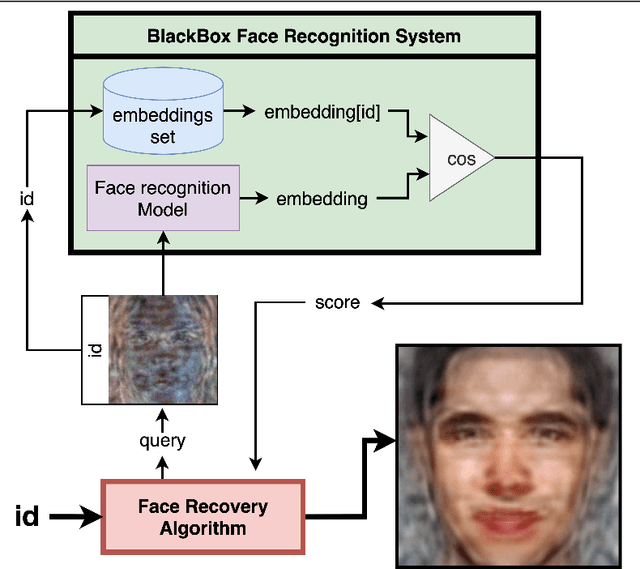

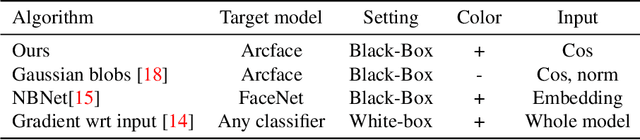

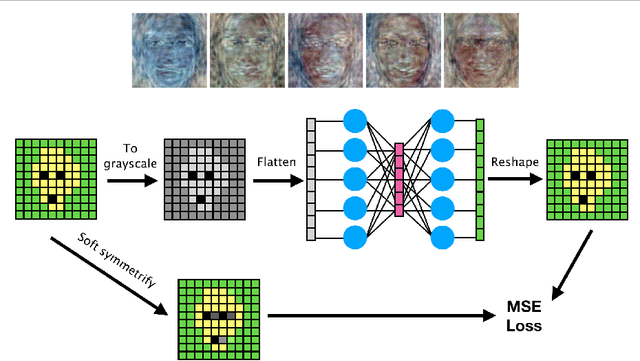

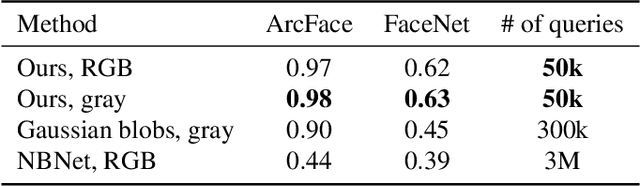

Abstract:Reconstructing facial images from black-box recognition models poses a significant privacy threat. While many methods require access to embeddings, we address the more challenging scenario of model inversion using only similarity scores. This paper introduces DarkerBB, a novel approach that reconstructs color faces by performing zero-order optimization within a PCA-derived eigenface space. Despite this highly limited information, experiments on LFW, AgeDB-30, and CFP-FP benchmarks demonstrate that DarkerBB achieves state-of-the-art verification accuracies in the similarity-only setting, with competitive query efficiency.

Risk-Averse Reinforcement Learning with Itakura-Saito Loss

May 22, 2025Abstract:Risk-averse reinforcement learning finds application in various high-stakes fields. Unlike classical reinforcement learning, which aims to maximize expected returns, risk-averse agents choose policies that minimize risk, occasionally sacrificing expected value. These preferences can be framed through utility theory. We focus on the specific case of the exponential utility function, where we can derive the Bellman equations and employ various reinforcement learning algorithms with few modifications. However, these methods suffer from numerical instability due to the need for exponent computation throughout the process. To address this, we introduce a numerically stable and mathematically sound loss function based on the Itakura-Saito divergence for learning state-value and action-value functions. We evaluate our proposed loss function against established alternatives, both theoretically and empirically. In the experimental section, we explore multiple financial scenarios, some with known analytical solutions, and show that our loss function outperforms the alternatives.

EBES: Easy Benchmarking for Event Sequences

Oct 04, 2024

Abstract:Event sequences, characterized by irregular sampling intervals and a mix of categorical and numerical features, are common data structures in various real-world domains such as healthcare, finance, and user interaction logs. Despite advances in temporal data modeling techniques, there is no standardized benchmarks for evaluating their performance on event sequences. This complicates result comparison across different papers due to varying evaluation protocols, potentially misleading progress in this field. We introduce EBES, a comprehensive benchmarking tool with standardized evaluation scenarios and protocols, focusing on regression and classification problems with sequence-level targets. Our library simplifies benchmarking, dataset addition, and method integration through a unified interface. It includes a novel synthetic dataset and provides preprocessed real-world datasets, including the largest publicly available banking dataset. Our results provide an in-depth analysis of datasets, identifying some as unsuitable for model comparison. We investigate the importance of modeling temporal and sequential components, as well as the robustness and scaling properties of the models. These findings highlight potential directions for future research. Our benchmark aim is to facilitate reproducible research, expediting progress and increasing real-world impacts.

Estimating Barycenters of Distributions with Neural Optimal Transport

Feb 06, 2024

Abstract:Given a collection of probability measures, a practitioner sometimes needs to find an "average" distribution which adequately aggregates reference distributions. A theoretically appealing notion of such an average is the Wasserstein barycenter, which is the primal focus of our work. By building upon the dual formulation of Optimal Transport (OT), we propose a new scalable approach for solving the Wasserstein barycenter problem. Our methodology is based on the recent Neural OT solver: it has bi-level adversarial learning objective and works for general cost functions. These are key advantages of our method, since the typical adversarial algorithms leveraging barycenter tasks utilize tri-level optimization and focus mostly on quadratic cost. We also establish theoretical error bounds for our proposed approach and showcase its applicability and effectiveness on illustrative scenarios and image data setups.

Self-Supervised Learning in Event Sequences: A Comparative Study and Hybrid Approach of Generative Modeling and Contrastive Learning

Jan 30, 2024

Abstract:This study investigates self-supervised learning techniques to obtain representations of Event Sequences. It is a key modality in various applications, including but not limited to banking, e-commerce, and healthcare. We perform a comprehensive study of generative and contrastive approaches in self-supervised learning, applying them both independently. We find that there is no single supreme method. Consequently, we explore the potential benefits of combining these approaches. To achieve this goal, we introduce a novel method that aligns generative and contrastive embeddings as distinct modalities, drawing inspiration from contemporary multimodal research. Generative and contrastive approaches are often treated as mutually exclusive, leaving a gap for their combined exploration. Our results demonstrate that this aligned model performs at least on par with, and mostly surpasses, existing methods and is more universal across a variety of tasks. Furthermore, we demonstrate that self-supervised methods consistently outperform the supervised approach on our datasets.

SeqNAS: Neural Architecture Search for Event Sequence Classification

Jan 06, 2024Abstract:Neural Architecture Search (NAS) methods are widely used in various industries to obtain high quality taskspecific solutions with minimal human intervention. Event Sequences find widespread use in various industrial applications including churn prediction customer segmentation fraud detection and fault diagnosis among others. Such data consist of categorical and real-valued components with irregular timestamps. Despite the usefulness of NAS methods previous approaches only have been applied to other domains images texts or time series. Our work addresses this limitation by introducing a novel NAS algorithm SeqNAS specifically designed for event sequence classification. We develop a simple yet expressive search space that leverages commonly used building blocks for event sequence classification including multihead self attention convolutions and recurrent cells. To perform the search we adopt sequential Bayesian Optimization and utilize previously trained models as an ensemble of teachers to augment knowledge distillation. As a result of our work we demonstrate that our method surpasses state of the art NAS methods and popular architectures suitable for sequence classification and holds great potential for various industrial applications.

Energy-Guided Continuous Entropic Barycenter Estimation for General Costs

Oct 02, 2023

Abstract:Optimal transport (OT) barycenters are a mathematically grounded way of averaging probability distributions while capturing their geometric properties. In short, the barycenter task is to take the average of a collection of probability distributions w.r.t. given OT discrepancies. We propose a novel algorithm for approximating the continuous Entropic OT (EOT) barycenter for arbitrary OT cost functions. Our approach is built upon the dual reformulation of the EOT problem based on weak OT, which has recently gained the attention of the ML community. Beyond its novelty, our method enjoys several advantageous properties: (i) we establish quality bounds for the recovered solution; (ii) this approach seemlessly interconnects with the Energy-Based Models (EBMs) learning procedure enabling the use of well-tuned algorithms for the problem of interest; (iii) it provides an intuitive optimization scheme avoiding min-max, reinforce and other intricate technical tricks. For validation, we consider several low-dimensional scenarios and image-space setups, including non-Euclidean cost functions. Furthermore, we investigate the practical task of learning the barycenter on an image manifold generated by a pretrained generative model, opening up new directions for real-world applications.

Darker than Black-Box: Face Reconstruction from Similarity Queries

Jul 02, 2021

Abstract:Several methods for inversion of face recognition models were recently presented, attempting to reconstruct a face from deep templates. Although some of these approaches work in a black-box setup using only face embeddings, usually, on the end-user side, only similarity scores are provided. Therefore, these algorithms are inapplicable in such scenarios. We propose a novel approach that allows reconstructing the face querying only similarity scores of the black-box model. While our algorithm operates in a more general setup, experiments show that it is query efficient and outperforms the existing methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge