Huanran Wang

MultiEditor: Controllable Multimodal Object Editing for Driving Scenarios Using 3D Gaussian Splatting Priors

Jul 30, 2025Abstract:Autonomous driving systems rely heavily on multimodal perception data to understand complex environments. However, the long-tailed distribution of real-world data hinders generalization, especially for rare but safety-critical vehicle categories. To address this challenge, we propose MultiEditor, a dual-branch latent diffusion framework designed to edit images and LiDAR point clouds in driving scenarios jointly. At the core of our approach is introducing 3D Gaussian Splatting (3DGS) as a structural and appearance prior for target objects. Leveraging this prior, we design a multi-level appearance control mechanism--comprising pixel-level pasting, semantic-level guidance, and multi-branch refinement--to achieve high-fidelity reconstruction across modalities. We further propose a depth-guided deformable cross-modality condition module that adaptively enables mutual guidance between modalities using 3DGS-rendered depth, significantly enhancing cross-modality consistency. Extensive experiments demonstrate that MultiEditor achieves superior performance in visual and geometric fidelity, editing controllability, and cross-modality consistency. Furthermore, generating rare-category vehicle data with MultiEditor substantially enhances the detection accuracy of perception models on underrepresented classes.

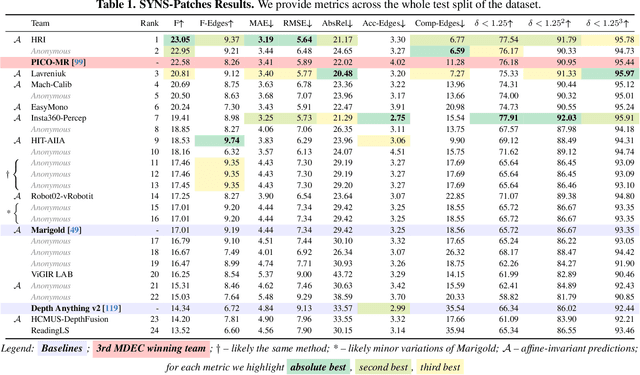

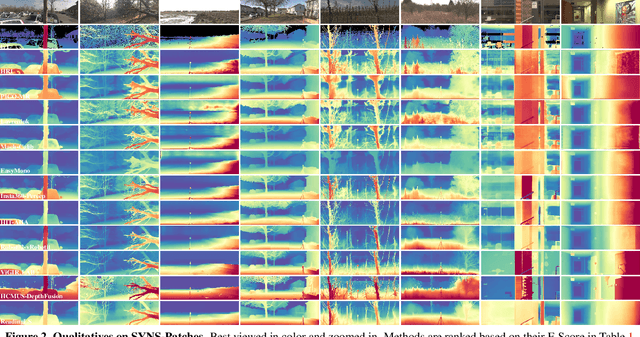

The Fourth Monocular Depth Estimation Challenge

Apr 24, 2025

Abstract:This paper presents the results of the fourth edition of the Monocular Depth Estimation Challenge (MDEC), which focuses on zero-shot generalization to the SYNS-Patches benchmark, a dataset featuring challenging environments in both natural and indoor settings. In this edition, we revised the evaluation protocol to use least-squares alignment with two degrees of freedom to support disparity and affine-invariant predictions. We also revised the baselines and included popular off-the-shelf methods: Depth Anything v2 and Marigold. The challenge received a total of 24 submissions that outperformed the baselines on the test set; 10 of these included a report describing their approach, with most leading methods relying on affine-invariant predictions. The challenge winners improved the 3D F-Score over the previous edition's best result, raising it from 22.58% to 23.05%.

Act in Collusion: A Persistent Distributed Multi-Target Backdoor in Federated Learning

Nov 06, 2024Abstract:Federated learning, a novel paradigm designed to protect data privacy, is vulnerable to backdoor attacks due to its distributed nature. Current research often designs attacks based on a single attacker with a single backdoor, overlooking more realistic and complex threats in federated learning. We propose a more practical threat model for federated learning: the distributed multi-target backdoor. In this model, multiple attackers control different clients, embedding various triggers and targeting different classes, collaboratively implanting backdoors into the global model via central aggregation. Empirical validation shows that existing methods struggle to maintain the effectiveness of multiple backdoors in the global model. Our key insight is that similar backdoor triggers cause parameter conflicts and injecting new backdoors disrupts gradient directions, significantly weakening some backdoors performance. To solve this, we propose a Distributed Multi-Target Backdoor Attack (DMBA), ensuring efficiency and persistence of backdoors from different malicious clients. To avoid parameter conflicts, we design a multi-channel dispersed frequency trigger strategy to maximize trigger differences. To mitigate gradient interference, we introduce backdoor replay in local training to neutralize conflicting gradients. Extensive validation shows that 30 rounds after the attack, Attack Success Rates of three different backdoors from various clients remain above 93%. The code will be made publicly available after the review period.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge