Gourav Roy

MAB Optimizer for Estimating Math Question Difficulty via Inverse CV without NLP

Aug 26, 2025

Abstract:The evolution of technology and education is driving the emergence of Intelligent & Autonomous Tutoring Systems (IATS), where objective and domain-agnostic methods for determining question difficulty are essential. Traditional human labeling is subjective, and existing NLP-based approaches fail in symbolic domains like algebra. This study introduces the Approach of Passive Measures among Educands (APME), a reinforcement learning-based Multi-Armed Bandit (MAB) framework that estimates difficulty solely from solver performance data -- marks obtained and time taken -- without requiring linguistic features or expert labels. By leveraging the inverse coefficient of variation as a risk-adjusted metric, the model provides an explainable and scalable mechanism for adaptive assessment. Empirical validation was conducted on three heterogeneous datasets. Across these diverse contexts, the model achieved an average R2 of 0.9213 and an average RMSE of 0.0584, confirming its robustness, accuracy, and adaptability to different educational levels and assessment formats. Compared with baseline approaches-such as regression-based, NLP-driven, and IRT models-the proposed framework consistently outperformed alternatives, particularly in purely symbolic domains. The findings highlight that (i) item heterogeneity strongly influences perceived difficulty, and (ii) variance in solver outcomes is as critical as mean performance for adaptive allocation. Pedagogically, the model aligns with Vygotskys Zone of Proximal Development by identifying tasks that balance challenge and attainability, supporting motivation while minimizing disengagement. This domain-agnostic, self-supervised approach advances difficulty tagging in IATS and can be extended beyond algebra wherever solver interaction data is available

DeepRacer: Educational Autonomous Racing Platform for Experimentation with Sim2Real Reinforcement Learning

Nov 05, 2019

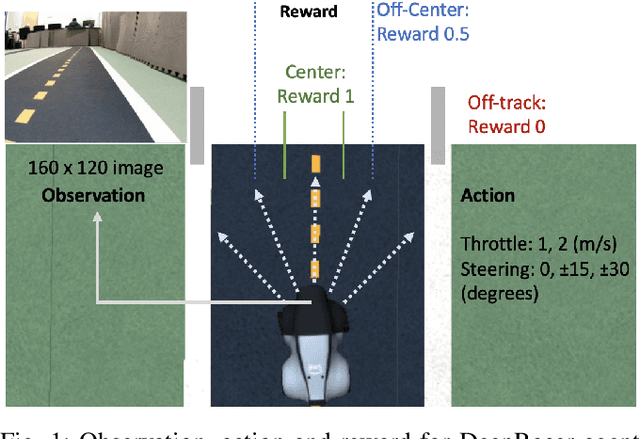

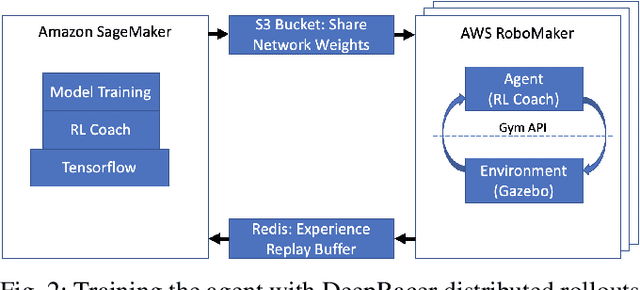

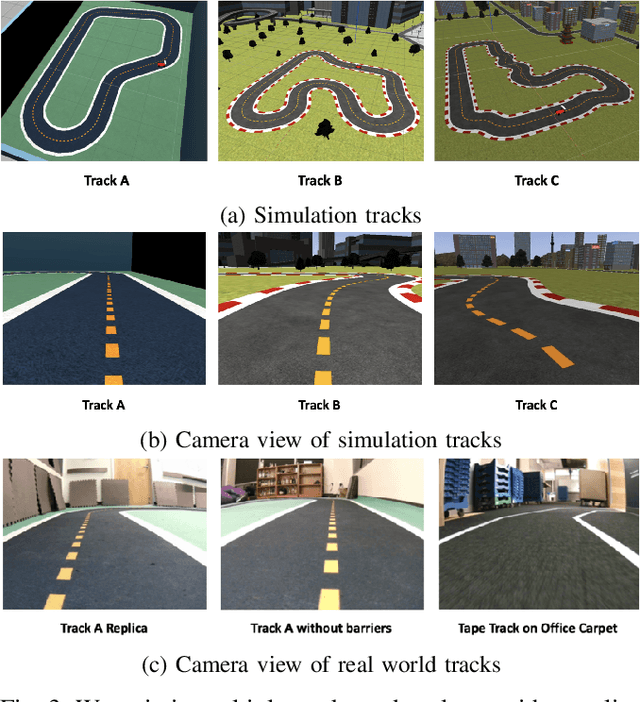

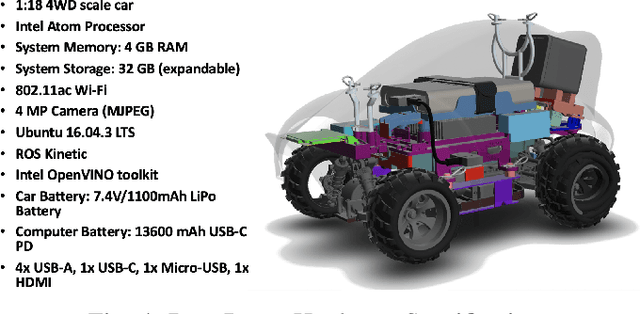

Abstract:DeepRacer is a platform for end-to-end experimentation with RL and can be used to systematically investigate the key challenges in developing intelligent control systems. Using the platform, we demonstrate how a 1/18th scale car can learn to drive autonomously using RL with a monocular camera. It is trained in simulation with no additional tuning in physical world and demonstrates: 1) formulation and solution of a robust reinforcement learning algorithm, 2) narrowing the reality gap through joint perception and dynamics, 3) distributed on-demand compute architecture for training optimal policies, and 4) a robust evaluation method to identify when to stop training. It is the first successful large-scale deployment of deep reinforcement learning on a robotic control agent that uses only raw camera images as observations and a model-free learning method to perform robust path planning. We open source our code and video demo on GitHub: https://git.io/fjxoJ.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge