Gary S. Collins

Topic Group 6 of the STRATOS initiative

Performance evaluation of predictive AI models to support medical decisions: Overview and guidance

Dec 13, 2024

Abstract: A myriad of measures to illustrate the performance of predictive artificial intelligence (AI) models have been proposed in the literature. Selecting appropriate performance measures is essential for predictive AI models that are developed to be used in medical practice, because poorly performing models may harm patients and lead to increased costs. We aim to assess the merits of classic and contemporary performance measures when validating predictive AI models for use in medical practice. We focus on models with a binary outcome. We discuss 32 performance measures covering five performance domains (discrimination, calibration, overall, classification, and clinical utility) along with accompanying graphical assessments. The first four domains cover statistical performance; the fifth covers decision-analytic performance. We explain why two key characteristics are important when selecting which performance measures to assess: (1) whether the measure's expected value is optimized when it is calculated using the correct probabilities (i.e., a "proper" measure), and (2) whether it reflects either purely statistical performance or decision-analytic performance by properly considering misclassification costs. Seventeen measures exhibit both characteristics, fourteen exhibit one, and one measure (F1) exhibits neither. All classification measures (such as classification accuracy and F1) are improper for clinically relevant decision thresholds other than 0.5 or the prevalence. We recommend reporting the following measures and plots as essential: AUROC, a calibration plot, a clinical utility measure such as net benefit with decision curve analysis, and a plot of the probability distributions per outcome category.
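To make three of the recommended measures concrete, here is a minimal Python sketch (not code from the paper) for a binary outcome: AUROC (discrimination), the Brier score (an overall measure that is a proper scoring rule), and net benefit at a decision threshold t, using the standard definition NB = TP/n − FP/n × t/(1 − t), where patients with predicted risk ≥ t are classified as positive. The function names and the six-patient toy data are hypothetical and serve only as an illustration.

```python
from typing import Sequence


def auroc(y: Sequence[int], p: Sequence[float]) -> float:
    """AUROC as the probability that a randomly chosen event has a higher
    predicted risk than a randomly chosen non-event (ties count as 1/2)."""
    pos = [pi for yi, pi in zip(y, p) if yi == 1]
    neg = [pi for yi, pi in zip(y, p) if yi == 0]
    if not pos or not neg:
        raise ValueError("need at least one event and one non-event")
    concordant = 0.0
    for a in pos:
        for b in neg:
            if a > b:
                concordant += 1.0
            elif a == b:
                concordant += 0.5
    return concordant / (len(pos) * len(neg))


def brier_score(y: Sequence[int], p: Sequence[float]) -> float:
    """Mean squared difference between predicted risk and observed outcome."""
    return sum((pi - yi) ** 2 for yi, pi in zip(y, p)) / len(y)


def net_benefit(y: Sequence[int], p: Sequence[float], t: float) -> float:
    """Net benefit at threshold t: TP/n - FP/n * t / (1 - t).
    Patients with predicted risk >= t are treated (classified positive)."""
    if not 0.0 < t < 1.0:
        raise ValueError("threshold must lie strictly between 0 and 1")
    n = len(y)
    tp = sum(1 for yi, pi in zip(y, p) if pi >= t and yi == 1)
    fp = sum(1 for yi, pi in zip(y, p) if pi >= t and yi == 0)
    # The odds t/(1 - t) weight a false positive relative to a true positive.
    return tp / n - fp / n * t / (1.0 - t)


if __name__ == "__main__":
    # Hypothetical toy data: observed outcomes (1 = event) and predicted risks.
    y = [0, 0, 1, 1, 0, 1]
    p = [0.1, 0.4, 0.35, 0.8, 0.2, 0.7]
    t = 0.3  # hypothetical decision threshold
    print(f"AUROC: {auroc(y, p):.3f}")                                # 0.889
    print(f"Brier: {brier_score(y, p):.3f}")                          # 0.127
    print(f"NB (model): {net_benefit(y, p, t):.3f}")                  # 0.429
    print(f"NB (treat all): {net_benefit(y, [1.0] * len(y), t):.3f}")  # 0.286
```

Comparing the model's net benefit with the treat-all strategy (every predicted risk set to 1) at the same threshold is the basic step of decision curve analysis, which repeats this comparison over a range of clinically relevant thresholds.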

Bibliometric Analysis of Publisher and Journal Instructions to Authors on Generative-AI in Academic and Scientific Publishing

Jul 21, 2023

Abstract: We aim to determine the extent and content of guidance for authors regarding the use of generative AI (GAI), Generative Pre-trained Transformers (GPTs), and tools powered by large language models (LLMs) among the top 100 academic publishers and journals in science. The websites of these publishers and journals were screened between 19 and 20 May 2023. Among the largest 100 publishers, 17% provided guidance on the use of GAI, of which 12 (70.6%) were among the top 25 publishers. Among the top 100 journals, 70% provided guidance on GAI. Of those with guidance, 94.1% of publishers and 95.7% of journals prohibited listing GAI as an author. Four journals (5.7%) explicitly prohibited the use of GAI in generating a manuscript, while 3 (17.6%) publishers and 15 (21.4%) journals indicated that their guidance applied exclusively to the writing process. Regarding disclosure of GAI use, 42.8% of publishers and 44.3% of journals included specific disclosure criteria. Guidance varied on where to disclose the use of GAI, including the methods, acknowledgments, cover letter, or a new section. It also varied in how GAI guidance could be accessed and in how journal and publisher instructions to authors were linked. Some top publishers and journals provide no guidance on the use of GAI by authors. Among those that do, there is substantial heterogeneity in the allowable uses of GAI and in how it should be disclosed, and in some instances this heterogeneity persists among affiliated publishers and journals. The lack of standardization burdens authors and threatens to limit the effectiveness of these regulations. Standardized guidelines are needed to protect the integrity of scientific output as GAI continues to grow in popularity.

Development of the ChatGPT, Generative Artificial Intelligence and Natural Large Language Models for Accountable Reporting and Use (CANGARU) Guidelines

Jul 18, 2023

Abstract: The swift progress and ubiquitous adoption of generative AI (GAI), Generative Pre-trained Transformers (GPTs), and large language models (LLMs) such as ChatGPT have raised questions about their ethical application, use, and disclosure in scholarly research and scientific publications. A few publishers and journals have recently created their own sets of rules; however, the absence of a unified approach may lead to a 'Babel Tower Effect', resulting in confusion rather than the desired standardization. In response, we present the ChatGPT, Generative Artificial Intelligence, and Natural Large Language Models for Accountable Reporting and Use Guidelines (CANGARU) initiative, which aims to foster a cross-disciplinary, global, and inclusive consensus on the ethical use, disclosure, and proper reporting of GAI/GPT/LLM technologies in academia. The present protocol consists of four parts: (a) an ongoing systematic review of GAI/GPT/LLM applications to understand the linked ideas, findings, and reporting standards in scholarly research, and to formulate guidelines for their use and disclosure; (b) a bibliometric analysis of existing author guidelines in journals that mention GAI/GPT/LLM, with the goal of evaluating these guidelines, analyzing the disparities in their recommendations, and identifying common rules that can be brought into the Delphi consensus process; (c) a Delphi survey to establish agreement on the items for the guidelines, ensuring principled GAI/GPT/LLM use, disclosure, and reporting in academia; and (d) the subsequent development and dissemination of the finalized guidelines and their supplementary explanation and elaboration documents.

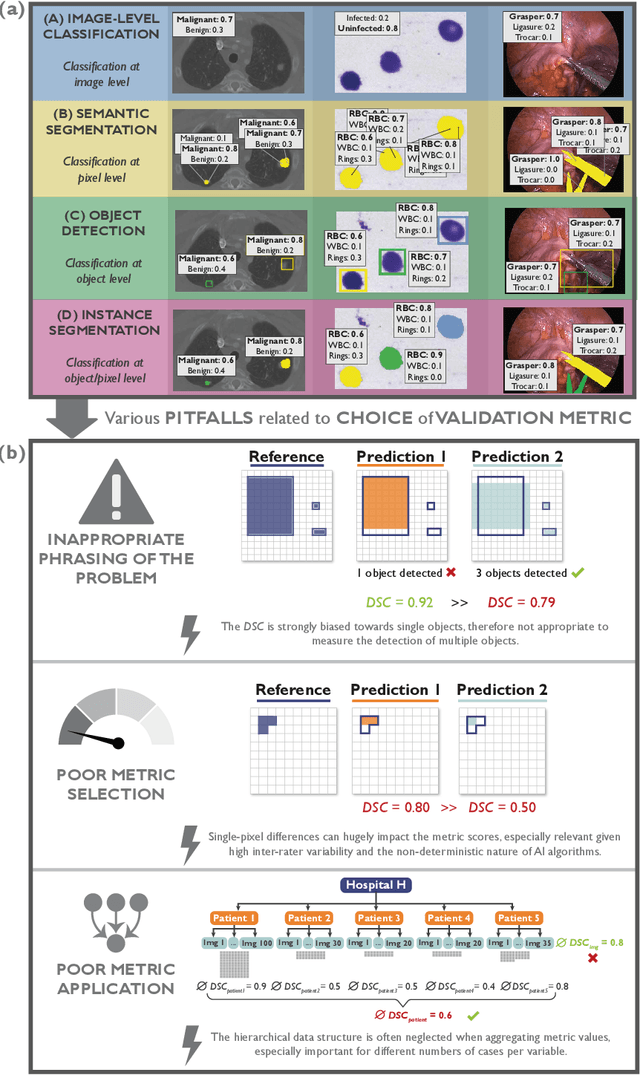

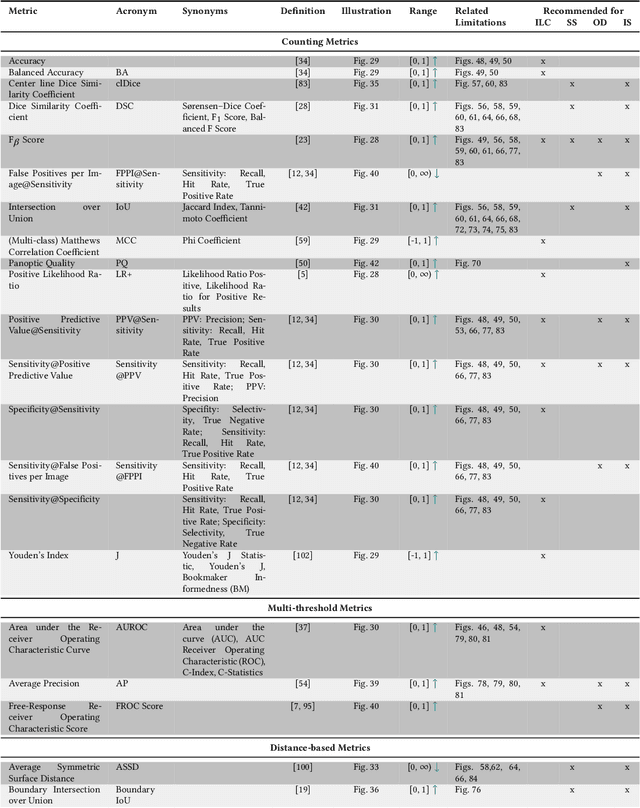

Understanding metric-related pitfalls in image analysis validation

Feb 09, 2023

Abstract: Validation metrics are key for the reliable tracking of scientific progress and for bridging the current chasm between artificial intelligence (AI) research and its translation into practice. However, increasing evidence shows that, particularly in image analysis, metrics are often chosen inadequately in relation to the underlying research problem. This could be attributed to a lack of accessibility of metric-related knowledge: while taking into account the individual strengths, weaknesses, and limitations of validation metrics is a critical prerequisite to making educated choices, the relevant knowledge is currently scattered and poorly accessible to individual researchers. Based on a multi-stage Delphi process conducted by a multidisciplinary expert consortium, as well as extensive community feedback, the present work provides the first reliable and comprehensive common point of access to information on pitfalls related to validation metrics in image analysis. Although focused on biomedical image analysis, the work has the potential to transfer to other fields: the addressed pitfalls generalize across application domains and are categorized according to a newly created, domain-agnostic taxonomy. To facilitate comprehension, illustrations and specific examples accompany each pitfall. As a structured body of information accessible to researchers of all levels of expertise, this work enhances global comprehension of a key topic in image analysis validation.

Metrics reloaded: Pitfalls and recommendations for image analysis validation

Jun 03, 2022

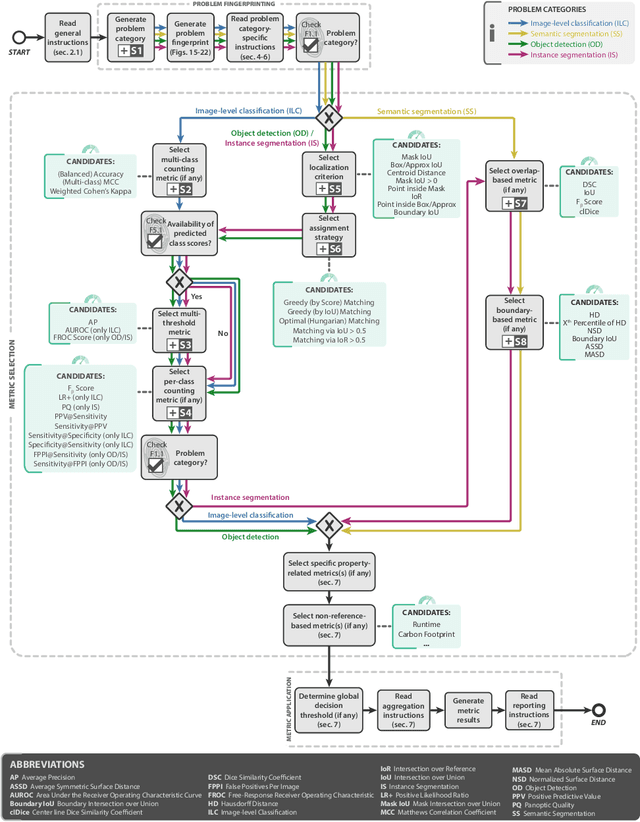

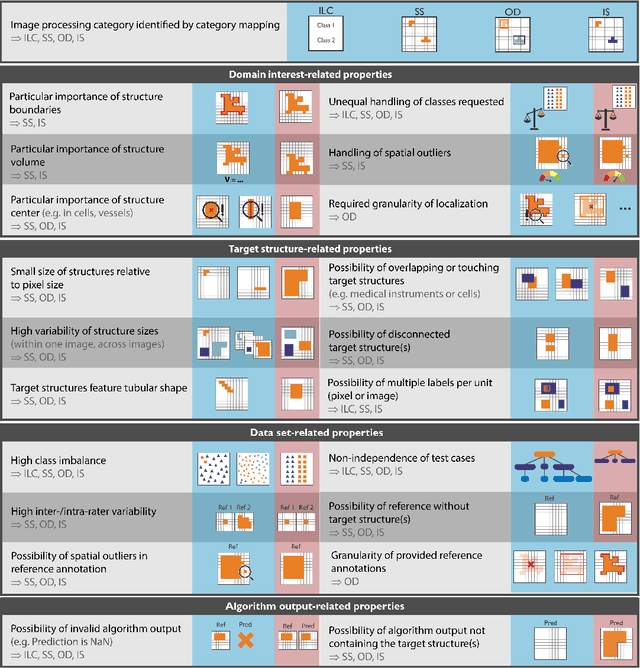

Abstract: The field of automatic biomedical image analysis crucially depends on robust and meaningful performance metrics for algorithm validation. Current metric usage, however, is often ill-informed and does not reflect the underlying domain interest. Here, we present a comprehensive framework that guides researchers towards choosing performance metrics in a problem-aware manner. Specifically, we focus on biomedical image analysis problems that can be interpreted as a classification task at image, object or pixel level. The framework first compiles domain interest-, target structure-, data set- and algorithm output-related properties of a given problem into a problem fingerprint, while also mapping it to the appropriate problem category, namely image-level classification, semantic segmentation, instance segmentation, or object detection. It then guides users through the process of selecting and applying a set of appropriate validation metrics while making them aware of potential pitfalls related to individual choices. In this paper, we describe the current status of the Metrics Reloaded recommendation framework, with the goal of obtaining constructive feedback from the image analysis community. The current version has been developed within an international consortium of more than 60 image analysis experts and will be made openly available as a user-friendly toolkit after community-driven optimization.
