Erik Blasch

Air Force Office of Scientific Research

Image Prior and Posterior Conditional Probability Representation for Efficient Damage Assessment

Oct 26, 2023

Abstract:It is important to quantify Damage Assessment (DA) for Human Assistance and Disaster Response (HADR) applications. In this paper, to achieve efficient and scalable DA in HADR, an image prior and posterior conditional probability (IP2CP) is developed as an effective computational imaging representation. Equipped with the IP2CP representation, the matching pre- and post-disaster images are effectively encoded into one image that is then processed using deep learning approaches to determine the damage levels. Two scenarios of crucial importance for the practical use of DA in HADR applications are examined: pixel-wise semantic segmentation and patch-based contrastive learning-based global damage classification. Results achieved by IP2CP in both scenarios demonstrate promising performances, showing that our IP2CP-based methods within the deep learning framework can effectively achieve data and computational efficiency, which is of utmost importance for the DA in HADR applications.

Transparent Object Tracking with Enhanced Fusion Module

Sep 13, 2023

Abstract:Accurate tracking of transparent objects, such as glasses, plays a critical role in many robotic tasks such as robot-assisted living. Due to the adaptive and often reflective texture of such objects, traditional tracking algorithms that rely on general-purpose learned features suffer from reduced performance. Recent research has proposed to instill transparency awareness into existing general object trackers by fusing purpose-built features. However, with the existing fusion techniques, the addition of new features causes a change in the latent space making it impossible to incorporate transparency awareness on trackers with fixed latent spaces. For example, many of the current days transformer-based trackers are fully pre-trained and are sensitive to any latent space perturbations. In this paper, we present a new feature fusion technique that integrates transparency information into a fixed feature space, enabling its use in a broader range of trackers. Our proposed fusion module, composed of a transformer encoder and an MLP module, leverages key query-based transformations to embed the transparency information into the tracking pipeline. We also present a new two-step training strategy for our fusion module to effectively merge transparency features. We propose a new tracker architecture that uses our fusion techniques to achieve superior results for transparent object tracking. Our proposed method achieves competitive results with state-of-the-art trackers on TOTB, which is the largest transparent object tracking benchmark recently released. Our results and the implementation of code will be made publicly available at https://github.com/kalyan0510/TOTEM.

CCTV-Gun: Benchmarking Handgun Detection in CCTV Images

Apr 02, 2023

Abstract:Gun violence is a critical security problem, and it is imperative for the computer vision community to develop effective gun detection algorithms for real-world scenarios, particularly in Closed Circuit Television (CCTV) surveillance data. Despite significant progress in visual object detection, detecting guns in real-world CCTV images remains a challenging and under-explored task. Firearms, especially handguns, are typically very small in size, non-salient in appearance, and often severely occluded or indistinguishable from other small objects. Additionally, the lack of principled benchmarks and difficulty collecting relevant datasets further hinder algorithmic development. In this paper, we present a meticulously crafted and annotated benchmark, called \textbf{CCTV-Gun}, which addresses the challenges of detecting handguns in real-world CCTV images. Our contribution is three-fold. Firstly, we carefully select and analyze real-world CCTV images from three datasets, manually annotate handguns and their holders, and assign each image with relevant challenge factors such as blur and occlusion. Secondly, we propose a new cross-dataset evaluation protocol in addition to the standard intra-dataset protocol, which is vital for gun detection in practical settings. Finally, we comprehensively evaluate both classical and state-of-the-art object detection algorithms, providing an in-depth analysis of their generalizing abilities. The benchmark will facilitate further research and development on this topic and ultimately enhance security. Code, annotations, and trained models are available at https://github.com/srikarym/CCTV-Gun.

DeFakePro: Decentralized DeepFake Attacks Detection using ENF Authentication

Jul 22, 2022

Abstract:Advancements in generative models, like Deepfake allows users to imitate a targeted person and manipulate online interactions. It has been recognized that disinformation may cause disturbance in society and ruin the foundation of trust. This article presents DeFakePro, a decentralized consensus mechanism-based Deepfake detection technique in online video conferencing tools. Leveraging Electrical Network Frequency (ENF), an environmental fingerprint embedded in digital media recording, affords a consensus mechanism design called Proof-of-ENF (PoENF) algorithm. The similarity in ENF signal fluctuations is utilized in the PoENF algorithm to authenticate the media broadcasted in conferencing tools. By utilizing the video conferencing setup with malicious participants to broadcast deep fake video recordings to other participants, the DeFakePro system verifies the authenticity of the incoming media in both audio and video channels.

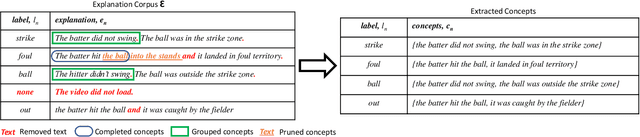

Automatic Concept Extraction for Concept Bottleneck-based Video Classification

Jun 21, 2022

Abstract:Recent efforts in interpretable deep learning models have shown that concept-based explanation methods achieve competitive accuracy with standard end-to-end models and enable reasoning and intervention about extracted high-level visual concepts from images, e.g., identifying the wing color and beak length for bird-species classification. However, these concept bottleneck models rely on a necessary and sufficient set of predefined concepts-which is intractable for complex tasks such as video classification. For complex tasks, the labels and the relationship between visual elements span many frames, e.g., identifying a bird flying or catching prey-necessitating concepts with various levels of abstraction. To this end, we present CoDEx, an automatic Concept Discovery and Extraction module that rigorously composes a necessary and sufficient set of concept abstractions for concept-based video classification. CoDEx identifies a rich set of complex concept abstractions from natural language explanations of videos-obviating the need to predefine the amorphous set of concepts. To demonstrate our method's viability, we construct two new public datasets that combine existing complex video classification datasets with short, crowd-sourced natural language explanations for their labels. Our method elicits inherent complex concept abstractions in natural language to generalize concept-bottleneck methods to complex tasks.

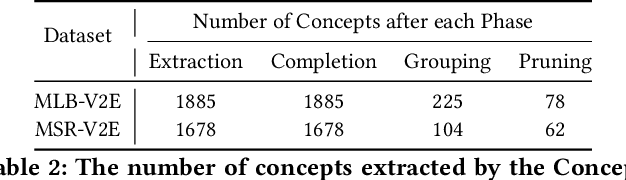

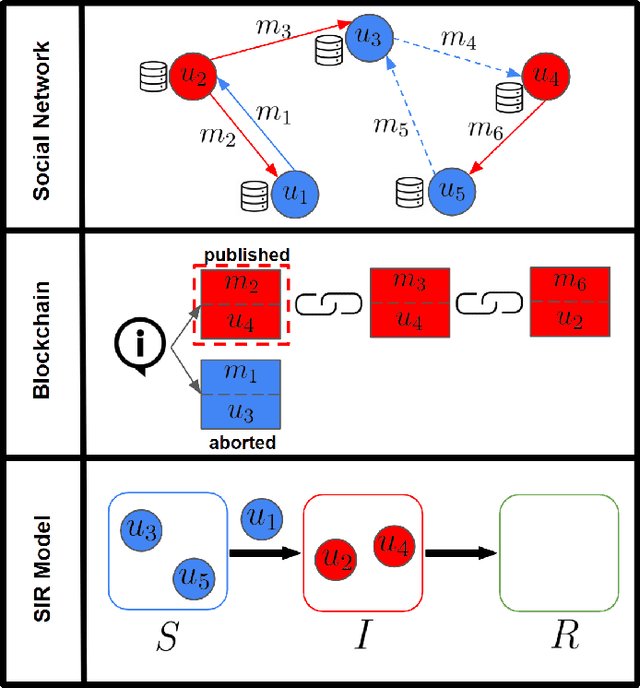

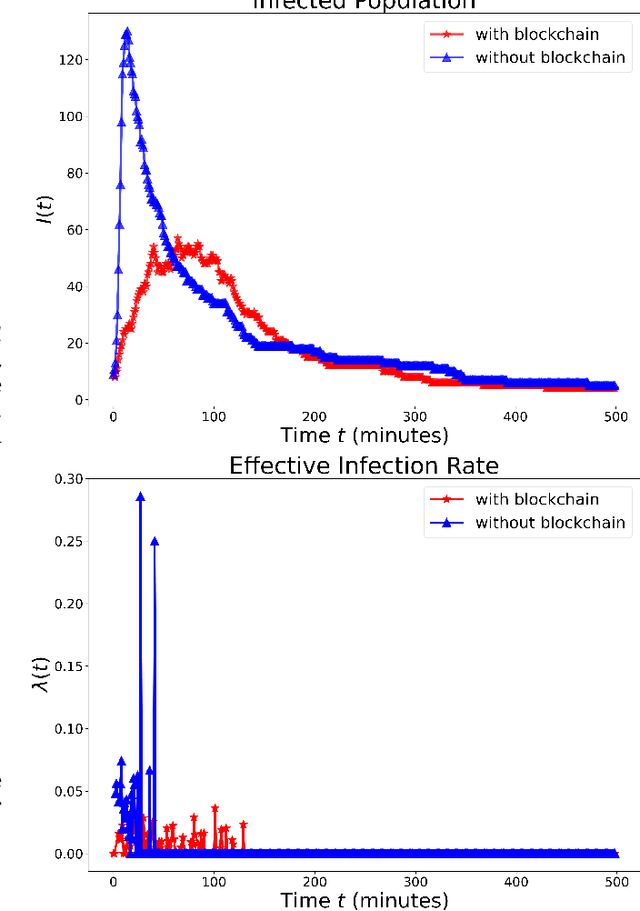

Mitigating Misinformation Spread on Blockchain Enabled Social Media Networks

Feb 09, 2022

Abstract:The paper develops a blockchain protocol for a social media network (BE-SMN) to mitigate the spread of misinformation. BE-SMN is derived based on the information transmission-time distribution by modeling the misinformation transmission as double-spend attacks on blockchain. The misinformation distribution is then incorporated into the SIR (Susceptible, Infectious, or Recovered) model, which substitutes the single rate parameter in the traditional SIR model. Then, on a multi-community network, we study the propagation of misinformation numerically and show that the proposed blockchain enabled social media network outperforms the baseline network in flattening the curve of the infected population.

AAAI FSS-21: Artificial Intelligence in Government and Public Sector Proceedings

Dec 10, 2021Abstract:Proceedings of the AAAI Fall Symposium on Artificial Intelligence in Government and Public Sector, Washington, DC, USA, November 4-6, 2021

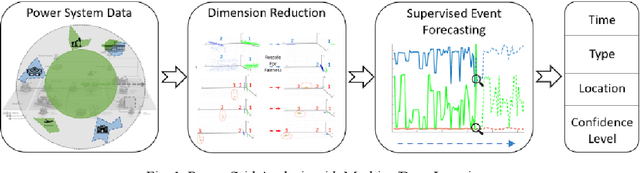

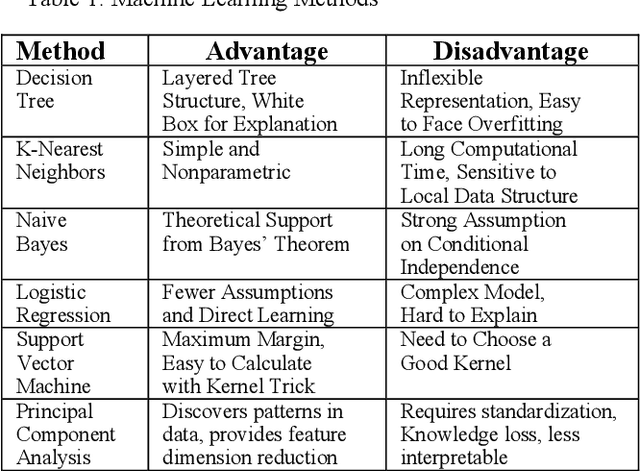

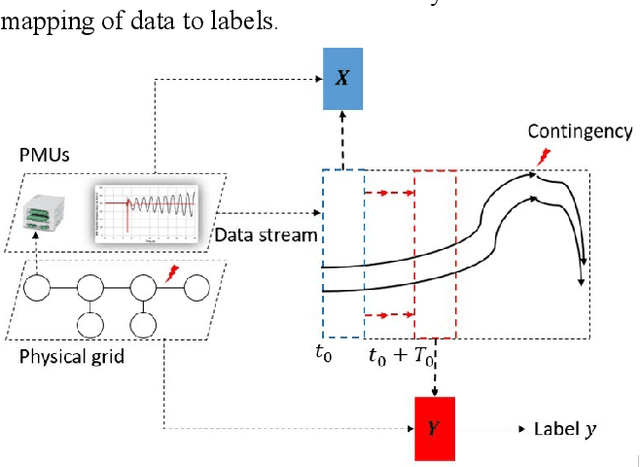

The Powerful Use of AI in the Energy Sector: Intelligent Forecasting

Nov 03, 2021

Abstract:Artificial Intelligence (AI) techniques continue to broaden across governmental and public sectors, such as power and energy - which serve as critical infrastructures for most societal operations. However, due to the requirements of reliability, accountability, and explainability, it is risky to directly apply AI-based methods to power systems because society cannot afford cascading failures and large-scale blackouts, which easily cost billions of dollars. To meet society requirements, this paper proposes a methodology to develop, deploy, and evaluate AI systems in the energy sector by: (1) understanding the power system measurements with physics, (2) designing AI algorithms to forecast the need, (3) developing robust and accountable AI methods, and (4) creating reliable measures to evaluate the performance of the AI model. The goal is to provide a high level of confidence to energy utility users. For illustration purposes, the paper uses power system event forecasting (PEF) as an example, which carefully analyzes synchrophasor patterns measured by the Phasor Measurement Units (PMUs). Such a physical understanding leads to a data-driven framework that reduces the dimensionality with physics and forecasts the event with high credibility. Specifically, for dimensionality reduction, machine learning arranges physical information from different dimensions, resulting inefficient information extraction. For event forecasting, the supervised learning model fuses the results of different models to increase the confidence. Finally, comprehensive experiments demonstrate the high accuracy, efficiency, and reliability as compared to other state-of-the-art machine learning methods.

Certifiable Artificial Intelligence Through Data Fusion

Nov 03, 2021

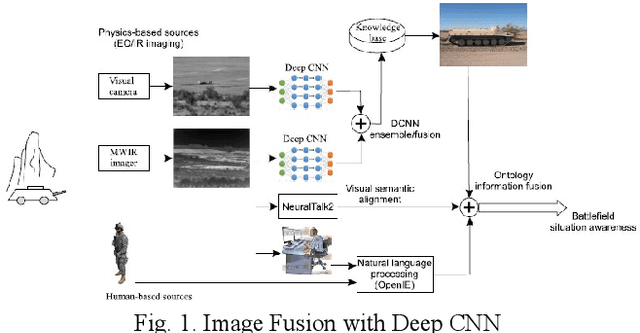

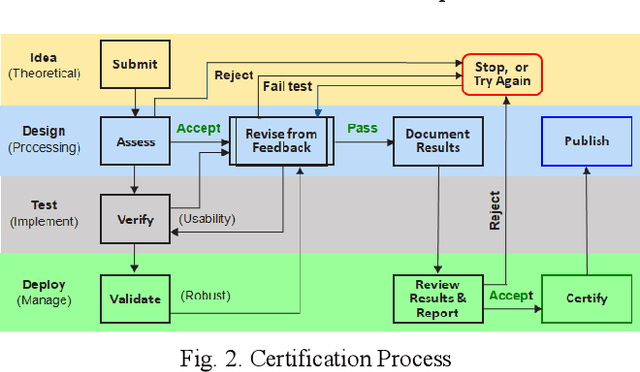

Abstract:This paper reviews and proposes concerns in adopting, fielding, and maintaining artificial intelligence (AI) systems. While the AI community has made rapid progress, there are challenges in certifying AI systems. Using procedures from design and operational test and evaluation, there are opportunities towards determining performance bounds to manage expectations of intended use. A notional use case is presented with image data fusion to support AI object recognition certifiability considering precision versus distance.

NIDA-CLIFGAN: Natural Infrastructure Damage Assessment through Efficient Classification Combining Contrastive Learning, Information Fusion and Generative Adversarial Networks

Oct 27, 2021

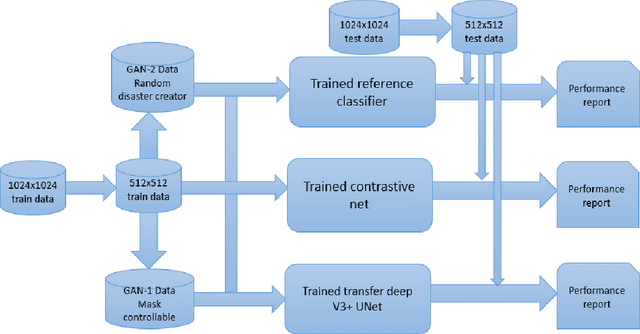

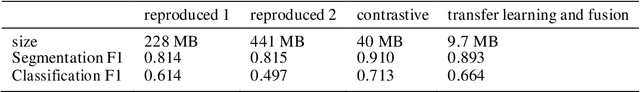

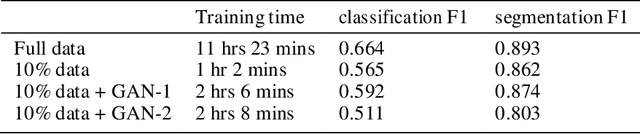

Abstract:During natural disasters, aircraft and satellites are used to survey the impacted regions. Usually human experts are needed to manually label the degrees of the building damage so that proper humanitarian assistance and disaster response (HADR) can be achieved, which is labor-intensive and time-consuming. Expecting human labeling of major disasters over a wide area gravely slows down the HADR efforts. It is thus of crucial interest to take advantage of the cutting-edge Artificial Intelligence and Machine Learning techniques to speed up the natural infrastructure damage assessment process to achieve effective HADR. Accordingly, the paper demonstrates a systematic effort to achieve efficient building damage classification. First, two novel generative adversarial nets (GANs) are designed to augment data used to train the deep-learning-based classifier. Second, a contrastive learning based method using novel data structures is developed to achieve great performance. Third, by using information fusion, the classifier is effectively trained with very few training data samples for transfer learning. All the classifiers are small enough to be loaded in a smart phone or simple laptop for first responders. Based on the available overhead imagery dataset, results demonstrate data and computational efficiency with 10% of the collected data combined with a GAN reducing the time of computation from roughly half a day to about 1 hour with roughly similar classification performances.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge