Dimitri Gominski

LaSTIG

Mining Field Data for Tree Species Recognition at Scale

Aug 28, 2024

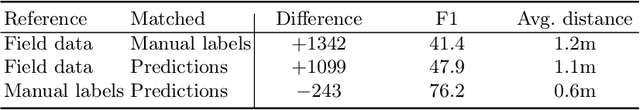

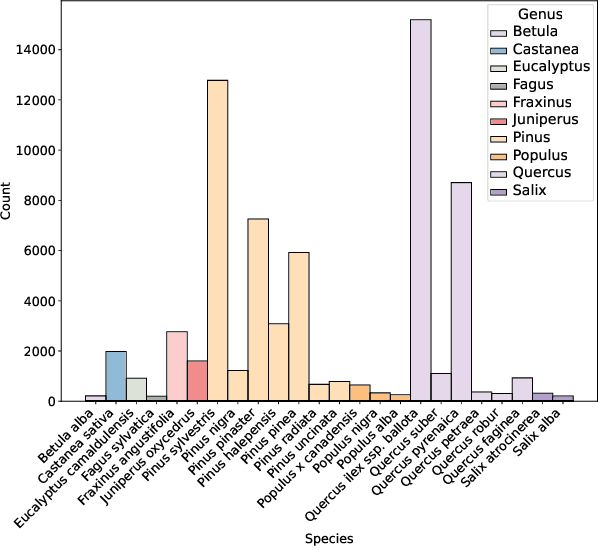

Abstract: Individual tree species labels are particularly hard to acquire due to the expert knowledge needed and the limitations of photointerpretation. Here, we present a methodology to automatically mine species labels from public forest inventory data, using available pretrained tree detection models. We identify tree instances in aerial imagery and match them with field data with close to zero human involvement. We conduct a series of experiments on the resulting dataset, and show a beneficial effect when adding noisy or even unlabeled data points, highlighting a strong potential for large-scale individual species mapping.

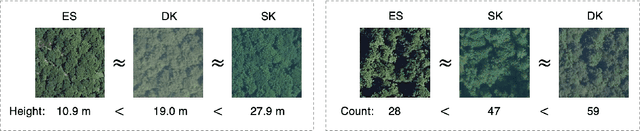

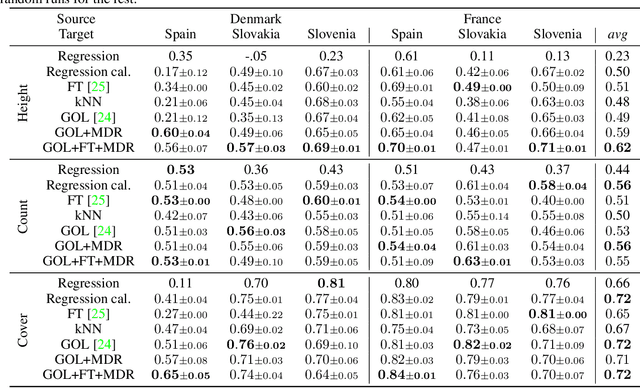

Get Your Embedding Space in Order: Domain-Adaptive Regression for Forest Monitoring

May 01, 2024

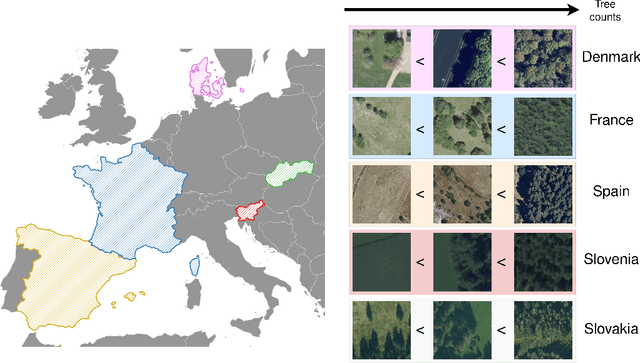

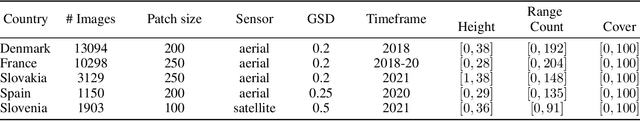

Abstract: Image-level regression is an important task in Earth observation, where visual domain and label shifts are a core challenge hampering generalization. However, cross-domain regression with remote sensing data remains understudied due to the absence of suitable datasets. We introduce a new dataset with aerial and satellite imagery in five countries with three forest-related regression tasks. To match real-world applicative interests, we compare methods through a restrictive setup where no prior on the target domain is available during training, and models are adapted with limited information during testing. Building on the assumption that ordered relationships generalize better, we propose manifold diffusion for regression as a strong baseline for transduction in low-data regimes. Our comparison highlights the comparative advantages of inductive and transductive methods in cross-domain regression.

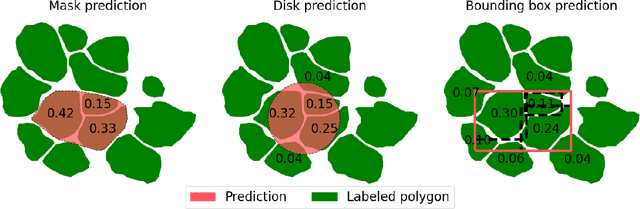

Benchmarking Individual Tree Mapping with Sub-meter Imagery

Nov 14, 2023

Abstract: There is a rising interest in mapping trees using satellite or aerial imagery, but there is no standardized evaluation protocol for comparing and enhancing methods. In dense canopy areas, the high variability of tree sizes and their spatial proximity make it arduous to define the quality of the predictions. Concurrently, object-centric approaches such as bounding box detection usually perform poorly on small and dense objects. It thus remains unclear what the ideal framework for individual tree mapping is, with regard to detection and segmentation approaches, convolutional neural networks and transformers. In this paper, we introduce an evaluation framework suited for individual tree mapping in any physical environment, with annotation costs and applicative goals in mind. We review and compare different approaches and deep architectures, and introduce a new method that we experimentally prove to be a good compromise between segmentation and detection.

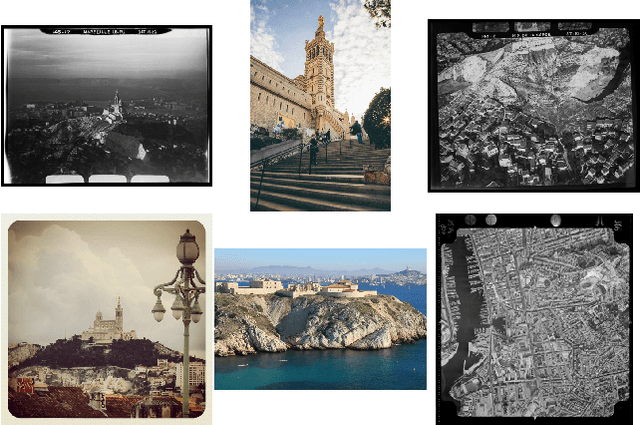

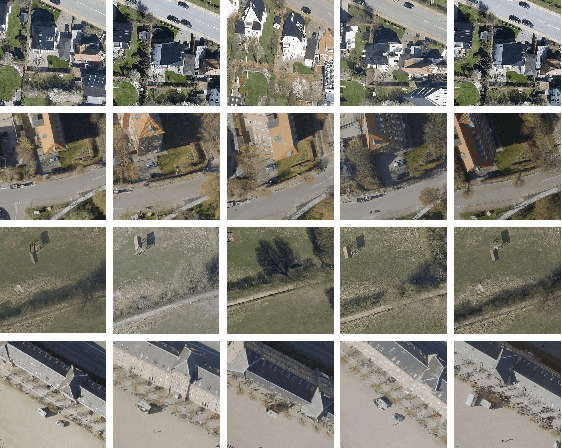

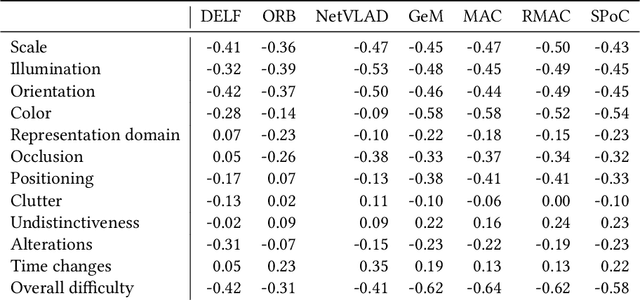

Connecting Images through Time and Sources: Introducing Low-data, Heterogeneous Instance Retrieval

Mar 19, 2021

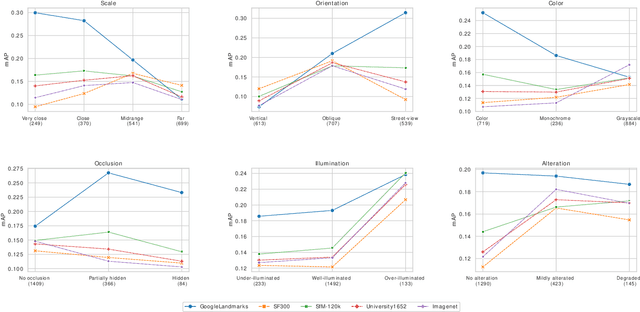

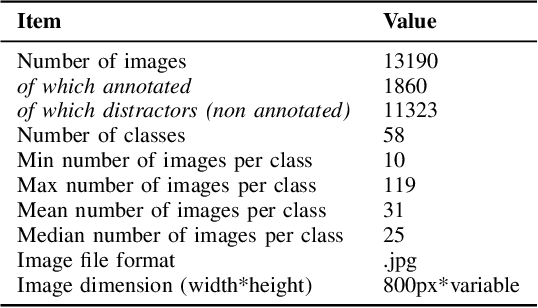

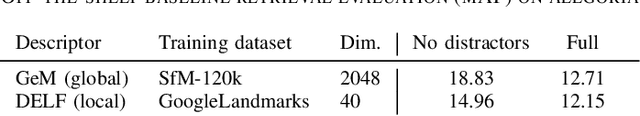

Abstract: With impressive results in applications relying on feature learning, deep learning has also blurred the line between algorithm and data. Pick a training dataset, pick a backbone network for feature extraction, and voilà; this usually works for a variety of use cases. But the underlying hypothesis that there exists a training dataset matching the use case is not always met. Moreover, the demand for interconnections regardless of the variations of the content calls for increasing generalization and robustness in features. An interesting application characterized by these problematics is the connection of historical and cultural databases of images. Through the seemingly simple task of instance retrieval, we propose to show that it is not trivial to pick features responding well to a panel of variations and semantic content. Introducing a new enhanced version of the Alegoria benchmark, we compare descriptors using the detailed annotations. We further give insights about the core problems in instance retrieval, testing four state-of-the-art additional techniques to increase performance.

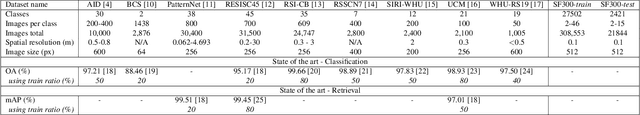

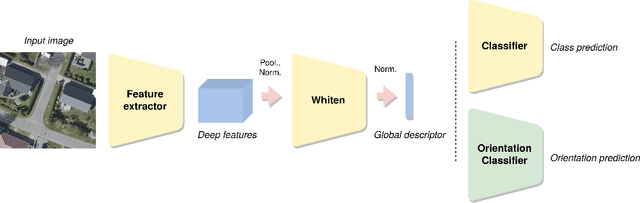

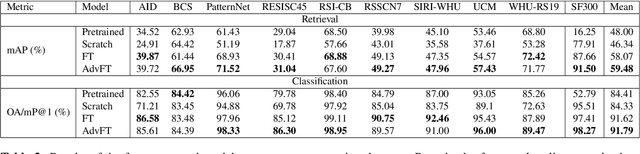

Unifying Remote Sensing Image Retrieval and Classification with Robust Fine-tuning

Feb 26, 2021

Abstract: Advances in high-resolution remote sensing image analysis are currently hampered by the difficulty of gathering enough annotated data for training deep learning methods, giving rise to a variety of small datasets and associated dataset-specific methods. Moreover, typical tasks such as classification and retrieval lack a systematic evaluation on standard benchmarks and training datasets, which makes it hard to identify durable and generalizable scientific contributions. We aim to unify remote sensing image retrieval and classification with a new large-scale training and testing dataset, SF300, including both vertical and oblique aerial images and made available to the research community, and an associated fine-tuning method. We additionally propose a new adversarial fine-tuning method for global descriptors. We show that our framework systematically achieves a boost of retrieval and classification performance on nine different datasets compared to an ImageNet pretrained baseline, with currently no other method to compare to.

Challenging deep image descriptors for retrieval in heterogeneous iconographic collections

Sep 19, 2019

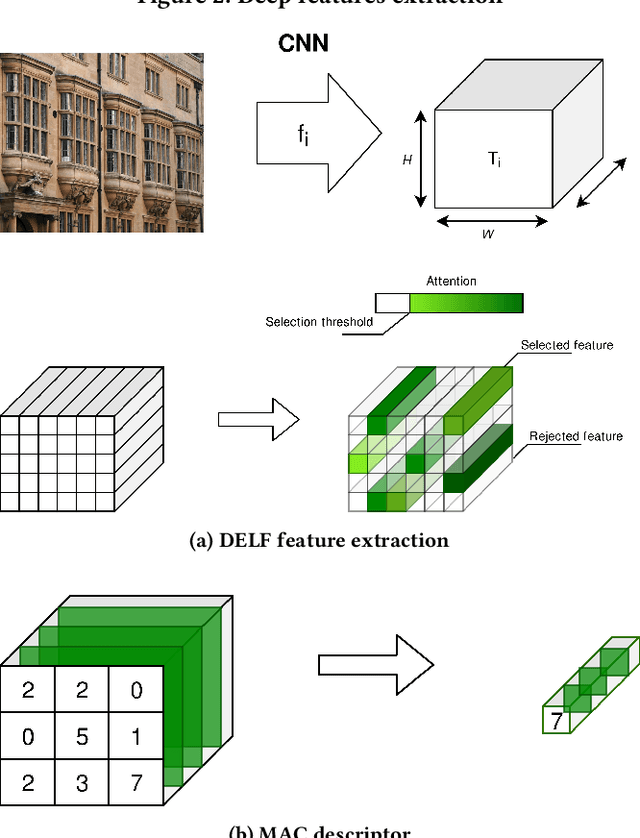

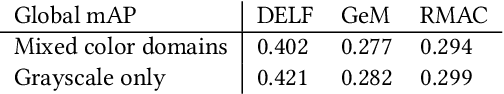

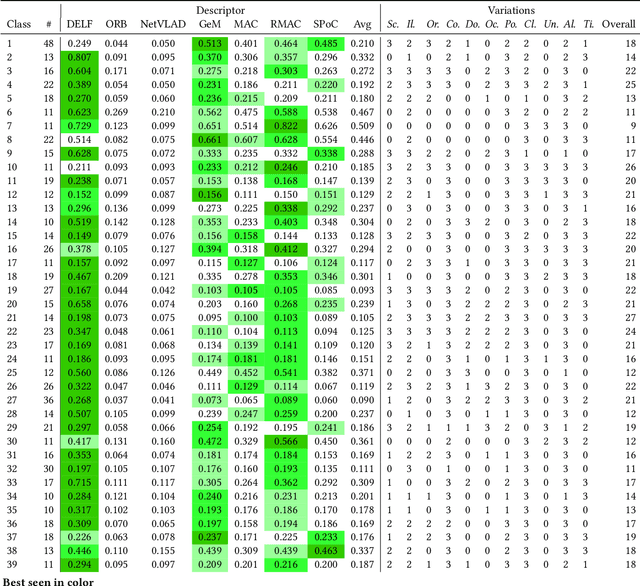

Abstract: This article proposes to study the behavior of recent and efficient state-of-the-art deep-learning based image descriptors for content-based image retrieval, facing a panel of complex variations appearing in heterogeneous image datasets, in particular in cultural collections that may involve multi-source, multi-date and multi-view
