Dilshan Godaliyadda

MetaTele: Compact Refractive Metasurface Computational Telephoto Camera

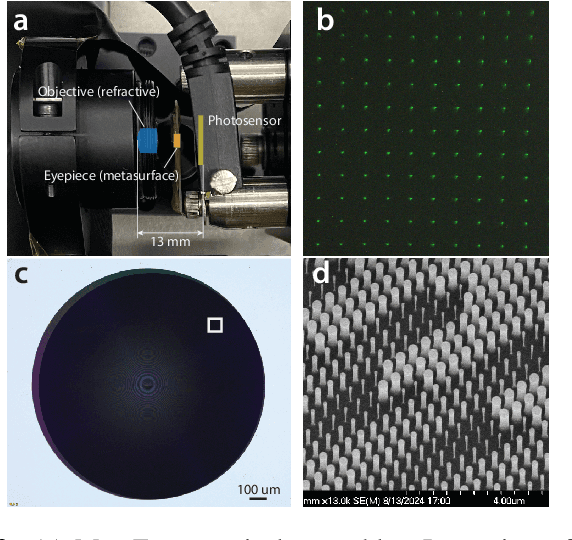

Apr 08, 2026

Abstract: Smartphone cameras face fundamental form-factor constraints that limit their optical magnification, primarily due to the difficulty of reducing a lens assembly's telephoto ratio, the ratio between total track length (TTL) and effective focal length (EFL). Currently, conventional refractive optics struggle to achieve a telephoto ratio below 0.5 without requiring multiple bulky elements to correct optical aberrations. In this paper, we introduce MetaTele, a novel optics-algorithm co-design that breaks this bottleneck. MetaTele explicitly decouples the acquisition of scene structure and color information. First, it utilizes a compact refractive-metasurface optical assembly to capture a fine-detail structure image under a narrow wavelength band, inherently avoiding severe chromatic aberrations. Second, it captures a broadband color cue using the same optics; although this cue is heavily corrupted by chromatic aberrations, it retains sufficient spectral information to guide post-processing. We then employ a custom one-step diffusion model to computationally fuse these two raw measurements, successfully colorizing the structure image while correcting for system aberrations. We demonstrate a MetaTele prototype, achieving an unprecedented telephoto ratio of 0.44 with a TTL of just 13 mm for RGB imaging, paving the way for DSLR-level telephoto capabilities within smartphone form factors.
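As a rough illustration of the telephoto ratio defined in the abstract above (TTL divided by EFL), the following sketch back-computes the effective focal length implied by the reported figures. The function name is hypothetical, and because the abstract gives rounded values, the result is only approximate.

```python
def effective_focal_length_mm(ttl_mm: float, telephoto_ratio: float) -> float:
    """Return the EFL implied by telephoto ratio = TTL / EFL.

    Hypothetical helper for illustration; not taken from the paper.
    """
    if telephoto_ratio <= 0:
        raise ValueError("telephoto ratio must be positive")
    return ttl_mm / telephoto_ratio


# Figures quoted in the abstract: TTL = 13 mm, telephoto ratio = 0.44.
efl = effective_focal_length_mm(13.0, 0.44)
print(f"Implied EFL: {efl:.1f} mm")  # Implied EFL: 29.5 mm
```

Under these numbers the lens would behave like a roughly 29.5 mm focal-length system packed into a 13 mm track, which is the sense in which a ratio below 0.5 matters for thin devices.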

F2IDiff: Real-world Image Super-resolution using Feature to Image Diffusion Foundation Model

Dec 30, 2025

Abstract: With the advent of Generative AI, Single Image Super-Resolution (SISR) quality has seen substantial improvement, as the strong priors learned by Text-2-Image Diffusion (T2IDiff) Foundation Models (FM) can bridge the gap between High-Resolution (HR) and Low-Resolution (LR) images. However, flagship smartphone cameras have been slow to adopt generative models because strong generation can lead to undesirable hallucinations. For substantially degraded LR images, as seen in academia, strong generation is required and hallucinations are more tolerable because of the wide gap between LR and HR images. In contrast, in consumer photography, the LR image has substantially higher fidelity, requiring only minimal hallucination-free generation. We hypothesize that generation in SISR is controlled by the stringency and richness of the FM's conditioning feature. First, text features are high-level features, which often cannot describe subtle textures in an image. Additionally, smartphone LR images are at least 12 MP, whereas SISR networks built on T2IDiff FMs are designed to perform inference on much smaller images (<1 MP). As a result, SISR inference has to be performed on small patches, which often cannot be accurately described by text features. To address these shortcomings, we introduce an SISR network built on an FM with lower-level feature conditioning, specifically DINOv2 features, which we call a Feature-to-Image Diffusion (F2IDiff) Foundation Model (FM). Lower-level features provide stricter conditioning while being rich descriptors of even small patches.
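To give a sense of scale for the patch-wise inference described above, the sketch below counts how many patches are needed to cover a large image. The 4000 x 3000 image size (about 12 MP), the 512-pixel patch size, and the function name are all assumptions chosen for illustration; the abstract does not state the actual tiling scheme.

```python
import math


def count_patches(width: int, height: int, patch: int, overlap: int = 0) -> int:
    """Count square patches needed to cover a width x height image.

    Adjacent patches share `overlap` pixels. Hypothetical helper for
    illustration; not the tiling used in the paper.
    """
    stride = patch - overlap
    if stride <= 0:
        raise ValueError("overlap must be smaller than the patch size")
    # Patches needed along each axis so the last patch reaches the border.
    nx = max(1, math.ceil((width - overlap) / stride))
    ny = max(1, math.ceil((height - overlap) / stride))
    return nx * ny


# Assumed 12 MP frame split into non-overlapping 512 x 512 patches.
print(count_patches(4000, 3000, 512))  # 48
```

Each such patch covers only a small fraction of the scene, which is consistent with the abstract's point that a text description rarely captures what a single patch contains.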

Diffusion Algorithm for Metalens Optical Aberration Correction

Nov 16, 2025

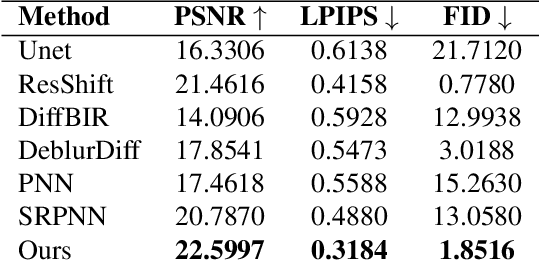

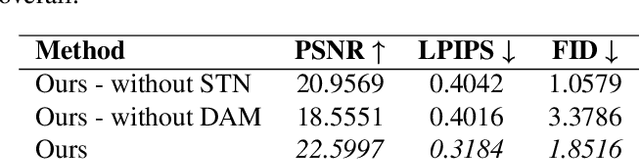

Abstract: Metalenses offer a path toward creating ultra-thin optical systems, but they inherently suffer from severe, spatially varying optical aberrations, especially chromatic aberration, which makes image reconstruction a significant challenge. This paper presents a novel algorithmic solution to this problem, designed to reconstruct a sharp, full-color image from two inputs: a sharp, bandpass-filtered grayscale "structure image" and a heavily distorted "color cue" image, both captured by the metalens system. Our method utilizes a dual-branch diffusion model, built upon a pre-trained Stable Diffusion XL framework, to fuse information from the two inputs. We demonstrate through quantitative and qualitative comparisons that our approach significantly outperforms existing deblurring and pansharpening methods, effectively restoring high-frequency details while accurately colorizing the image.
