Dana Rezazadegan

Linked Adapters: Linking Past and Future to Present for Effective Continual Learning

Dec 14, 2024

Abstract: Continual learning allows a system to learn and adapt to new tasks while retaining the knowledge acquired from previous tasks. However, deep learning models suffer from catastrophic forgetting of knowledge learned from earlier tasks while learning a new task. Moreover, retraining large models such as transformers from scratch for every new task is costly. An effective approach to continual learning is to use a large pre-trained model with task-specific adapters to adapt to new tasks. Although this approach can mitigate catastrophic forgetting, it fails to transfer knowledge across tasks because the adapters for each task are learned separately. To address this, we propose a novel approach, Linked Adapters, that allows knowledge transfer to other task-specific adapters through a weighted attention mechanism. Linked Adapters use a multi-layer perceptron (MLP) to model the attention weights, which overcomes the challenge of backward knowledge transfer in continual learning in addition to modeling forward knowledge transfer. During inference, the proposed approach leverages knowledge transfer through MLP-based attention weights across all the lateral task adapters. Through numerous experiments on diverse image classification datasets, we demonstrate improved performance on continual learning tasks using Linked Adapters.
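
The attention-based linking of task adapters described above can be illustrated with a minimal NumPy sketch, assuming one bottleneck adapter per task and a small MLP that maps the layer input to a softmax distribution over all task adapters. The function names, shapes, the tanh hidden layer and the residual connection are hypothetical choices for illustration, not the authors' implementation; training, including how earlier tasks' attention weights are updated for backward transfer, is omitted.

```python
import numpy as np

def softmax(z):
    # Numerically stable softmax over a 1-D array of logits
    e = np.exp(z - z.max())
    return e / e.sum()

def adapter(x, w_down, w_up):
    # Hypothetical bottleneck adapter: down-project, ReLU, up-project
    return np.maximum(x @ w_down, 0.0) @ w_up

def mlp_attention(x, w1, w2):
    # Hypothetical two-layer MLP mapping the input to one weight per task adapter
    return softmax(np.tanh(x @ w1) @ w2)

def linked_adapter_output(x, adapters, w1, w2):
    # Attention-weighted sum of all task adapters' outputs, added residually to x
    weights = mlp_attention(x, w1, w2)                      # shape (num_tasks,)
    outputs = np.stack([adapter(x, *a) for a in adapters])  # shape (num_tasks, d)
    return x + weights @ outputs, weights

# Usage with random parameters: 3 task adapters on 8-dimensional features
rng = np.random.default_rng(0)
d, r, num_tasks = 8, 4, 3
adapters = [(rng.normal(size=(d, r)), rng.normal(size=(r, d))) for _ in range(num_tasks)]
w1 = rng.normal(size=(d, 16))
w2 = rng.normal(size=(16, num_tasks))
x = rng.normal(size=d)
y, weights = linked_adapter_output(x, adapters, w1, w2)
print(y.shape, weights.round(3))
```

Because the weights come from a softmax, every adapter contributes a non-negative share and the shares sum to one, so a new task's output can draw on earlier tasks' adapters instead of relying only on its own.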

A Review of Uncertainty Quantification in Deep Learning: Techniques, Applications and Challenges

Nov 17, 2020

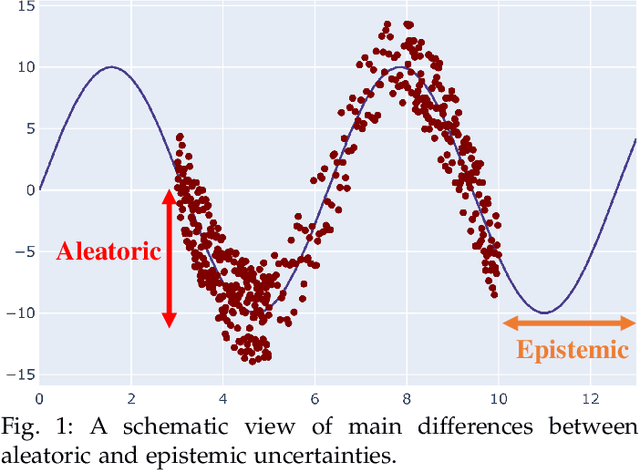

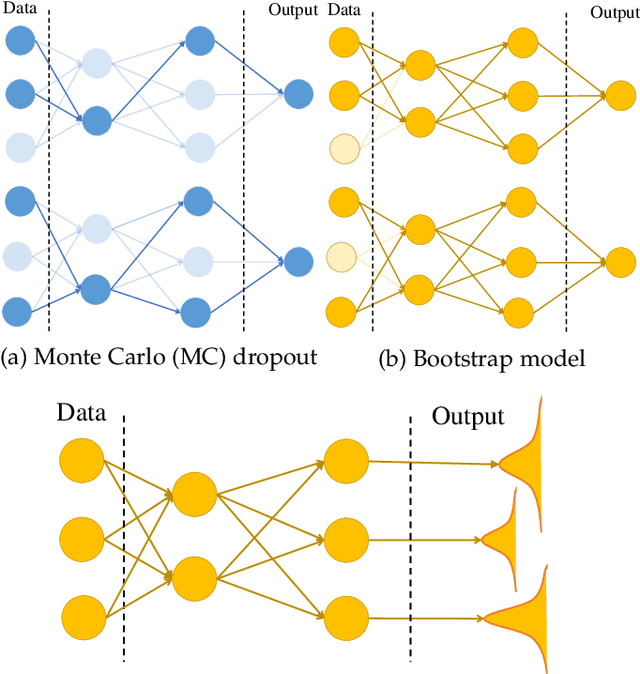

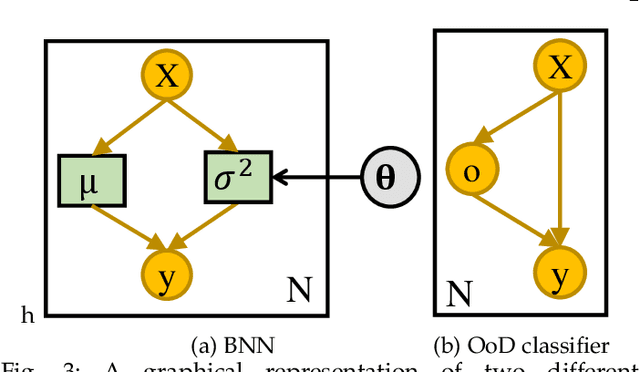

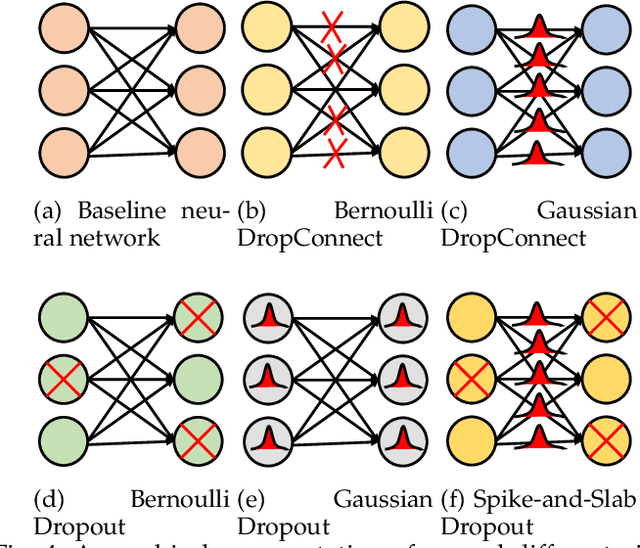

Abstract: Uncertainty quantification (UQ) plays a pivotal role in the reduction of uncertainties during both optimization and decision-making processes. It can be applied to a variety of real-world problems in science and engineering. Bayesian approximation and ensemble learning techniques are the two most widely used UQ methods in the literature. In this regard, researchers have proposed different UQ methods and examined their performance in a variety of applications such as computer vision (e.g., self-driving cars and object detection), image processing (e.g., image restoration), medical image analysis (e.g., medical image classification and segmentation), natural language processing (e.g., text classification, social media texts and recidivism risk-scoring), bioinformatics, etc. This study reviews recent advances in UQ methods used in deep learning. We also investigate the application of these methods in reinforcement learning (RL). Then, we outline a few important applications of UQ methods. Finally, we briefly highlight the fundamental research challenges faced by UQ methods and discuss future research directions in this field.
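
Ensemble learning, one of the two UQ families named above, can be sketched in a few lines: train several models on resampled data and read the uncertainty from how much their predictions disagree. This minimal NumPy sketch assumes cheap polynomial regressors as stand-ins for neural networks and bootstrap resampling as the source of diversity; deep ensembles in the literature usually train full networks, often from different random initializations. The function names and the toy data are hypothetical.

```python
import numpy as np

def fit_bootstrap_ensemble(x, y, n_members=10, degree=3, seed=0):
    # Each member is a polynomial fit to a bootstrap resample of the training data
    rng = np.random.default_rng(seed)
    members = []
    for _ in range(n_members):
        idx = rng.integers(0, len(x), size=len(x))  # sample indices with replacement
        members.append(np.polyfit(x[idx], y[idx], degree))
    return members

def predict_with_uncertainty(members, x_new):
    # Ensemble mean is the prediction; spread across members is the uncertainty estimate
    preds = np.stack([np.polyval(c, x_new) for c in members])  # (n_members, n_points)
    return preds.mean(axis=0), preds.std(axis=0)

# Usage: train on x in [-1, 1], then query inside (0.0) and far outside (3.0) that range
rng = np.random.default_rng(1)
x = rng.uniform(-1, 1, size=50)
y = np.sin(3 * x) + rng.normal(scale=0.1, size=50)
members = fit_bootstrap_ensemble(x, y)
mean, std = predict_with_uncertainty(members, np.array([0.0, 3.0]))
print(mean.round(2), std.round(2))
```

The spread at 3.0, well outside the training range, is typically much larger than at 0.0, which is the behaviour an ensemble-based UQ method relies on to flag inputs where its predictions should not be trusted.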

Automatic Speech Summarisation: A Scoping Review

Aug 27, 2020

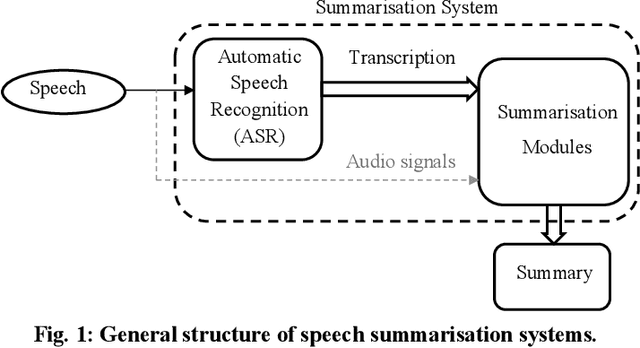

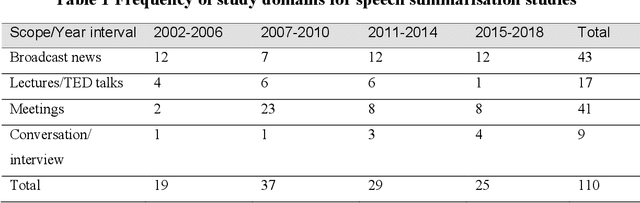

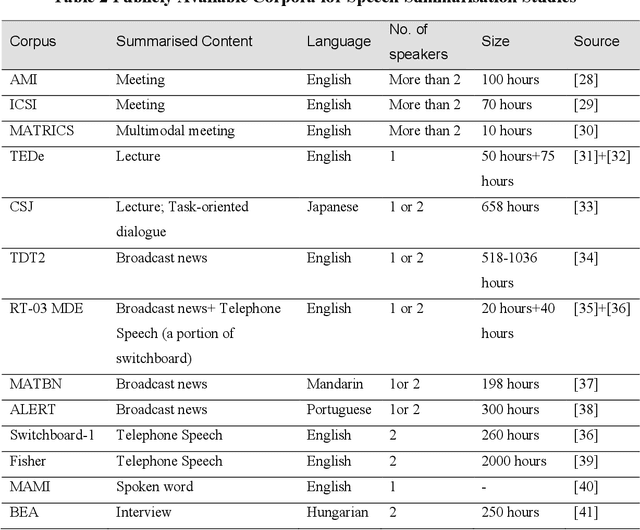

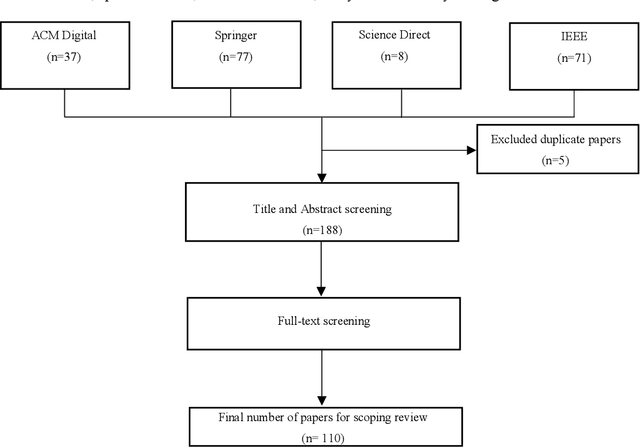

Abstract: Speech summarisation techniques take human speech as input and output an abridged version as text or speech. Speech summarisation has applications in many domains, from information technology to health care, for example improving speech archives or reducing the burden of clinical documentation. This scoping review maps the speech summarisation literature, with no restrictions on time frame, language summarised, research method, or paper type. We reviewed a total of 110 papers out of a set of 153 found through a literature search and extracted the speech features, methods, scope, and training corpora used. Most studies employ one of four speech summarisation architectures: (1) Sentence extraction and compaction; (2) Feature extraction and classification or rank-based sentence selection; (3) Sentence compression and compression summarisation; and (4) Language modelling. We also discuss the strengths and weaknesses of these different methods and speech features. Overall, supervised methods (e.g. Hidden Markov support vector machines, Ranking support vector machines, Conditional random fields) performed better than unsupervised methods. Because supervised methods require manually annotated training data, which can be costly to produce, there was more interest in unsupervised methods. Recent research into unsupervised methods focusses on extending language modelling, for example by combining unigram modelling with deep neural networks. Protocol registration: The protocol for this scoping review is registered at https://osf.io.
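
Architecture (2) above, rank-based sentence selection, can be illustrated together with the unigram statistics mentioned for language-modelling approaches. This minimal sketch assumes the speech has already been transcribed into text sentences and scores each sentence by the average unigram probability of its non-stopword words; it ignores acoustic and prosodic speech features and is a generic illustration, not a method from any specific reviewed paper. The function names and the toy transcript are hypothetical.

```python
import re
from collections import Counter

def tokenize(text):
    # Lowercase word tokens; a real system would start from ASR transcripts
    return re.findall(r"[a-z']+", text.lower())

def extractive_summary(sentences, k=2, stopwords=frozenset()):
    # Score each sentence by the mean unigram probability of its non-stopword tokens
    tokens = [[t for t in tokenize(s) if t not in stopwords] for s in sentences]
    counts = Counter(t for sent in tokens for t in sent)
    total = sum(counts.values())

    def score(i):
        toks = tokens[i]
        if not toks:
            return 0.0
        return sum(counts[t] / total for t in toks) / len(toks)

    # Keep the k highest-scoring sentences, restored to their original order
    top = sorted(range(len(sentences)), key=score, reverse=True)[:k]
    return [sentences[i] for i in sorted(top)]

transcript = [
    "the patient reports chest pain",
    "chest pain started yesterday",
    "we talked about the weather",
    "pain medication was prescribed for the chest pain",
]
summary = extractive_summary(transcript, k=2, stopwords={"the", "was", "for", "we", "about"})
print(summary)
```

Sentences built from frequent content words ("chest", "pain") rank highest, while the off-topic remark about the weather is dropped; supervised approaches such as those named above would instead learn the ranking from annotated summaries.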

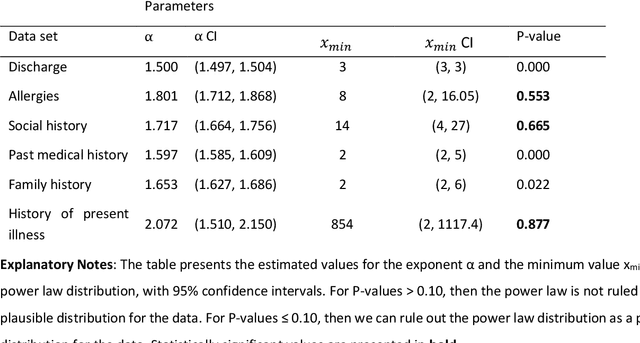

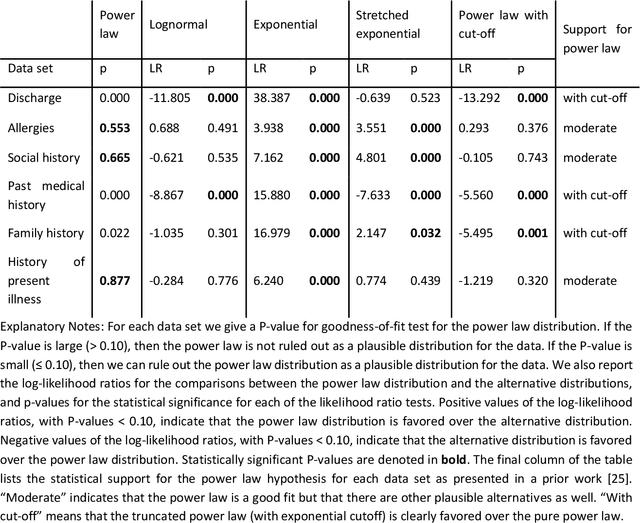

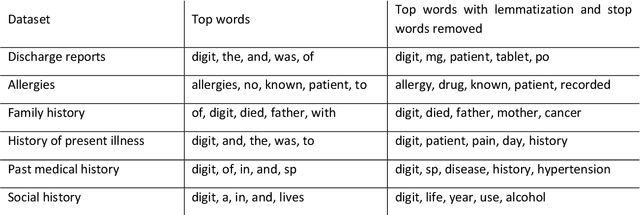

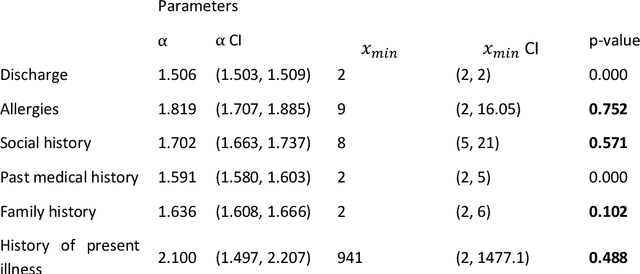

Empirical Analysis of Zipf's Law, Power Law, and Lognormal Distributions in Medical Discharge Reports

Mar 30, 2020

Abstract: Bayesian modelling and statistical text analysis rely on informed probability priors to encourage good solutions. This paper empirically analyses whether text in medical discharge reports follows Zipf's law, a commonly assumed statistical property of language in which word frequency follows a discrete power law distribution. We examined 20,000 medical discharge reports from the MIMIC-III dataset. Methods included splitting the discharge reports into tokens, counting token frequency, fitting power law distributions to the data, and testing whether alternative distributions (lognormal, exponential, stretched exponential, and truncated power law) provided superior fits to the data. Results show that discharge reports are best fit by the truncated power law and lognormal distributions. Our findings suggest that Bayesian modelling and statistical text analysis of discharge report text would benefit from using truncated power law and lognormal probability priors.
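
The first steps of the method above (tokenising reports, counting token frequencies and fitting a power law) can be sketched in plain Python. This sketch assumes a simple lowercase alphabetic tokeniser, fixes x_min at 1, and uses the closed-form discrete approximation to the maximum-likelihood exponent from Clauset, Shalizi and Newman (2009); it is not necessarily the tooling used in the paper. It does not perform the comparison against lognormal, exponential, stretched exponential or truncated power law fits, which would require fitting each alternative and comparing likelihoods. The function names are hypothetical and the two toy reports are invented, not MIMIC-III data.

```python
import math
import re
from collections import Counter

def token_frequencies(docs):
    # Split each report into lowercase alphabetic tokens and count occurrences
    counts = Counter()
    for doc in docs:
        counts.update(re.findall(r"[a-z]+", doc.lower()))
    return counts

def power_law_alpha(values, x_min=1):
    # Approximate discrete power-law MLE (Clauset, Shalizi and Newman, 2009):
    # alpha ~= 1 + n / sum(ln(x_i / (x_min - 0.5))) over values x_i >= x_min
    tail = [v for v in values if v >= x_min]
    return 1.0 + len(tail) / sum(math.log(v / (x_min - 0.5)) for v in tail)

reports = [
    "Patient admitted with chest pain.",
    "Chest pain resolved; patient discharged home.",
]
counts = token_frequencies(reports)
alpha = power_law_alpha(list(counts.values()))
print(counts.most_common(3), round(alpha, 3))
```

On a real corpus, x_min is usually chosen to minimise the Kolmogorov-Smirnov distance between the data and the fitted tail rather than fixed, and a toy input this small says nothing about whether Zipf's law holds.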
