Chunxia Qin

TDATR: Improving End-to-End Table Recognition via Table Detail-Aware Learning and Cell-Level Visual Alignment

Mar 24, 2026

Abstract: Tables are pervasive in diverse documents, making table recognition (TR) a fundamental task in document analysis. Existing modular TR pipelines model table structure and content separately, leading to suboptimal integration and complex workflows. End-to-end approaches rely heavily on large-scale TR data and struggle in data-constrained scenarios. To address these issues, we propose TDATR (Table Detail-Aware Table Recognition), which improves end-to-end TR through table detail-aware learning and cell-level visual alignment. TDATR adopts a "perceive-then-fuse" strategy. The model first performs table detail-aware learning to jointly perceive table structure and content through multiple structure understanding and content recognition tasks designed under a language modeling paradigm. These tasks can naturally leverage document data from diverse scenarios to enhance model robustness. The model then integrates the implicit table details to generate structured HTML outputs, enabling more efficient TR modeling when trained with limited data. Furthermore, we design a structure-guided cell localization module integrated into the end-to-end TR framework, which efficiently locates cells and strengthens vision-language alignment, enhancing the interpretability and accuracy of TR. We achieve state-of-the-art or highly competitive performance on seven benchmarks without dataset-specific fine-tuning.

SEMv3: A Fast and Robust Approach to Table Separation Line Detection

May 20, 2024

Abstract: Table structure recognition (TSR) aims to parse the inherent structure of a table from its input image. The "split-and-merge" paradigm is a pivotal approach to parsing table structure, in which table separation line detection is crucial. However, wireless and deformed tables make this detection challenging. In this paper, we adhere to the "split-and-merge" paradigm and propose SEMv3 (SEM: Split, Embed and Merge), a method that is both fast and robust for detecting table separation lines. During the split stage, we introduce a Keypoint Offset Regression (KOR) module, which effectively detects table separation lines by directly regressing the offset of each line relative to its keypoint proposals. Moreover, in the merge stage, we define a series of merge actions to efficiently describe the table structure based on table grids. Extensive ablation studies demonstrate that our proposed KOR module can detect table separation lines quickly and accurately. Furthermore, on public datasets (e.g., WTW, ICDAR-2019 cTDaR Historical and iFLYTAB), SEMv3 achieves state-of-the-art (SOTA) performance. The code is available at https://github.com/Chunchunwumu/SEMv3.

A weakly supervised registration-based framework for prostate segmentation via the combination of statistical shape model and CNN

Jul 30, 2020

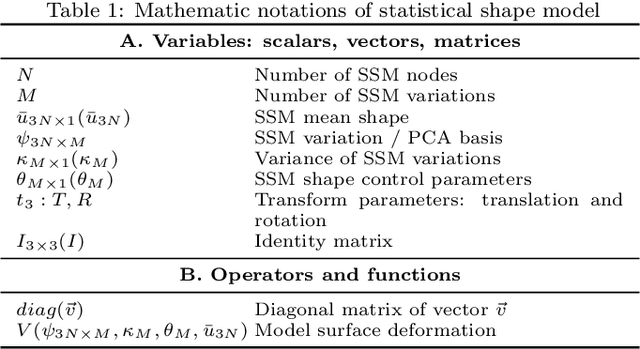

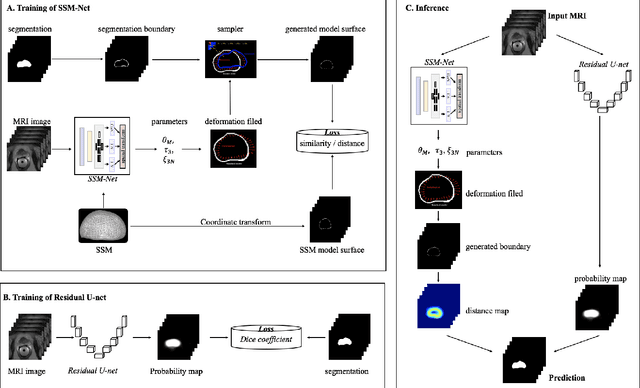

Abstract: Precise target determination is an essential procedure in prostate interventions such as prostate biopsy, lesion detection and targeted therapy. However, prostate delineation may be difficult in some cases due to tissue ambiguity or partially missing anatomical boundaries. To address this problem, we proposed a weakly supervised registration-based framework for precise prostate segmentation that combines a convolutional neural network (CNN) with a statistical shape model (SSM). To obtain the prostate region, an inception-based neural network (SSM-Net) was first used to predict the model transform, shape control parameters and a fine-tuning vector for generating the prostate boundary. From the inferred boundary, a normalized distance map was calculated. Then, a residual U-net (ResU-Net) was employed to predict a probability label map from the input images. Finally, the average of the distance map and the probability map was regarded as the prostate segmentation. Two public datasets, PROMISE12 and NCI-ISBI 2013, were used for the model computation and for network training and testing. The validation results demonstrate that the segmentation framework using an SSM with 9500 nodes achieved the best performance, with a Dice of 0.904 and an average surface distance of 1.88 mm. In addition, we verified the impact of model elasticity augmentation and of the fine-tuning item on the network's segmentation capability. Both factors improved the delineation accuracy, with Dice increased by 10% and 7% respectively. In conclusion, via the combination of two weakly supervised neural networks, our segmentation method might be an effective and robust approach for prostate segmentation.
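The final fusion step and the Dice metric mentioned in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the function names `fuse_maps` and `dice`, the toy 2x2 maps, and in particular the 0.5 threshold used to turn the averaged map into a binary mask are assumptions made for illustration, since the abstract does not say how the average is binarized.

```python
def fuse_maps(distance_map, probability_map, threshold=0.5):
    """Average two same-shaped maps with values in [0, 1], then binarize.

    The threshold is an assumption; the abstract only states that the
    average of the two maps is taken as the segmentation.
    """
    return [
        [(d + p) / 2.0 >= threshold for d, p in zip(d_row, p_row)]
        for d_row, p_row in zip(distance_map, probability_map)
    ]


def dice(pred, target):
    """Dice coefficient 2|A ∩ B| / (|A| + |B|) for two binary masks."""
    pred_flat = [bool(v) for row in pred for v in row]
    target_flat = [bool(v) for row in target for v in row]
    intersection = sum(p and t for p, t in zip(pred_flat, target_flat))
    total = sum(pred_flat) + sum(target_flat)
    # Two empty masks agree perfectly by convention.
    return 1.0 if total == 0 else 2.0 * intersection / total


# Toy inputs (hypothetical values, not data from the paper).
distance_map = [[0.9, 0.6], [0.2, 0.1]]      # stand-in for the SSM-Net distance map
probability_map = [[0.8, 0.3], [0.7, 0.0]]   # stand-in for the ResU-Net probability map
ground_truth = [[1, 1], [0, 0]]

mask = fuse_maps(distance_map, probability_map)
score = dice(mask, ground_truth)
```

In this toy case only the top-left pixel survives the averaging, so the mask overlaps the ground truth in one of its two foreground pixels and the Dice score is 2/3.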
