Chongsheng Zhang

Min-$k$ Sampling: Decoupling Truncation from Temperature Scaling via Relative Logit Dynamics

Apr 13, 2026

Abstract:The quality of text generated by large language models depends critically on the decoding sampling strategy. While mainstream methods such as Top-$k$, Top-$p$, and Min-$p$ achieve a balance between diversity and accuracy through probability-space truncation, they share an inherent limitation: extreme sensitivity to the temperature parameter. Recent logit-space approaches like Top-$nσ$ achieve temperature invariance but rely on global statistics that are susceptible to long-tail noise, failing to capture fine-grained confidence structures among top candidates. We propose Min-$k$ Sampling, a novel dynamic truncation strategy that analyzes the local shape of the sorted logit distribution to identify "semantic cliffs": sharp transitions from high-confidence core tokens to uncertain long-tail tokens. By computing a position-weighted relative decay rate, Min-$k$ dynamically determines truncation boundaries at each generation step. We formally prove that Min-$k$ achieves strict temperature invariance and empirically demonstrate its low sensitivity to hyperparameter choices. Experiments on multiple reasoning benchmarks, creative writing tasks, and human evaluation show that Min-$k$ consistently improves text quality, maintaining robust performance even under extreme temperature settings where probability-based methods collapse. We make our code, models, and analysis tools publicly available.
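
The contrast between probability-space and logit-space truncation can be illustrated with a small sketch. This is not the Min-$k$ algorithm: the abstract does not give its formula, so `relative_gap_count` below is a hypothetical stand-in that cuts at the largest gap between adjacent sorted logits, normalized by the logit range. It only shows why a rule built on ratios of logit differences does not change with temperature, while a Top-$p$ cutoff does. The function names and the example logits are assumptions made for illustration.

```python
import math


def softmax(logits, temperature):
    """Temperature-scaled softmax, computed stably."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_count(logits, temperature, p=0.9):
    """Number of tokens kept by Top-p (nucleus) truncation."""
    probs = sorted(softmax(logits, temperature), reverse=True)
    cumulative = 0.0
    for kept, prob in enumerate(probs, start=1):
        cumulative += prob
        if cumulative >= p:
            return kept
    return len(probs)


def relative_gap_count(logits, temperature):
    """Hypothetical logit-space rule (NOT the paper's Min-k):
    keep tokens up to the largest adjacent gap in the sorted logits,
    with each gap divided by the full logit range. Dividing every
    logit by the temperature rescales gaps and range by the same
    factor, so the ratios are unchanged."""
    ordered = sorted((x / temperature for x in logits), reverse=True)
    span = ordered[0] - ordered[-1]
    if span == 0:
        return len(ordered)
    gaps = [(ordered[i] - ordered[i + 1]) / span for i in range(len(ordered) - 1)]
    cut = max(range(len(gaps)), key=gaps.__getitem__)
    return cut + 1


# Made-up logits with a visible drop after the third token.
example_logits = [10.0, 9.5, 9.2, 4.0, 3.5, 3.0, 2.0, 1.0]

for t in (0.5, 1.0, 5.0):
    print(t, top_p_count(example_logits, t), relative_gap_count(example_logits, t))
```

On these logits the Top-$p$ count grows as the temperature rises, whereas the gap-based count stays the same at every temperature.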

Multi-Modal Character Localization and Extraction for Chinese Text Recognition

Mar 14, 2026

Abstract:Scene text recognition (STR) methods have demonstrated excellent capability on English text images. However, the complex inner structures of Chinese characters and the extensive character categories make recognizing Chinese text in images challenging. Recent studies have shown that methods designed for English text recognition encounter an accuracy bottleneck when recognizing Chinese text images. This raises the question: Is it appropriate to apply a model developed for English to the Chinese STR task? To explore this issue, we propose a novel method named LER, which explicitly decouples each character and independently recognizes characters while taking into account the complex inner structures of Chinese. LER consists of three modules: Localization, Extraction, and Recognition. First, the localization module utilizes multimodal information to determine each character's position precisely. Then, the extraction module dissociates all characters in parallel. Finally, the recognition module considers the unique inner structures of Chinese to provide the text prediction results. Extensive experiments conducted on large-scale Chinese benchmarks indicate that our method significantly outperforms existing methods. Furthermore, extensive experiments conducted on six English benchmarks and the Union14M benchmark show impressive results in English text recognition by LER. Code is available at https://github.com/Pandarenlql/LER.

Scribe Verification in Chinese manuscripts using Siamese, Triplet, and Vision Transformer Neural Networks

Mar 14, 2026

Abstract:The paper examines deep learning models for scribe verification in Chinese manuscripts, that is, automatically determining whether two manuscript fragments were written by the same scribe using deep metric learning methods. Two datasets were used: the Tsinghua Bamboo Slips Dataset and a selected subset of the Multi-Attribute Chinese Calligraphy Dataset, focusing on the calligraphers with a large number of samples. Siamese and Triplet neural network architectures are implemented, including convolutional and Transformer-based models. The experimental results show that the MobileNetV3+ Custom Siamese model trained with contrastive loss achieves either the best or the second-best overall accuracy and area under the Receiver Operating Characteristic Curve on both datasets.

GUARD: Glocal Uncertainty-Aware Robust Decoding for Effective and Efficient Open-Ended Text Generation

Aug 28, 2025

Abstract:Open-ended text generation faces a critical challenge: balancing coherence with diversity in LLM outputs. While contrastive search-based decoding strategies have emerged to address this trade-off, their practical utility is often limited by hyperparameter dependence and high computational costs. We introduce GUARD, a self-adaptive decoding method that effectively balances these competing objectives through a novel "Glocal" uncertainty-driven framework. GUARD combines global entropy estimates with local entropy deviations to integrate both long-term and short-term uncertainty signals. We demonstrate that our proposed global entropy formulation effectively mitigates abrupt variations in uncertainty, such as sudden overconfidence or high entropy spikes, and provides theoretical guarantees of unbiasedness and consistency. To reduce computational overhead, we incorporate a simple yet effective token-count-based penalty into GUARD. Experimental results demonstrate that GUARD achieves a good balance between text diversity and coherence, while exhibiting substantial improvements in generation speed. In a more nuanced comparison study across different dimensions of text quality, both human and LLM evaluators validated its remarkable performance. Our code is available at https://github.com/YecanLee/GUARD.

OBD-Finder: Explainable Coarse-to-Fine Text-Centric Oracle Bone Duplicates Discovery

May 04, 2025

Abstract:Oracle Bone Inscription (OBI) is the earliest systematic writing system in China, and the identification of Oracle Bone (OB) duplicates is a fundamental issue in OBI research. In this work, we design a progressive OB duplicate discovery framework that combines unsupervised low-level keypoint matching with high-level text-centric content-based matching to refine and rank the candidate OB duplicates with semantic awareness and interpretability. We compare our approach with state-of-the-art content-based image retrieval and image matching methods, showing that our approach yields comparable recall performance and the highest simplified mean reciprocal rank scores for both Top-5 and Top-15 retrieval results, with significantly improved computational efficiency. We have discovered over 60 pairs of new OB duplicates in real-world deployment, which were missed by OBI researchers for decades. The models, video illustration and demonstration of this work are available at: https://github.com/cszhangLMU/OBD-Finder/.

3D Human Pose Estimation via Spatial Graph Order Attention and Temporal Body Aware Transformer

May 02, 2025

Abstract:Nowadays, Transformers and Graph Convolutional Networks (GCNs) are the prevailing techniques for 3D human pose estimation. However, Transformer-based methods either ignore the spatial neighborhood relationships between the joints when used for skeleton representations or disregard the local temporal patterns of joint movements in skeleton sequence modeling, while GCN-based methods often neglect the need for pose-specific representations. To address these problems, we propose a new method that exploits the graph modeling capability of GCN to represent each skeleton with multiple graphs of different orders, incorporated with a newly introduced Graph Order Attention module that dynamically emphasizes the most representative orders for each joint. The resulting spatial features of the sequence are further processed using a proposed temporal Body Aware Transformer that models the global body feature dependencies in the sequence with awareness of the local inter-skeleton feature dependencies of joints. Given that our 3D pose output aligns with the central 2D pose in the sequence, we improve the self-attention mechanism to be aware of the central pose while diminishing its focus gradually towards the first and the last poses. Extensive experiments on Human3.6M, MPI-INF-3DHP, and HumanEva-I datasets demonstrate the effectiveness of the proposed method. Code and models are made available on GitHub.

Extremely Fine-Grained Visual Classification over Resembling Glyphs in the Wild

Aug 25, 2024

Abstract:Text recognition in the wild is an important technique for digital maps and urban scene understanding, in which the natural resemblance between glyphs is one of the major causes of wrong recognition results. To address this challenge, we introduce two extremely fine-grained visual recognition benchmark datasets that contain very challenging resembling glyphs (characters/letters) in the wild to be distinguished. Moreover, we propose a simple yet effective two-stage contrastive learning approach to the extremely fine-grained recognition task of resembling glyphs discrimination. In the first stage, we utilize supervised contrastive learning to leverage label information to warm up the backbone network. In the second stage, we introduce CCFG-Net, a network architecture that integrates classification and contrastive learning in both Euclidean and Angular spaces, in which contrastive learning is applied in both supervised learning and pairwise discrimination manners to enhance the model's feature representation capability. Overall, our proposed approach effectively exploits the complementary strengths of contrastive learning and classification, leading to improved recognition performance on the resembling glyphs. Comparative evaluations with state-of-the-art fine-grained classification approaches under both Convolutional Neural Network (CNN) and Transformer backbones demonstrate the superiority of our proposed method.

A Systematic Review on Long-Tailed Learning

Aug 01, 2024

Abstract:Long-tailed data is a special type of multi-class imbalanced data with a very large number of minority/tail classes that have a very significant combined influence. Long-tailed learning aims to build high-performance models on datasets with long-tailed distributions, which can identify all the classes with high accuracy, in particular the minority/tail classes. It is a cutting-edge research direction that has attracted a remarkable amount of research effort in the past few years. In this paper, we present a comprehensive survey of the latest advances in long-tailed visual learning. We first propose a new taxonomy for long-tailed learning, which consists of eight different dimensions, including data balancing, neural architecture, feature enrichment, logits adjustment, loss function, bells and whistles, network optimization, and post hoc processing techniques. Based on our proposed taxonomy, we present a systematic review of long-tailed learning methods, discussing their commonalities and alignable differences. We also analyze the differences between imbalance learning and long-tailed learning approaches. Finally, we discuss prospects and future directions in this field.

On Mask-based Image Set Desensitization with Recognition Support

Dec 14, 2023

Abstract:In recent years, Deep Neural Networks (DNN) have emerged as a practical method for image recognition. The raw data, which contain sensitive information, are generally exploited within the training process. However, when the training process is outsourced to a third-party organization, the raw data should be desensitized before being transferred to protect sensitive information. Although masks are widely applied to hide important sensitive information, it is critical to prevent inpainting of the masked images, which may restore the sensitive information. The corresponding models should also be adjusted for the masked images to reduce the performance degradation on recognition or classification tasks caused by the desensitization of images. In this paper, we propose a mask-based image desensitization approach that supports recognition. This approach consists of a mask generation algorithm and a model adjustment method. We propose exploiting an interpretation algorithm to maintain critical information for the recognition task in the mask generation algorithm. In addition, we propose a feature selection masknet as the model adjustment method to improve the performance based on the masked images. Extensive experimental results based on multiple image datasets reveal significant advantages (up to 9.34% in terms of accuracy) of our approach for image desensitization while supporting recognition.

An Empirical Study on the Joint Impact of Feature Selection and Data Resampling on Imbalance Classification

Sep 01, 2021

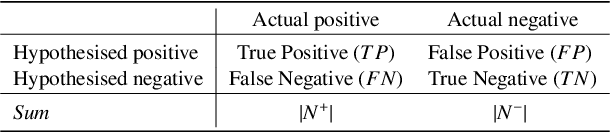

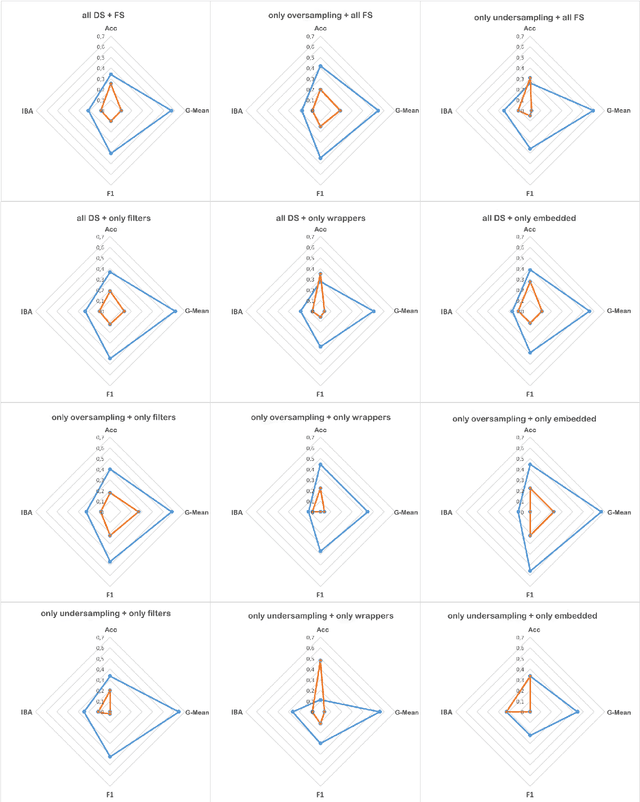

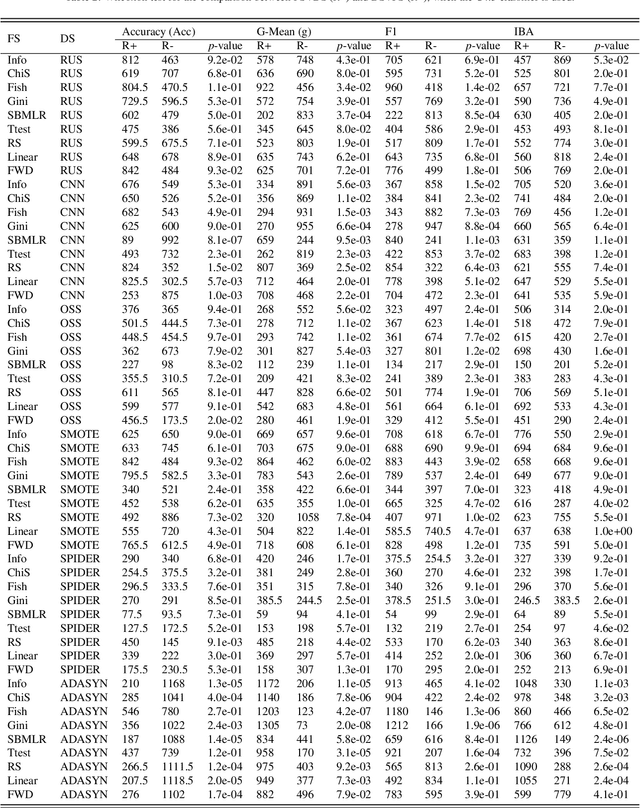

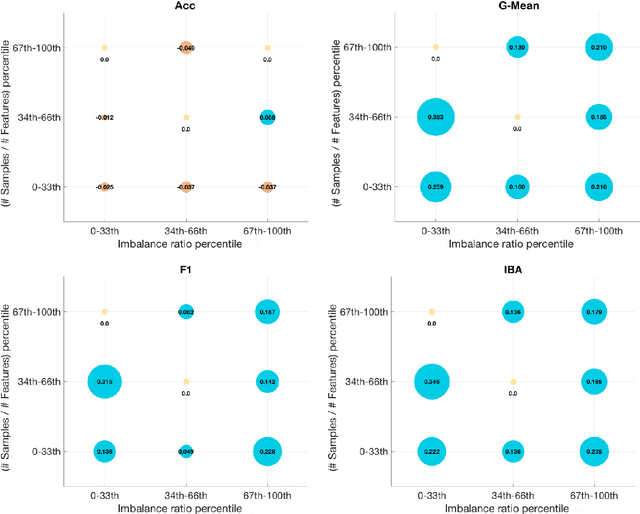

Abstract:Real-world datasets often present different degrees of imbalanced (i.e., long-tailed or skewed) distributions. While the majority (a.k.a. head or frequent) classes have sufficient samples, the minority (a.k.a. tail or rare) classes can be under-represented by a rather limited number of samples. On one hand, data resampling is a common approach to tackling class imbalance. On the other hand, dimension reduction, which reduces the feature space, is a conventional machine learning technique for building stronger classification models on a dataset. However, the possible synergy between feature selection and data resampling for high-performance imbalance classification has rarely been investigated before. To address this issue, this paper carries out a comprehensive empirical study on the joint influence of feature selection and resampling on two-class imbalance classification. Specifically, we study the performance of two opposite pipelines for imbalance classification, i.e., applying feature selection before or after data resampling. We conduct a large number of experiments (9225 in total) on 52 publicly available datasets, using 9 feature selection methods, 6 resampling approaches for class imbalance learning, and 3 well-known classification algorithms. Experimental results show that there is no constant winner between the two pipelines, thus both of them should be considered to derive the best performing model for imbalance classification. We also find that the performance of an imbalance classification model depends on the classifier adopted, the ratio between the number of majority and minority samples (IR), as well as on the ratio between the number of samples and features (SFR). Overall, this study should provide new reference value for researchers and practitioners in imbalance learning.
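
The two dataset statistics named in the abstract, IR and SFR, are simple ratios. The following is a minimal sketch of how they could be computed for a two-class dataset; the function names and the toy label list are assumptions for illustration and are not taken from the paper's code.

```python
from collections import Counter


def imbalance_ratio(labels):
    """IR: number of majority-class samples divided by the number of
    minority-class samples, for a two-class label sequence."""
    counts = Counter(labels)
    if len(counts) != 2:
        raise ValueError("expected exactly two classes")
    return max(counts.values()) / min(counts.values())


def sample_feature_ratio(n_samples, n_features):
    """SFR: number of samples divided by the number of features."""
    if n_features <= 0:
        raise ValueError("n_features must be positive")
    return n_samples / n_features


# Toy dataset: 90 majority samples, 10 minority samples, 20 features.
toy_labels = [0] * 90 + [1] * 10
print(imbalance_ratio(toy_labels))                  # 9.0
print(sample_feature_ratio(len(toy_labels), 20))    # 5.0
```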
