Brett T. Lopez

Joint On-Manifold Gravity and Accelerometer Intrinsics Estimation

Mar 06, 2023

Abstract: Aligning a robot's trajectory or map to the inertial frame is a critical capability that is often difficult to do accurately even though inertial measurement units (IMUs) can observe absolute roll and pitch with respect to gravity. Accelerometer biases and scale factor errors from the IMU's initial calibration are often the major source of inaccuracies when aligning the robot's odometry frame with the inertial frame, especially for low-grade IMUs. Practically, one would simultaneously estimate the true gravity vector, accelerometer biases, and scale factor to improve measurement quality but these quantities are not observable unless the IMU is sufficiently excited. While several methods estimate accelerometer bias and gravity, they do not explicitly address the observability issue nor do they estimate scale factor. We present a fixed-lag factor-graph-based estimator to address both of these issues. In addition to estimating accelerometer scale factor, our method mitigates limited observability by optimizing over a time window an order of magnitude larger than existing methods with significantly lower computational burden. The proposed method, which estimates accelerometer intrinsics and gravity separately from the other states, is enabled by a novel, velocity-agnostic measurement model for intrinsics and gravity, as well as a new method for gravity vector optimization on S2. Accurate IMU state prediction, gravity-alignment, and roll/pitch drift correction are experimentally demonstrated on public and self-collected datasets in diverse environments.

A Contracting Hierarchical Observer for Pose-Inertial Fusion

Mar 05, 2023

Abstract: This work presents a contracting hierarchical observer that fuses position and orientation measurements with an IMU to generate smooth position, linear velocity, orientation, and IMU bias estimates that are guaranteed to converge to their true values. The proposed approach is composed of two contracting observers. The first is a quaternion-based orientation observer that also estimates gyroscope bias. The output of the orientation observer serves as an input for another contracting observer that estimates position, linear velocity, and accelerometer bias thus forming a hierarchy. We show that the proposed observer guarantees all state estimates converge to their true values. Simulation results confirm the theoretical performance guarantees.

Unmatched Control Barrier Functions: Certainty Equivalence Adaptive Safety

Aug 16, 2022

Abstract: This work applies universal adaptive control to control barrier functions to achieve forward invariance of a safe set despite the presence of unmatched parametric uncertainties. The approach combines two ideas. The first is to construct a family of control barrier functions that ensures the system is safe for all possible models. The second is to use online parameter adaptation to methodically select a control barrier function and corresponding safety controller from the allowable set. While such a combination does not necessarily yield forward invariance without additional requirements on the barrier function, we show that such invariance can be established by simply adjusting the adaptation gain online. It is also shown that the developed method is applicable to systems with safety constraints that have a relative degree greater than one. This work thus represents the first adaptive safety approach that successfully employs the certainty equivalence principle for general state constraints without sacrificing safety guarantees.

Direct LiDAR-Inertial Odometry

Mar 18, 2022

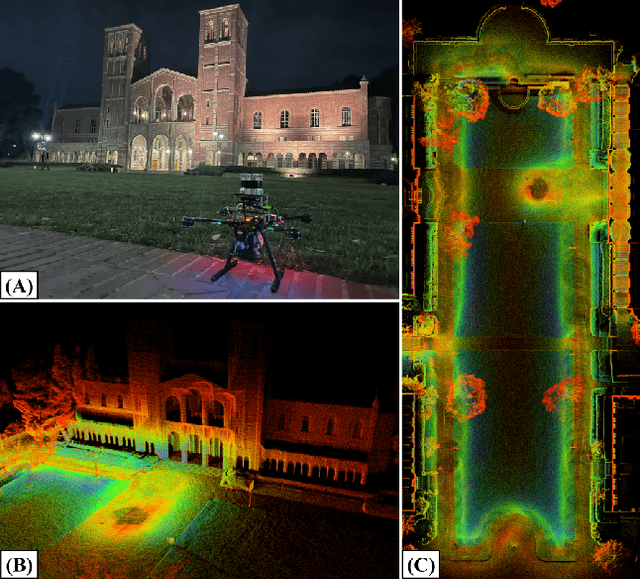

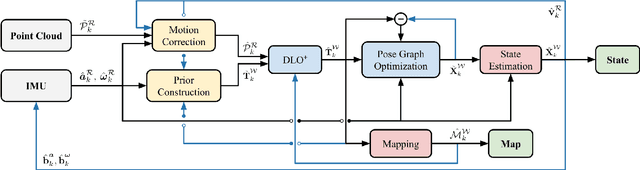

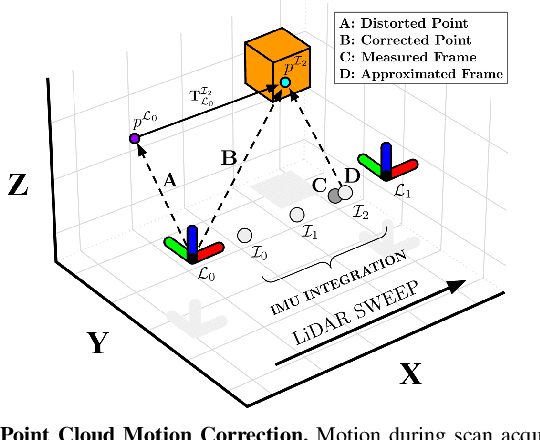

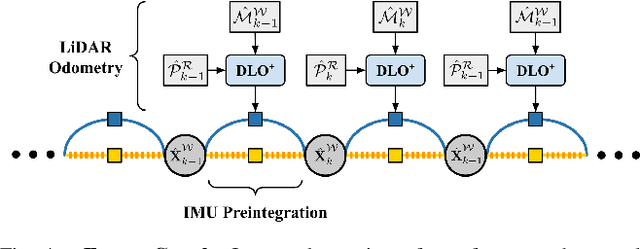

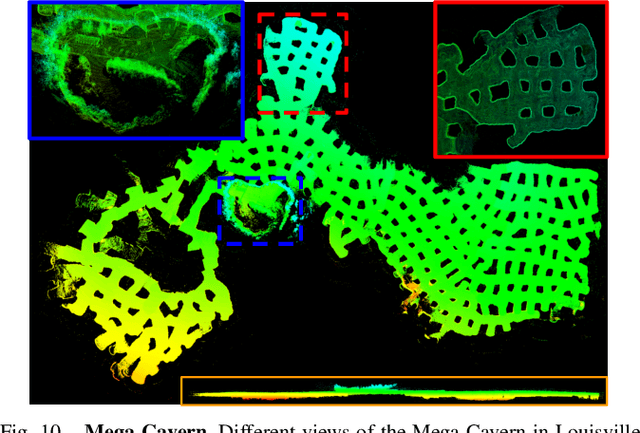

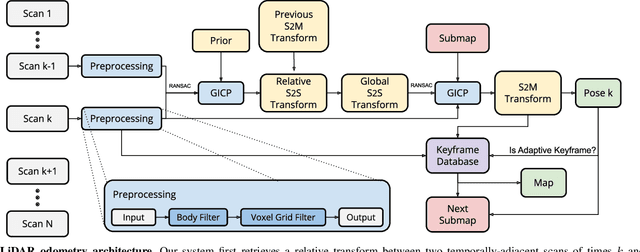

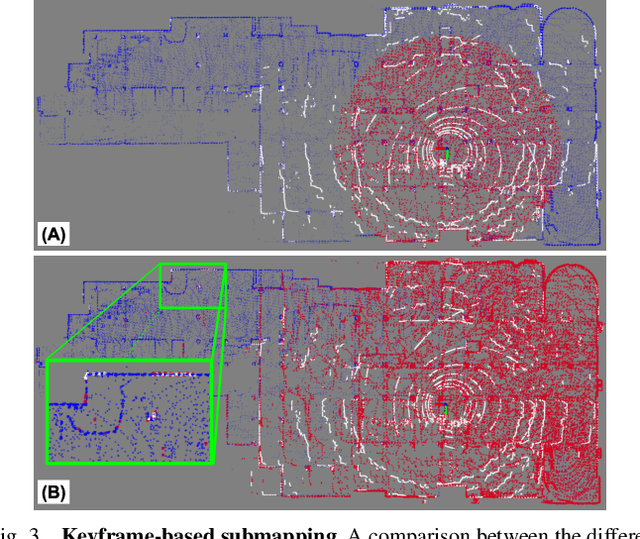

Abstract: This paper proposes a new LiDAR-inertial odometry framework that generates accurate state estimates and detailed maps in real-time on resource-constrained mobile robots. Our Direct LiDAR-Inertial Odometry (DLIO) algorithm utilizes a hybrid architecture that combines the benefits of loosely-coupled and tightly-coupled IMU integration to enhance reliability and real-time performance while improving accuracy. The proposed architecture has two key elements. The first is a fast keyframe-based LiDAR scan-matcher that builds an internal map by registering dense point clouds to a local submap with a translational and rotational prior generated by a nonlinear motion model. The second is a factor graph and high-rate propagator that fuses the output of the scan-matcher with preintegrated IMU measurements for up-to-date pose, velocity, and bias estimates. These estimates enable us to accurately deskew the next point cloud using a nonlinear kinematic model for precise motion correction, in addition to initializing the next scan-to-map optimization prior. We demonstrate DLIO's superior localization accuracy, map quality, and lower computational overhead by comparing it to the state-of-the-art using multiple benchmark, public, and self-collected datasets on both consumer and hobby-grade hardware.

Direct LiDAR Odometry: Fast Localization with Dense Point Clouds

Oct 01, 2021

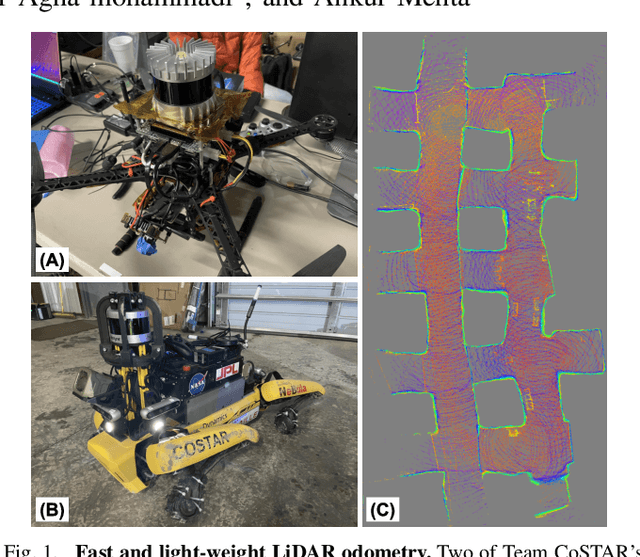

Abstract: This paper presents a lightweight frontend LiDAR odometry solution with consistent and accurate localization for computationally-limited robotic platforms. Our Direct LiDAR Odometry (DLO) method includes several key algorithmic innovations that prioritize computational efficiency and enable the use of full, minimally-preprocessed point clouds to provide accurate pose estimates in real-time. This work also presents several important algorithmic insights and design choices from developing on platforms with shared or otherwise limited computational resources, including a custom iterative closest point solver for fast point cloud registration with data structure recycling. Our method is more accurate with lower computational overhead than the current state-of-the-art and has been extensively evaluated in several perceptually-challenging environments on aerial and legged robots as part of NASA JPL Team CoSTAR's research and development efforts for the DARPA Subterranean Challenge.

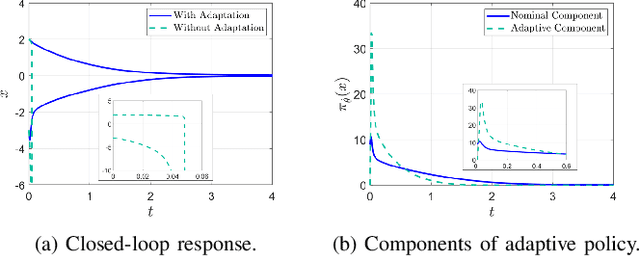

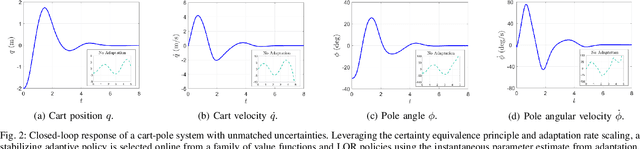

Adaptive Variants of Optimal Feedback Policies

Apr 06, 2021

Abstract: We combine adaptive control directly with optimal or near-optimal value functions to enhance stability and closed-loop performance in systems with parametric uncertainties. Leveraging the fundamental result that a value function is also a control Lyapunov function (CLF), combined with the fact that direct adaptive control can be immediately used once a CLF is known, we prove asymptotic closed-loop convergence of adaptive feedback controllers derived from optimization-based policies. Both matched and unmatched parametric variations are addressed, where the latter exploits a new technique based on adaptation rate scaling. The results may have particular resonance in machine learning for dynamical systems, where nominal feedback controllers are typically optimization-based but need to remain effective (beyond mere robustness) in the presence of significant but structured variations in parameters.

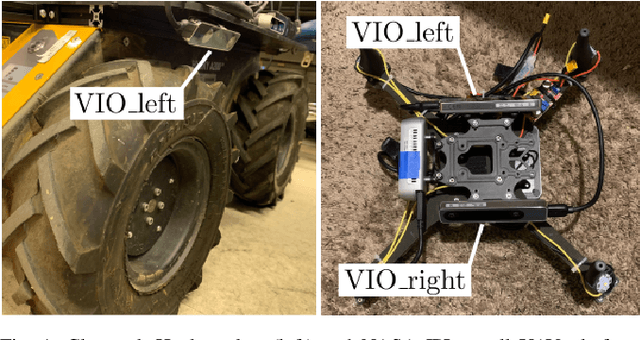

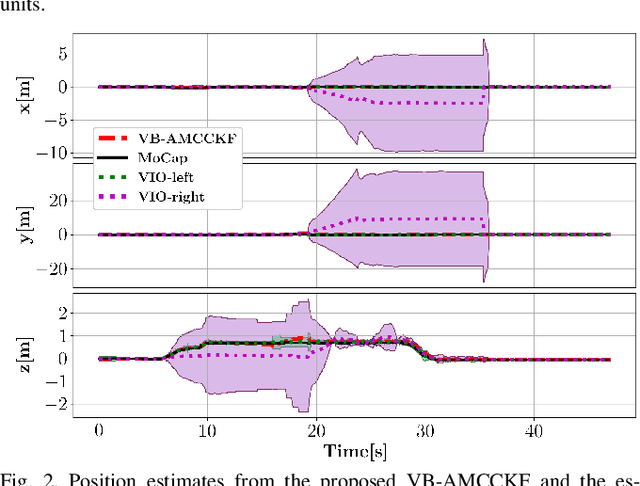

Towards Robust State Estimation by Boosting the Maximum Correntropy Criterion Kalman Filter with Adaptive Behaviors

Mar 29, 2021

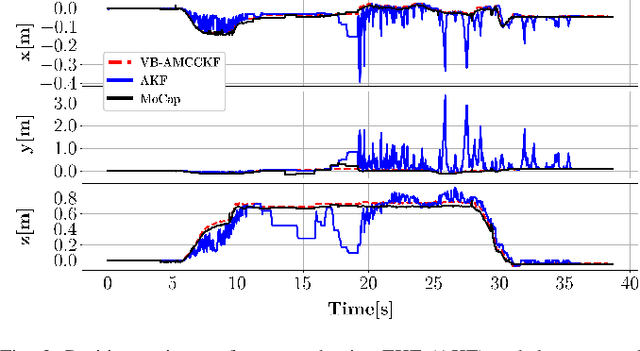

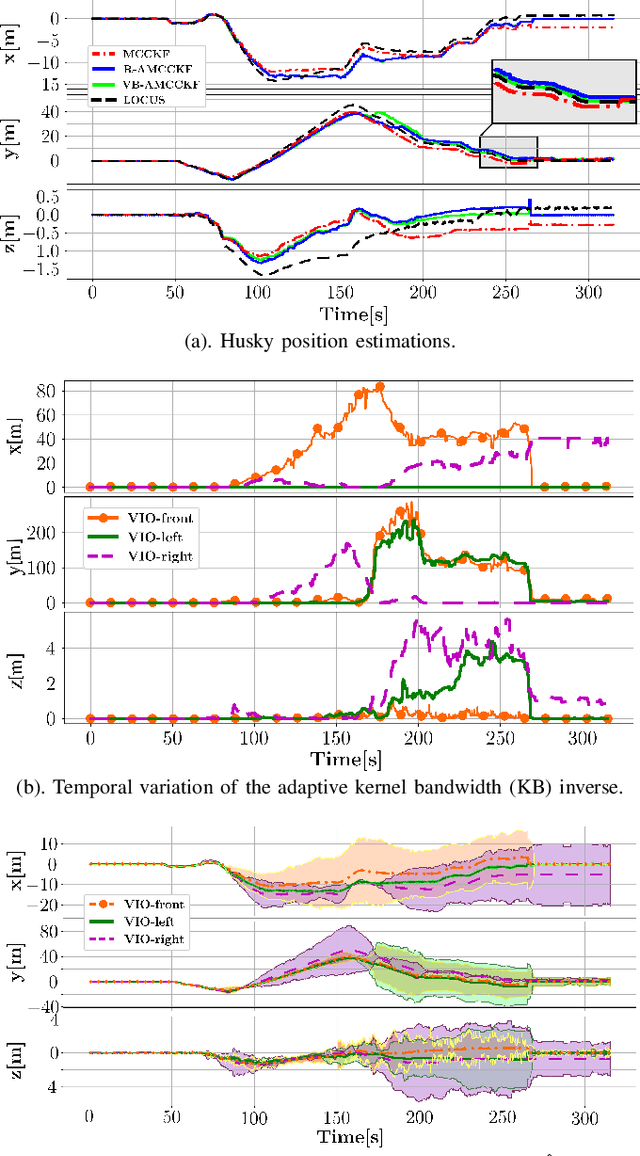

Abstract: This work proposes a resilient and adaptive state estimation framework for robots operating in perceptually-degraded environments. The approach, called Adaptive Maximum Correntropy Criterion Kalman Filtering (AMCCKF), is inherently robust to corrupted measurements, such as those containing jumps or general non-Gaussian noise, and is able to modify filter parameters online to improve performance. Two separate methods are developed -- the Variational Bayesian AMCCKF (VB-AMCCKF) and Residual AMCCKF (R-AMCCKF) -- that modify the process and measurement noise models in addition to the bandwidth of the kernel function used in MCCKF based on the quality of measurements received. The two approaches differ in computational complexity and overall performance, which are analyzed experimentally. The method is demonstrated in real experiments on both aerial and ground robots and is part of the solution used by the COSTAR team participating in the DARPA Subterranean Challenge.

Universal Adaptive Control for Uncertain Nonlinear Systems

Dec 31, 2020

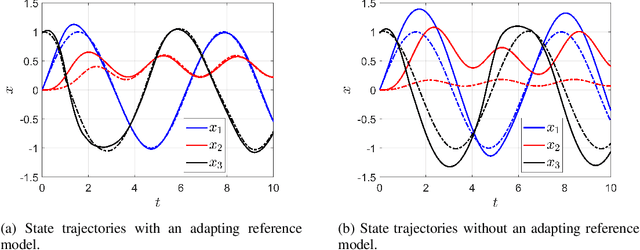

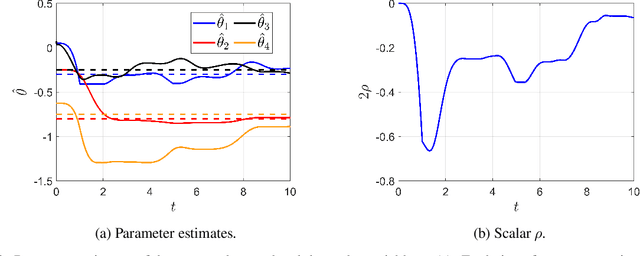

Abstract: Precise motion planning and control require accurate models which are often difficult, expensive, or time-consuming to obtain. Online model learning is an attractive approach that can handle model variations while achieving the desired level of performance. However, several model learning methods developed within adaptive nonlinear control are limited to certain systems or types of uncertainties. In particular, the so-called unmatched uncertainties pose significant problems for existing methods if the system is not in a particular form. This work presents an adaptive control framework for nonlinear systems with unmatched uncertainties that addresses several of the limitations of existing methods through two key innovations. The first is leveraging contraction theory and a new type of contraction metric that, when coupled with an adaptation law, is able to track feasible trajectories generated by an adapting reference model. The second is a natural modulation of the learning rate so the closed-loop system remains stable during learning transients. The proposed approach is more general than existing methods as it is able to handle unmatched uncertainties while only requiring the system be nominally contracting in closed-loop. Additionally, it can be used with learned feedback policies that are known to be contracting in some metric, facilitating transfer learning and bridging the sim2real gap. Simulation results demonstrate the effectiveness of the method.

Sliding on Manifolds: Geometric Attitude Control with Quaternions

Nov 07, 2020

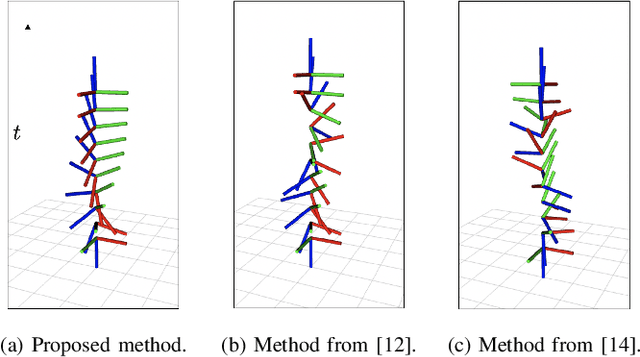

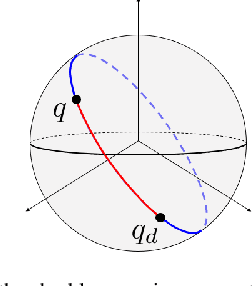

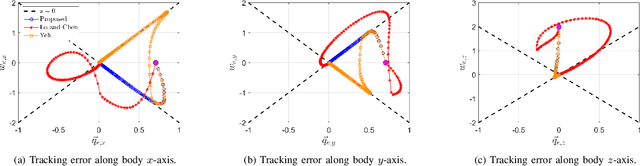

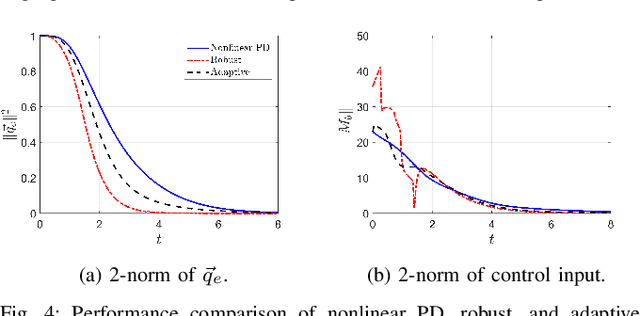

Abstract: This work proposes a quaternion-based sliding variable that describes exponentially convergent error dynamics for any forward complete desired attitude trajectory. The proposed sliding variable directly operates on the non-Euclidean space formed by quaternions and explicitly handles the double covering property to enable global attitude tracking when used in feedback. In-depth analysis of the sliding variable is provided and compared to others in the literature. Several feedback controllers including nonlinear PD, robust, and adaptive sliding control are then derived. Simulation results of a rigid body with uncertain dynamics demonstrate the effectiveness and superiority of the approach.

Unsupervised Monocular Depth Learning with Integrated Intrinsics and Spatio-Temporal Constraints

Nov 02, 2020

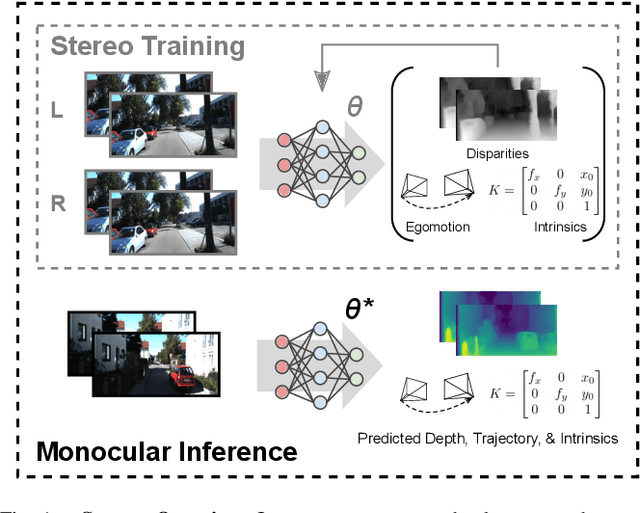

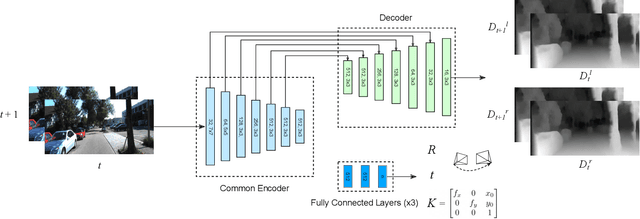

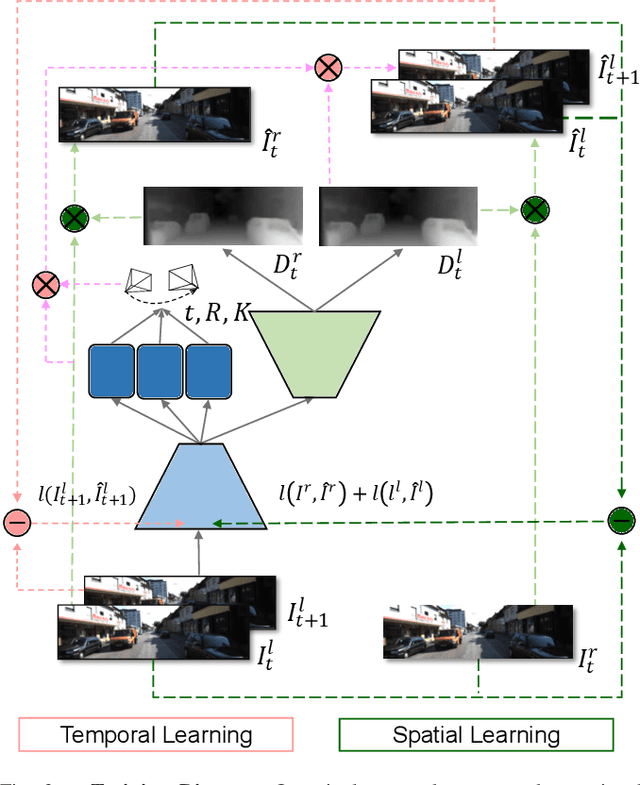

Abstract: Monocular depth inference has gained tremendous attention from researchers in recent years and remains a promising replacement for expensive time-of-flight sensors, but issues with scale acquisition and implementation overhead still plague these systems. To this end, this work presents an unsupervised learning framework that is able to predict at-scale depth maps and egomotion, in addition to camera intrinsics, from a sequence of monocular images via a single network. Our method incorporates both spatial and temporal geometric constraints to resolve depth and pose scale factors, which are enforced within the supervisory reconstruction loss functions at training time. Only unlabeled stereo sequences are required for training the weights of our single-network architecture, which reduces overall implementation overhead as compared to previous methods. Our results demonstrate strong performance when compared to the current state-of-the-art on multiple sequences of the KITTI driving dataset.
