Binghao Tang

MTFM: A Scalable and Alignment-free Foundation Model for Industrial Recommendation in Meituan

Feb 13, 2026

Abstract:Industrial recommendation systems typically involve multiple scenarios, yet existing cross-domain (CDR) and multi-scenario (MSR) methods often require prohibitive resources and strict input alignment, limiting their extensibility. We propose MTFM (Meituan Foundation Model for Recommendation), a transformer-based framework that addresses these challenges. Instead of pre-aligning inputs, MTFM transforms cross-domain data into heterogeneous tokens, capturing multi-scenario knowledge in an alignment-free manner. To enhance efficiency, we first introduce a multi-scenario user-level sample aggregation that significantly enhances training throughput by reducing the total number of instances. We further integrate Grouped-Query Attention and a customized Hybrid Target Attention to minimize memory usage and computational complexity. Furthermore, we implement various system-level optimizations, such as kernel fusion and the elimination of CPU-GPU blocking, to further enhance both training and inference throughput. Offline and online experiments validate the effectiveness of MTFM, demonstrating that significant performance gains are achieved by scaling both model capacity and multi-scenario training data.
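The abstract does not spell out how the user-level sample aggregation works, so the following is only a minimal sketch of the general idea under stated assumptions: per-impression instances from several scenarios are grouped so that each user contributes one training sample. The function name `aggregate_by_user` and the field names (`user_id`, `scenario`, `item_id`, `label`) are hypothetical and do not come from the paper.

```python
from collections import defaultdict


def aggregate_by_user(instances):
    """Group per-impression instances into one sample per user.

    Hypothetical illustration: each input dict is one (user, item) impression
    from some scenario; the output holds one dict per user with all of that
    user's targets, so the shared user context is only processed once.
    """
    grouped = defaultdict(list)
    for inst in instances:
        grouped[inst["user_id"]].append(
            {
                "scenario": inst["scenario"],
                "item_id": inst["item_id"],
                "label": inst["label"],
            }
        )
    # dicts preserve insertion order, so users appear in first-seen order
    return [{"user_id": uid, "targets": targets} for uid, targets in grouped.items()]


# Toy data with made-up scenario names and identifiers.
instances = [
    {"user_id": "u1", "scenario": "food_delivery", "item_id": "i10", "label": 1},
    {"user_id": "u2", "scenario": "hotel", "item_id": "i77", "label": 0},
    {"user_id": "u1", "scenario": "hotel", "item_id": "i42", "label": 0},
    {"user_id": "u1", "scenario": "food_delivery", "item_id": "i11", "label": 0},
]
samples = aggregate_by_user(instances)
print(len(instances), "instances ->", len(samples), "aggregated samples")
```

In this toy case four instances collapse into two samples, which is the kind of instance-count reduction the abstract credits for higher training throughput.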

Affective Image Editing: Shaping Emotional Factors via Text Descriptions

May 24, 2025

Abstract:In daily life, images as common affective stimuli have widespread applications. Despite significant progress in text-driven image editing, there is limited work focusing on understanding users' emotional requests. In this paper, we introduce AIEdiT for Affective Image Editing using Text descriptions, which evokes specific emotions by adaptively shaping multiple emotional factors across the entire image. To represent universal emotional priors, we build the continuous emotional spectrum and extract nuanced emotional requests. To manipulate emotional factors, we design the emotional mapper to translate visually-abstract emotional requests to visually-concrete semantic representations. To ensure that editing results evoke specific emotions, we introduce an MLLM to supervise the model training. During inference, we strategically distort visual elements and subsequently shape corresponding emotional factors to edit images according to users' instructions. Additionally, we introduce a large-scale dataset that includes the emotion-aligned text and image pair set for training and evaluation. Extensive experiments demonstrate that AIEdiT achieves superior performance, effectively reflecting users' emotional requests.

ChatGPT is a Potential Zero-Shot Dependency Parser

Oct 25, 2023

Abstract:Pre-trained language models have been widely used in dependency parsing tasks and have achieved significant improvements in parser performance. However, it remains an understudied question whether pre-trained language models can spontaneously exhibit the ability of dependency parsing without introducing additional parser structures in the zero-shot scenario. In this paper, we propose to explore the dependency parsing ability of large language models such as ChatGPT and conduct linguistic analysis. The experimental results demonstrate that ChatGPT is a potential zero-shot dependency parser, and the linguistic analysis also shows some unique preferences in parsing outputs.

Schema-Free Dependency Parsing via Sequence Generation

Jan 28, 2022

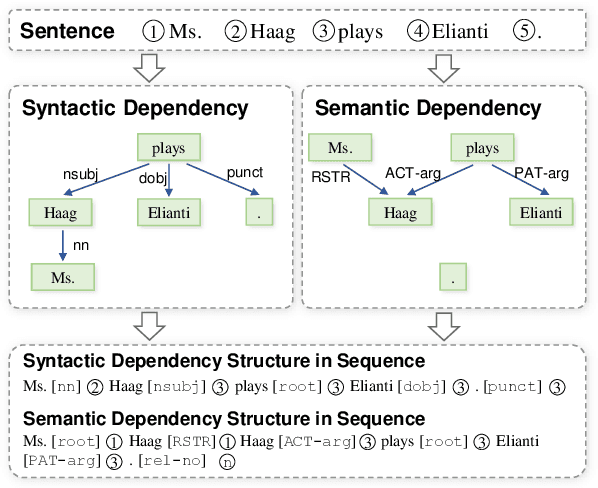

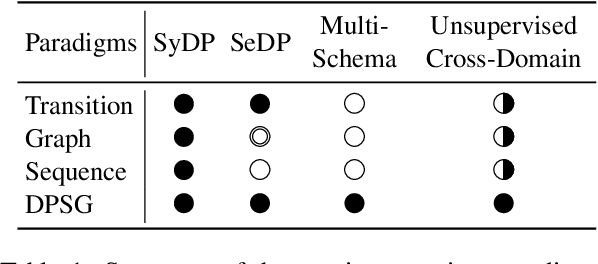

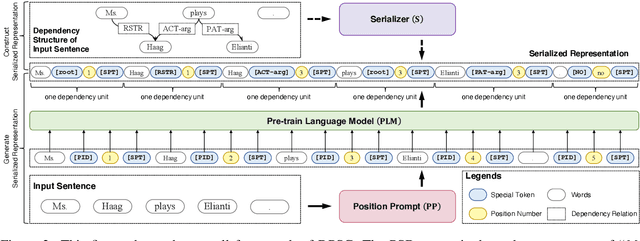

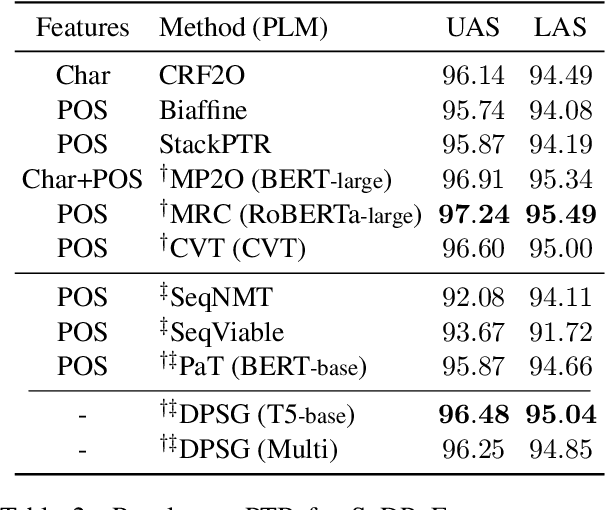

Abstract:Dependency parsing aims to extract syntactic dependency structure or semantic dependency structure for sentences. Existing methods suffer from a lack of universality or a heavy reliance on an auxiliary decoder. To remedy these drawbacks, we propose to achieve universal and schema-free Dependency Parsing (DP) via Sequence Generation (SG), termed DPSG, by utilizing only the pre-trained language model (PLM) without any auxiliary structures or parsing algorithms. We first explore different serialization design strategies for converting parsing structures into sequences. Then we design dependency units and concatenate these units into the sequence for DPSG. Thanks to the high flexibility of sequence generation, our DPSG can achieve both syntactic DP and semantic DP using a single model. By concatenating a prefix that indicates the specific schema with the sequence, our DPSG can even accomplish multi-schemata parsing. The effectiveness of our DPSG is demonstrated by experiments on widely used DP benchmarks, i.e., PTB, CODT, SDP15, and SemEval16. DPSG achieves results comparable with the first-tier methods on all the benchmarks and even state-of-the-art (SOTA) performance on CODT and SemEval16. This paper demonstrates that our DPSG has the potential to be a new parsing paradigm. We will release our code upon acceptance.
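To make the idea of serializing a parse into a sequence of dependency units with a schema prefix more concrete, here is a small sketch. The unit format (`index:word <relation> head_index:head_word`), the `[SYN]` prefix, the separator, and the function names are all assumptions chosen for illustration; the abstract does not specify the actual serialization used by DPSG.

```python
def serialize(tokens, heads, relations, schema="SYN"):
    """Turn a dependency tree into one flat string (hypothetical format).

    heads[i] is the 1-based index of the head of token i+1, with 0 for the root.
    Each token yields one unit; units are joined after a schema prefix.
    """
    units = []
    for i, (tok, head, rel) in enumerate(zip(tokens, heads, relations), start=1):
        head_word = "ROOT" if head == 0 else tokens[head - 1]
        units.append(f"{i}:{tok} <{rel}> {head}:{head_word}")
    return f"[{schema}] " + " ; ".join(units)


def deserialize(sequence):
    """Recover (schema, [(dependent, relation, head), ...]) from the string.

    Assumes tokens contain no spaces, which holds for this toy example.
    """
    prefix, body = sequence.split("] ", 1)
    schema = prefix[1:]  # drop the leading "["
    arcs = []
    for unit in body.split(" ; "):
        dep, rel, head = unit.split(" ")
        arcs.append((int(dep.split(":", 1)[0]), rel.strip("<>"), int(head.split(":", 1)[0])))
    return schema, arcs


# Toy sentence with illustrative relation labels.
tokens = ["She", "reads", "books"]
heads = [2, 0, 2]
relations = ["nsubj", "root", "obj"]

seq = serialize(tokens, heads, relations)
print(seq)
print(deserialize(seq))
```

Because the target is plain text, a sequence-to-sequence PLM could in principle be trained to emit such strings directly, and changing the prefix is one way a single model might switch between schemata.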
