Barbara Bruno

Paving the Way for Culturally Competent Robots: a Position Paper

Mar 22, 2018

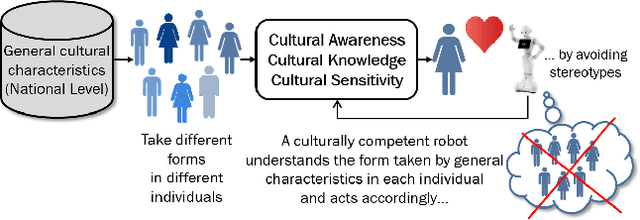

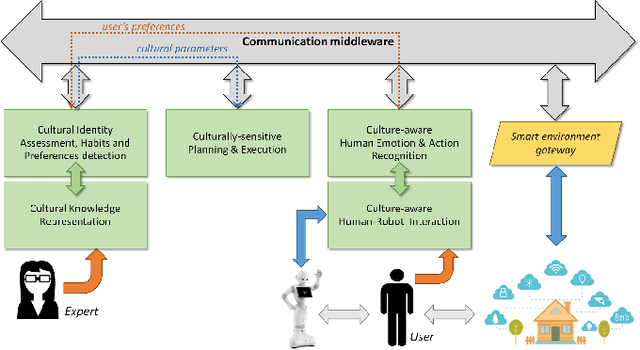

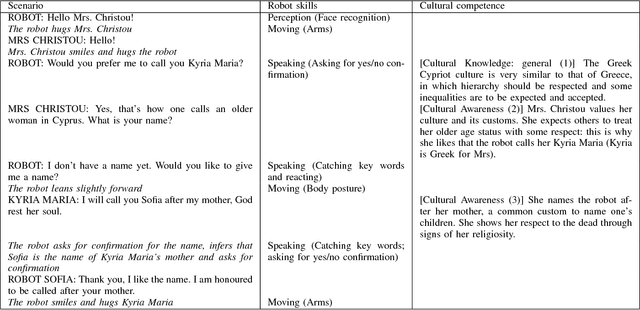

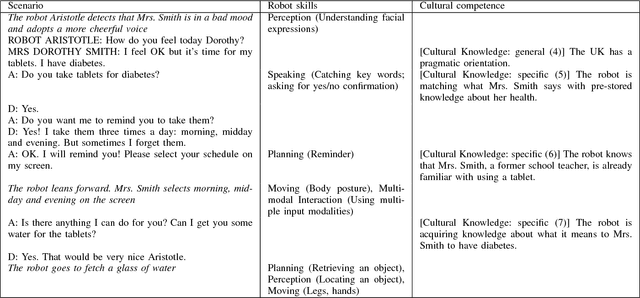

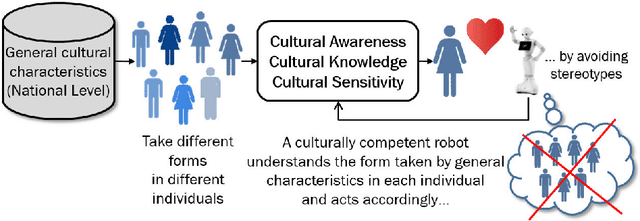

Abstract: Cultural competence is a well-known requirement for effective healthcare, widely investigated in the nursing literature. We claim that personal assistive robots should likewise be culturally competent: aware of general cultural characteristics and of the different forms they take in different individuals, and sensitive to cultural differences while perceiving, reasoning, and acting. Drawing inspiration from existing guidelines for culturally competent healthcare and the state of the art in culturally competent robotics, we identify the key robot capabilities which enable culturally competent behaviours and discuss methodologies for their development and evaluation.

* Presented at: 26th IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN), 2017, Lisbon, Portugal. arXiv admin note: substantial text overlap with arXiv:1708.06276

Modelling the Influence of Cultural Information on Vision-Based Human Home Activity Recognition

Mar 21, 2018

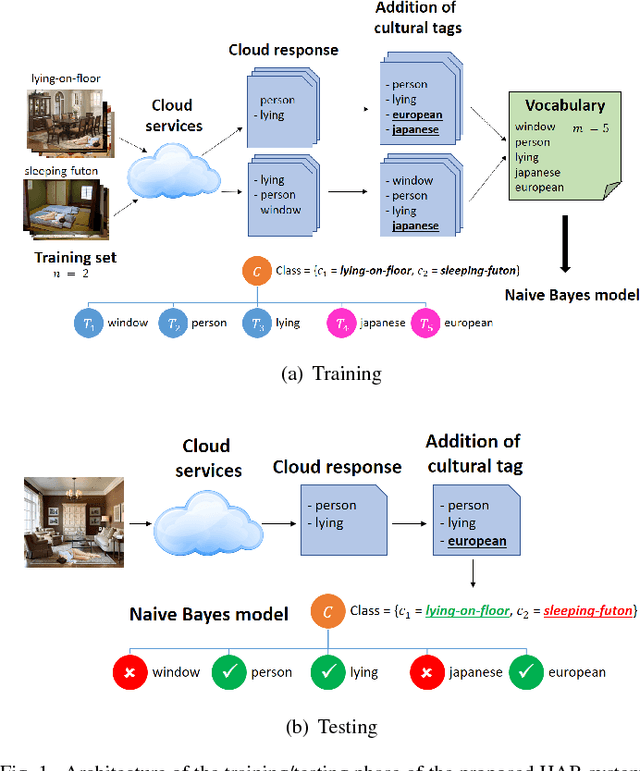

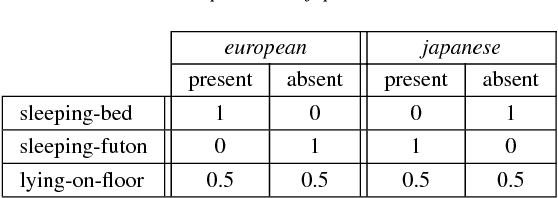

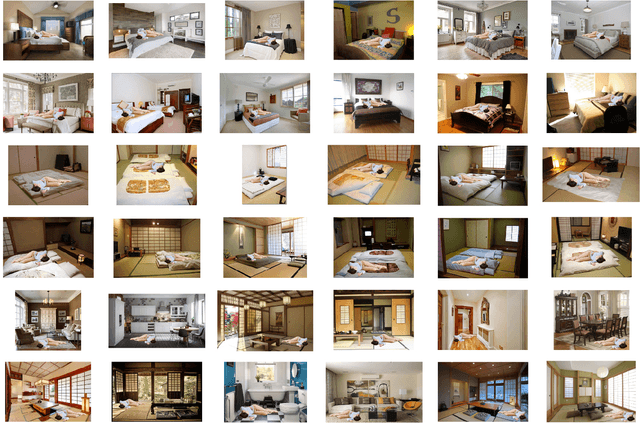

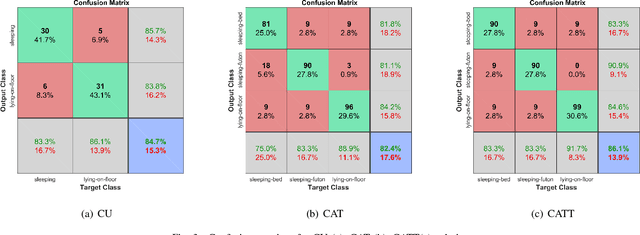

Abstract: Daily life activities, such as eating and sleeping, are deeply influenced by a person's culture, which leads to differences in the way the same activity is performed by individuals belonging to different cultures. We argue that taking cultural information into account can improve the performance of systems for the automated recognition of human activities. We propose four different solutions to the problem and present a system which uses a Naive Bayes model to associate cultural information with semantic information extracted from still images. Preliminary experiments with a dataset of images of individuals lying on the floor, sleeping on a futon and sleeping on a bed suggest that: i) solutions explicitly taking cultural information into account are more accurate than culture-unaware solutions; and ii) the proposed system is a promising starting point for the development of culture-aware Human Activity Recognition methods.

* 7 pages, 4 figures, Proc. URAI2017, International Conference on Ubiquitous Robots and Ambient Intelligence, Maison Glad Jeju, Jeju, Korea from June 28-July 2017
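To make the Naive Bayes idea in the abstract above more concrete, here is a minimal sketch of a categorical Naive Bayes classifier that combines a cultural feature with a semantic label extracted from an image. It is not the paper's system: the class `CategoricalNaiveBayes`, the culture groups `"A"` and `"B"`, the surface labels, the smoothing value and all training rows are invented for illustration.

```python
import math
from collections import Counter, defaultdict


class CategoricalNaiveBayes:
    """Naive Bayes over categorical features with Laplace smoothing."""

    def __init__(self, alpha=1.0):
        self.alpha = alpha  # smoothing strength (assumed value)

    def fit(self, rows, labels):
        self.class_counts = Counter(labels)
        self.total = len(labels)
        # Distinct values seen for each feature position.
        self.vocab = [set(col) for col in zip(*rows)]
        # (class, feature index) -> counts of each feature value.
        self.value_counts = defaultdict(Counter)
        for row, label in zip(rows, labels):
            for i, value in enumerate(row):
                self.value_counts[(label, i)][value] += 1
        return self

    def log_scores(self, row):
        """Unnormalised log posterior of each class for one observation."""
        scores = {}
        for label, n_label in self.class_counts.items():
            score = math.log(n_label / self.total)  # log prior
            for i, value in enumerate(row):
                num = self.value_counts[(label, i)][value] + self.alpha
                den = n_label + self.alpha * len(self.vocab[i])
                score += math.log(num / den)  # log likelihood of feature i
            scores[label] = score
        return scores

    def predict(self, row):
        scores = self.log_scores(row)
        return max(scores, key=scores.get)


# Invented toy data: (culture group, surface label detected in the image).
rows = (
    [("A", "futon")] * 2 + [("A", "floor")] * 2 + [("B", "bed")] * 2
    + [("B", "floor")] * 3 + [("A", "floor")]
)
labels = ["sleeping"] * 6 + ["lying_on_floor"] * 4

model = CategoricalNaiveBayes().fit(rows, labels)
print(model.predict(("A", "floor")))  # sleeping
print(model.predict(("B", "floor")))  # lying_on_floor
```

In this toy data the same detected surface, `"floor"`, is classified differently depending on the culture feature, which is the kind of effect a culture-aware recogniser is meant to capture. A culture-unaware baseline would simply drop the first feature from every row.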

Towards a new paradigm for assistive technology at home: research challenges, design issues and performance assessment

Oct 27, 2017

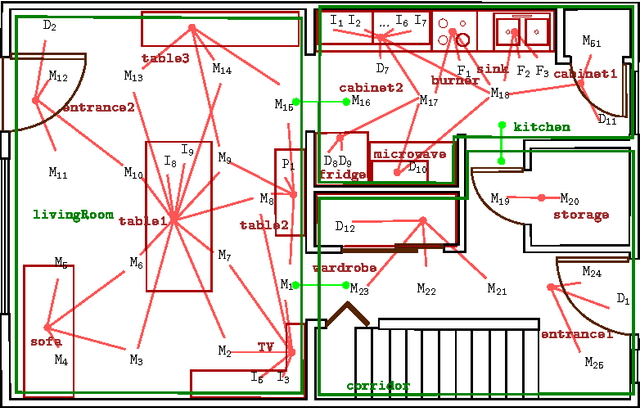

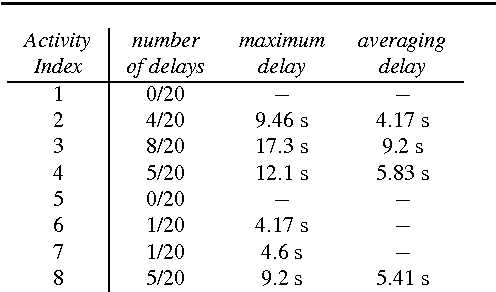

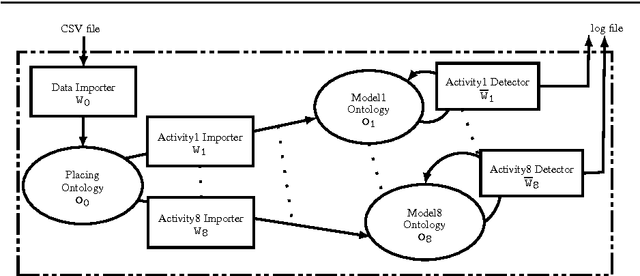

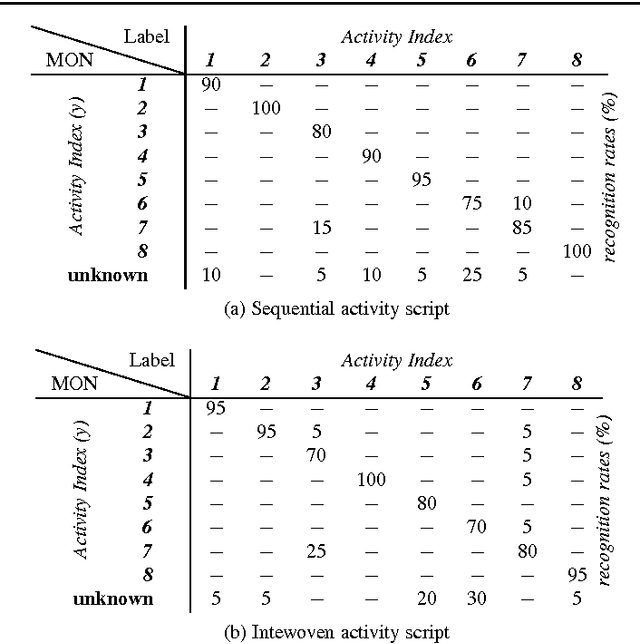

Abstract: Providing the elderly and people with special needs, including those suffering from physical disabilities and chronic diseases, with the possibility of retaining as much independence as possible is one of the most important challenges our society is expected to face. Assistance models based on the home care paradigm are being adopted rapidly in almost all industrialized and emerging countries. Such paradigms hypothesize that it is necessary to ensure that the so-called Activities of Daily Living (ADL) are correctly and regularly performed by the assisted person to increase the perception of an improved quality of life. This chapter describes the computational inference engine at the core of Arianna, a system able to understand whether an assisted person performs a given set of ADL and to motivate him/her to perform them through a speech-mediated motivational dialogue, using a set of nearables to be installed in an apartment, plus a wearable to be worn or fitted into garments.

The CARESSES EU-Japan project: making assistive robots culturally competent

Aug 21, 2017

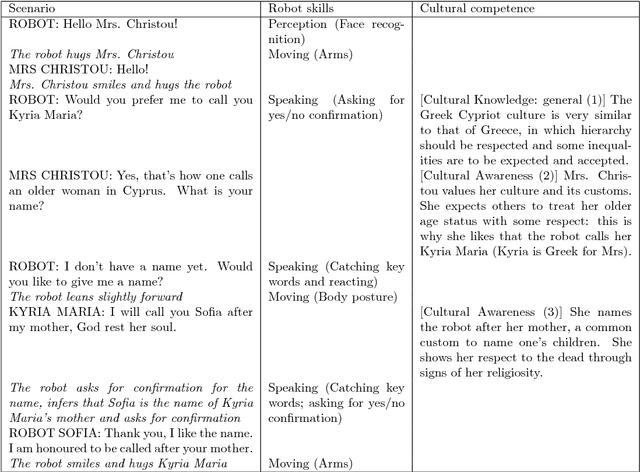

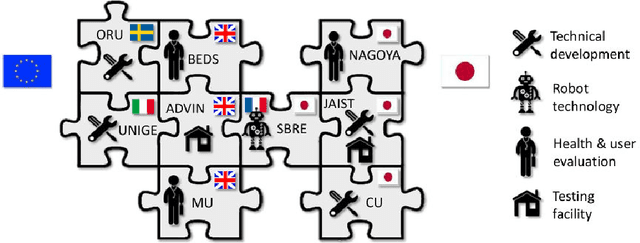

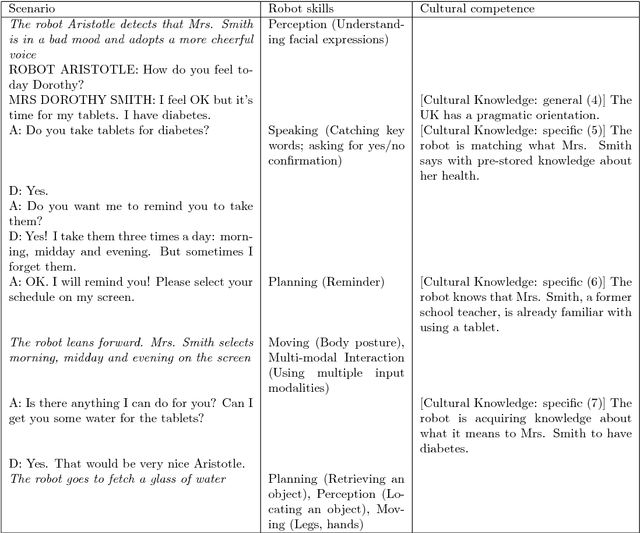

Abstract: The nursing literature shows that cultural competence is an important requirement for effective healthcare. We claim that personal assistive robots should likewise be culturally competent, that is, they should be aware of general cultural characteristics and of the different forms they take in different individuals, and take these into account while perceiving, reasoning, and acting. The CARESSES project is a Europe-Japan collaborative effort that aims at designing, developing and evaluating culturally competent assistive robots. These robots will be able to adapt the way they behave, speak and interact to the cultural identity of the person they assist. This paper describes the approach taken in the CARESSES project, its initial steps, and its future plans.

Detection of bimanual gestures everywhere: why it matters, what we need and what is missing

Jul 09, 2017

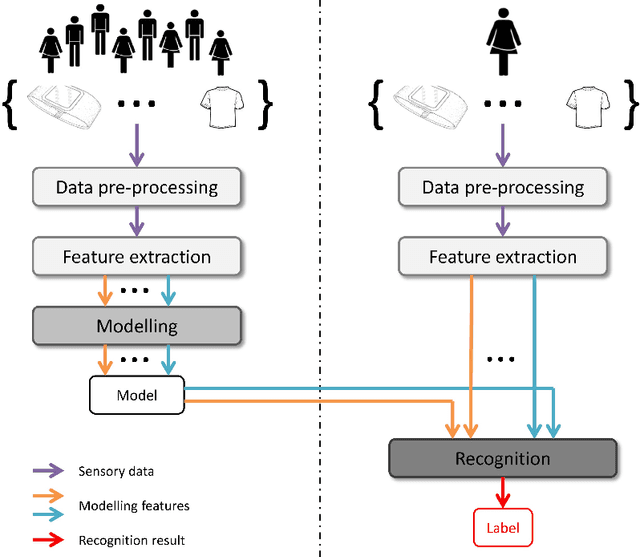

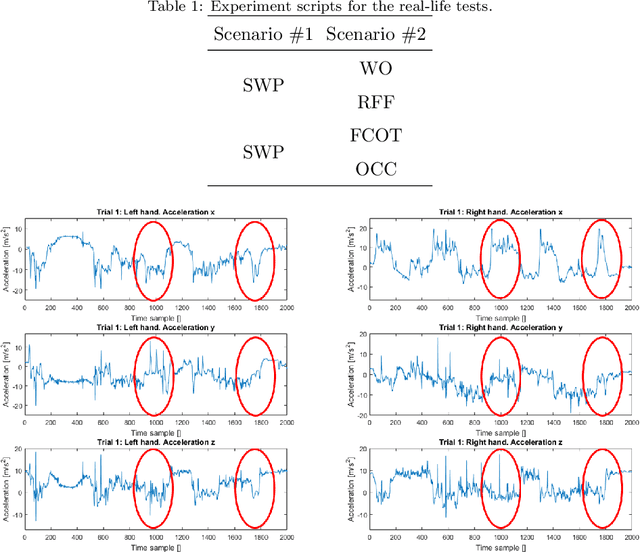

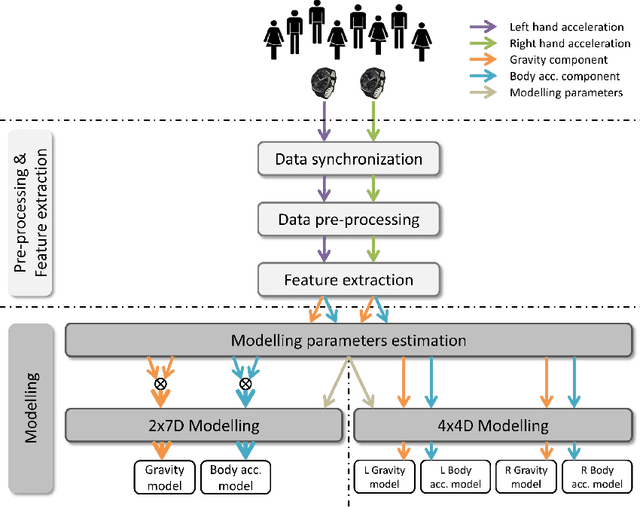

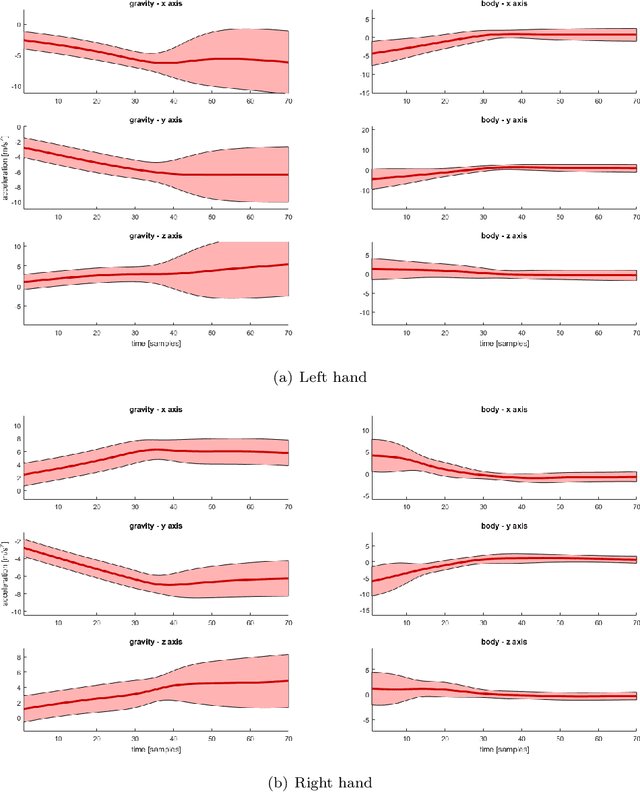

Abstract: Bimanual gestures are of the utmost importance for the study of motor coordination in humans and in everyday activities. A reliable detection of bimanual gestures in unconstrained environments is fundamental for their clinical study and to assess common activities of daily living. This paper investigates techniques for a reliable, unconstrained detection and classification of bimanual gestures. It assumes the availability of inertial data originating from the two hands/arms, builds upon a previously developed technique for gesture modelling based on Gaussian Mixture Modelling (GMM) and Gaussian Mixture Regression (GMR), and compares different modelling and classification techniques, which are based on a number of assumptions inspired by the literature about how bimanual gestures are represented and modelled in the brain. Experiments show results related to 5 everyday bimanual activities, which have been selected on the basis of three main parameters: (not) constraining the two hands by a physical tool, (not) requiring a specific sequence of single-hand gestures, being recursive (or not). In the best performing combination of modelling approach and classification technique, five out of five activities are recognized with an accuracy of up to 97%, a precision of 82% and a recall of 100%.
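As a rough illustration of the regression step named in the abstract above, the following sketch computes a Gaussian Mixture Regression estimate of a signal value `x` at time `t` from an already fitted mixture over `(t, x)`. It is a one-dimensional simplification under stated assumptions, not the paper's pipeline: the function names, the dictionary layout of each component and every parameter value are hypothetical, and the fitting of the mixture itself (for example by expectation-maximisation) is left out.

```python
import math


def gaussian_pdf(t, mean, var):
    """Density of a univariate Gaussian at t."""
    return math.exp(-0.5 * (t - mean) ** 2 / var) / math.sqrt(2.0 * math.pi * var)


def gmr_predict(t, components):
    """Expected value of x given t under a mixture of 2-D Gaussians over (t, x).

    Each component is a dict with keys: weight, mean_t, mean_x, var_t, cov_tx.
    """
    # Weight of each component at time t (prior times marginal likelihood).
    weighted = [
        c["weight"] * gaussian_pdf(t, c["mean_t"], c["var_t"]) for c in components
    ]
    total = sum(weighted)
    if total == 0.0:
        raise ValueError("t is too far from every component to evaluate")

    estimate = 0.0
    for w, c in zip(weighted, components):
        responsibility = w / total
        # Conditional mean of x given t for this component.
        conditional_mean = c["mean_x"] + c["cov_tx"] / c["var_t"] * (t - c["mean_t"])
        estimate += responsibility * conditional_mean
    return estimate


# Hypothetical two-component model of one acceleration axis over time.
model = [
    {"weight": 0.5, "mean_t": 0.0, "mean_x": 1.0, "var_t": 1.0, "cov_tx": 0.0},
    {"weight": 0.5, "mean_t": 10.0, "mean_x": 5.0, "var_t": 1.0, "cov_tx": 0.0},
]
trajectory = [gmr_predict(t, model) for t in (0.0, 5.0, 10.0)]
```

Evaluating `gmr_predict` over a grid of time values yields a smooth expected trajectory, which can then be compared against incoming inertial data with whatever distance measure the classifier uses.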

Flexible human-robot cooperation models for assisted shop-floor tasks

Jul 09, 2017

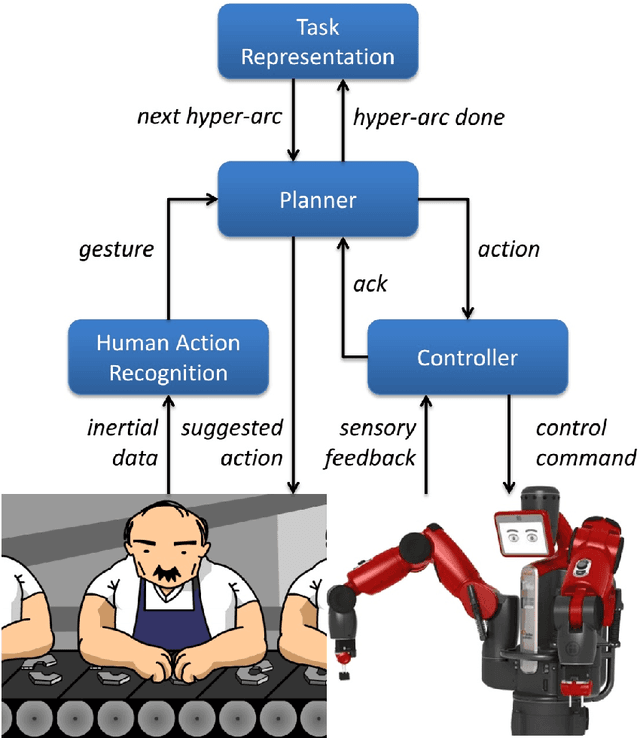

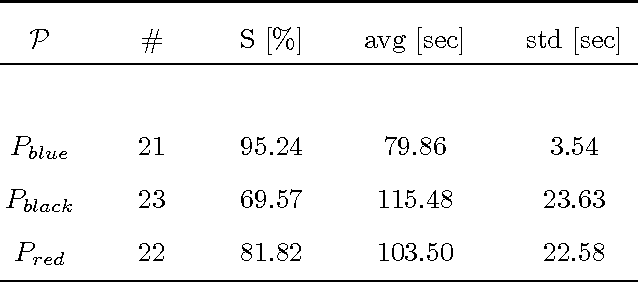

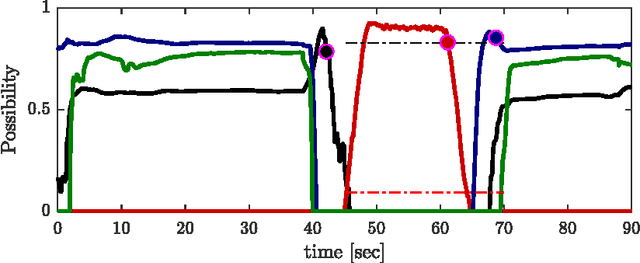

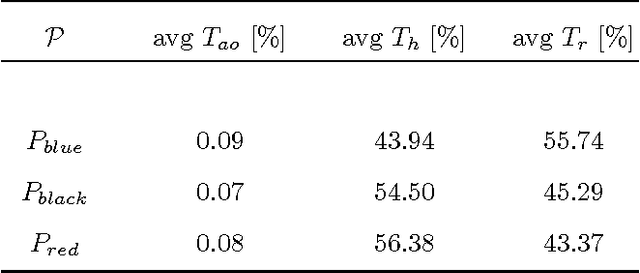

Abstract: The Industry 4.0 paradigm emphasizes the crucial benefits that collaborative robots, i.e., robots able to work alongside and together with humans, could bring to the whole production process. In this context, an enabling technology not yet achieved is the design of flexible robots able to deal at all levels with humans' intrinsic variability, which is not only a necessary element for a comfortable working experience for the person but also a precious capability for efficiently dealing with unexpected events. In this paper, a sensing, representation, planning and control architecture for flexible human-robot cooperation, referred to as FlexHRC, is proposed. FlexHRC relies on wearable sensors for human action recognition, AND/OR graphs for the representation of and reasoning upon cooperation models, and a Task Priority framework to decouple action planning from robot motion planning and control.
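The AND/OR graph representation mentioned in the abstract above can be sketched as follows. This is a generic illustration of the data structure, not FlexHRC's implementation: the function `is_solved`, the task names and the graph itself are invented, and the graph is assumed to be acyclic.

```python
def is_solved(node, hyperarcs, achieved):
    """Return True if `node` is achieved or can be derived from achieved nodes.

    `hyperarcs` maps a node to a list of alternatives (OR); each alternative
    is a tuple of child nodes that must all be solved (AND).
    """
    if node in achieved:
        return True
    for children in hyperarcs.get(node, []):
        if all(is_solved(child, hyperarcs, achieved) for child in children):
            return True
    return False


# Hypothetical cooperation model: a table is assembled either by attaching
# the legs and placing the top, or by receiving a prebuilt table.
hyperarcs = {
    "table_assembled": [
        ("legs_attached", "top_placed"),
        ("prebuilt_table_delivered",),
    ],
    "legs_attached": [("leg_1_screwed", "leg_2_screwed")],
}

print(is_solved("table_assembled", hyperarcs,
                {"leg_1_screwed", "leg_2_screwed", "top_placed"}))  # True
print(is_solved("table_assembled", hyperarcs,
                {"leg_1_screwed", "top_placed"}))  # False
```

Because each node lists several alternatives, the human or the robot can reach the same goal along different paths, which is one way such a representation can accommodate variability in how a cooperative task unfolds.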
