"facial recognition": models, code, and papers

Research on facial expression recognition based on Multimodal data fusion and neural network

Sep 26, 2021

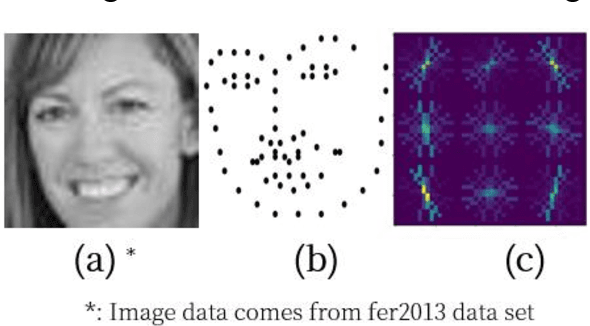

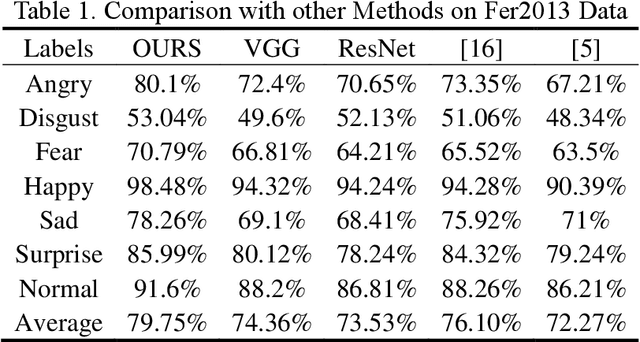

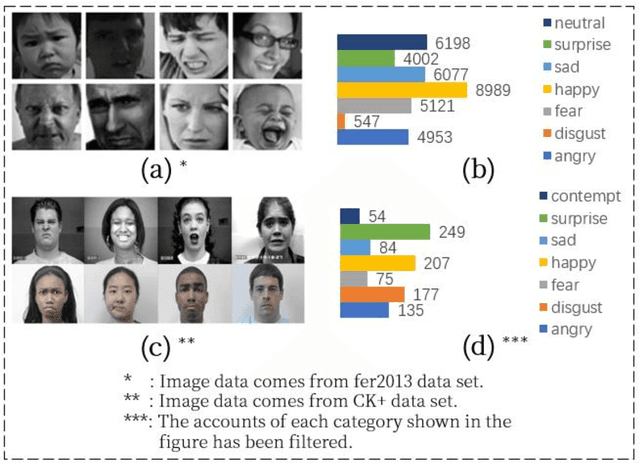

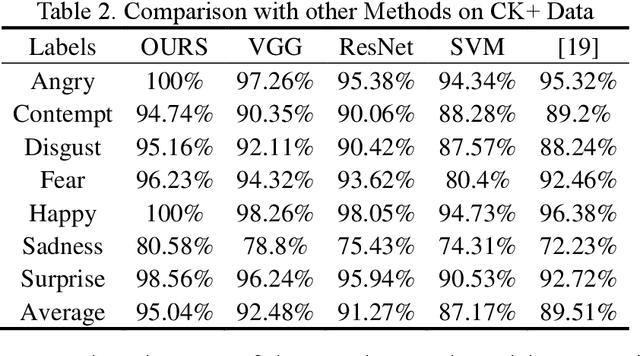

Facial expression recognition is a challenging task when neural networks are applied to pattern recognition. Most current recognition research is based on single-source facial data, which generally suffers from low accuracy and low robustness. In this paper, a neural network algorithm for facial expression recognition based on multimodal data fusion is proposed. The algorithm takes the facial image, the histogram of oriented gradients of the image and the facial landmarks as input, and builds three sub-networks (CNN, LNN and HNN) to extract data features, using a multimodal feature fusion mechanism to improve the accuracy of facial expression recognition. Experimental results show that, benefiting from the complementarity of multimodal data, the algorithm improves considerably in accuracy, robustness and detection speed compared with traditional facial expression recognition algorithms. In particular, under partial occlusion, illumination changes and head pose changes, the algorithm still shows high confidence.
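The abstract does not say how the three feature streams are fused. As a minimal sketch, assuming plain feature-level fusion by concatenation followed by a linear softmax classifier, the idea might look like the following. The function names, feature sizes and weights are hypothetical and chosen only for illustration; they are not taken from the paper.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_and_classify(image_feat, hog_feat, landmark_feat, weights, bias):
    """Concatenate per-modality features, then apply a linear classifier.

    Assumption: fusion is simple concatenation; the paper may use a
    different fusion mechanism.
    """
    fused = image_feat + hog_feat + landmark_feat  # list concatenation
    logits = [
        sum(w * x for w, x in zip(row, fused)) + b
        for row, b in zip(weights, bias)
    ]
    return softmax(logits)


# Hypothetical feature vectors from the image, HOG and landmark branches.
image_feat = [0.2, 0.7]
hog_feat = [0.1, 0.4]
landmark_feat = [0.9]

# Hypothetical classifier for two expression classes (2 x 5 weights).
weights = [
    [0.5, -0.2, 0.1, 0.3, 0.8],
    [-0.4, 0.6, 0.2, -0.1, -0.5],
]
bias = [0.0, 0.1]

probs = fuse_and_classify(image_feat, hog_feat, landmark_feat, weights, bias)
print(probs)
```

Concatenation lets the classifier weigh the modalities against each other, which is one way the complementarity described in the abstract could be exploited.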

A Novel Active Solution for Two-Dimensional Face Presentation Attack Detection

Dec 14, 2022

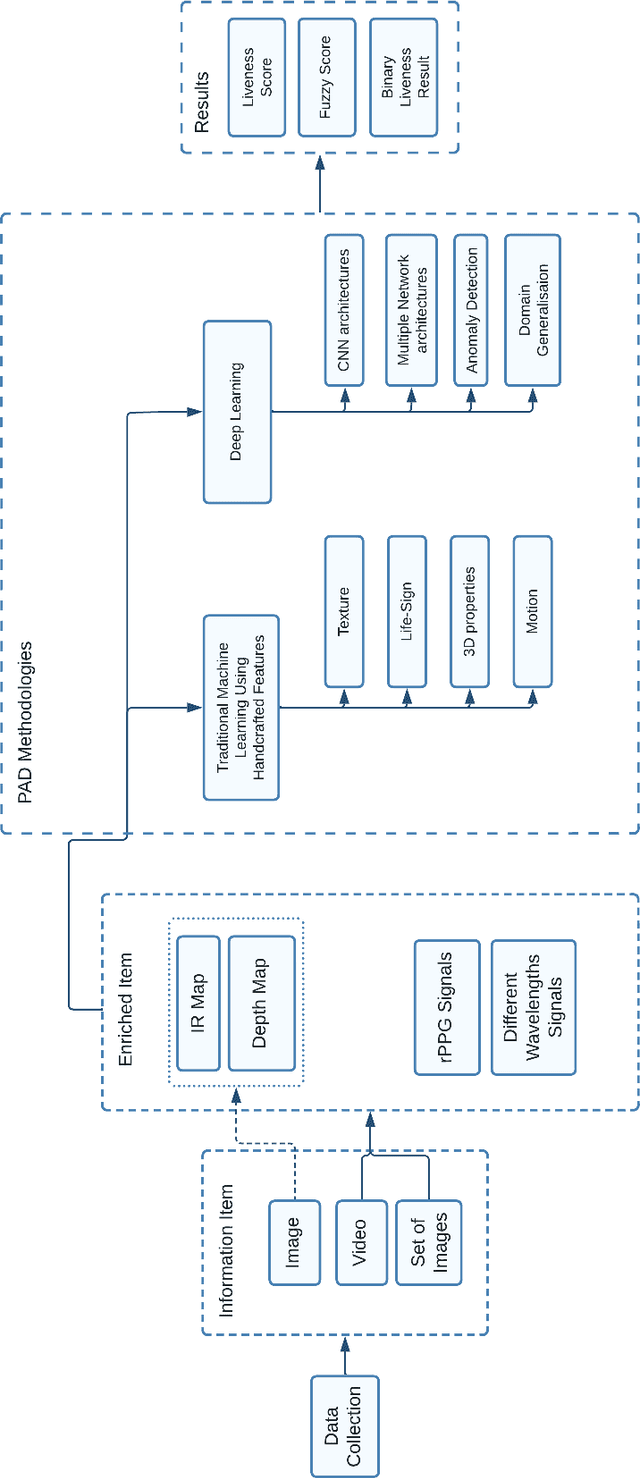

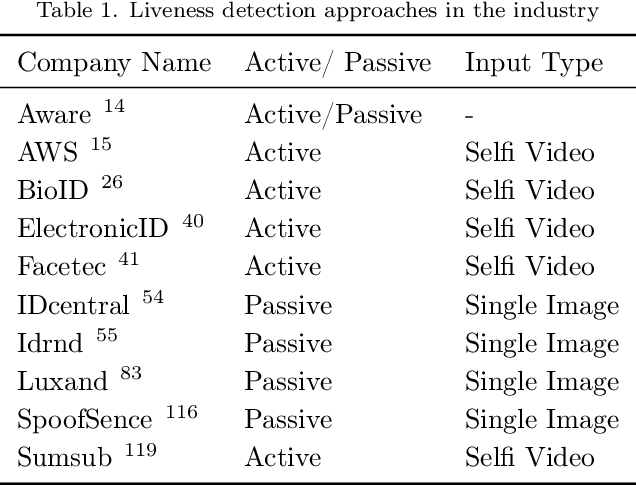

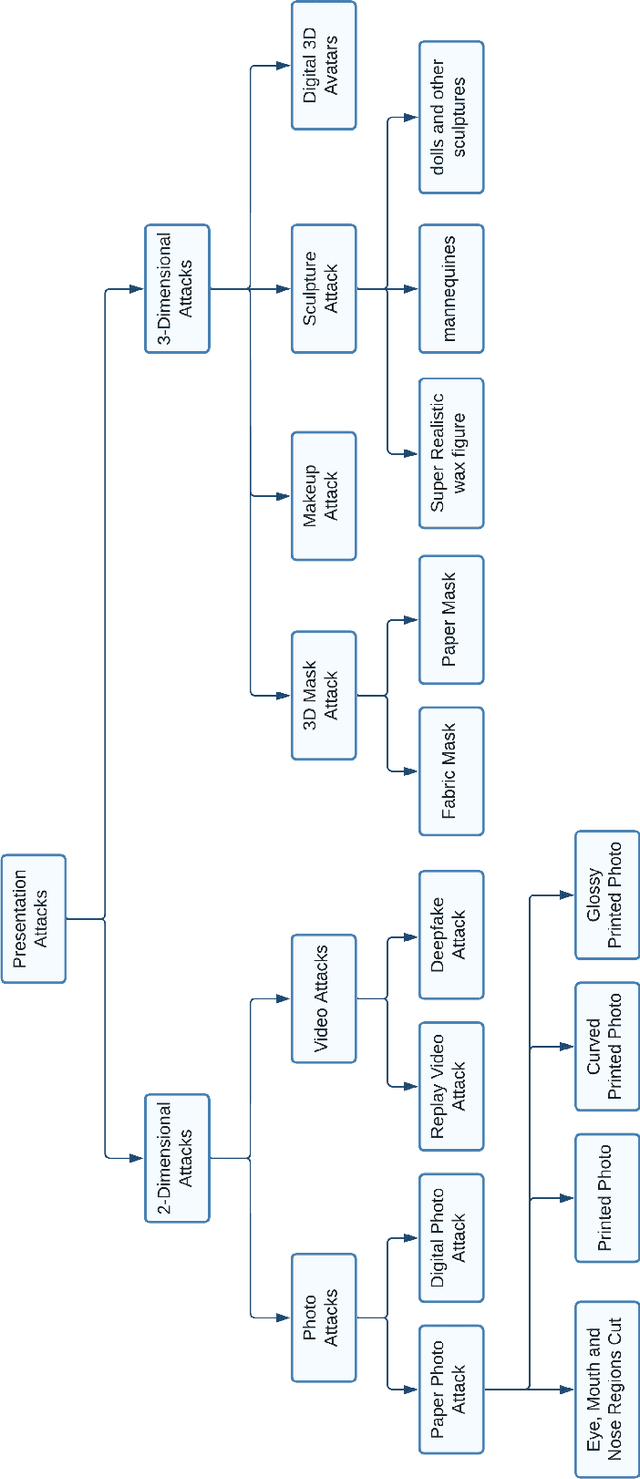

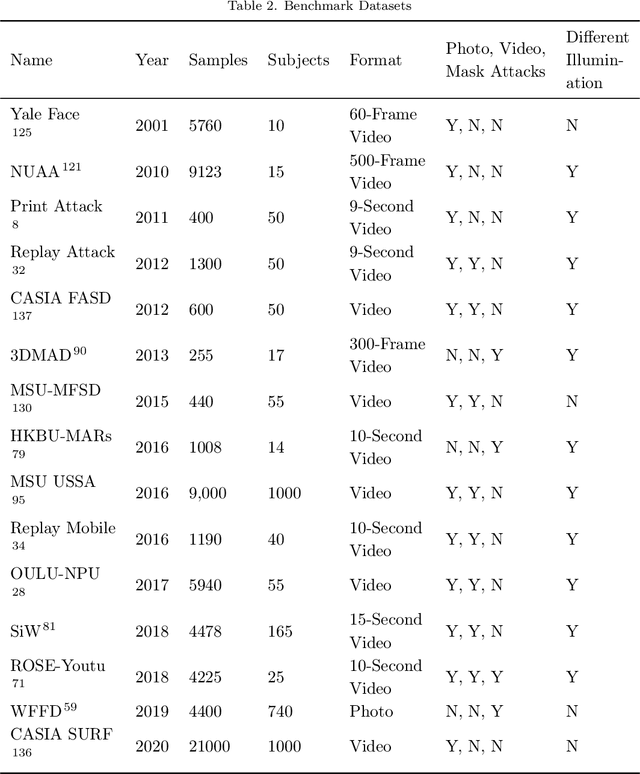

Identity authentication is the process of verifying one's identity. There are several identity authentication methods, among which biometric authentication is of utmost importance. Facial recognition is a form of biometric authentication with various applications, such as unlocking mobile phones and accessing bank accounts. However, presentation attacks pose the greatest threat to facial recognition. A presentation attack is an attempt to present a non-live face, such as a photo, video, mask, or makeup, to the camera. Presentation attack detection is a countermeasure that attempts to distinguish between a genuine user and a presentation attack. Several industries, such as financial services, healthcare, and education, use biometric authentication services on various devices. This illustrates the significance of presentation attack detection as the verification step. In this paper, we survey the state of the art to cover the challenges and solutions related to presentation attack detection in a single place. We identify and classify different presentation attack types and identify the state-of-the-art methods that could be used to detect each of them. We compare the state-of-the-art literature regarding attack types, evaluation metrics, accuracy, and datasets and discuss research and industry challenges of presentation attack detection. Most presentation attack detection approaches depend on extensive training data and on data quality, making them difficult to implement. We introduce an efficient active presentation attack detection approach that overcomes weaknesses in the existing literature. The proposed approach does not require training data, is CPU-light, can process low-quality images, has been tested with users of various ages, and is shown to be user-friendly and highly robust to 2-dimensional presentation attacks.

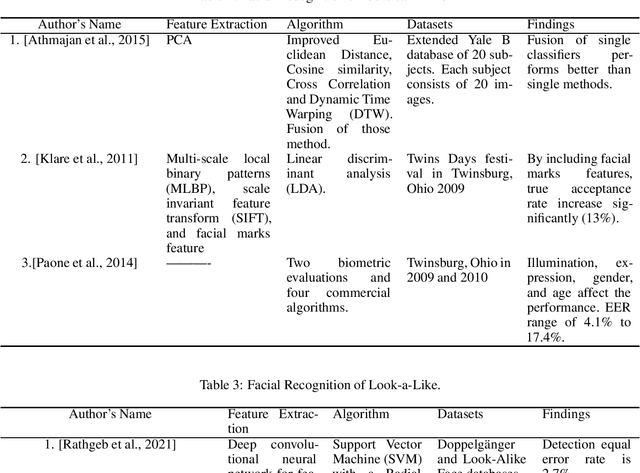

Benchmarking Human Face Similarity Using Identical Twins

Aug 25, 2022

The problem of distinguishing identical twins and non-twin look-alikes in automated facial recognition (FR) applications has become increasingly important with the widespread adoption of facial biometrics. Due to the high facial similarity of both identical twins and look-alikes, these face pairs represent the hardest cases presented to facial recognition tools. This work presents an application of one of the largest twin datasets compiled to date to address two FR challenges: 1) determining a baseline measure of facial similarity between identical twins and 2) applying this similarity measure to determine the impact of doppelgangers, or look-alikes, on FR performance for large face datasets. The facial similarity measure is determined via a deep convolutional neural network. This network is trained on a tailored verification task designed to encourage the network to group together highly similar face pairs in the embedding space and achieves a test AUC of 0.9799. The proposed network provides a quantitative similarity score for any two given faces and has been applied to large-scale face datasets to identify similar face pairs. An additional analysis which correlates the comparison score returned by a facial recognition tool and the similarity score returned by the proposed network has also been performed.
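For readers unfamiliar with the AUC figure quoted above, the following sketch computes the area under the ROC curve from its pairwise definition: the fraction of (positive, negative) pairs in which the positive pair receives the higher score, with ties counted as half. The scores are invented for illustration and are not from the paper.

```python
def roc_auc(pos_scores, neg_scores):
    """AUC as the probability that a positive outscores a negative."""
    wins = 0.0
    for p in pos_scores:
        for n in neg_scores:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(pos_scores) * len(neg_scores))


# Hypothetical similarity scores: "same or highly similar" face pairs
# versus dissimilar pairs.
similar_pairs = [0.9, 0.8, 0.4]
dissimilar_pairs = [0.7, 0.3, 0.2]

auc = roc_auc(similar_pairs, dissimilar_pairs)
print(auc)  # 8 of the 9 pairs are ranked correctly
```

An AUC of 1.0 would mean every similar pair outscores every dissimilar pair, and 0.5 corresponds to random ranking.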

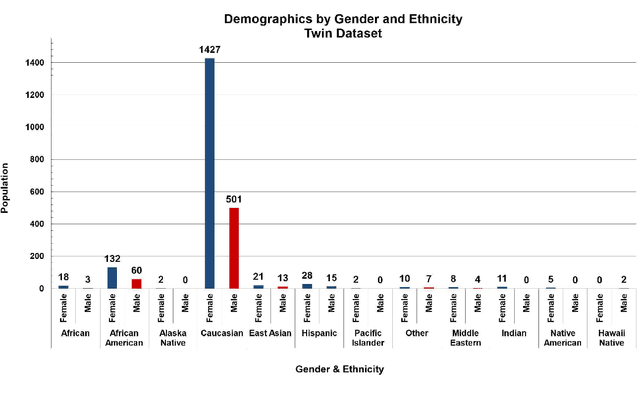

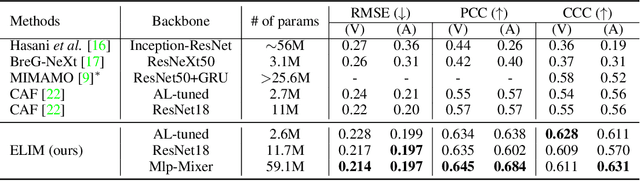

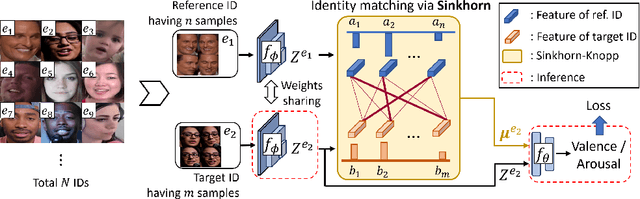

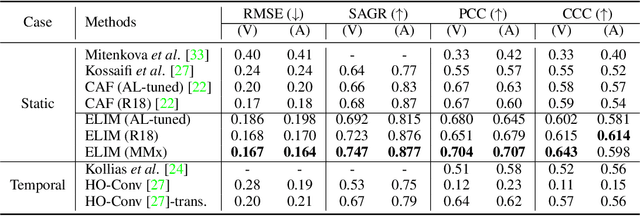

Optimal Transport-based Identity Matching for Identity-invariant Facial Expression Recognition

Sep 25, 2022

Identity-invariant facial expression recognition (FER) has been one of the challenging computer vision tasks. Since conventional FER schemes do not explicitly address the inter-identity variation of facial expressions, their neural network models still operate depending on facial identity. This paper proposes to quantify the inter-identity variation by utilizing pairs of similar expressions explored through a specific matching process. We formulate the identity matching process as an Optimal Transport (OT) problem. Specifically, to find pairs of similar expressions from different identities, we define the inter-feature similarity as a transportation cost. Then, optimal identity matching to find the optimal flow with minimum transportation cost is performed by Sinkhorn-Knopp iteration. The proposed matching method is not only easy to plug into other models, but also requires only acceptable computational overhead. Extensive simulations show that the proposed FER method improves the PCC/CCC performance by up to 10% or more compared to the runner-up on wild datasets. The source code and software demo are available at https://github.com/kdhht2334/ELIM_FER.
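The matching step can be illustrated with a generic entropy-regularised Sinkhorn-Knopp iteration. This is a minimal sketch, not the authors' implementation (see their repository for that): the cost matrix, the uniform marginals and the regularisation strength `reg` are assumptions made for the example.

```python
import math


def sinkhorn(cost, reg=0.5, n_iters=200):
    """Entropy-regularised optimal transport between uniform marginals.

    cost[i][j] is the cost of matching sample i of one identity to
    sample j of another. Returns the transport plan as nested lists.
    """
    n, m = len(cost), len(cost[0])
    a = [1.0 / n] * n  # assumed uniform source marginal
    b = [1.0 / m] * m  # assumed uniform target marginal
    kernel = [[math.exp(-c / reg) for c in row] for row in cost]
    u = [1.0] * n
    v = [1.0] * m
    for _ in range(n_iters):
        # Alternately rescale rows and columns to match the marginals.
        u = [a[i] / sum(kernel[i][j] * v[j] for j in range(m)) for i in range(n)]
        v = [b[j] / sum(kernel[i][j] * u[i] for i in range(n)) for j in range(m)]
    return [[u[i] * kernel[i][j] * v[j] for j in range(m)] for i in range(n)]


# Hypothetical costs, e.g. one minus a feature similarity (low = similar).
cost = [
    [0.1, 0.9, 0.8],
    [0.9, 0.2, 0.7],
    [0.8, 0.9, 0.1],
]

plan = sinkhorn(cost)
# For each row, pick the column that receives the most transported mass.
matches = [max(range(len(row)), key=lambda j: row[j]) for row in plan]
print(matches)
```

A smaller `reg` gives a sharper, closer-to-exact plan, but the iteration then needs more steps to converge, so the value is a trade-off.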

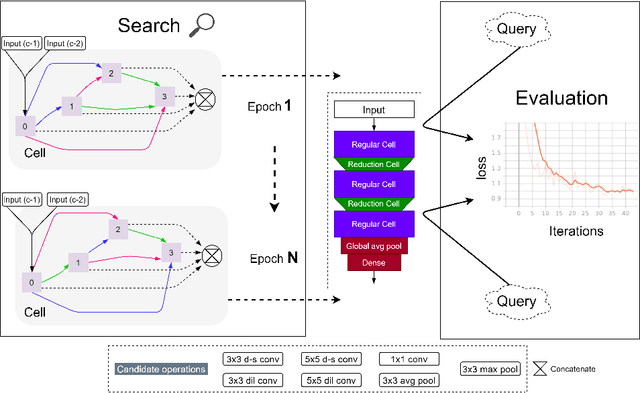

Efficient Neural Architecture Search for Emotion Recognition

Mar 23, 2023

Automated human emotion recognition from facial expressions is a well-studied problem and still remains a very challenging task. Some efficient or accurate deep learning models have been presented in the literature. However, it is quite difficult to design a model that is both efficient and accurate at the same time. Moreover, identifying the minute feature variations in facial regions for both macro and micro-expressions requires expertise in network design. In this paper, we propose to search for a highly efficient and robust neural architecture for both macro and micro-level facial expression recognition. To the best of our knowledge, this is the first attempt to design a NAS-based solution for both macro and micro-expression recognition. We produce lightweight models with a gradient-based architecture search algorithm. To maintain consistency between macro and micro-expressions, we utilize dynamic imaging and convert micro-expression sequences into a single frame, preserving the spatiotemporal features in the facial regions. EmoNAS has been evaluated on 13 datasets (7 macro-expression datasets: CK+, DISFA, MUG, ISED, OULU-VIS CASIA, FER2013, RAF-DB, and 6 micro-expression datasets: CASME-I, CASME-II, CAS(ME)2, SAMM, SMIC, MEGC2019 challenge). The proposed models outperform the existing state-of-the-art methods and perform very well in terms of speed and space complexity.

Understanding bias in facial recognition technologies

Oct 05, 2020

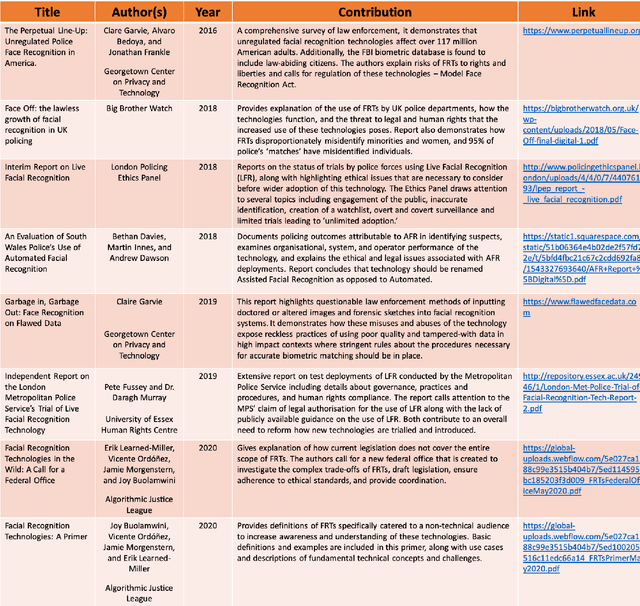

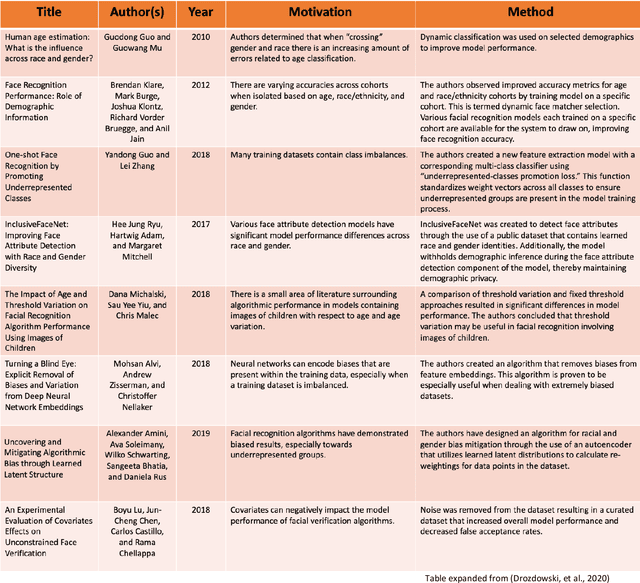

Over the past couple of years, the growing debate around automated facial recognition has reached a boiling point. As developers have continued to swiftly expand the scope of these kinds of technologies into an almost unbounded range of applications, an increasingly strident chorus of critical voices has sounded concerns about the injurious effects of the proliferation of such systems. Opponents argue that the irresponsible design and use of facial detection and recognition technologies (FDRTs) threatens to violate civil liberties, infringe on basic human rights and further entrench structural racism and systemic marginalisation. They also caution that the gradual creep of face surveillance infrastructures into every domain of lived experience may eventually eradicate the modern democratic forms of life that have long provided cherished means to individual flourishing, social solidarity and human self-creation. Defenders, by contrast, emphasise the gains in public safety, security and efficiency that digitally streamlined capacities for facial identification, identity verification and trait characterisation may bring. In this explainer, I focus on one central aspect of this debate: the role that dynamics of bias and discrimination play in the development and deployment of FDRTs. I examine how historical patterns of discrimination have made inroads into the design and implementation of FDRTs from their very earliest moments. I also explain the ways in which the use of biased FDRTs can lead to distributional and recognitional injustices. The explainer concludes with an exploration of broader ethical questions around the potential proliferation of pervasive face-based surveillance infrastructures and makes some recommendations for cultivating more responsible approaches to the development and governance of these technologies.

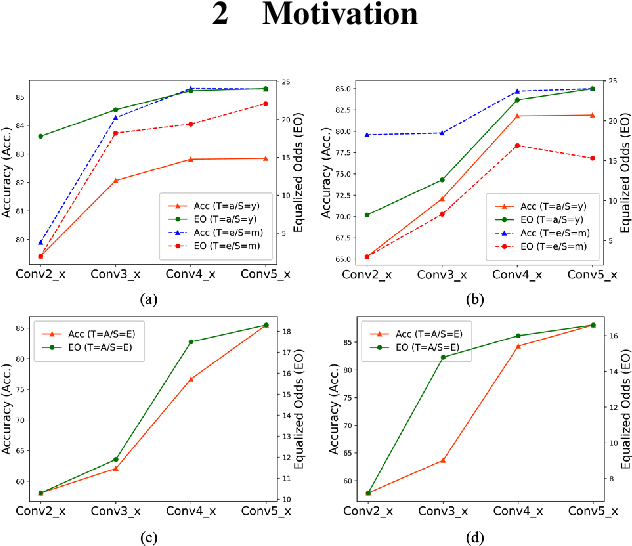

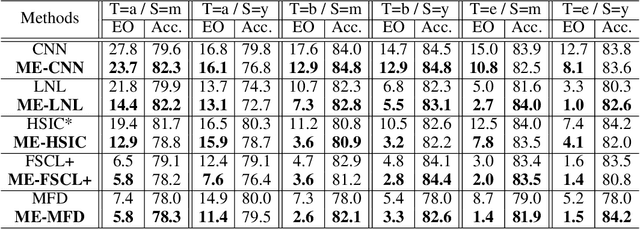

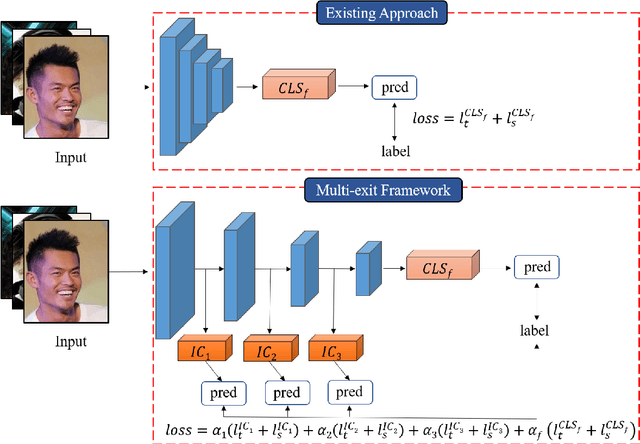

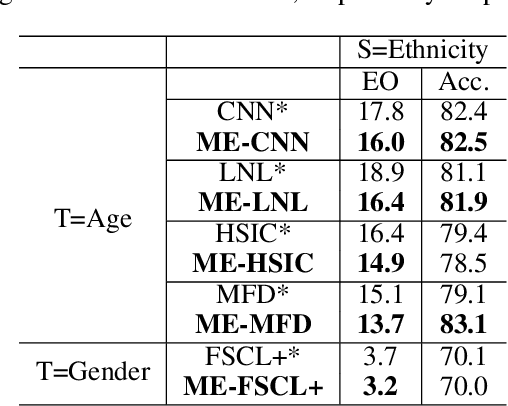

Fair Multi-Exit Framework for Facial Attribute Classification

Jan 08, 2023

Fairness has become increasingly pivotal in facial recognition. Without bias mitigation, deploying unfair AI would harm the interests of underprivileged populations. In this paper, we observe that although features from the deeper layers of a neural network generally offer higher accuracy, fairness conditions deteriorate as we extract features from deeper layers. This phenomenon motivates us to extend the concept of the multi-exit framework. Unlike existing works, which mainly focus on accuracy, our multi-exit framework is fairness-oriented: the internal classifiers are trained to be both more accurate and fairer. During inference, any instance with high confidence from an internal classifier is allowed to exit early. Moreover, our framework can be applied to most existing fairness-aware frameworks. Experimental results show that the proposed framework can largely improve the fairness condition over the state of the art on the CelebA and UTK Face datasets.
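The early-exit rule described in the abstract can be sketched as follows. This assumes each internal classifier has already produced a probability vector and that "high confidence" means the top probability meets a fixed threshold; the threshold value and the example probabilities are hypothetical, and the fairness-oriented training itself is not shown.

```python
def early_exit_predict(exit_probs, threshold=0.9):
    """Return (exit_index, predicted_class) for one input.

    exit_probs holds one probability vector per classifier, ordered from
    the shallowest internal exit to the final classifier.
    """
    for depth, probs in enumerate(exit_probs):
        confidence = max(probs)
        if confidence >= threshold:
            # Confident enough: stop here and skip the deeper layers.
            return depth, probs.index(confidence)
    # No exit was confident: fall back to the final classifier.
    last = exit_probs[-1]
    return len(exit_probs) - 1, last.index(max(last))


# Hypothetical outputs of three exits for a binary attribute.
confident_case = [[0.60, 0.40], [0.95, 0.05], [0.99, 0.01]]
uncertain_case = [[0.60, 0.40], [0.30, 0.70]]

print(early_exit_predict(confident_case))
print(early_exit_predict(uncertain_case))
```

In the first case the second exit already clears the threshold, so the deepest classifier is never consulted; in the second case no exit is confident and the final classifier decides.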

Performance analysis of facial recognition: A critical review through glass factor

Apr 04, 2021

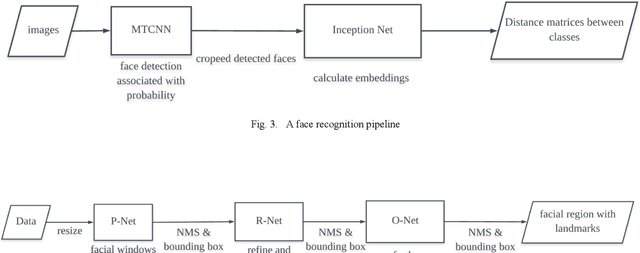

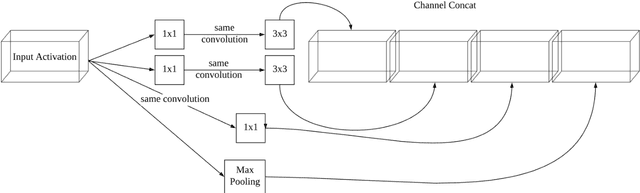

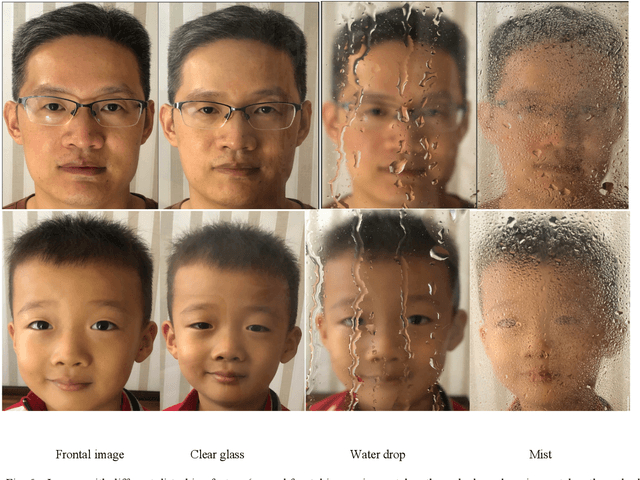

The COVID-19 pandemic and social distancing call for a human face recognition system that remains reliable in abnormal situations. However, no research has studied the influence of the glass factor on facial recognition systems. This paper provides a comprehensive review of the glass factor. The study consists of two steps: data collection and accuracy testing. Data collection involves capturing human face images in different situations, such as through clear glass, glass with water and glass with mist. Based on the collected data, an existing state-of-the-art face detection and recognition system built upon MTCNN and Inception V1 deep nets is tested for further analysis. The experimental data indicate that 1) the system is robust for classification when comparing real-time images and 2) it fails to determine whether two images are of the same person when comparing a real-time disturbed image with frontal ones.

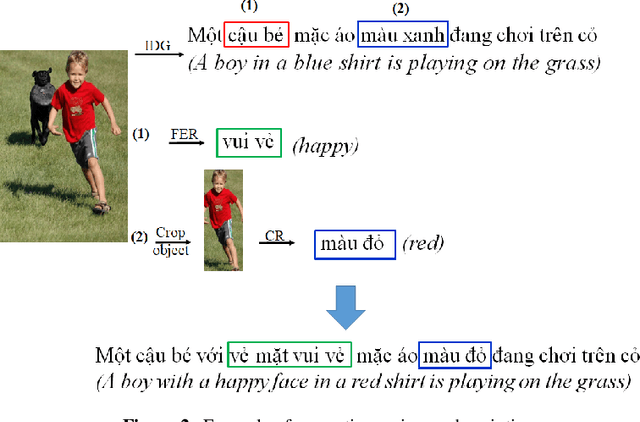

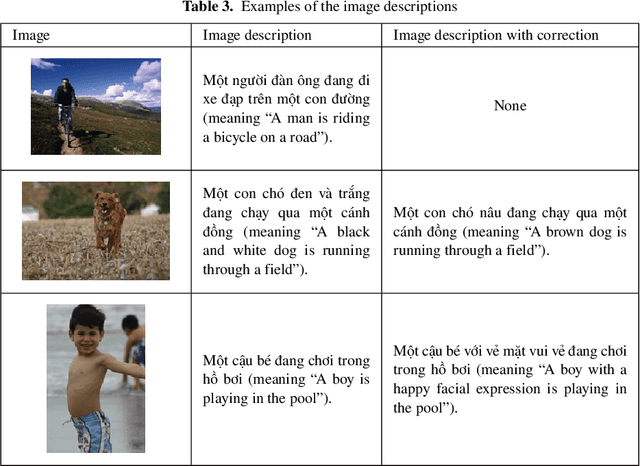

Facial Expression Recognition and Image Description Generation in Vietnamese

Aug 12, 2022

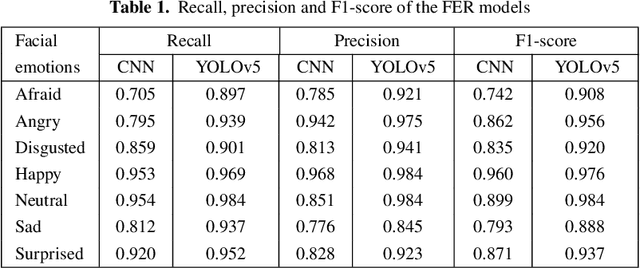

This paper discusses a facial expression recognition model and a description generation model to build descriptive sentences for images and facial expressions of people in images. Our study shows that YOLOv5 achieves better results than a traditional CNN for all emotions on the KDEF dataset. In particular, the accuracies of the CNN and YOLOv5 models for emotion recognition are 0.853 and 0.938, respectively. A model for generating descriptions for images based on a merged architecture is proposed using VGG16 with the descriptions encoded over an LSTM model. YOLOv5 is also used to recognize dominant colors of objects in the images and correct the color words in the generated descriptions if necessary. If the description contains words referring to a person, we recognize the emotion of the person in the image. Finally, we combine the results of all models to create sentences that describe the visual content and the human emotions in the images. Experimental results on the Flickr8k dataset in Vietnamese achieve BLEU-1, BLEU-2, BLEU-3 and BLEU-4 scores of 0.628, 0.425, 0.280 and 0.174, respectively.

* 7 pages

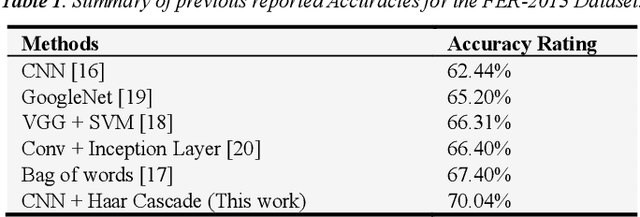

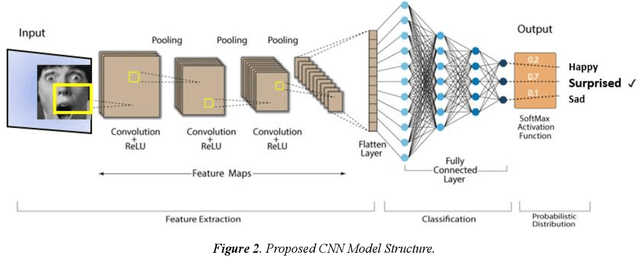

Hybrid Facial Expression Recognition (FER2013) Model for Real-Time Emotion Classification and Prediction

Jun 19, 2022

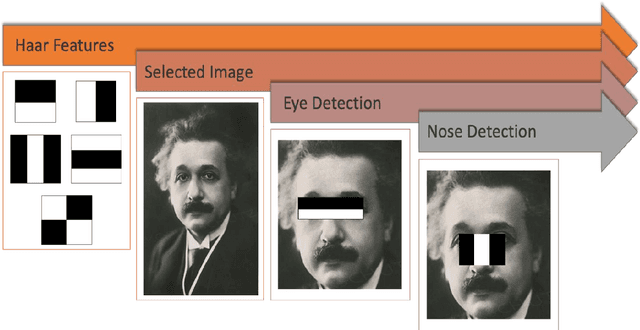

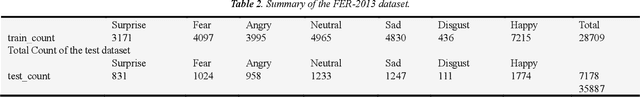

Facial Expression Recognition is a vital research topic in most fields ranging from artificial intelligence and gaming to Human-Computer Interaction (HCI) and Psychology. This paper proposes a hybrid model for Facial Expression Recognition, which comprises a Deep Convolutional Neural Network (DCNN) and Haar Cascade deep learning architectures. The objective is to classify real-time and digital facial images into one of the seven facial emotion categories considered. The DCNN employed in this research has more convolutional layers, ReLU activation functions, and multiple kernels to enhance filtering depth and facial feature extraction. In addition, a Haar cascade model was used alongside it to detect facial features in real-time images and video frames. We used grayscale images from the Kaggle repository (FER-2013) and exploited Graphics Processing Unit (GPU) computation to expedite the training and validation process. Pre-processing and data augmentation techniques are applied to improve training efficiency and classification performance. The experimental results show a significantly improved classification performance compared to state-of-the-art (SoTA) experiments and research. Also, compared to other conventional models, this paper validates that the proposed architecture is superior in classification performance, with an improvement of up to 6%, reaching up to 70% accuracy, and with a shorter execution time of 2098.8 s.
