Zhongyuan Zhang

Prototype-Enhanced Multi-View Learning for Thyroid Nodule Ultrasound Classification

Mar 30, 2026

Abstract: Thyroid nodule classification using ultrasound imaging is essential for early diagnosis and clinical decision-making; however, despite promising performance on in-distribution data, existing deep learning methods often exhibit limited robustness and generalisation when deployed across different ultrasound devices or clinical environments. This limitation is mainly attributed to the pronounced heterogeneity of thyroid ultrasound images, which can lead models to capture spurious correlations rather than reliable diagnostic cues. To address this challenge, we propose PEMV-thyroid, a Prototype-Enhanced Multi-View learning framework that accounts for data heterogeneity by learning complementary representations from multiple feature perspectives and refining decision boundaries through a prototype-based correction mechanism with mixed prototype information. By integrating multi-view representations with prototype-level guidance, the proposed approach enables more stable representation learning under heterogeneous imaging conditions. Extensive experiments on multiple thyroid ultrasound datasets demonstrate that PEMV-thyroid consistently outperforms state-of-the-art methods, particularly in cross-device and cross-domain evaluation scenarios, leading to improved diagnostic accuracy and generalisation performance in real-world clinical settings. The source code is available at https://github.com/chenyangmeii/Prototype-Enhanced-Multi-View-Learning.
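To make the idea of prototype-level guidance concrete, here is a minimal sketch of nearest-prototype classification with a mixed prototype. It is not the PEMV-thyroid implementation: the abstract does not specify how prototypes are built or mixed, so the per-view class means, the convex mixing weight `alpha`, the toy features, and all function names below are assumptions made for illustration.

```python
import math

def mean_vector(vectors):
    """Element-wise mean of equal-length feature vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def mix_prototypes(proto_a, proto_b, alpha):
    """Convex combination of two prototypes (a hypothetical 'mixed prototype')."""
    return [alpha * a + (1.0 - alpha) * b for a, b in zip(proto_a, proto_b)]

def nearest_prototype(feature, prototypes):
    """Return the label whose prototype is closest in Euclidean distance."""
    return min(prototypes, key=lambda label: math.dist(feature, prototypes[label]))

# Toy 2-D features for each class, as seen from two hypothetical views.
benign_view1 = [[0.0, 0.0], [0.2, 0.0]]
benign_view2 = [[0.0, 0.4], [0.2, 0.4]]
malignant_view1 = [[1.0, 1.0], [1.2, 1.0]]
malignant_view2 = [[1.0, 1.4], [1.2, 1.4]]

# One mixed prototype per class, blending the two per-view class means equally.
prototypes = {
    "benign": mix_prototypes(
        mean_vector(benign_view1), mean_vector(benign_view2), 0.5
    ),
    "malignant": mix_prototypes(
        mean_vector(malignant_view1), mean_vector(malignant_view2), 0.5
    ),
}

# Classify a new feature by its nearest mixed prototype.
prediction = nearest_prototype([0.3, 0.3], prototypes)
```

In this toy setup the query `[0.3, 0.3]` lies closer to the benign prototype than to the malignant one. A learned model would obtain features from a network rather than from hand-written lists.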

Learning Hierarchical Orthogonal Prototypes for Generalized Few-Shot 3D Point Cloud Segmentation

Mar 20, 2026

Abstract: Generalized few-shot 3D point cloud segmentation aims to adapt to novel classes from only a few annotations while maintaining strong performance on base classes, but this remains challenging due to the inherent stability-plasticity trade-off: adapting to novel classes can interfere with shared representations and cause base-class forgetting. We present HOP3D, a unified framework that learns hierarchical orthogonal prototypes with an entropy-based few-shot regularizer to enable robust novel-class adaptation without degrading base-class performance. HOP3D introduces hierarchical orthogonalization that decouples base and novel learning at both the gradient and representation levels, effectively mitigating base-novel interference. To further enhance adaptation under sparse supervision, we incorporate an entropy-based regularizer that leverages predictive uncertainty to refine prototype learning and promote balanced predictions. Extensive experiments on ScanNet200 and ScanNet++ demonstrate that HOP3D consistently outperforms state-of-the-art baselines under both 1-shot and 5-shot settings. The code is available at https://fdueblab-hop3d.github.io/.
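The two ingredients named in the abstract, orthogonalization and an entropy term, can be illustrated with a small sketch. This is not HOP3D's method, whose details the abstract does not give. It assumes a plain projection that removes a novel prototype's components along a set of mutually orthogonal base prototypes, and the standard Shannon entropy as the uncertainty measure. All names and toy values are hypothetical.

```python
import math

def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def orthogonalize(vector, basis):
    """Remove from `vector` its component along each vector in `basis`.

    Assumes the basis vectors are non-zero and mutually orthogonal.
    """
    result = list(vector)
    for b in basis:
        scale = dot(result, b) / dot(b, b)
        result = [r - scale * x for r, x in zip(result, b)]
    return result

def entropy(probs):
    """Shannon entropy (in nats) of a probability vector."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)

# Toy base prototypes (mutually orthogonal) and a raw novel prototype.
base_prototypes = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
novel_raw = [0.5, 0.5, 1.0]

# The projected novel prototype has no component along any base prototype.
novel_proto = orthogonalize(novel_raw, base_prototypes)

# Entropy is high for an uncertain prediction and zero for a confident one.
uncertain = entropy([0.5, 0.5])
confident = entropy([1.0, 0.0])
```

Here the projected prototype keeps only the direction not spanned by the base prototypes, which is one simple way to limit interference at the representation level. How such an entropy value would enter a training loss is left open, since the abstract does not say.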

Noise and Edge Based Dual Branch Image Manipulation Detection

Jul 02, 2022

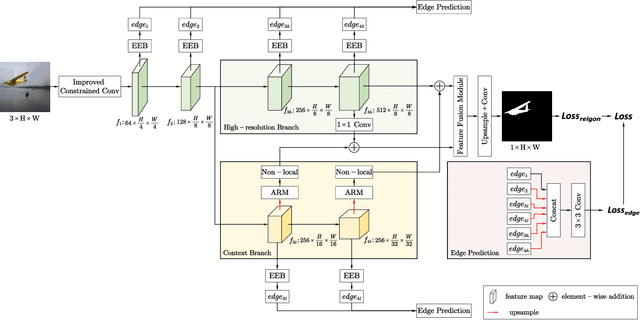

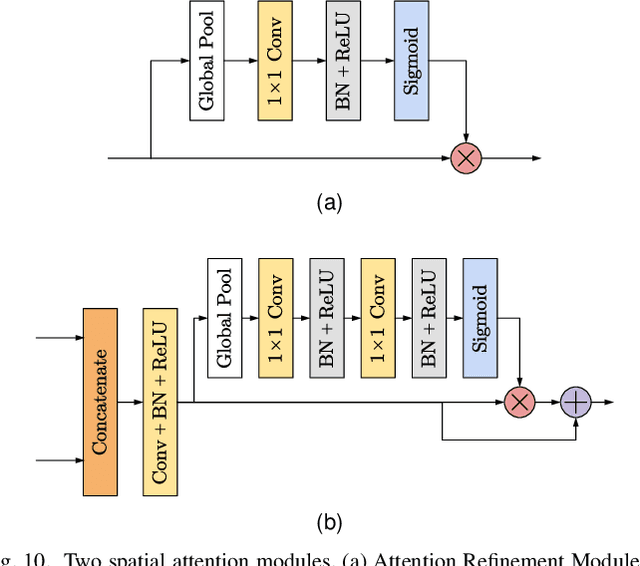

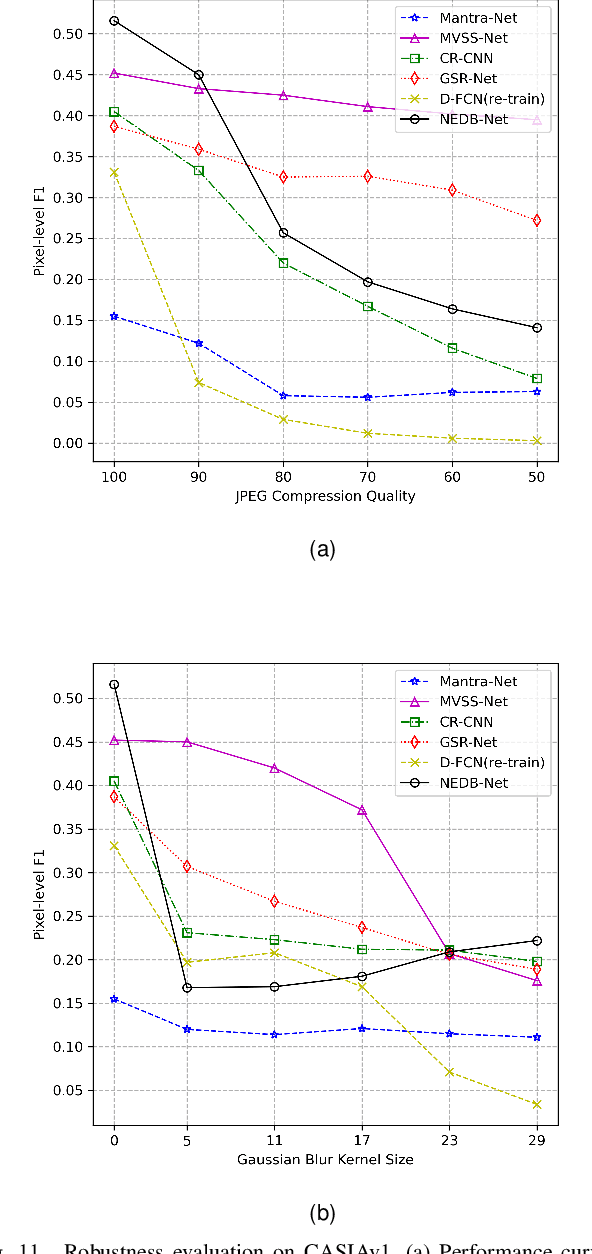

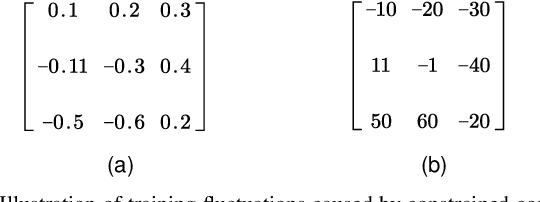

Abstract: Unlike ordinary computer vision tasks, which focus on the semantic content of images, image manipulation detection depends on the subtle traces that manipulation leaves behind. In this paper, a noise image extracted by an improved constrained convolution is used as the model input instead of the original image, in order to expose these subtle traces. A dual-branch network, consisting of a high-resolution branch and a context branch, is used to capture as many artifact traces as possible. Most manipulations leave artifacts along the manipulation edge, so a specially designed manipulation edge detection module is built on the dual-branch network to identify these artifacts better. The correlation between pixels in an image is closely related to their distance: the farther apart two pixels are, the weaker their correlation. We therefore add a distance factor to the self-attention module to better describe the correlation between pixels. Experimental results on four publicly available image manipulation datasets demonstrate the effectiveness of our model.
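As a rough illustration of a distance factor in self-attention, the sketch below subtracts a penalty proportional to the Euclidean distance between pixel positions from the scaled dot-product logits before the softmax. The abstract does not state the paper's actual formulation, so the linear penalty, the `decay` parameter, and the function names are assumptions.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def distance_aware_attention(queries, keys, positions, decay):
    """Attention weights whose logits are reduced by decay * pixel distance."""
    scale = math.sqrt(len(queries[0]))
    weights = []
    for q, pos_q in zip(queries, positions):
        logits = []
        for k, pos_k in zip(keys, positions):
            similarity = sum(a * b for a, b in zip(q, k)) / scale
            # Hypothetical distance factor: farther pixels get lower logits.
            logits.append(similarity - decay * math.dist(pos_q, pos_k))
        weights.append(softmax(logits))
    return weights

# Three pixels with identical features, placed on one image row.
features = [[1.0, 0.0], [1.0, 0.0], [1.0, 0.0]]
positions = [(0, 0), (0, 1), (0, 5)]
attn = distance_aware_attention(features, features, positions, decay=0.5)
```

Because the three pixels share the same features, any difference in `attn[0]` comes only from the distance penalty: the first pixel attends most to itself, less to its neighbour, and least to the pixel five columns away.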
