Zhixiong Yue

Know Your Step: Faster and Better Alignment for Flow Matching Models via Step-aware Advantages

Feb 02, 2026Abstract:Recent advances in flow matching models, particularly with reinforcement learning (RL), have significantly enhanced human preference alignment in few step text to image generators. However, existing RL based approaches for flow matching models typically rely on numerous denoising steps, while suffering from sparse and imprecise reward signals that often lead to suboptimal alignment. To address these limitations, we propose Temperature Annealed Few step Sampling with Group Relative Policy Optimization (TAFS GRPO), a novel framework for training flow matching text to image models into efficient few step generators well aligned with human preferences. Our method iteratively injects adaptive temporal noise onto the results of one step samples. By repeatedly annealing the model's sampled outputs, it introduces stochasticity into the sampling process while preserving the semantic integrity of each generated image. Moreover, its step aware advantage integration mechanism combines the GRPO to avoid the need for the differentiable of reward function and provide dense and step specific rewards for stable policy optimization. Extensive experiments demonstrate that TAFS GRPO achieves strong performance in few step text to image generation and significantly improves the alignment of generated images with human preferences. The code and models of this work will be available to facilitate further research.

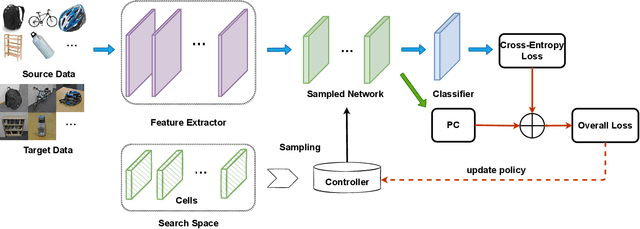

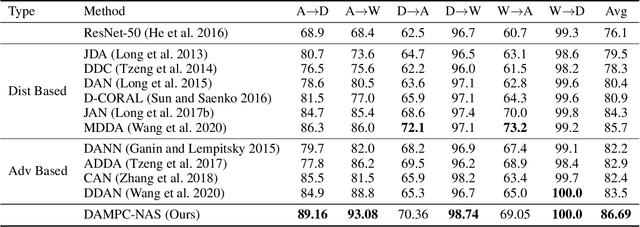

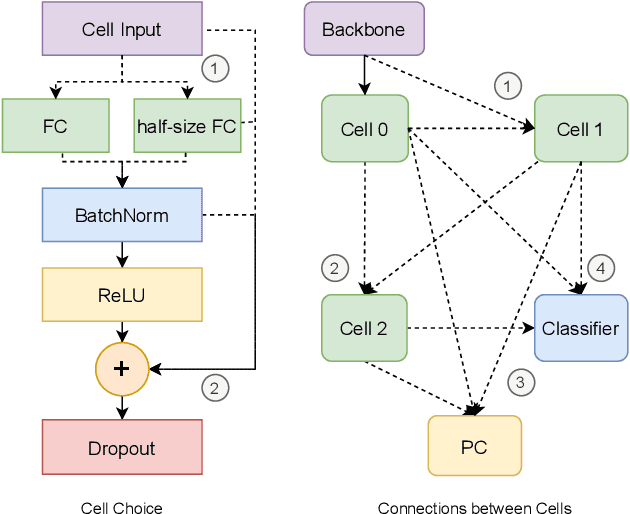

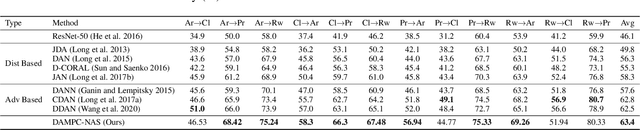

Domain Adaptation by Maximizing Population Correlation with Neural Architecture Search

Sep 12, 2021

Abstract:In Domain Adaptation (DA), where the feature distributions of the source and target domains are different, various distance-based methods have been proposed to minimize the discrepancy between the source and target domains to handle the domain shift. In this paper, we propose a new similarity function, which is called Population Correlation (PC), to measure the domain discrepancy for DA. Base on the PC function, we propose a new method called Domain Adaptation by Maximizing Population Correlation (DAMPC) to learn a domain-invariant feature representation for DA. Moreover, most existing DA methods use hand-crafted bottleneck networks, which may limit the capacity and flexibility of the corresponding model. Therefore, we further propose a method called DAMPC with Neural Architecture Search (DAMPC-NAS) to search the optimal network architecture for DAMPC. Experiments on several benchmark datasets, including Office-31, Office-Home, and VisDA-2017, show that the proposed DAMPC-NAS method achieves better results than state-of-the-art DA methods.

Multi-Objective Meta Learning

Feb 14, 2021

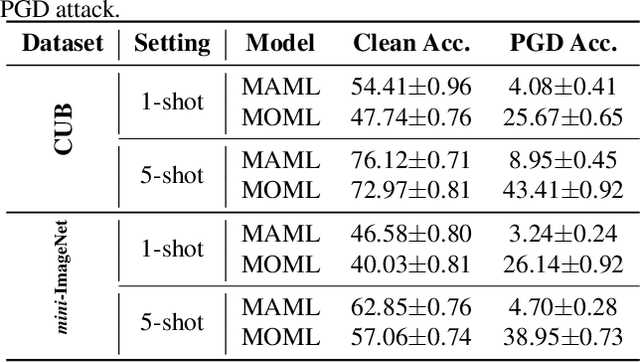

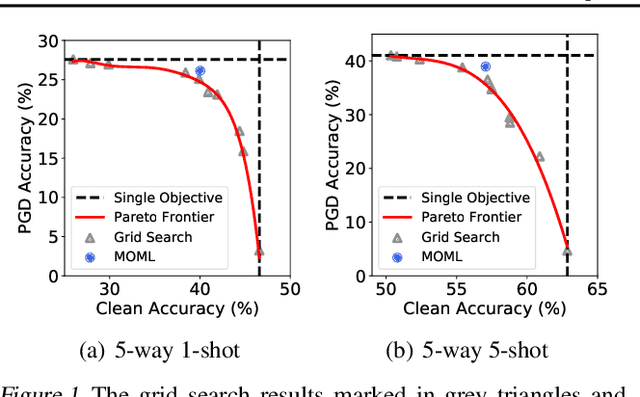

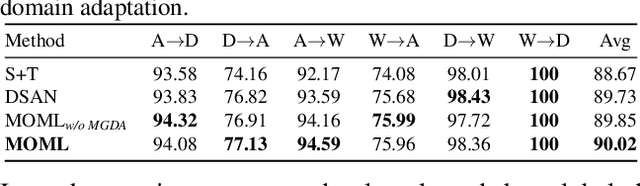

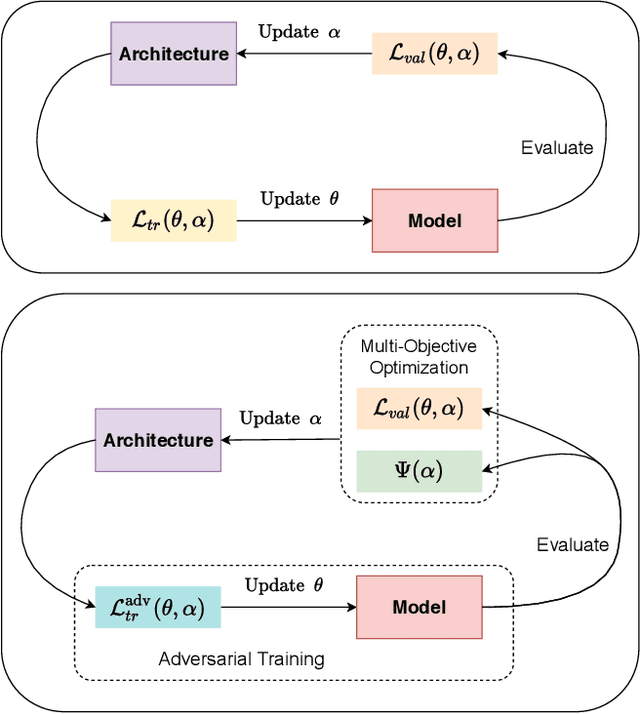

Abstract:Meta learning with multiple objectives can be formulated as a Multi-Objective Bi-Level optimization Problem (MOBLP) where the upper-level subproblem is to solve several possible conflicting targets for the meta learner. However, existing studies either apply an inefficient evolutionary algorithm or linearly combine multiple objectives as a single-objective problem with the need to tune combination weights. In this paper, we propose a unified gradient-based Multi-Objective Meta Learning (MOML) framework and devise the first gradient-based optimization algorithm to solve the MOBLP by alternatively solving the lower-level and upper-level subproblems via the gradient descent method and the gradient-based multi-objective optimization method, respectively. Theoretically, we prove the convergence properties of the proposed gradient-based optimization algorithm. Empirically, we show the effectiveness of the proposed MOML framework in several meta learning problems, including few-shot learning, neural architecture search, domain adaptation, and multi-task learning.

Effective, Efficient and Robust Neural Architecture Search

Nov 19, 2020

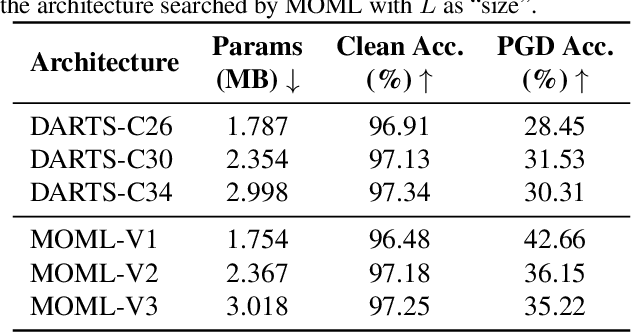

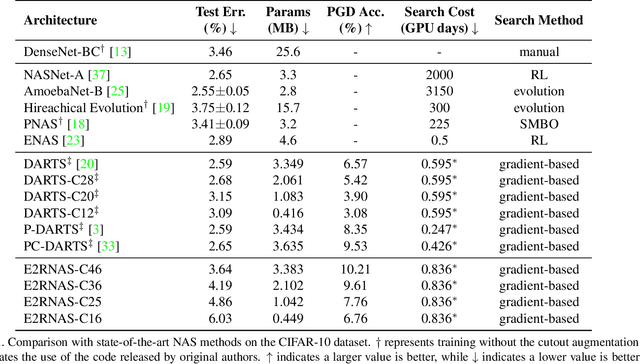

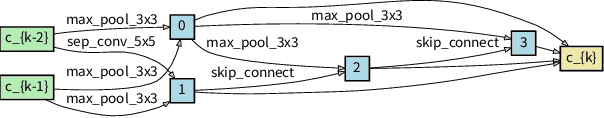

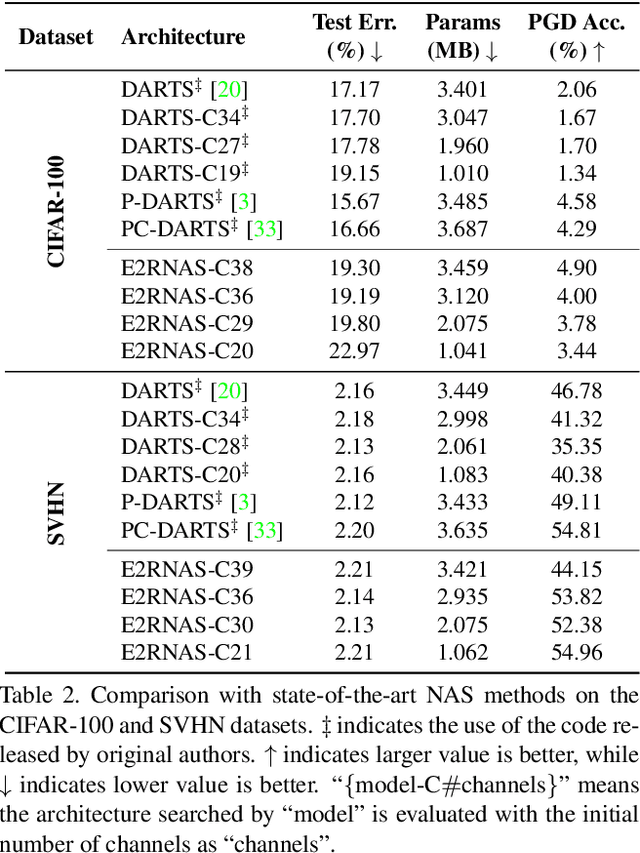

Abstract:Recent advances in adversarial attacks show the vulnerability of deep neural networks searched by Neural Architecture Search (NAS). Although NAS methods can find network architectures with the state-of-the-art performance, the adversarial robustness and resource constraint are often ignored in NAS. To solve this problem, we propose an Effective, Efficient, and Robust Neural Architecture Search (E2RNAS) method to search a neural network architecture by taking the performance, robustness, and resource constraint into consideration. The objective function of the proposed E2RNAS method is formulated as a bi-level multi-objective optimization problem with the upper-level problem as a multi-objective optimization problem, which is different from existing NAS methods. To solve the proposed objective function, we integrate the multiple-gradient descent algorithm, a widely studied gradient-based multi-objective optimization algorithm, with the bi-level optimization. Experiments on benchmark datasets show that the proposed E2RNAS method can find adversarially robust architectures with optimized model size and comparable classification accuracy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge