Zhengyu Fu

FunFact: Building Probabilistic Functional 3D Scene Graphs via Factor-Graph Reasoning

Apr 04, 2026

Abstract: Recent work in 3D scene understanding is moving beyond purely spatial analysis toward functional scene understanding. However, existing methods often consider functional relationships between object pairs in isolation, failing to capture the scene-wide interdependence that humans use to resolve ambiguity. We introduce FunFact, a framework for constructing probabilistic open-vocabulary functional 3D scene graphs from posed RGB-D images. FunFact first builds an object- and part-centric 3D map and uses foundation models to propose semantically plausible functional relations. These candidates are converted into factor graph variables and constrained by both LLM-derived common-sense priors and geometric priors. This formulation enables joint probabilistic inference over all functional edges and their marginals, yielding substantially better-calibrated confidence scores. To benchmark this setting, we introduce FunThor, a synthetic dataset based on AI2-THOR with part-level geometry and rule-based functional annotations. Experiments on SceneFun3D, FunGraph3D, and FunThor show that FunFact improves node and relation discovery recall and significantly reduces calibration error for ambiguous relations, highlighting the benefits of holistic probabilistic modeling for functional scene understanding. See our project page at https://funfact-scenegraph.github.io/

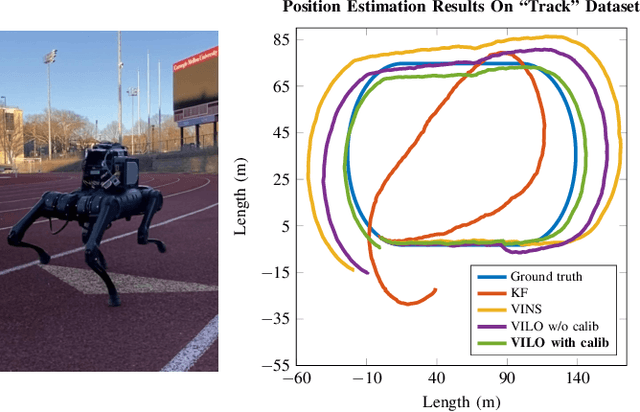

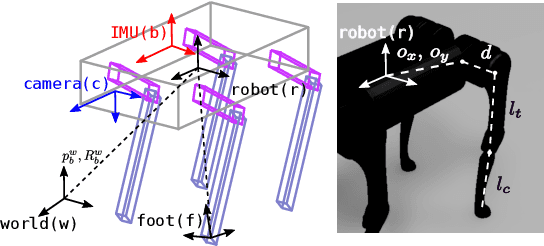

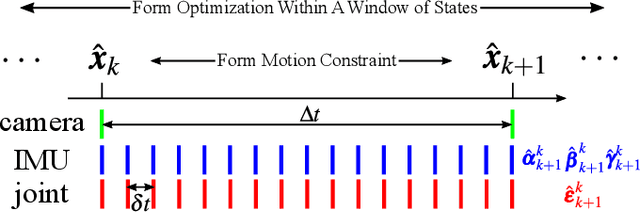

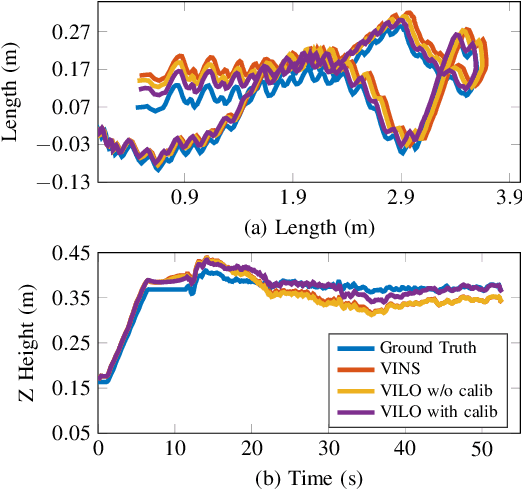

Cerberus: Low-Drift Visual-Inertial-Leg Odometry For Agile Locomotion

Sep 16, 2022

Abstract: We present Cerberus, an open-source Visual-Inertial-Leg Odometry (VILO) state estimation solution for legged robots that estimates position precisely on various terrains in real time using a set of standard sensors, including stereo cameras, IMU, joint encoders, and contact sensors. In addition to estimating robot states, we also perform online kinematic parameter calibration and contact outlier rejection to substantially reduce position drift. Hardware experiments in various indoor and outdoor environments validate that calibrating kinematic parameters within Cerberus can reduce estimation drift to below 1% during long-distance, high-speed locomotion. Our drift results are better than those of any other state estimation method using the same set of sensors reported in the literature. Moreover, our state estimator performs well even when the robot is experiencing large impacts and camera occlusion. The implementation of the state estimator, along with the datasets used to compute our results, is available at https://github.com/ShuoYangRobotics/Cerberus.
