Zhenglin Yang

Trajectory Generation with Endpoint Regulation and Momentum-Aware Dynamics for Visually Impaired Scenarios

Feb 25, 2026

Abstract: Trajectory generation for visually impaired scenarios requires smooth and temporally consistent states in structured, low-speed dynamic environments. However, traditional jerk-based heuristic trajectory sampling with independent segment generation and conventional smoothness penalties often lead to unstable terminal behavior and state discontinuities under frequent regeneration. This paper proposes a trajectory generation approach that integrates endpoint regulation to stabilize terminal states within each segment and momentum-aware dynamics to regularize the evolution of velocity and acceleration for segment consistency. Endpoint regulation is incorporated into trajectory sampling to stabilize terminal behavior, while momentum-aware dynamics enforce consistent velocity and acceleration evolution across consecutive trajectory segments. Experimental results demonstrate reduced acceleration peaks and lower jerk levels with decreased dispersion, smoother velocity and acceleration profiles, more stable endpoint distributions, and fewer infeasible trajectory candidates compared with a baseline planner.

Parameter-Free Adaptive Multi-Scale Channel-Spatial Attention Aggregation framework for 3D Indoor Semantic Scene Completion Toward Assisting Visually Impaired

Feb 19, 2026

Abstract: In indoor assistive perception for visually impaired users, 3D Semantic Scene Completion (SSC) is expected to provide structurally coherent and semantically consistent occupancy under strictly monocular vision for safety-critical scene understanding. However, existing monocular SSC approaches often lack explicit modeling of voxel-feature reliability and regulated cross-scale information propagation during 2D-3D projection and multi-scale fusion, making them vulnerable to projection diffusion and feature entanglement and thus limiting structural stability. To address these challenges, this paper presents an Adaptive Multi-scale Attention Aggregation (AMAA) framework built upon the MonoScene pipeline. Rather than introducing a heavier backbone, AMAA focuses on reliability-oriented feature regulation within a monocular SSC framework. Specifically, lifted voxel features are jointly calibrated in semantic and spatial dimensions through parallel channel-spatial attention aggregation, while multi-scale encoder-decoder fusion is stabilized via a hierarchical adaptive feature-gating strategy that regulates information injection across scales. Experiments on the NYUv2 benchmark demonstrate consistent improvements over MonoScene without significantly increasing system complexity: AMAA achieves 27.25% SSC mIoU (+0.31) and 43.10% SC IoU (+0.59). In addition, system-level deployment on an NVIDIA Jetson platform verifies that the complete AMAA framework can be executed stably on embedded hardware. Overall, AMAA improves monocular SSC quality and provides a reliable and deployable perception framework for indoor assistive systems targeting visually impaired users.

NPU-BOLT: A Dataset for Bolt Object Detection in Natural Scene Images

May 25, 2022

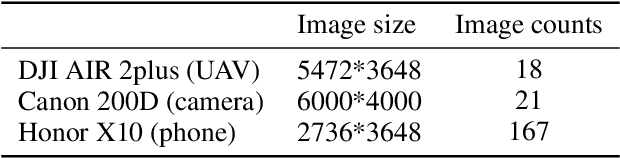

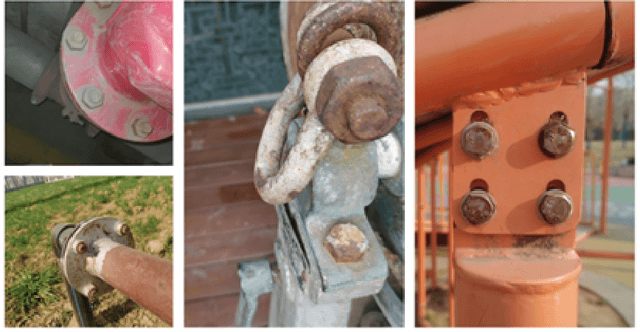

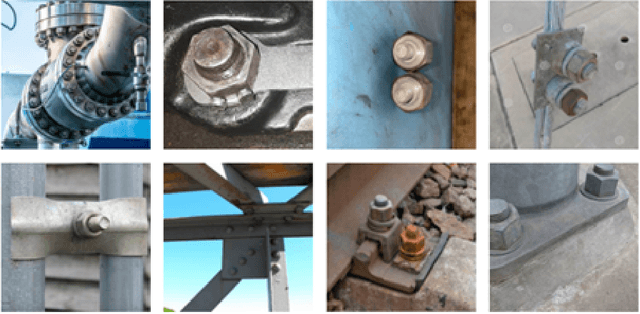

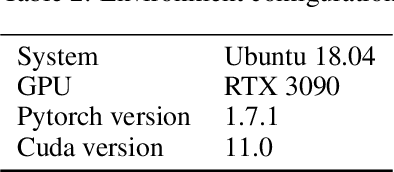

Abstract: Bolted joints are common and important in engineering structures. Under extreme service environments and loads, bolts often loosen or even disengage. Detecting loosened or disengaged bolts in real time, or at least promptly, is an urgent need in practical engineering and is critical to structural safety and service life. In recent years, many bolt-loosening detection methods based on deep learning and machine learning have been proposed and are attracting growing attention. However, most of these studies train their deep learning models on bolt images captured in the laboratory. Such images are obtained under well-controlled lighting, distance, and viewing-angle conditions. In addition, the bolted structures are purpose-designed experimental specimens with brand-new bolts, and the bolts are exposed with nothing nearby to obstruct them. In practical engineering, these well-controlled laboratory conditions are hard to reproduce, and real bolt images often have blurred edges, oblique perspectives, partial occlusion, indistinguishable colors, and similar defects, which cause models trained under laboratory conditions to lose accuracy or fail. Therefore, the aim of this study is to develop a dataset named NPU-BOLT for bolt object detection in natural scene images and to open it to researchers for public use and further development. The first version of the dataset contains 337 images of bolted joints, mainly in natural environments, with image sizes ranging from 400×400 to 6000×4000 and approximately 1275 bolt targets in total. The bolt targets are annotated in four categories: blur bolt, bolt head, bolt nut, and bolt side. The dataset is tested with advanced object detection models including YOLOv5, Faster R-CNN, and CenterNet, and its effectiveness is validated.
