Yuying Chen

Gaze Training by Modulated Dropout Improves Imitation Learning

Apr 17, 2019

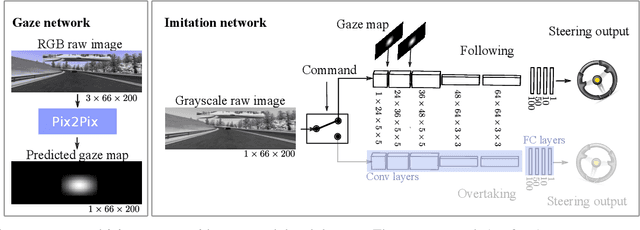

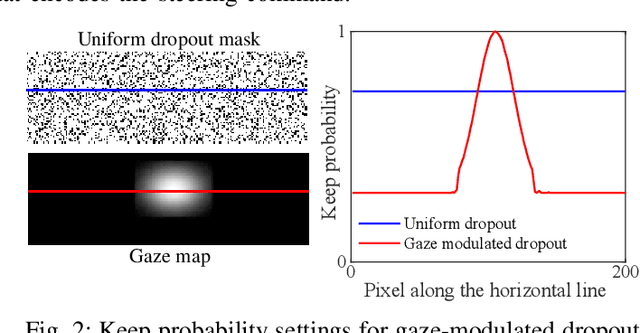

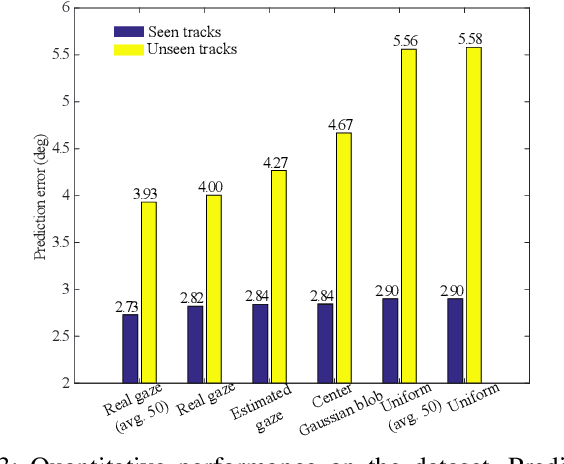

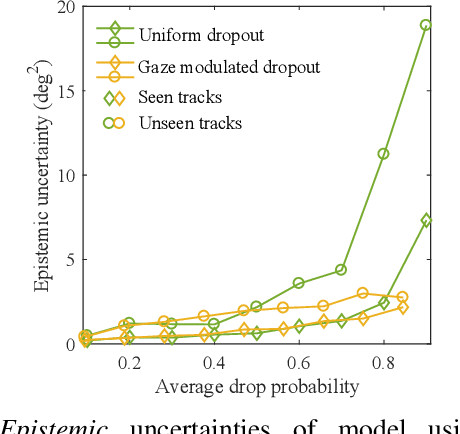

Abstract: Imitation learning by behavioral cloning is a prevalent method that has achieved some success in vision-based autonomous driving. The basic idea behind behavioral cloning is to have the neural network learn from observing a human expert's behavior. Typically, a convolutional neural network learns to predict steering commands from raw driver-view images by mimicking the behavior of human drivers. However, human drivers provide other cues, e.g. gaze behavior, that have yet to be exploited. Previous research has shown that novice human learners can benefit from observing experts' gaze patterns. We show here that deep neural networks can also profit from this. We propose a method, gaze-modulated dropout, for integrating this gaze information into a deep driving network implicitly rather than as an additional input. Our experimental results demonstrate that gaze-modulated dropout enhances the generalization capability of the network to unseen scenes. Prediction error in steering commands is reduced by 23.5% compared to uniform dropout. Running closed loop in the simulator, the gaze-modulated dropout net increased the average distance travelled between infractions by 58.5%. Consistent with these results, we also found the gaze-modulated dropout net to have lower model uncertainty.
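As a rough illustration of the idea, the sketch below applies dropout to a 2D feature map with a drop probability that shrinks where a gaze map is high. The abstract does not give the modulation rule, so the linear form `max_drop * (1 - gaze)`, the function name, and the parameters are all assumptions made for illustration, not the authors' implementation.

```python
import random


def gaze_modulated_dropout(features, gaze_map, max_drop=0.5, rng=None):
    """Drop units of a 2D feature map with a gaze-dependent probability.

    features, gaze_map: 2D lists of equal shape; gaze values lie in [0, 1].
    ASSUMPTION: drop probability = max_drop * (1 - gaze), so units near the
    gaze point (gaze close to 1) are almost always kept. This rule is a
    hypothetical stand-in, not taken from the paper.
    """
    if not 0.0 <= max_drop <= 1.0:
        raise ValueError("max_drop must lie in [0, 1]")
    rng = rng or random.Random()
    output = []
    for feature_row, gaze_row in zip(features, gaze_map):
        output_row = []
        for value, gaze in zip(feature_row, gaze_row):
            drop_p = max_drop * (1.0 - gaze)
            if rng.random() < drop_p:
                output_row.append(0.0)
            else:
                # Inverted-dropout scaling keeps the expected activation unchanged.
                output_row.append(value / (1.0 - drop_p))
        output.append(output_row)
    return output
```

With a gaze map of all ones this reduces to the identity; with a constant gaze map it reduces to ordinary uniform dropout, the baseline the abstract compares against.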

Tightly Coupled 3D Lidar Inertial Odometry and Mapping

Apr 15, 2019

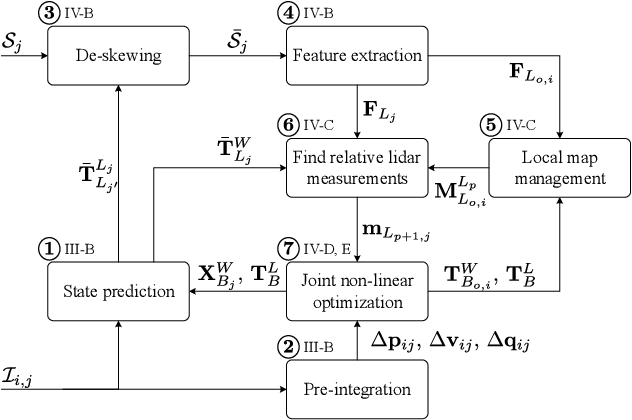

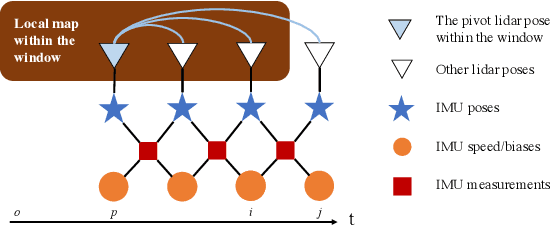

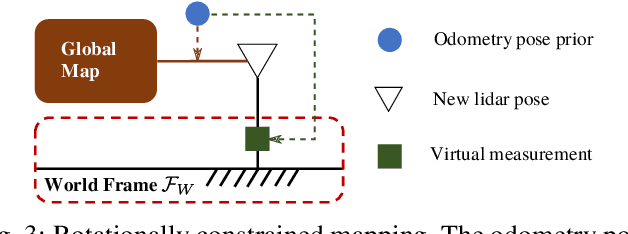

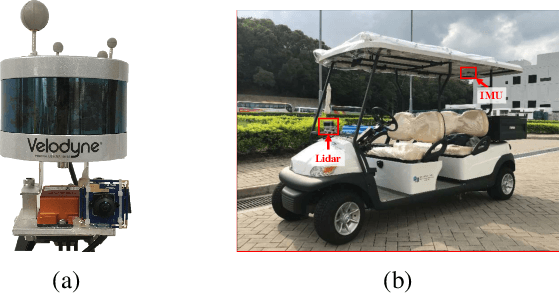

Abstract: Ego-motion estimation is a fundamental requirement for most mobile robotic applications. Through sensor fusion, we can compensate for the deficiencies of stand-alone sensors and provide more reliable estimates. We introduce a tightly coupled lidar-IMU fusion method in this paper. By jointly minimizing the cost derived from lidar and IMU measurements, the lidar-IMU odometry (LIO) performs well, with acceptable drift over long-term experiments, even in challenging cases where the lidar measurements are degraded. In addition, to obtain more reliable estimates of the lidar poses, a rotation-constrained refinement algorithm (LIO-mapping) is proposed to further align the lidar poses with the global map. The experimental results demonstrate that the proposed method can estimate the poses of the sensor pair at the IMU update rate with high precision, even under fast motion or with insufficient features.

Hierarchical Trajectory Planning for Autonomous Driving in Low-speed Driving Scenarios Based on RRT and Optimization

Apr 04, 2019

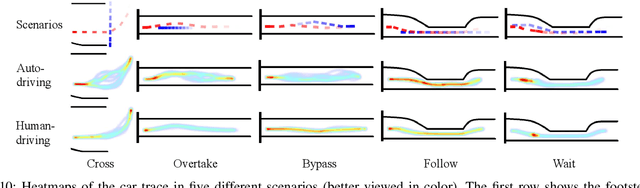

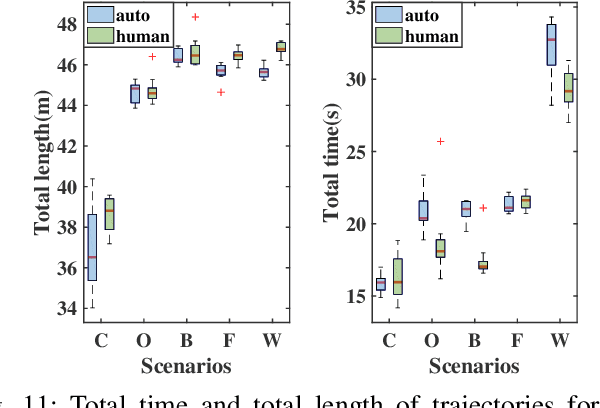

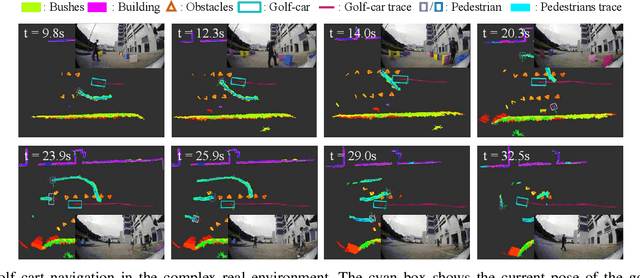

Abstract: Though great effort has been put into the study of path planning on urban roads and highways, few works have studied driving strategy and trajectory planning in low-speed driving scenarios, e.g., driving on a university campus or through a housing or industrial estate. Planning in these scenarios is crucial, as these environments often cover the first or last kilometer of a daily trip or logistics system. Additionally, it is essential to treat these scenarios differently because, in most cases, the driving environment is narrow, dynamic, and rich with obstacles, which keeps planning in such environments a challenging task. This paper proposes a hierarchical planning approach that separates path planning from temporal planning. A path that satisfies the kinematic constraints is generated through a modified bidirectional rapidly exploring random tree (bi-RRT) approach. Following that, the timestamp of each node of the path is optimized through sequential quadratic programming (SQP), with the feasible search bounds defined by safe intervals (SIs). Simulations and real-world tests in different driving scenarios demonstrate the effectiveness of this method.

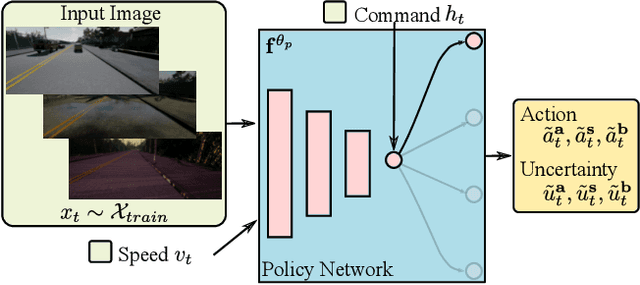

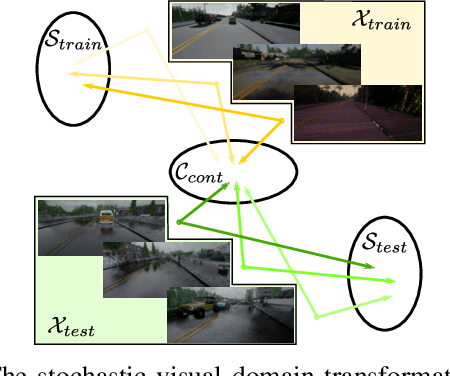

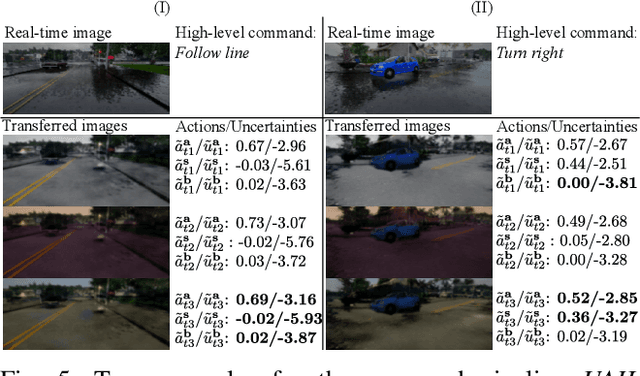

End-to-end Driving Deploying through Uncertainty-Aware Imitation Learning and Stochastic Visual Domain Adaptation

Mar 03, 2019

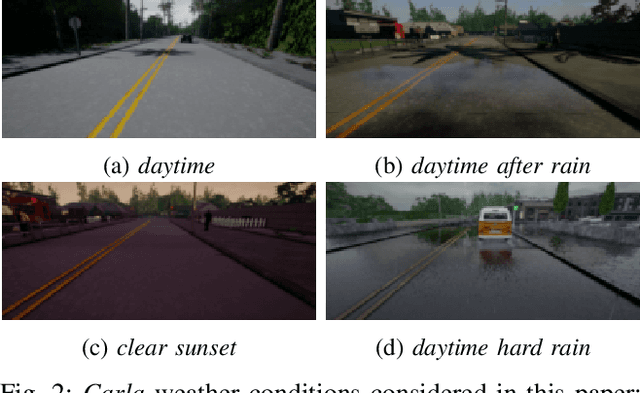

Abstract: End-to-end visual-based imitation learning has been widely applied in autonomous driving. When a trained visual-based driving policy is deployed, a deterministic command is usually applied directly, without considering the uncertainty of the input data. Such policies may cause severe damage when applied in the real world. In this paper, we follow the recent real-to-sim pipeline by translating the testing-world image back to the training domain when using the trained policy. In the translation process, a stochastic generator is used to generate various images stylized under the training domain, either randomly or directionally. Based on those translated images, the trained uncertainty-aware imitation learning policy outputs both the predicted action and the data uncertainty, motivated by the aleatoric loss function. Through the uncertainty-aware imitation learning policy, we can easily choose the safest prediction, namely the one with the lowest uncertainty among the generated images. Experiments on the Carla navigation benchmark show that our strategy outperforms previous methods, especially in dynamic environments.
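The selection step described above can be sketched as follows. The abstract names an aleatoric loss but does not write it out, so the heteroscedastic regression loss used here (a squared error weighted by a predicted log-variance) is an assumed common form, and both function names are hypothetical.

```python
import math


def aleatoric_loss(target, predicted_mean, log_variance):
    """Heteroscedastic regression loss for a single scalar action.

    ASSUMPTION: the common form 0.5 * exp(-s) * (y - mu)^2 + 0.5 * s, where
    s is the predicted log-variance. The paper's exact loss may differ.
    """
    squared_error = (target - predicted_mean) ** 2
    return 0.5 * math.exp(-log_variance) * squared_error + 0.5 * log_variance


def select_safest(predictions):
    """Pick the (action, log_variance) pair with the lowest uncertainty.

    predictions: one pair per translated image produced by the generator.
    """
    if not predictions:
        raise ValueError("at least one prediction is required")
    return min(predictions, key=lambda pair: pair[1])


# Three hypothetical steering predictions from three translated images.
candidates = [(0.10, -1.0), (0.30, -2.5), (0.20, 0.4)]
action, log_variance = select_safest(candidates)
```

Because the log-variance is monotonic in the variance, taking the minimum over it selects the same candidate as taking the minimum over the variance itself.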

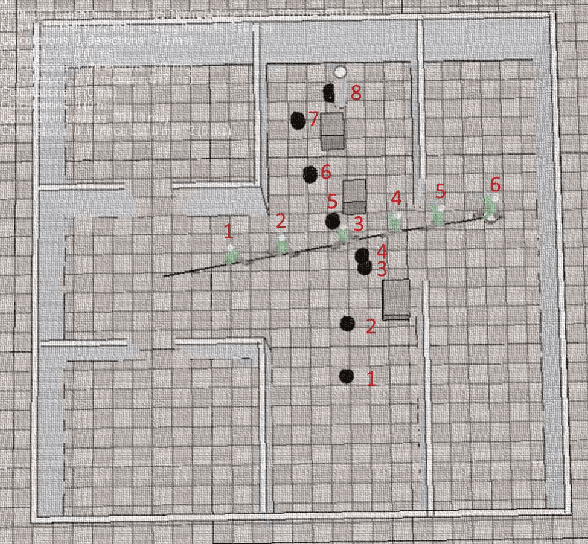

RRT* Combined with GVO for Real-time Nonholonomic Robot Navigation in Dynamic Environment

Apr 11, 2018

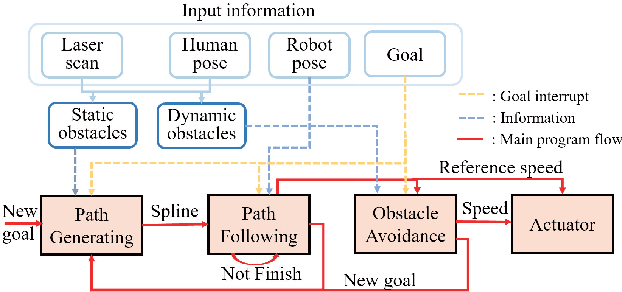

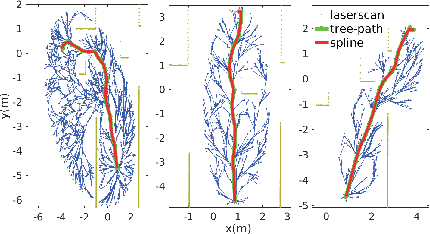

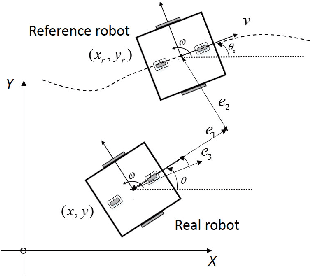

Abstract: Challenges persist in nonholonomic robot navigation in dynamic environments. This paper presents a framework for such navigation based on the model of generalized velocity obstacles (GVO). The idea of velocity obstacles has been well studied and developed for obstacle avoidance since it was proposed in 1998. Although it has proved successful, most studies have assumed the equations of motion to be linear, which limits their application to holonomic robots. In addition, more attention has been paid to the immediate reaction of robots, while advance planning has been neglected. By applying the GVO model to differential drive robots and combining it with RRT*, we reduce the uncertainty of the robot trajectory, thus further reducing the range of concern, and save both computation time and running time. By introducing uncertainty for the dynamic obstacles with a Kalman filter, we reduce the risk of treating the obstacles as moving uniformly along a straight line and guarantee safety. Special attention is given to path generation, including a curvature check, making the generated path feasible for nonholonomic robots. We experimentally demonstrate the feasibility of the framework.
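One simple way to picture a curvature check on a waypoint path is shown below. The abstract does not say how the check is performed, so the three-point (Menger) curvature estimate, the function names, and the `max_curvature` parameter are assumptions for illustration only.

```python
import math


def menger_curvature(p0, p1, p2):
    """Curvature of the circle through three 2D points (0.0 if collinear)."""
    a = math.dist(p0, p1)
    b = math.dist(p1, p2)
    c = math.dist(p0, p2)
    if a == 0.0 or b == 0.0:
        return 0.0  # duplicate waypoint: no turn can be measured
    if c == 0.0:
        return math.inf  # path doubles back onto itself
    # Twice the triangle area, from the 2D cross product.
    twice_area = abs((p1[0] - p0[0]) * (p2[1] - p0[1])
                     - (p2[0] - p0[0]) * (p1[1] - p0[1]))
    return 2.0 * twice_area / (a * b * c)


def path_is_feasible(path, max_curvature):
    """True if every consecutive waypoint triple respects the curvature limit.

    max_curvature is a hypothetical robot limit (1 / minimum turning radius).
    """
    return all(
        menger_curvature(path[i], path[i + 1], path[i + 2]) <= max_curvature
        for i in range(len(path) - 2)
    )
```

A planner could call `path_is_feasible` on each candidate branch and discard branches that turn more sharply than the robot can follow.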
