Young J. Kim

Adaptive Trajectory Refinement for Optimization-based Local Planning in Narrow Passages

Oct 30, 2025

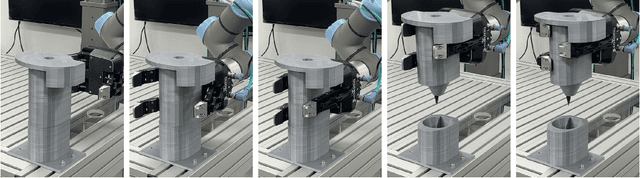

Abstract: Trajectory planning for mobile robots in cluttered environments remains a major challenge due to narrow passages, where conventional methods often fail or generate suboptimal paths. To address this issue, we propose the adaptive trajectory refinement algorithm, which consists of two main stages. First, to ensure safety at the path-segment level, a segment-wise conservative collision test is applied, where risk-prone trajectory segments are recursively subdivided until collision risks are eliminated. Second, to guarantee pose-level safety, pose correction based on penetration direction and line search is applied, ensuring that each pose in the trajectory is collision-free and maximally clear from obstacles. Simulation results demonstrate that the proposed method achieves up to 1.69x higher success rates and up to 3.79x faster planning times than state-of-the-art approaches. Furthermore, real-world experiments confirm that the robot can safely pass through narrow passages while maintaining rapid planning performance.
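The first stage described in the abstract above (recursive subdivision of risk-prone segments) can be illustrated with a minimal sketch. Everything specific here is an assumption made for illustration, not the paper's method: a disc-shaped robot in 2D, circular obstacles given as `(cx, cy, r)` tuples, and a conservative test that accepts a segment only when the clearance at its midpoint is at least half the segment length. The names `clearance` and `refine_segment` are hypothetical.

```python
import math

def clearance(p, obstacles, robot_radius):
    """Signed clearance of a disc robot centred at p from circular obstacles."""
    return min(math.hypot(p[0] - cx, p[1] - cy) - r
               for cx, cy, r in obstacles) - robot_radius

def refine_segment(a, b, obstacles, robot_radius, min_len=1e-3):
    """Return waypoints from a to b whose sub-segments all pass the
    conservative test, or None if a collision (or an uncertifiable
    segment shorter than min_len) is found."""
    mid = ((a[0] + b[0]) / 2.0, (a[1] + b[1]) / 2.0)
    half = math.hypot(b[0] - a[0], b[1] - a[1]) / 2.0
    c = clearance(mid, obstacles, robot_radius)
    if c < 0.0:
        return None          # the midpoint pose itself is in collision
    if c >= half:
        return [a, b]        # the whole segment lies in the free ball around mid
    if 2.0 * half < min_len:
        return None          # too short to keep subdividing; treat as unsafe
    left = refine_segment(a, mid, obstacles, robot_radius, min_len)
    if left is None:
        return None
    right = refine_segment(mid, b, obstacles, robot_radius, min_len)
    if right is None:
        return None
    return left + right[1:]  # drop the duplicated midpoint

# Assumed toy scene: a passage between two circular obstacles.
obstacles = [(0.0, 1.0, 0.5), (0.0, -1.0, 0.5)]
waypoints = refine_segment((-2.0, 0.0), (2.0, 0.0), obstacles, robot_radius=0.2)
```

The test is conservative because clearance changes by at most the distance moved, so a midpoint clearance of at least half the segment length certifies every point on the segment. Segments near the passage fail this test more often and are therefore subdivided more finely, which is the adaptive behaviour the abstract describes at a high level.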

Kinodynamic Task and Motion Planning using VLM-guided and Interleaved Sampling

Oct 30, 2025

Abstract: Task and Motion Planning (TAMP) integrates high-level task planning with low-level motion feasibility, but existing methods are costly in long-horizon problems due to excessive motion sampling. While LLMs provide commonsense priors, they lack 3D spatial reasoning and cannot ensure geometric or dynamic feasibility. We propose a kinodynamic TAMP framework based on a hybrid state tree that uniformly represents symbolic and numeric states during planning, enabling task and motion decisions to be made jointly. Kinodynamic constraints embedded in the TAMP problem are verified by an off-the-shelf motion planner and physics simulator, and a VLM guides the exploration of a TAMP solution and backtracks the search based on visual rendering of the states. Experiments in simulated domains and in the real world show 32.14% - 1166.67% increased average success rates compared to traditional and LLM-based TAMP planners and reduced planning time on complex problems, with ablations further highlighting the benefits of VLM guidance.

Fast and Accurate Task Planning using Neuro-Symbolic Language Models and Multi-level Goal Decomposition

Sep 28, 2024

Abstract: In robotic task planning, symbolic planners using rule-based representations like PDDL are effective but struggle with long-sequential tasks in complicated planning environments due to an exponentially increasing search space. Recently, Large Language Models (LLMs) based on artificial neural networks have emerged as promising alternatives for autonomous robot task planning, offering faster inference and leveraging commonsense knowledge. However, they typically suffer from lower success rates. In this paper, to address the limitations of the current symbolic (slow speed) or LLM-based approaches (low accuracy), we propose a novel neuro-symbolic task planner that decomposes complex tasks into subgoals using an LLM and carries out task planning for each subgoal using either symbolic or MCTS-based LLM planners, depending on the subgoal complexity. Generating subgoals helps reduce planning time and improve success rates by narrowing the overall search space and enabling LLMs to focus on smaller, more manageable tasks. Our method significantly reduces planning time while maintaining a competitive success rate, as demonstrated through experiments in different public task planning domains, as well as real-world and simulated robotics environments.

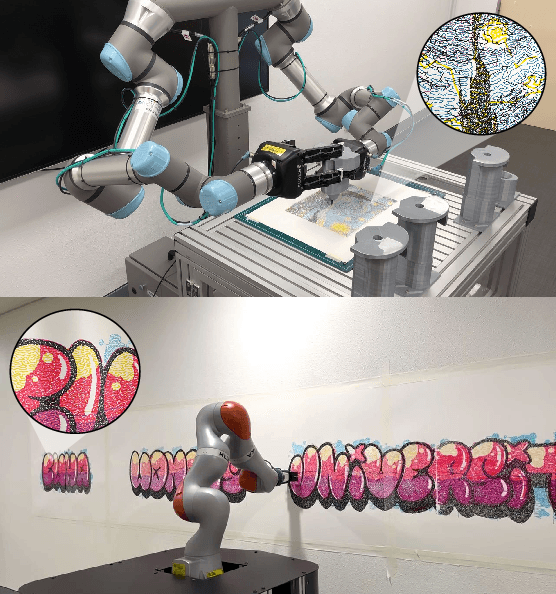

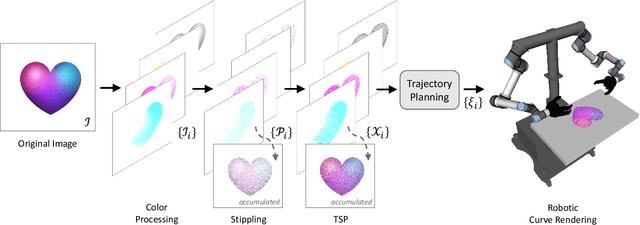

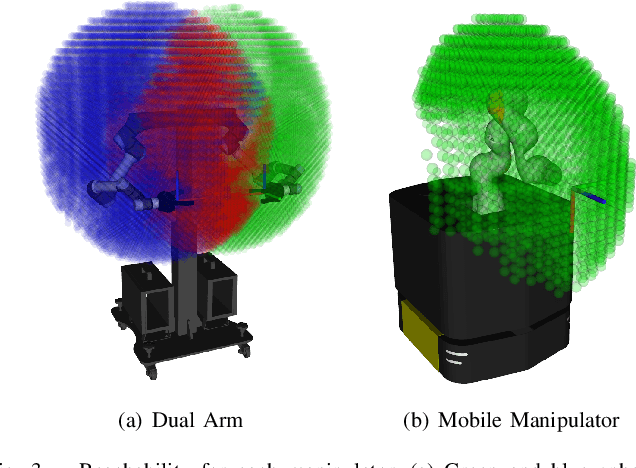

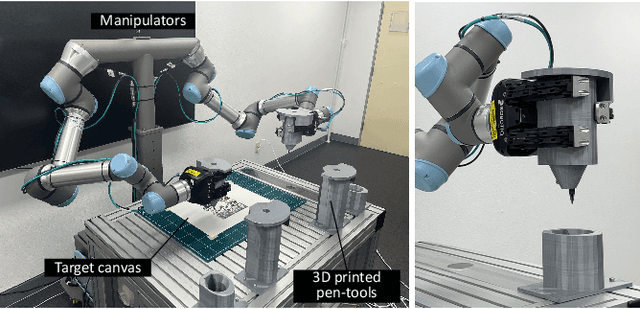

TSP-Bot: Robotic TSP Pen Art using High-DoF Manipulators

Oct 17, 2022

Abstract: TSP art is an art form for drawing an image using piecewise-continuous line segments with no crossings. This paper presents a multi-color robotic pen drawing system capable of drawing complicated TSP pen art on a planar surface. Given a colored raster image, we first convert it into a set of points representing the original image's tone by controlling the density of the points. Then, we find a piecewise-continuous linear path that visits every point exactly once, equivalent to solving a Traveling Salesman Problem (TSP). Our robotic drawing system, consisting of single or dual manipulators with fingered grippers and a mobile platform, performs the drawing task by following the resulting complex and sophisticated path composed of thousands of TSP sites. As a result, our system can draw complicated and visually pleasing TSP pen art with high accuracy and efficiency. We also demonstrate that our system can draw TSP pen art on a large wall, which is very hard for a human artist to achieve.
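To make the path-finding step in the abstract above concrete, the following sketch builds an open path through a point set with a nearest-neighbour heuristic and then improves it with 2-opt moves, which tend to remove edge crossings because uncrossing two edges shortens the path. This is a generic heuristic chosen for illustration; the abstract does not say which TSP solver the system uses, and the function names and the random point set are assumptions. The conversion of an image into density-controlled points is not covered.

```python
import math
import random

def dist(p, q):
    return math.hypot(p[0] - q[0], p[1] - q[1])

def path_length(pts, order):
    """Total length of the open path that visits pts in the given order."""
    return sum(dist(pts[order[k]], pts[order[k + 1]])
               for k in range(len(order) - 1))

def nearest_neighbor(pts):
    """Greedy initial path: always move to the closest unvisited point."""
    unvisited = set(range(1, len(pts)))
    order = [0]
    while unvisited:
        last = pts[order[-1]]
        nxt = min(unvisited, key=lambda k: dist(last, pts[k]))
        unvisited.remove(nxt)
        order.append(nxt)
    return order

def two_opt(pts, order):
    """Reverse sub-paths while doing so shortens the path (endpoints fixed)."""
    order = list(order)
    n = len(order)
    improved = True
    while improved:
        improved = False
        for i in range(1, n - 2):
            for j in range(i + 1, n - 1):
                a, b = pts[order[i - 1]], pts[order[i]]
                c, d = pts[order[j]], pts[order[j + 1]]
                # Replace edges (a,b) and (c,d) by (a,c) and (b,d).
                delta = dist(a, c) + dist(b, d) - dist(a, b) - dist(c, d)
                if delta < -1e-12:
                    order[i:j + 1] = reversed(order[i:j + 1])
                    improved = True
    return order

# Assumed stand-in for stippled image points.
random.seed(0)
points = [(random.random(), random.random()) for _ in range(60)]
initial = nearest_neighbor(points)
drawing_order = two_opt(points, initial)
```

For the thousands of sites mentioned in the abstract, this quadratic-per-pass 2-opt would likely be too slow in pure Python; a dedicated TSP solver or a neighbour-list variant would be the more realistic choice.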

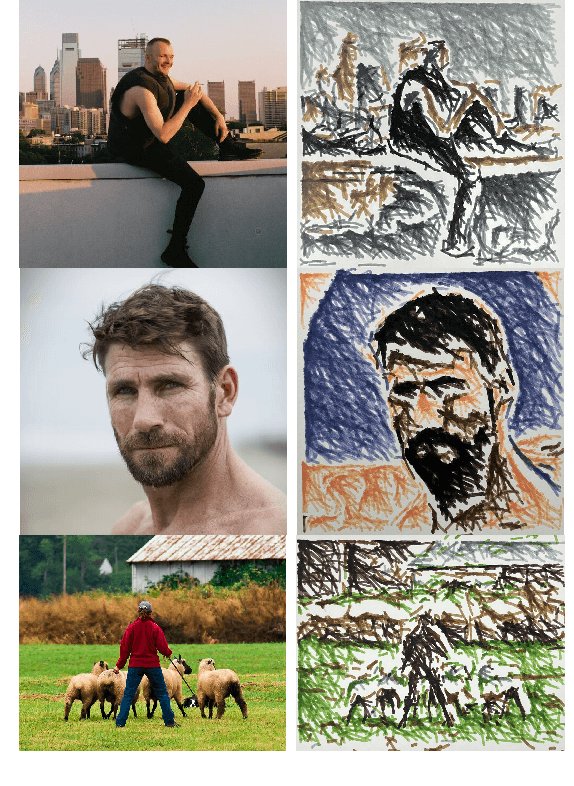

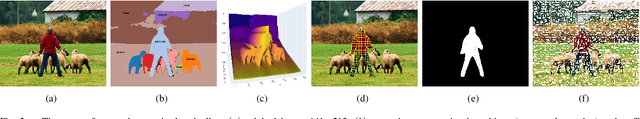

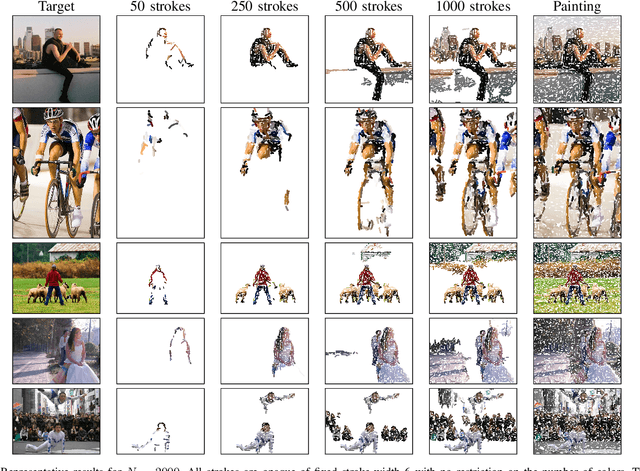

Stroke-based Rendering and Planning for Robotic Performance of Artistic Drawing

Oct 14, 2022

Abstract: We present a new robotic drawing system based on stroke-based rendering (SBR). Our motivation is the artistic quality of the whole performance. Not only should the generated strokes in the final drawing resemble the input image, but the stroke sequence should also exhibit a human artist's planning process. Thus, when a robot executes the drawing task, both the drawing results and the way the robot executes them would look artistic. Our SBR system is based on image segmentation and depth estimation. It generates the drawing strokes in an order that allows the intended shape to be perceived quickly and its detailed features to be filled in and emerge gradually when observed by a human. This ordering represents a stroke plan that the drawing robot should follow to create an artistic rendering of images. We experimentally demonstrate that our SBR-based drawing makes visually pleasing artistic images, and our robotic system can replicate the result with proper sequences of stroke drawing.

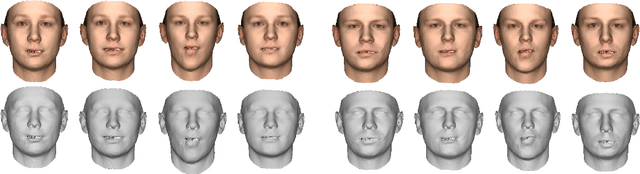

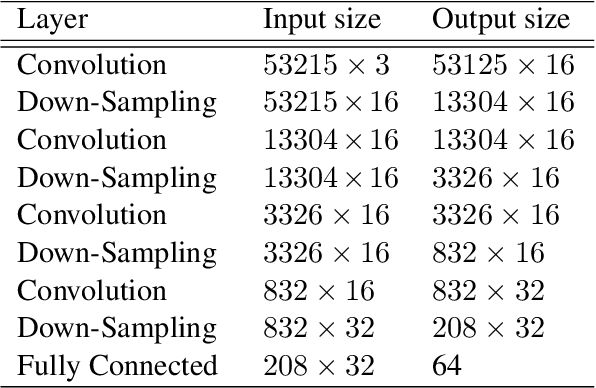

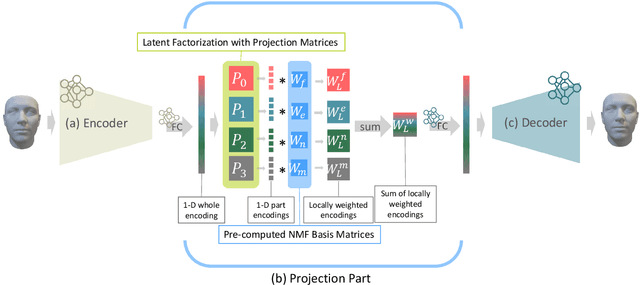

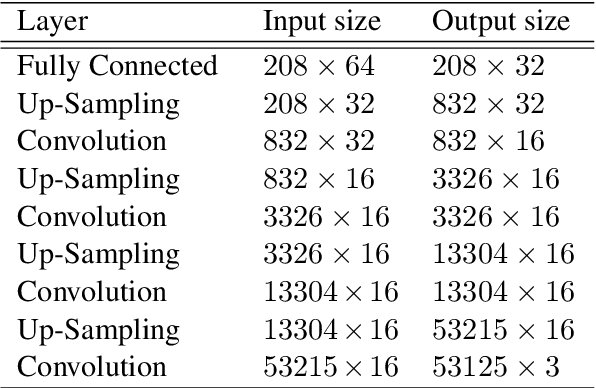

Synthesizing Human Faces using Latent Space Factorization and Local Weights (Extended Version)

Jul 19, 2021

Abstract: We propose a 3D face generative model with local weights to increase the model's variations and expressiveness. The proposed model allows partial manipulation of the face while still learning the whole face mesh. For this purpose, we investigate an effective way to extract local facial features from the entire data and explore a way to manipulate them during a holistic generation. First, we factorize the latent space of the whole face into subspaces representing different parts of the face. In addition, local weights generated by non-negative matrix factorization are applied to the factorized latent space so that the decomposed part space is semantically meaningful. We experiment with our model and observe that effective facial part manipulation is possible and that the model's expressiveness is improved.

Accelerating Probabilistic Volumetric Mapping using Ray-Tracing Graphics Hardware

Dec 02, 2020

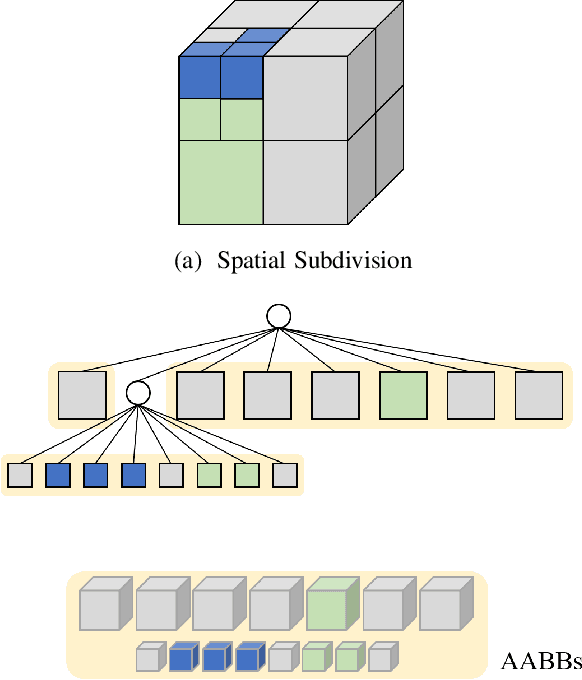

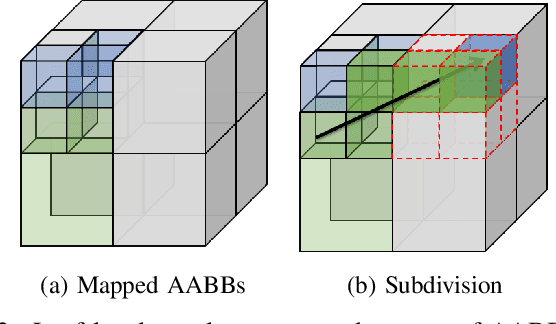

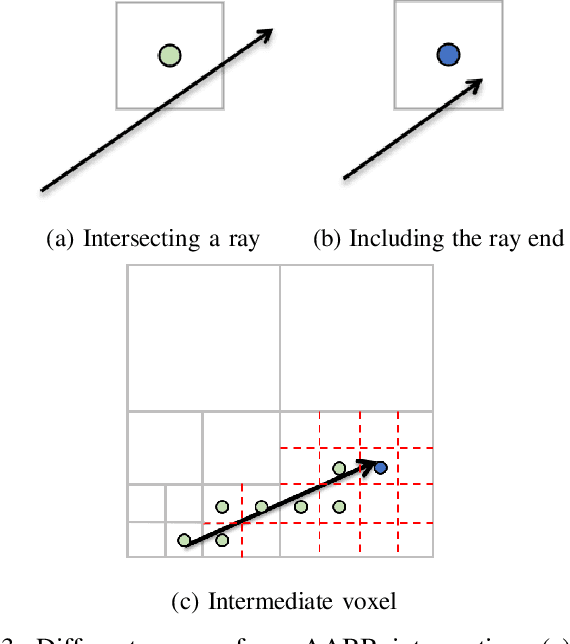

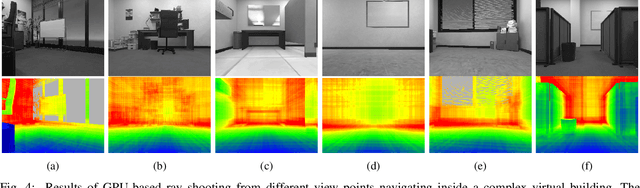

Abstract: Probabilistic volumetric mapping (PVM) represents a 3D environmental map for an autonomous robotic navigational task. A popular implementation such as Octomap is widely used in the robotics community for such a purpose. Octomap relies on an octree to represent a PVM, and its main bottleneck lies in massive ray-shooting to determine the occupancy of the underlying volumetric voxel grids. In this paper, we propose GPU-based ray shooting to drastically improve the ray shooting performance in Octomap. Our main idea is based on the use of recent ray-tracing RTX GPUs, mainly designed for real-time photo-realistic computer graphics, and the accompanying graphics API, known as DXR. Our ray-shooting first maps leaf-level voxels in the given octree to a set of axis-aligned bounding boxes (AABBs) and employs massively parallel ray shooting on them using GPUs to find free and occupied voxels. These are fed back to the CPU to update the voxel occupancy and restructure the octree. In our experiments, we have observed more than three-orders-of-magnitude performance improvement in terms of ray shooting using ray-tracing RTX GPUs over a state-of-the-art Octomap CPU implementation, where the benchmarking environments consist of more than 77K points and 25K~34K voxel grids.
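The core geometric query in the abstract above, shooting a ray against voxels represented as AABBs, can be sketched on the CPU with the standard slab test. This is only a serial stand-in to show the intersection logic; it does not use DXR or any GPU API, and the function names, the box representation as `(box_min, box_max)` pairs, and the `max_t` range cut-off are assumptions for illustration.

```python
import math

def ray_aabb(origin, direction, box_min, box_max):
    """Slab test: return the entry parameter t >= 0 where the ray
    origin + t * direction enters the box, or None if it misses."""
    t_near, t_far = 0.0, math.inf
    for o, d, lo, hi in zip(origin, direction, box_min, box_max):
        if d == 0.0:
            # Ray is parallel to this slab: it must start inside it.
            if o < lo or o > hi:
                return None
            continue
        t0, t1 = (lo - o) / d, (hi - o) / d
        if t0 > t1:
            t0, t1 = t1, t0
        t_near = max(t_near, t0)
        t_far = min(t_far, t1)
        if t_near > t_far:
            return None
    return t_near

def voxels_along_ray(origin, direction, boxes, max_t):
    """Indices of the boxes hit within max_t, ordered from near to far."""
    hits = []
    for index, (box_min, box_max) in enumerate(boxes):
        t = ray_aabb(origin, direction, box_min, box_max)
        if t is not None and t <= max_t:
            hits.append((t, index))
    return [index for _, index in sorted(hits)]

# Assumed toy data: three unit voxels and one sensor ray along +x.
boxes = [((3.0, 0.0, 0.0), (4.0, 1.0, 1.0)),
         ((1.0, 0.0, 0.0), (2.0, 1.0, 1.0)),
         ((1.0, 2.0, 2.0), (2.0, 3.0, 3.0))]
order = voxels_along_ray((0.0, 0.5, 0.5), (1.0, 0.0, 0.0), boxes, max_t=10.0)
```

Each ray is tested independently of every other ray, which is why this workload maps well onto massively parallel ray-tracing hardware.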

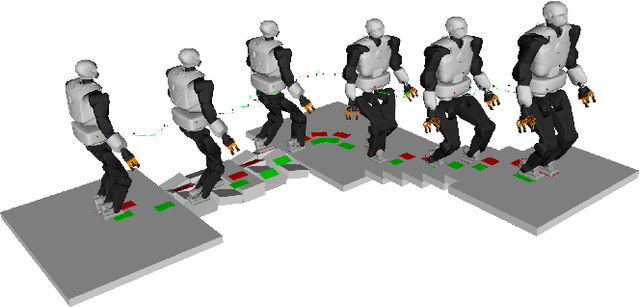

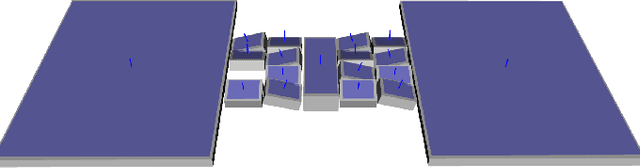

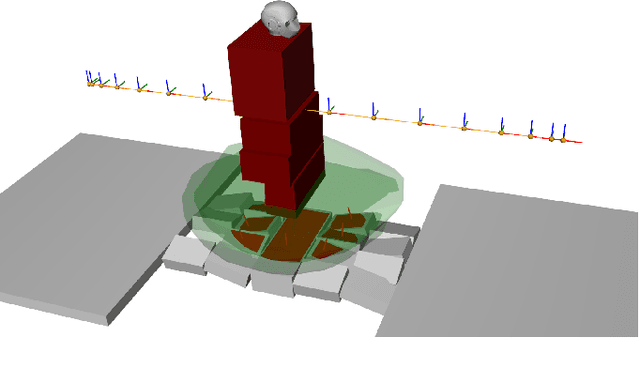

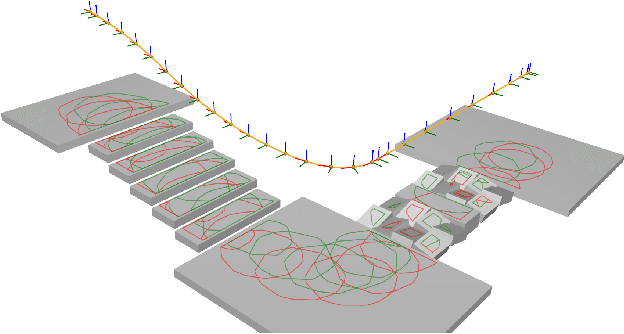

Solving Footstep Planning as a Feasibility Problem using L1-norm Minimization

Nov 19, 2020

Abstract: One challenge of legged locomotion on uneven terrains is to deal with both the discrete problem of selecting a contact surface for each footstep and the continuous problem of placing each footstep on the selected surface. Consequently, footstep planning can be addressed with a Mixed Integer Program (MIP), an elegant but computationally-demanding method, which can make it unsuitable for online planning. We reformulate the MIP into a cardinality problem, then approximate it as a computationally efficient l1-norm minimisation, called SL1M. Moreover, we improve the performance and convergence of SL1M by combining it with a sampling-based root trajectory planner to prune irrelevant surface candidates. Our tests on the humanoid Talos in four representative scenarios show that SL1M always converges faster than MIP. For scenarios where the combinatorial complexity is small (< 10 surfaces per step), SL1M converges at least two times faster than MIP with no need for pruning. In more complex cases, SL1M converges up to 100 times faster than MIP with the help of pruning. Moreover, pruning can also improve the MIP computation time. The versatility of the framework is shown with additional tests on the quadruped robot ANYmal.

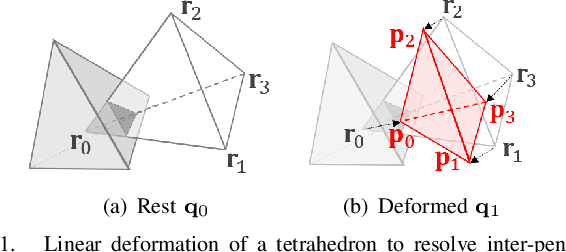

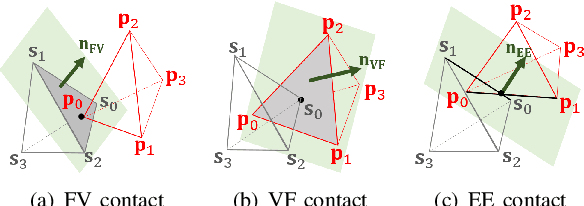

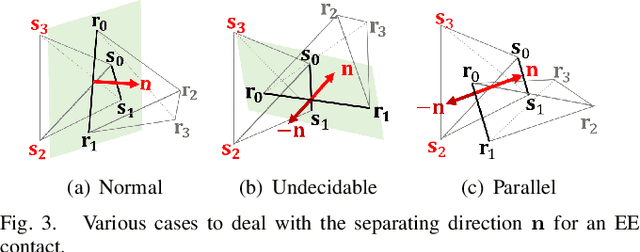

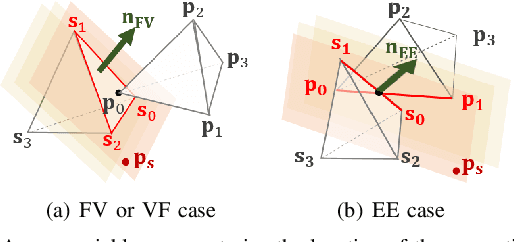

A Penetration Metric for Deforming Tetrahedra using Object Norm

Nov 13, 2019

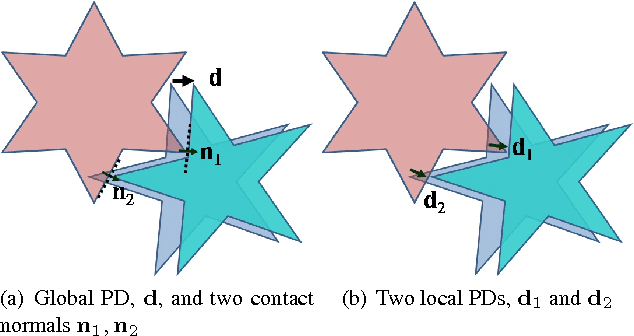

Abstract: In this paper, we propose a novel penetration metric, called deformable penetration depth PDd, to define a measure of inter-penetration between two linearly deforming tetrahedra using the object norm. First, we show that a distance metric for a tetrahedron deforming between two configurations can be found in closed form based on the object norm. Then, we show that the PDd between an intersecting pair of static and deforming tetrahedra can be found by solving a quadratic programming (QP) problem in terms of the distance metric with non-penetration constraints. We also show that the PDd between two intersecting deforming tetrahedra can be found by solving a similar QP problem under some assumptions on the penetration directions, and that it can also be accelerated by an order of magnitude using pre-calculated penetration directions. We have implemented our algorithm on a standard PC platform using an off-the-shelf QP optimizer, and experimentally show that both the static/deformable and deformable/deformable tetrahedra cases can be solved in a few to tens of milliseconds. Finally, we demonstrate that our penetration metric is three times smaller (or tighter) than the classical, rigid penetration depth metric in our experiments.

PolyDepth: Real-time Penetration Depth Computation using Iterative Contact-Space Projection

Aug 25, 2015

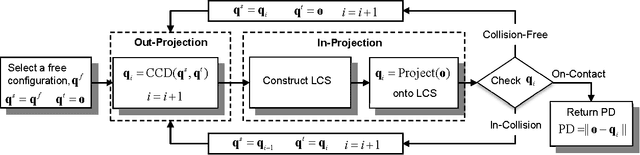

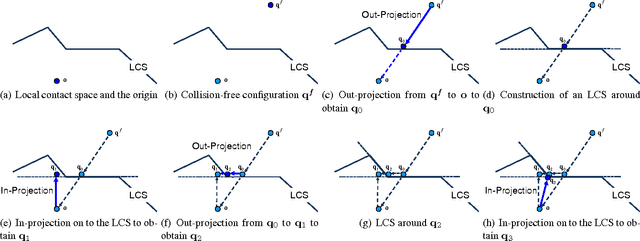

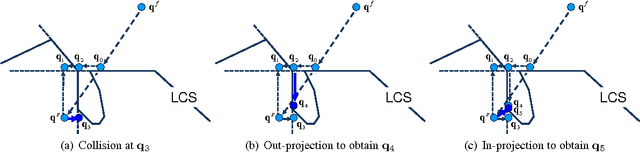

Abstract: We present a real-time algorithm that finds the Penetration Depth (PD) between general polygonal models based on iterative and local optimization techniques. Given an in-collision configuration of an object in configuration space, we find an initial collision-free configuration using several methods such as centroid difference, maximally clear configuration, motion coherence, random configuration, and sampling-based search. We project this configuration onto a local contact space using a variant of a continuous collision detection algorithm and construct a linear convex cone around the projected configuration. We then formulate a new projection of the in-collision configuration onto the convex cone as a Linear Complementarity Problem (LCP), which we solve using a type of Gauss-Seidel iterative algorithm. We repeat this procedure until a locally optimal PD is obtained. Our algorithm can process complicated models consisting of tens of thousands of triangles at interactive rates.
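The abstract above mentions solving an LCP with a type of Gauss-Seidel iteration. One common member of that family is projected Gauss-Seidel (PGS), sketched below for the generic problem of finding z >= 0 such that w = Mz + q >= 0 and z_i * w_i = 0 for every i. Whether PolyDepth uses exactly this variant is not stated, so treat it as an assumed illustration; the function names and the small test matrices are hypothetical, and M is assumed to be symmetric positive definite with a positive diagonal.

```python
def projected_gauss_seidel(M, q, iterations=100):
    """Approximately solve the LCP: z >= 0, M z + q >= 0, z . (M z + q) = 0.
    Assumes M is symmetric positive definite (list of row lists)."""
    n = len(q)
    z = [0.0] * n
    for _ in range(iterations):
        for i in range(n):
            # Solve row i for z[i] with the other entries fixed, then
            # project onto the non-negativity constraint.
            residual = q[i] + sum(M[i][j] * z[j] for j in range(n) if j != i)
            z[i] = max(0.0, -residual / M[i][i])
    return z

def lcp_residual(M, q, z):
    """Return w = M z + q so that complementarity can be inspected."""
    return [q[i] + sum(M[i][j] * z[j] for j in range(len(z)))
            for i in range(len(q))]

# Assumed toy problem.
M = [[2.0, 1.0], [1.0, 2.0]]
q = [-1.0, 1.0]
z = projected_gauss_seidel(M, q)
w = lcp_residual(M, q, z)
```

Each sweep only needs one row of M at a time and a clamp to zero, which keeps the per-iteration cost low and suits the interactive rates the abstract targets.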

* Presented in ACM SIGGRAPH 2012. 15 pages, 23 figures
