Xiongren Chen

Deconfounding Time Series Forecasting

Oct 27, 2024

Abstract: Time series forecasting is a critical task in many domains, where accurate predictions drive informed decision-making. Traditional forecasting methods often rely on current observations of variables to predict future outcomes and typically overlook latent confounders: unobserved variables that simultaneously affect both the predictors and the target outcomes. This oversight can introduce bias and degrade the performance of predictive models. In this study, we address this challenge by proposing an enhanced forecasting approach that incorporates representations of latent confounders derived from historical data. By integrating these confounders into the predictive process, our method aims to improve the accuracy and robustness of time series forecasts. We demonstrate the approach on climate science data, where it shows significant improvements over traditional methods that do not account for confounders.

Causal GNNs: A GNN-Driven Instrumental Variable Approach for Causal Inference in Networks

Sep 13, 2024

Abstract: As network data applications continue to expand, causal inference within networks has garnered increasing attention. However, hidden confounders complicate the estimation of causal effects. Most methods rely on the strong ignorability assumption, which presumes the absence of hidden confounders, an assumption that is both difficult to validate and often unrealistic in practice. To address this issue, we propose CgNN, a novel approach that leverages network structure as instrumental variables (IVs), combined with graph neural networks (GNNs) and attention mechanisms, to mitigate hidden confounder bias and improve causal effect estimation. By using network structure as IVs, we reduce confounder bias while preserving the correlation with treatment. Integrating attention mechanisms enhances robustness and improves the identification of important nodes. Results on two real-world datasets demonstrate that CgNN effectively mitigates hidden confounder bias and offers a robust GNN-driven IV framework for causal inference in complex network data.
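The instrumental-variable logic can be illustrated with generic two-stage least squares (2SLS) on simulated data. This is not CgNN: the scalar instrument `z` below is a hypothetical stand-in for the network-structure instruments, and the two linear stages stand in for the GNN and attention components. The treatment `t` and outcome `y` share a hidden confounder `u`, which biases ordinary least squares, while the instrument shifts `t` without touching `u` or `y` directly.

```python
import numpy as np

def two_stage_least_squares(y, t, z):
    """Generic 2SLS with one instrument z for one treatment t (intercepts included)."""
    ones = np.ones(len(y))
    # Stage 1: project the treatment onto the instrument
    z_design = np.column_stack([ones, z])
    gamma, *_ = np.linalg.lstsq(z_design, t, rcond=None)
    t_hat = z_design @ gamma
    # Stage 2: regress the outcome on the fitted treatment, which carries no u
    beta, *_ = np.linalg.lstsq(np.column_stack([ones, t_hat]), y, rcond=None)
    return float(beta[1])

rng = np.random.default_rng(0)
n = 20_000
u = rng.normal(size=n)                         # hidden confounder
z = rng.normal(size=n)                         # instrument: independent of u
t = z + u + rng.normal(size=n)                 # treatment depends on z and u
y = 2.0 * t + 3.0 * u + rng.normal(size=n)     # true causal effect of t on y is 2.0

ols = float(np.linalg.lstsq(np.column_stack([np.ones(n), t]), y, rcond=None)[0][1])
iv = two_stage_least_squares(y, t, z)
print(f"OLS {ols:.2f} (confounded), 2SLS {iv:.2f}")
```

Because the first-stage fit depends only on `z`, the second-stage slope recovers the true effect of 2.0 up to sampling error, whereas OLS absorbs the confounding path through `u` and lands near 3.0 in this setup.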

A Deconfounding Approach to Climate Model Bias Correction

Aug 22, 2024

Abstract: Global Climate Models (GCMs) are crucial for predicting future climate change by simulating Earth systems. However, GCM outputs exhibit systematic biases due to model uncertainties, simplified parameterizations, and inadequate representation of complex climate phenomena. Traditional bias correction methods, which rely on historical observation data and statistical techniques, often neglect unobserved confounders, leading to biased results. This paper proposes a novel bias correction approach that uses both GCM and observational data to learn a factor model capturing multi-cause latent confounders. Inspired by recent advances in causality-based time series deconfounding, our method first constructs a factor model to learn latent confounders from historical data and then uses them to enhance the bias correction process with advanced time series forecasting models. Experimental results demonstrate significant improvements in the accuracy of precipitation outputs. By addressing unobserved confounders, our approach offers a robust and theoretically grounded solution for climate model bias correction.
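The first step, learning a substitute for a multi-cause latent confounder with a factor model, can be sketched as follows. The assumptions are ours, not the paper's: a one-factor linear model fitted by PCA, columns of `causes` standing in for GCM and observational variables, and simulated data with a known confounder so that recovery can be checked.

```python
import numpy as np

def fit_one_factor(causes):
    """Fit a one-factor linear model by PCA; return (scores, loadings, column means)."""
    n = len(causes)
    mean = causes.mean(axis=0)
    u, s, vt = np.linalg.svd(causes - mean, full_matrices=False)
    scores = u[:, 0] * np.sqrt(n)            # substitute confounder, unit variance
    loadings = vt[0] * s[0] / np.sqrt(n)     # so that causes ~ mean + outer(scores, loadings)
    return scores, loadings, mean

rng = np.random.default_rng(1)
n = 2000
z = rng.normal(size=n)                                   # hypothetical true confounder
true_loadings = np.array([1.0, 0.8, 1.2, 0.9, 1.1, 1.0])
causes = z[:, None] * true_loadings + rng.normal(scale=0.5, size=(n, 6))

z_hat, loadings, mean = fit_one_factor(causes)
corr = abs(np.corrcoef(z_hat, z)[0, 1])    # a factor's sign is not identifiable
print(f"|corr(z_hat, z)| = {corr:.3f}")
```

In the approach described above, scores such as `z_hat` would then be supplied as extra inputs to the downstream bias-correction forecaster.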

Estimating Peer Direct and Indirect Effects in Observational Network Data

Aug 21, 2024

Abstract: Estimating causal effects is crucial for decision-makers in many applications, but it is particularly challenging with observational network data because of peer interactions. Many algorithms have been proposed to estimate causal effects in network data, particularly peer effects, but they often overlook the variety of peer effects. To address this issue, we propose a general setting that considers peer direct effects, peer indirect effects, and the effect of an individual's own treatment, and we provide identification conditions for these causal effects together with proofs. To estimate these effects, we use attention mechanisms to distinguish the influences of different neighbors and explore high-order neighbor effects through multi-layer graph neural networks (GNNs). Additionally, to control the dependency between node features and representations, we incorporate the Hilbert-Schmidt Independence Criterion (HSIC) into the GNN, making full use of the graph's structural information to enhance the robustness and accuracy of the model. Extensive experiments on two semi-synthetic datasets confirm the effectiveness of our approach. Our theoretical findings have the potential to improve intervention strategies in networked systems, with applications in areas such as social networks and epidemiology.
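The HSIC term can be computed with the standard biased empirical estimator, HSIC = tr(KHLH) / (n - 1)^2, where K and L are kernel Gram matrices of the two samples and H = I - 11^T/n is the centering matrix. The Gaussian kernel, the fixed bandwidth `sigma=1.0`, and the toy arrays `feats` and `rep_*` are illustrative assumptions, not details taken from the paper.

```python
import numpy as np

def gaussian_gram(x, sigma=1.0):
    """Gram matrix K[i, j] = exp(-||x_i - x_j||^2 / (2 sigma^2))."""
    x = np.asarray(x, dtype=float).reshape(len(x), -1)
    sq = np.sum(x ** 2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2 * x @ x.T
    return np.exp(-np.maximum(d2, 0.0) / (2 * sigma ** 2))

def hsic(x, y, sigma=1.0):
    """Biased empirical HSIC; larger values indicate stronger dependence."""
    n = len(x)
    k = gaussian_gram(x, sigma)
    l = gaussian_gram(y, sigma)
    h = np.eye(n) - np.ones((n, n)) / n       # centering matrix
    return float(np.trace(k @ h @ l @ h)) / (n - 1) ** 2

rng = np.random.default_rng(0)
feats = rng.normal(size=(200, 3))                              # hypothetical node features
rep_indep = rng.normal(size=(200, 2))                          # representation unrelated to feats
rep_dep = feats[:, :2] + 0.1 * rng.normal(size=(200, 2))       # representation that copies feats
hsic_indep = hsic(feats, rep_indep)
hsic_dep = hsic(feats, rep_dep)
print(f"HSIC independent {hsic_indep:.4f}, dependent {hsic_dep:.4f}")
```

One way such a criterion could enter training is as a weighted penalty, for example `task_loss + lam * hsic(node_features, representations)` (hypothetical names), which discourages statistical dependence between its two arguments.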

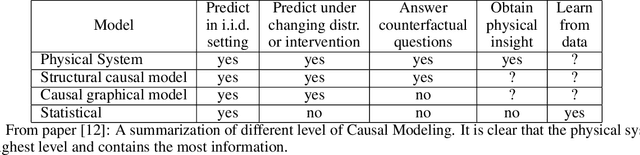

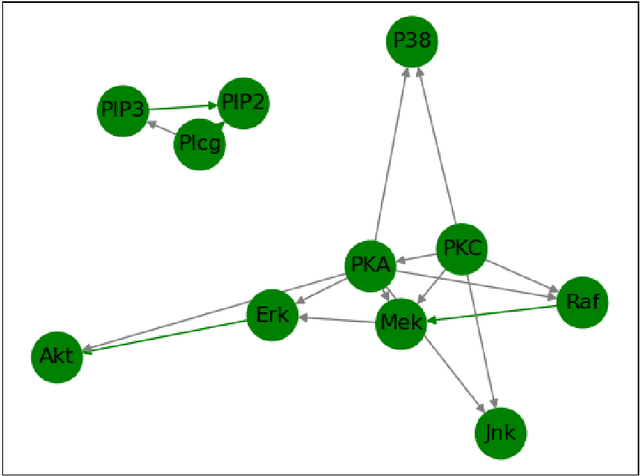

Physical System for Non Time Sequence Data

Oct 07, 2020

Abstract: We propose a novel approach that connects machine learning to causal structure learning through the Jacobian matrix of a neural network with respect to its input variables. In this paper, we extend the Jacobian-based approach to physical systems, the framework through which humans explore and reason about the world and the highest level of causal modeling. By fitting functions with a Neural ODE, we can read out the causal structure from the fitted functions. This method also enforces an important acyclicity constraint on the continuous adjacency matrix of the graph nodes and significantly reduces the computational complexity of searching the graph space.
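The Jacobian read-out can be sketched without training anything. Below, `vector_field` is a hypothetical hand-written stand-in for a fitted Neural ODE right-hand side dx/dt = f(x), and the Jacobian is estimated by central finite differences rather than automatic differentiation. Averaging |∂f_i/∂x_j| over sample states and thresholding gives an adjacency matrix with an edge x_j → x_i; self-dependence on the diagonal is dropped. The threshold value is an assumption.

```python
import numpy as np

def vector_field(x):
    """Hypothetical stand-in for a fitted Neural ODE right-hand side f(x)."""
    return np.array([
        -x[0],                       # x0 only decays
        np.tanh(x[0]) - x[1],        # x0 drives x1
        0.5 * x[1] ** 2 - x[2],      # x1 drives x2
    ])

def jacobian_fd(f, x, eps=1e-5):
    """Central finite-difference Jacobian J[i, j] = d f_i / d x_j at x."""
    d = x.shape[0]
    jac = np.zeros((len(f(x)), d))
    for j in range(d):
        step = np.zeros(d)
        step[j] = eps
        jac[:, j] = (f(x + step) - f(x - step)) / (2 * eps)
    return jac

def read_structure(f, samples, threshold=1e-3):
    """Adjacency A[j, i] = 1 if x_j influences f_i on average; self-loops removed."""
    mean_abs = np.mean([np.abs(jacobian_fd(f, x)) for x in samples], axis=0)
    adj = (mean_abs.T > threshold).astype(int)
    np.fill_diagonal(adj, 0)
    return adj

rng = np.random.default_rng(0)
states = rng.normal(size=(50, 3))
adj = read_structure(vector_field, states)
print(adj)
```

Averaging over many states matters for nonlinear terms: ∂f_2/∂x_1 = x_1 vanishes at x_1 = 0, so a single evaluation point could miss the edge.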

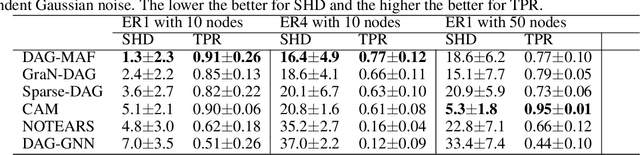

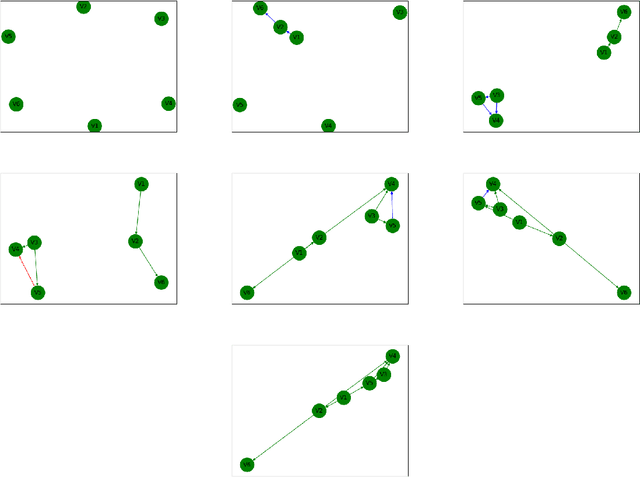

Gradient-based Causal Structure Learning with Normalizing Flow

Oct 07, 2020

Abstract: In this paper, we propose DAG-NF, a score-based normalizing flow method for learning the dependencies among observed input variables. Inspired by Grad-CAM in computer vision, we use the Jacobian matrix of the output with respect to the input to represent causal relationships. This approach generalizes to any neural network, in particular flow-based generative networks such as Masked Autoregressive Flow (MAF) and Continuous Normalizing Flow (CNF), which compute the log-likelihood loss and the divergence between the input data distribution and the target distribution. The method extends NOTEARS, which enforces an important acyclicity constraint on the continuous adjacency matrix of the graph nodes and significantly reduces the computational complexity of searching the graph space.
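The acyclicity constraint introduced by NOTEARS is h(W) = tr(exp(W ∘ W)) - d, which is zero exactly when the weighted adjacency matrix W describes a DAG, with gradient ∇h(W) = exp(W ∘ W)^T ∘ 2W. A minimal NumPy sketch follows; computing the matrix exponential by scaling and squaring with a truncated Taylor series is our choice to avoid a SciPy dependency (`scipy.linalg.expm` would also work).

```python
import numpy as np

def expm(a, terms=20):
    """Matrix exponential by scaling and squaring with a truncated Taylor series."""
    norm = np.linalg.norm(a, ord=1)
    # Halve the matrix s times so its norm is at most 0.5, where 20 terms are ample
    s = int(np.ceil(np.log2(norm / 0.5))) if norm > 0.5 else 0
    scaled = a / 2.0 ** s
    result = np.eye(a.shape[0])
    term = np.eye(a.shape[0])
    for k in range(1, terms + 1):
        term = term @ scaled / k
        result = result + term
    # Undo the scaling: exp(A) = exp(A / 2**s) ** (2**s)
    for _ in range(s):
        result = result @ result
    return result

def notears_h(w):
    """Return h(W) = tr(exp(W * W)) - d and its gradient exp(W * W)^T * 2W."""
    d = w.shape[0]
    e = expm(w * w)
    return float(np.trace(e) - d), e.T * 2 * w

w_dag = np.array([[0.0, 1.5, 0.0],
                  [0.0, 0.0, -2.0],
                  [0.0, 0.0, 0.0]])
w_cyc = np.array([[0.0, 1.0],
                  [1.0, 0.0]])
h_dag, _ = notears_h(w_dag)
h_cyc, grad_cyc = notears_h(w_cyc)
print(f"h(DAG) = {h_dag:.3g}, h(2-cycle) = {h_cyc:.4f}")
```

For the two-node cycle, exp(W ∘ W) has cosh(1) on its diagonal, so analytically h = 2 cosh(1) - 2 ≈ 1.086; any positive value signals a cycle that the optimizer is pushed to break.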
