Xionghao Ding

ESL-Bench: An Event-Driven Synthetic Longitudinal Benchmark for Health Agents

Apr 03, 2026

Abstract: Longitudinal health agents must reason across multi-source trajectories that combine continuous device streams, sparse clinical exams, and episodic life events. Evaluating them is hard: real-world data cannot be released at scale, and temporally grounded attribution questions seldom admit definitive answers without structured ground truth. We present ESL-Bench, an event-driven synthesis framework and benchmark providing 100 synthetic users, each with a 1- to 5-year trajectory comprising a health profile, a multi-phase narrative plan, daily device measurements, periodic exam records, and an event log with explicit per-indicator impact parameters. Each indicator follows a baseline stochastic process driven by discrete events with sigmoid-onset, exponential-decay kernels under saturation and projection constraints; a hybrid pipeline delegates sparse semantic artifacts to LLM-based planning and dense indicator dynamics to algorithmic simulation with hard physiological bounds. Each user is paired with 100 evaluation queries across five dimensions (Lookup, Trend, Comparison, Anomaly, Explanation), stratified into Easy, Medium, and Hard tiers, with all ground-truth answers programmatically computable from the recorded event-indicator relationships. Evaluating 13 methods spanning LLMs with tools, DB-native agents, and memory-augmented RAG, we find that DB agents (48-58%) substantially outperform memory-RAG baselines (30-38%), with the gap concentrated on Comparison and Explanation queries, where multi-hop reasoning and evidence attribution are required.
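The event-driven indicator dynamics described in this abstract can be illustrated with a small single-indicator simulation. This is a minimal sketch under stated assumptions, not ESL-Bench's implementation: the AR(1) baseline process, the tanh soft cap used for saturation, plain clipping as the projection onto physiological bounds, and all field and parameter names (`amplitude`, `onset`, `steepness`, `decay`, `cap`, `phi`, `sigma`) are hypothetical choices made for illustration.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Event:
    """One discrete event's impact on a single indicator (hypothetical fields)."""
    day: int          # day index on which the event starts
    amplitude: float  # signed impact scale, in the indicator's units
    onset: float      # days after `day` at which the sigmoid reaches half height
    steepness: float  # slope of the sigmoid onset (1/day)
    decay: float      # exponential decay time constant in days, must be > 0


def _sigmoid(x: float) -> float:
    # Numerically stable logistic function (avoids overflow in math.exp).
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def kernel(ev: Event, t: int) -> float:
    """Sigmoid-onset, exponential-decay response of one event at day t."""
    if t < ev.day:
        return 0.0  # an event has no effect before it starts
    dt = t - ev.day
    ramp = _sigmoid(ev.steepness * (dt - ev.onset))
    return ev.amplitude * ramp * math.exp(-dt / ev.decay)


def simulate(baseline: float, events: list[Event], days: int,
             lo: float, hi: float, cap: float,
             phi: float = 0.8, sigma: float = 1.0, seed: int = 0) -> list[float]:
    """Simulate one daily indicator series.

    Assumptions: AR(1) noise around a fixed baseline, additive event kernels,
    a tanh soft cap of magnitude `cap` (> 0) for saturation, and clipping to
    [lo, hi] as the projection onto hard physiological bounds.
    """
    rng = random.Random(seed)
    noise = 0.0
    series = []
    for t in range(days):
        noise = phi * noise + rng.gauss(0.0, sigma)  # baseline stochastic process
        raw = sum(kernel(ev, t) for ev in events)    # superpose all events
        effect = cap * math.tanh(raw / cap)          # saturation: |effect| <= cap
        value = baseline + noise + effect
        series.append(min(hi, max(lo, value)))       # projection onto hard bounds
    return series
```

For example, `simulate(62.0, [Event(day=10, amplitude=10.0, onset=2.0, steepness=2.0, decay=7.0)], days=90, lo=40.0, hi=200.0, cap=15.0)` would model a resting heart rate that rises over the few days after a hypothetical event on day 10 and then relaxes toward baseline. Because each event's kernel parameters are stored explicitly, its contribution on any day can be recomputed exactly with `kernel`, which is the kind of property that makes attribution ground truth programmatically computable.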

VGPN: Voice-Guided Pointing Robot Navigation for Humans

Apr 03, 2020

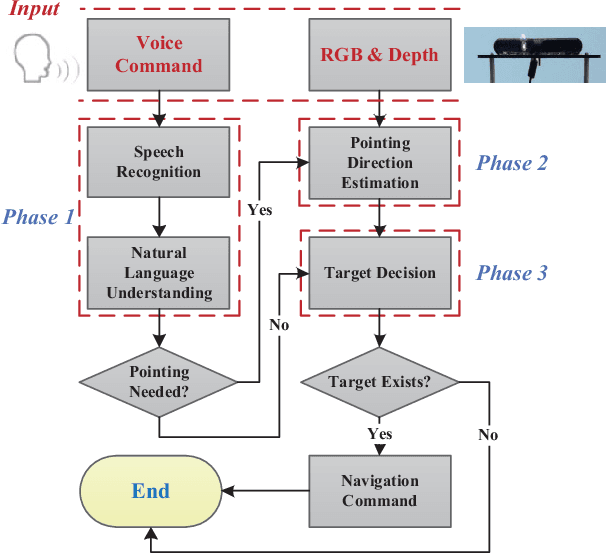

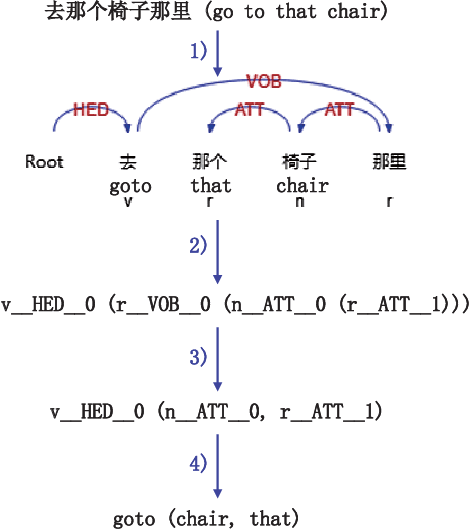

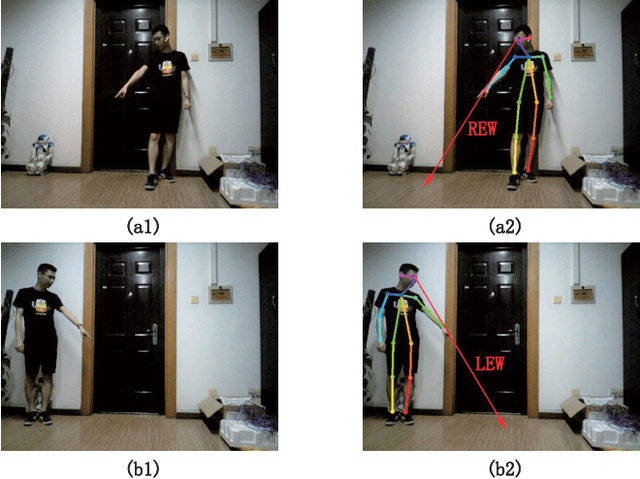

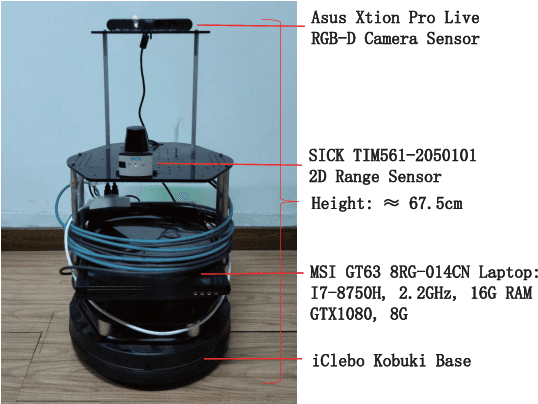

Abstract: Pointing gestures are widely used in robot navigation approaches nowadays. However, most approaches use only pointing gestures, which have two major limitations. First, they need to recognize pointing gestures continuously, which leads to long processing times and significant system overhead. Second, the user's pointing direction may be inaccurate, so the robot may go to an undesired place. To relieve these limitations, we propose a voice-guided pointing robot navigation approach named VGPN and implement its prototype on a wheeled robot, TurtleBot 2. VGPN recognizes a pointing gesture only if voice information is insufficient for navigation. VGPN also uses voice information as a supplementary channel to help determine the target position of the user's pointing gesture. In the evaluation, we compare VGPN to the pointing-only navigation approach. The results show that VGPN effectively reduces the processing time cost when a pointing gesture is unnecessary and improves user satisfaction with navigation accuracy.
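The two ideas in this abstract, running the gesture recognizer only when speech alone cannot identify the goal and using speech to disambiguate what the user is pointing at, can be sketched as a small decision function. This is a hypothetical illustration, not the VGPN prototype: the keyword lookup in `KNOWN_PLACES`, the `Obj` scene representation, the `get_pointing` callback returning an origin and direction, and the smallest-angle selection rule are all assumptions.

```python
import math
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple

Point = Tuple[float, float]

# Hypothetical map of named places the robot can reach from speech alone.
KNOWN_PLACES = {"kitchen": (5.0, 2.0), "door": (0.0, 8.0)}


@dataclass
class Obj:
    label: str  # object category detected in the scene (hypothetical)
    x: float
    y: float


def choose_target(utterance: str, objects: Sequence[Obj],
                  get_pointing: Callable[[], Tuple[Point, Point]]) -> Point:
    """Return a navigation goal, invoking pointing recognition only if needed.

    Raises ValueError (from min) if pointing is required but `objects` is empty.
    """
    words = utterance.lower().split()
    # 1. Voice alone is sufficient: skip gesture recognition entirely.
    for w in words:
        if w in KNOWN_PLACES:
            return KNOWN_PLACES[w]
    # 2. Voice is insufficient: only now run the (costly) pointing recognizer.
    origin, direction = get_pointing()
    ray = math.atan2(direction[1], direction[0])

    def deviation(o: Obj) -> float:
        # Absolute angle between the pointing ray and the direction to object o,
        # wrapped into [0, pi].
        a = math.atan2(o.y - origin[1], o.x - origin[0]) - ray
        return abs(math.atan2(math.sin(a), math.cos(a)))

    # 3. Voice as a supplementary channel: prefer objects named in the utterance.
    named = [o for o in objects if o.label in words]
    candidates = named or list(objects)
    best = min(candidates, key=deviation)
    return (best.x, best.y)
```

Passing the recognizer as a callback makes the saving explicit: for "go to the kitchen", `get_pointing` is never called, while for "go to that chair" the spoken label can override a less accurate pointing direction that happens to lie closer to another object.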
