Terry R. Payne

The AnIML Ontology: Enabling Semantic Interoperability for Large-Scale Experimental Data in Interconnected Scientific Labs

Apr 02, 2026

Abstract: Achieving semantic interoperability across heterogeneous experimental data systems remains a major barrier to data-driven scientific discovery. The Analytical Information Markup Language (AnIML), a flexible XML-based standard for analytical chemistry and biology, is increasingly used in industrial R&D labs for managing and exchanging experimental data. However, the expressivity of the XML schema permits divergent interpretations across stakeholders, introducing inconsistencies that undermine the interoperability the AnIML schema was designed to support. In this paper, we present the AnIML Ontology, an OWL 2 ontology that formalises the semantics of AnIML and aligns it with the Allotrope Data Format to support future cross-system and cross-lab interoperability. The ontology was developed using an expert-in-the-loop approach combining LLM-assisted requirement elicitation with collaborative ontology engineering. We validate the ontology through a multi-layered approach: data-driven transformation of real-world AnIML files into knowledge graphs, competency question verification via SPARQL, and a novel validation protocol based on adversarial negative competency questions mapped to established ontological anti-patterns and enforced via SHACL constraints.

IDEA2: Expert-in-the-loop competency question elicitation for collaborative ontology engineering

Apr 01, 2026

Abstract: Competency question (CQ) elicitation represents a critical but resource-intensive bottleneck in ontology engineering. This foundational phase is often hampered by the communication gap between domain experts, who possess the necessary knowledge, and ontology engineers, who formalise it. This paper introduces IDEA2, a novel, semi-automated workflow that integrates Large Language Models (LLMs) within a collaborative, expert-in-the-loop process to address this challenge. The methodology is characterised by a core iterative loop: an initial LLM-based extraction of CQs from requirement documents, a co-creational review and feedback phase by domain experts on an accessible collaborative platform, and an iterative, feedback-driven reformulation of rejected CQs by an LLM until consensus is achieved. To ensure transparency and reproducibility, the entire lifecycle of each CQ is tracked using a provenance model that captures the full lineage of edits, anonymised feedback, and generation parameters. The workflow was validated in 2 real-world scenarios (scientific data, cultural heritage), demonstrating that IDEA2 can accelerate the requirements engineering process, improve the acceptance and relevance of the resulting CQs, and exhibit high usability and effectiveness among domain experts. We release all code and experiments at https://github.com/KE-UniLiv/IDEA2

Evaluating the Fitness of Ontologies for the Task of Question Generation

Apr 08, 2025

Abstract: Ontology-based question generation is an important application of semantic-aware systems that enables the creation of large question banks for diverse learning environments. The effectiveness of these systems, both in terms of the calibre and cognitive difficulty of the resulting questions, depends heavily on the quality and modelling approach of the underlying ontologies, making it crucial to assess their fitness for this task. To date, there has been no comprehensive investigation into the specific ontology aspects or characteristics that affect the question generation process. Therefore, this paper proposes a set of requirements and task-specific metrics for evaluating the fitness of ontologies for question generation tasks in pedagogical settings. Using the ROMEO methodology, a structured framework for deriving task-specific metrics, an expert-based approach is employed to assess the performance of various ontologies in Automatic Question Generation (AQG) tasks, which is then evaluated over a set of ontologies. Our results demonstrate that ontology characteristics significantly impact the effectiveness of question generation, with different ontologies exhibiting varying performance levels. This highlights the importance of assessing ontology quality with respect to AQG tasks.

Unlocking Your Sales Insights: Advanced XGBoost Forecasting Models for Amazon Products

Nov 01, 2024

Abstract: One of the important factors of profitability is the volume of transactions. An accurate prediction of the future transaction volume becomes a pivotal factor in shaping corporate operations and decision-making processes. E-commerce has presented manufacturers with convenient sales channels through which sales can increase dramatically. In this study, we introduce a solution that leverages the XGBoost model to tackle the challenge of predicting sales for consumer electronics products on the Amazon platform. Initially, our attempts to solely predict sales volume yielded unsatisfactory results. However, by replacing the sales volume data with sales range values, we achieved satisfactory accuracy with our model. Furthermore, our results indicate that XGBoost exhibits superior predictive performance compared to traditional models.

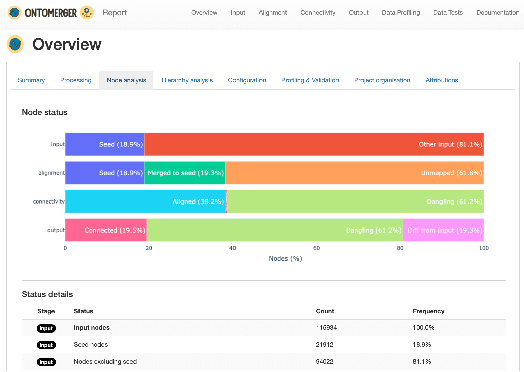

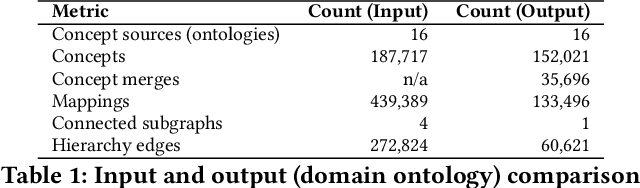

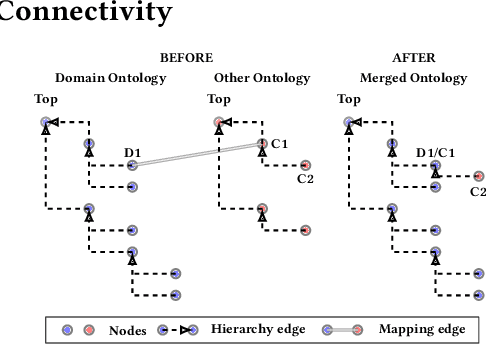

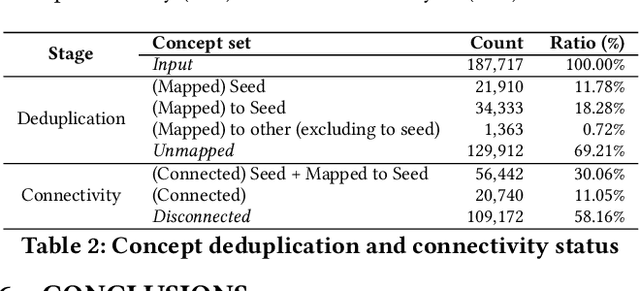

OntoMerger: An Ontology Integration Library for Deduplicating and Connecting Knowledge Graph Nodes

Jun 05, 2022

Abstract: Duplication of nodes is a common problem encountered when building knowledge graphs (KGs) from heterogeneous datasets, where it is crucial to be able to merge nodes having the same meaning. OntoMerger is a Python ontology integration library whose functionality is to deduplicate KG nodes. Our approach takes a set of KG nodes, mappings and disconnected hierarchies and generates a set of merged nodes together with a connected hierarchy. In addition, the library provides analytic and data testing functionalities that can be used to fine-tune the inputs, further reducing duplication, and to increase connectivity of the output graph. OntoMerger can be applied to a wide variety of ontologies and KGs. In this paper we introduce OntoMerger and illustrate its functionality on a real-world biomedical KG.
