Sofia Vallecorsa

Quantum anomaly detection in the latent space of proton collision events at the LHC

Jan 25, 2023Abstract:We propose a new strategy for anomaly detection at the LHC based on unsupervised quantum machine learning algorithms. To accommodate the constraints on the problem size dictated by the limitations of current quantum hardware we develop a classical convolutional autoencoder. The designed quantum anomaly detection models, namely an unsupervised kernel machine and two clustering algorithms, are trained to find new-physics events in the latent representation of LHC data produced by the autoencoder. The performance of the quantum algorithms is benchmarked against classical counterparts on different new-physics scenarios and its dependence on the dimensionality of the latent space and the size of the training dataset is studied. For kernel-based anomaly detection, we identify a regime where the quantum model significantly outperforms its classical counterpart. An instance of the kernel machine is implemented on a quantum computer to verify its suitability for available hardware. We demonstrate that the observed consistent performance advantage is related to the inherent quantum properties of the circuit used.

The Quantum Path Kernel: a Generalized Quantum Neural Tangent Kernel for Deep Quantum Machine Learning

Dec 22, 2022

Abstract:Building a quantum analog of classical deep neural networks represents a fundamental challenge in quantum computing. A key issue is how to address the inherent non-linearity of classical deep learning, a problem in the quantum domain due to the fact that the composition of an arbitrary number of quantum gates, consisting of a series of sequential unitary transformations, is intrinsically linear. This problem has been variously approached in the literature, principally via the introduction of measurements between layers of unitary transformations. In this paper, we introduce the Quantum Path Kernel, a formulation of quantum machine learning capable of replicating those aspects of deep machine learning typically associated with superior generalization performance in the classical domain, specifically, hierarchical feature learning. Our approach generalizes the notion of Quantum Neural Tangent Kernel, which has been used to study the dynamics of classical and quantum machine learning models. The Quantum Path Kernel exploits the parameter trajectory, i.e. the curve delineated by model parameters as they evolve during training, enabling the representation of differential layer-wise convergence behaviors, or the formation of hierarchical parametric dependencies, in terms of their manifestation in the gradient space of the predictor function. We evaluate our approach with respect to variants of the classification of Gaussian XOR mixtures - an artificial but emblematic problem that intrinsically requires multilevel learning in order to achieve optimal class separation.

Conditional Progressive Generative Adversarial Network for satellite image generation

Nov 28, 2022

Abstract:Image generation and image completion are rapidly evolving fields, thanks to machine learning algorithms that are able to realistically replace missing pixels. However, generating large high resolution images, with a large level of details, presents important computational challenges. In this work, we formulate the image generation task as completion of an image where one out of three corners is missing. We then extend this approach to iteratively build larger images with the same level of detail. Our goal is to obtain a scalable methodology to generate high resolution samples typically found in satellite imagery data sets. We introduce a conditional progressive Generative Adversarial Networks (GAN), that generates the missing tile in an image, using as input three initial adjacent tiles encoded in a latent vector by a Wasserstein auto-encoder. We focus on a set of images used by the United Nations Satellite Centre (UNOSAT) to train flood detection tools, and validate the quality of synthetic images in a realistic setup.

Running the Dual-PQC GAN on noisy simulators and real quantum hardware

May 30, 2022

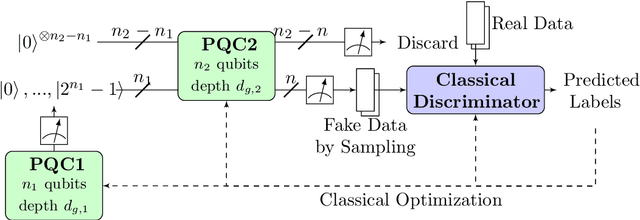

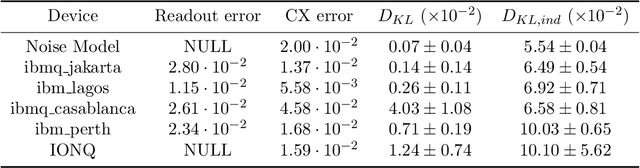

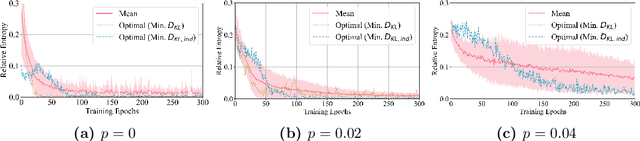

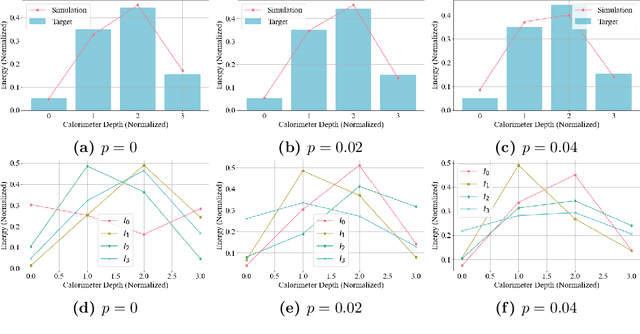

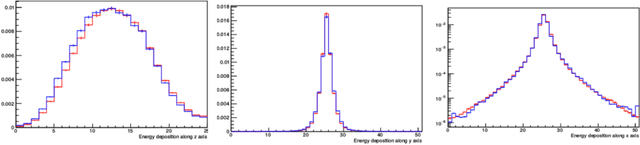

Abstract:In an earlier work, we introduced dual-Parameterized Quantum Circuit (PQC) Generative Adversarial Networks (GAN), an advanced prototype of a quantum GAN. We applied the model on a realistic High-Energy Physics (HEP) use case: the exact theoretical simulation of a calorimeter response with a reduced problem size. This paper explores the dual- PQC GAN for a more practical usage by testing its performance in the presence of different types of quantum noise, which are the major obstacles to overcome for successful deployment using near-term quantum devices. The results propose the possibility of running the model on current real hardware, but improvements are still required in some areas.

Conditional Born machine for Monte Carlo events generation

May 16, 2022

Abstract:Generative modeling is a promising task for near-term quantum devices, which can use the stochastic nature of quantum measurements as random source. So called Born machines are purely quantum models and promise to generate probability distributions in a quantum way, inaccessible to classical computers. This paper presents an application of Born machines to Monte Carlo simulations and extends their reach to multivariate and conditional distributions. Models are run on (noisy) simulators and IBM Quantum superconducting quantum hardware. More specifically, Born machines are used to generate muonic force carriers (MFC) events resulting from scattering processes between muons and the detector material in high-energy-physics colliders experiments. MFCs are bosons appearing in beyond the standard model theoretical frameworks, which are candidates for dark matter. Empirical evidences suggest that Born machines can reproduce the underlying distribution of datasets coming from Monte Carlo simulations, and are competitive with classical machine learning-based generative models of similar complexity.

Quantum neural networks force fields generation

Mar 09, 2022

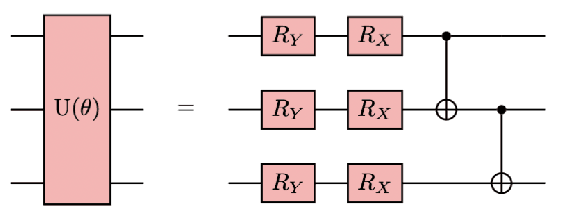

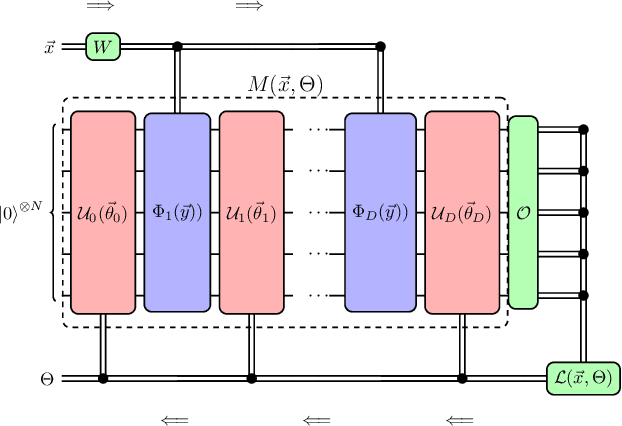

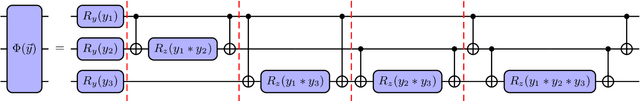

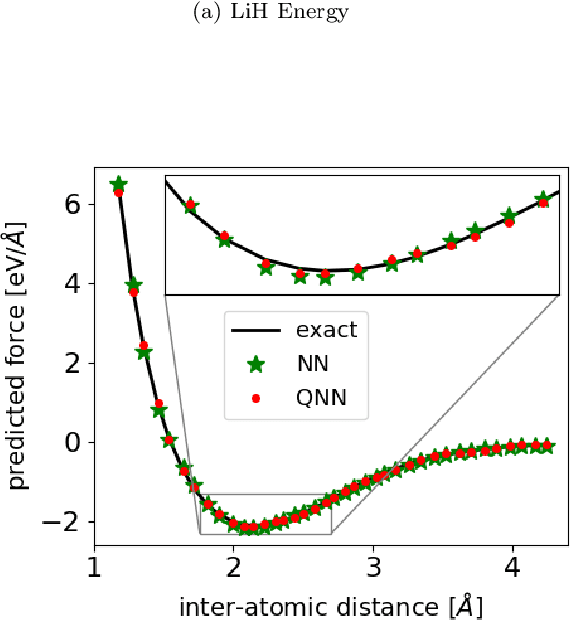

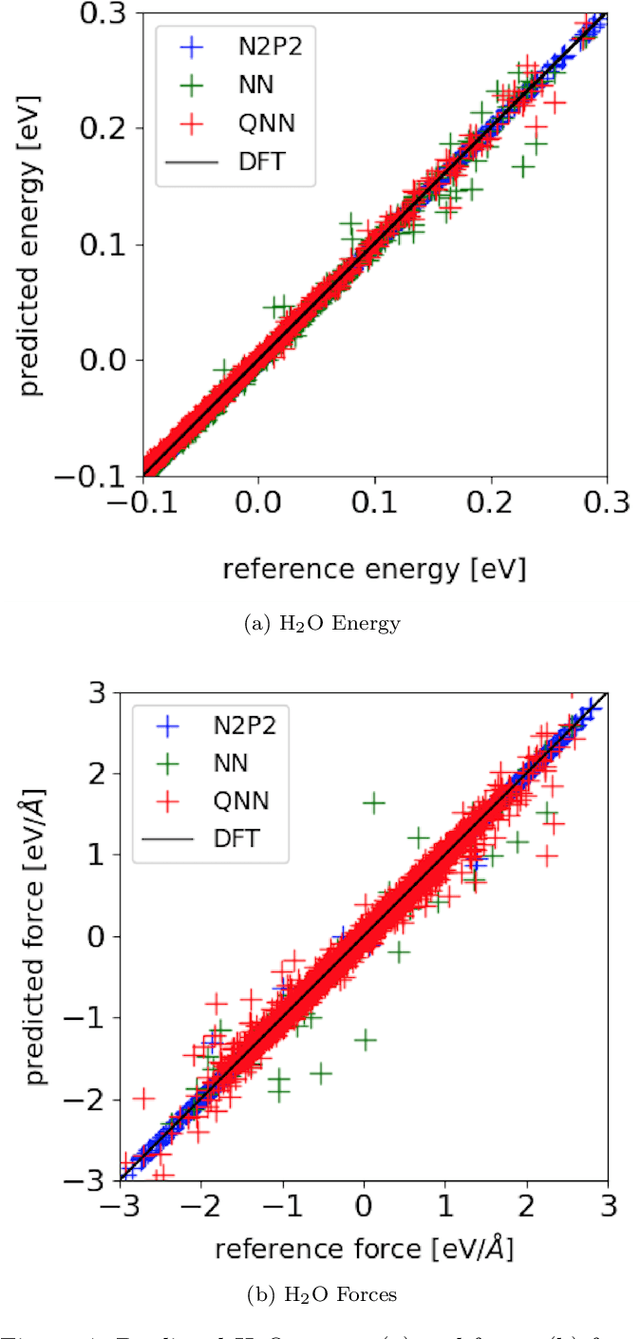

Abstract:Accurate molecular force fields are of paramount importance for the efficient implementation of molecular dynamics techniques at large scales. In the last decade, machine learning methods have demonstrated impressive performances in predicting accurate values for energy and forces when trained on finite size ensembles generated with ab initio techniques. At the same time, quantum computers have recently started to offer new viable computational paradigms to tackle such problems. On the one hand, quantum algorithms may notably be used to extend the reach of electronic structure calculations. On the other hand, quantum machine learning is also emerging as an alternative and promising path to quantum advantage. Here we follow this second route and establish a direct connection between classical and quantum solutions for learning neural network potentials. To this end, we design a quantum neural network architecture and apply it successfully to different molecules of growing complexity. The quantum models exhibit larger effective dimension with respect to classical counterparts and can reach competitive performances, thus pointing towards potential quantum advantages in natural science applications via quantum machine learning.

Dual-Tasks Siamese Transformer Framework for Building Damage Assessment

Jan 26, 2022

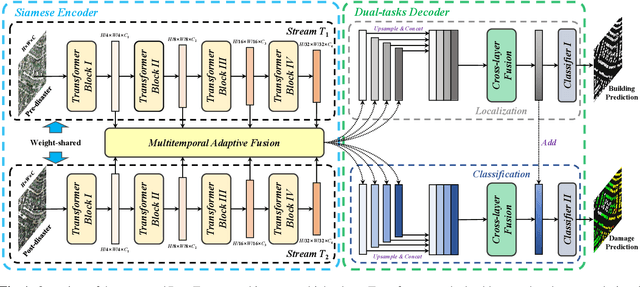

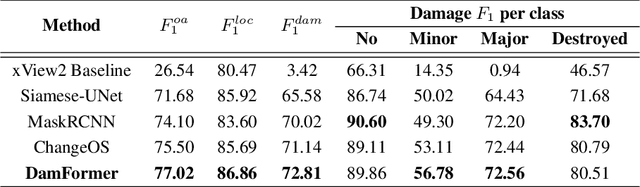

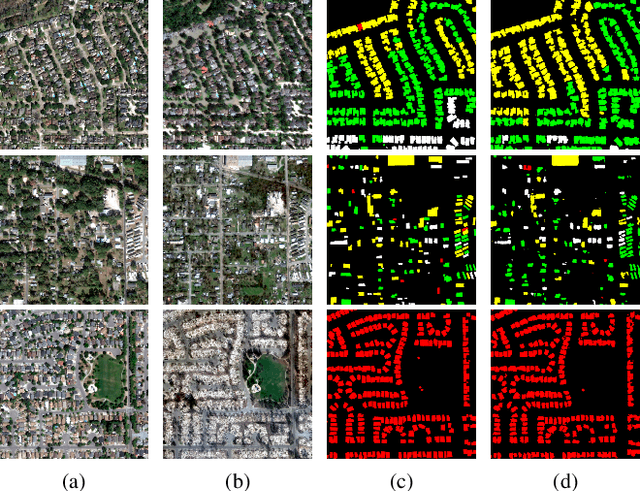

Abstract:Accurate and fine-grained information about the extent of damage to buildings is essential for humanitarian relief and disaster response. However, as the most commonly used architecture in remote sensing interpretation tasks, Convolutional Neural Networks (CNNs) have limited ability to model the non-local relationship between pixels. Recently, Transformer architecture first proposed for modeling long-range dependency in natural language processing has shown promising results in computer vision tasks. Considering the frontier advances of Transformer architecture in the computer vision field, in this paper, we present the first attempt at designing a Transformer-based damage assessment architecture (DamFormer). In DamFormer, a siamese Transformer encoder is first constructed to extract non-local and representative deep features from input multitemporal image-pairs. Then, a multitemporal fusion module is designed to fuse information for downstream tasks. Finally, a lightweight dual-tasks decoder aggregates multi-level features for final prediction. To the best of our knowledge, it is the first time that such a deep Transformer-based network is proposed for multitemporal remote sensing interpretation tasks. The experimental results on the large-scale damage assessment dataset xBD demonstrate the potential of the Transformer-based architecture.

Accelerating GAN training using highly parallel hardware on public cloud

Nov 08, 2021

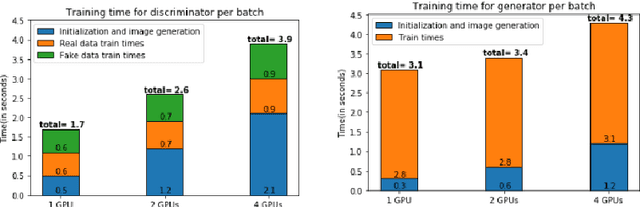

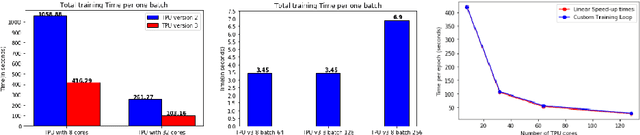

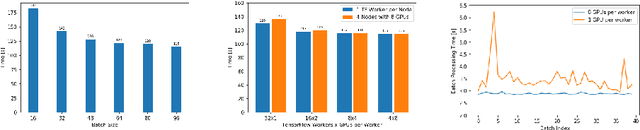

Abstract:With the increasing number of Machine and Deep Learning applications in High Energy Physics, easy access to dedicated infrastructure represents a requirement for fast and efficient R&D. This work explores different types of cloud services to train a Generative Adversarial Network (GAN) in a parallel environment, using Tensorflow data parallel strategy. More specifically, we parallelize the training process on multiple GPUs and Google Tensor Processing Units (TPU) and we compare two algorithms: the TensorFlow built-in logic and a custom loop, optimised to have higher control of the elements assigned to each GPU worker or TPU core. The quality of the generated data is compared to Monte Carlo simulation. Linear speed-up of the training process is obtained, while retaining most of the performance in terms of physics results. Additionally, we benchmark the aforementioned approaches, at scale, over multiple GPU nodes, deploying the training process on different public cloud providers, seeking for overall efficiency and cost-effectiveness. The combination of data science, cloud deployment options and associated economics allows to burst out heterogeneously, exploring the full potential of cloud-based services.

Hybrid Quantum Classical Graph Neural Networks for Particle Track Reconstruction

Sep 26, 2021

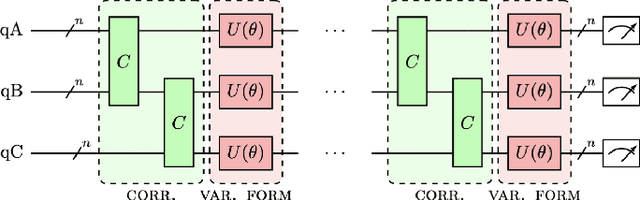

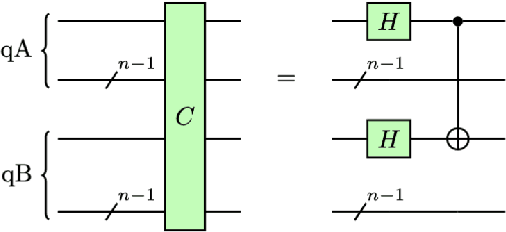

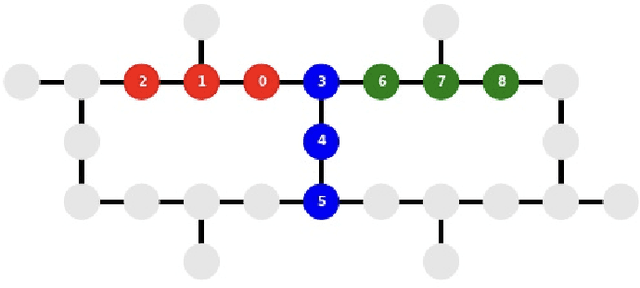

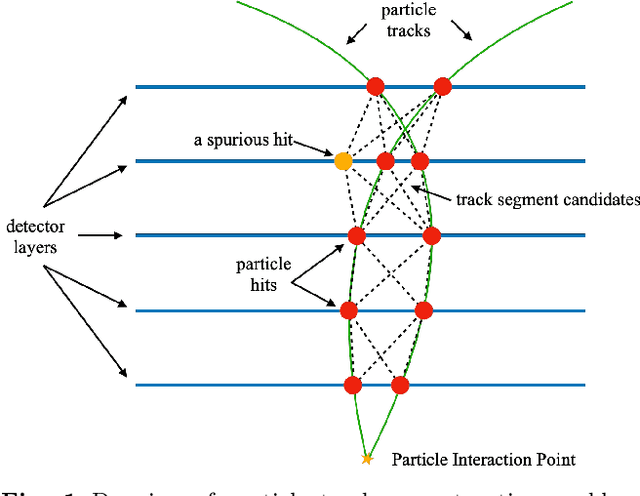

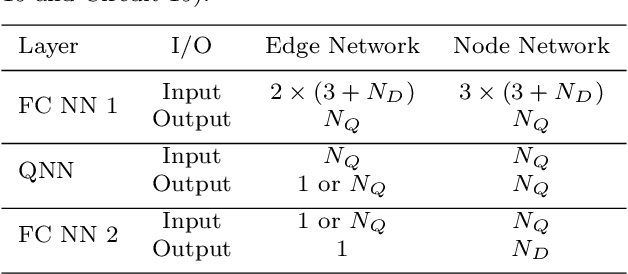

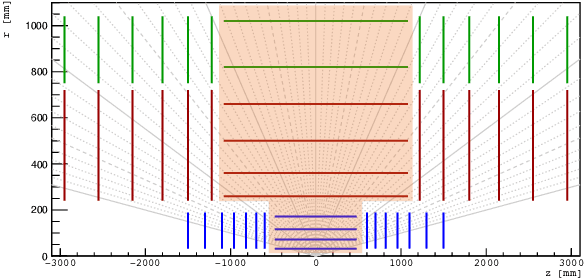

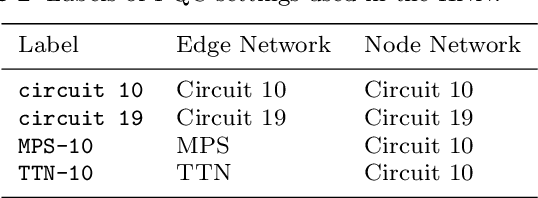

Abstract:The Large Hadron Collider (LHC) at the European Organisation for Nuclear Research (CERN) will be upgraded to further increase the instantaneous rate of particle collisions (luminosity) and become the High Luminosity LHC (HL-LHC). This increase in luminosity will significantly increase the number of particles interacting with the detector. The interaction of particles with a detector is referred to as "hit". The HL-LHC will yield many more detector hits, which will pose a combinatorial challenge by using reconstruction algorithms to determine particle trajectories from those hits. This work explores the possibility of converting a novel Graph Neural Network model, that can optimally take into account the sparse nature of the tracking detector data and their complex geometry, to a Hybrid Quantum-Classical Graph Neural Network that benefits from using Variational Quantum layers. We show that this hybrid model can perform similar to the classical approach. Also, we explore Parametrized Quantum Circuits (PQC) with different expressibility and entangling capacities, and compare their training performance in order to quantify the expected benefits. These results can be used to build a future road map to further develop circuit based Hybrid Quantum-Classical Graph Neural Networks.

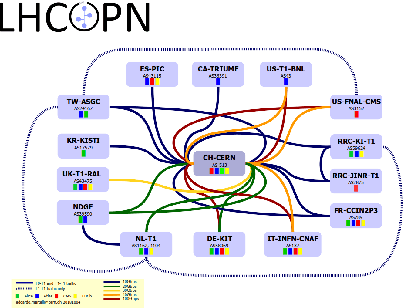

Convolutional LSTM models to estimate network traffic

Jul 06, 2021

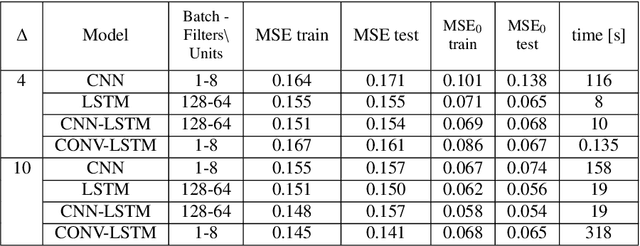

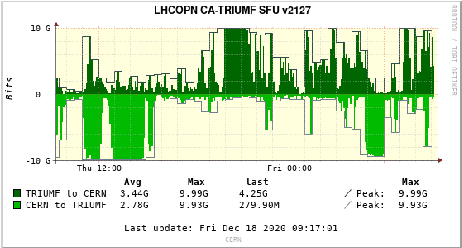

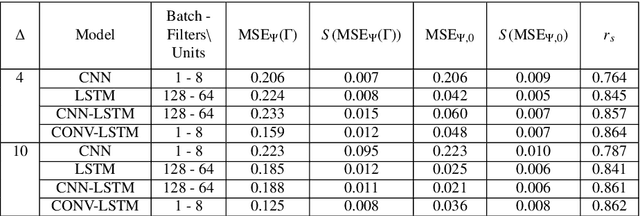

Abstract:Network utilisation efficiency can, at least in principle, often be improved by dynamically re-configuring routing policies to better distribute on-going large data transfers. Unfortunately, the information necessary to decide on an appropriate reconfiguration - details of on-going and upcoming data transfers such as their source and destination and, most importantly, their volume and duration - is usually lacking. Fortunately, the increased use of scheduled transfer services, such as FTS, makes it possible to collect the necessary information. However, the mere detection and characterisation of larger transfers is not sufficient to predict with confidence the likelihood a network link will become overloaded. In this paper we present the use of LSTM-based models (CNN-LSTM and Conv-LSTM) to effectively estimate future network traffic and so provide a solid basis for formulating a sensible network configuration plan.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge