Daniel Dobos

Design Patterns for Securing LLM Agents against Prompt Injections

Jun 11, 2025

Abstract: As AI agents powered by Large Language Models (LLMs) become increasingly versatile and capable of addressing a broad spectrum of tasks, ensuring their security has become a critical challenge. Among the most pressing threats are prompt injection attacks, which exploit the agent's reliance on natural language inputs; this threat is especially dangerous when agents are granted tool access or handle sensitive information. In this work, we propose a set of principled design patterns for building AI agents with provable resistance to prompt injection. We systematically analyze these patterns, discuss their trade-offs in terms of utility and security, and illustrate their real-world applicability through a series of case studies.

Hybrid Quantum Classical Graph Neural Networks for Particle Track Reconstruction

Sep 26, 2021

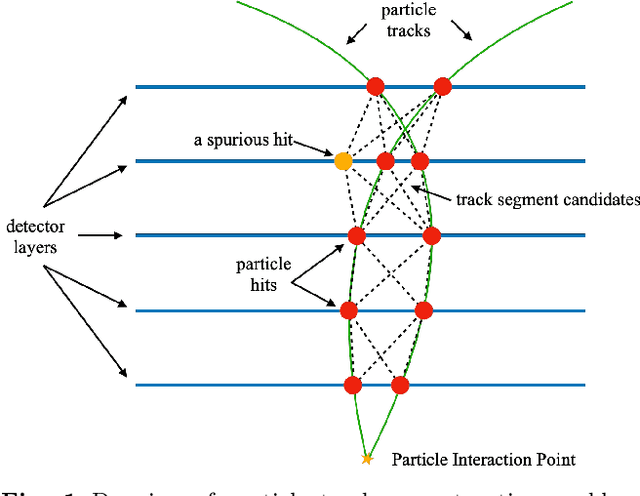

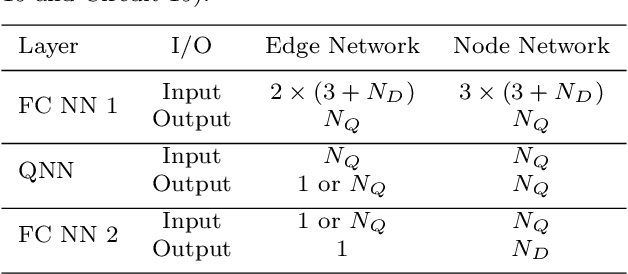

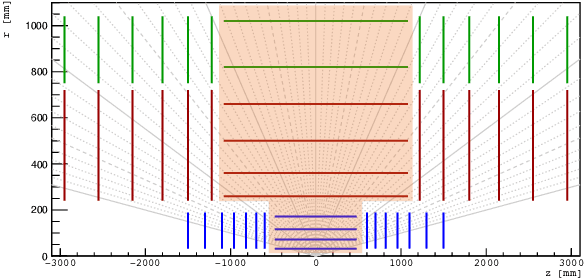

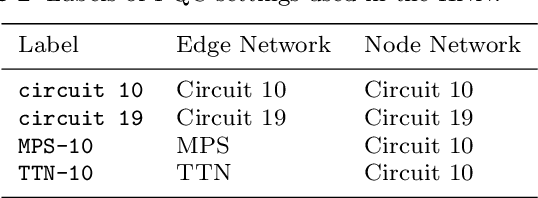

Abstract: The Large Hadron Collider (LHC) at the European Organisation for Nuclear Research (CERN) will be upgraded to further increase the instantaneous rate of particle collisions (luminosity) and become the High Luminosity LHC (HL-LHC). This increase in luminosity will significantly increase the number of particles interacting with the detector. The interaction of a particle with the detector is referred to as a "hit". The HL-LHC will yield many more detector hits, which will pose a combinatorial challenge for the reconstruction algorithms that determine particle trajectories from those hits. This work explores the possibility of converting a novel Graph Neural Network model, which can optimally take into account the sparse nature of the tracking detector data and their complex geometry, into a Hybrid Quantum-Classical Graph Neural Network that benefits from using Variational Quantum layers. We show that this hybrid model can perform similarly to the classical approach. We also explore Parametrized Quantum Circuits (PQC) with different expressibility and entangling capacities, and compare their training performance in order to quantify the expected benefits. These results can be used to build a future road map for further developing circuit-based Hybrid Quantum-Classical Graph Neural Networks.
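To make the term "variational quantum layer" more concrete, here is a minimal pure-Python statevector sketch of a toy parametrized quantum circuit. It is not the circuit used in this work. It assumes one common construction: input features angle-encoded with RY rotations, one trainable RY rotation per qubit, and a chain of CNOTs as the entangling block, with the Pauli-Z expectation value of each qubit read out as the layer's output. All function names (`ry`, `apply_single`, `apply_cnot`, `expval_z`, `variational_layer`) are hypothetical and chosen only for illustration.

```python
import math
from typing import List, Sequence

State = List[complex]  # statevector of 2**n amplitudes; qubit 0 is the most significant bit


def ry(theta: float) -> List[List[float]]:
    """Single-qubit RY(theta) rotation matrix."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [[c, -s], [s, c]]


def apply_single(state: State, gate, qubit: int, n_qubits: int) -> State:
    """Apply a 2x2 gate to one qubit of an n-qubit statevector."""
    mask = 1 << (n_qubits - 1 - qubit)
    out = list(state)
    for i in range(len(state)):
        if (i & mask) == 0:  # i has this qubit in |0>; j is the partner index with it in |1>
            j = i | mask
            a0, a1 = state[i], state[j]
            out[i] = gate[0][0] * a0 + gate[0][1] * a1
            out[j] = gate[1][0] * a0 + gate[1][1] * a1
    return out


def apply_cnot(state: State, control: int, target: int, n_qubits: int) -> State:
    """Flip the target qubit on every basis state where the control qubit is |1>."""
    cmask = 1 << (n_qubits - 1 - control)
    tmask = 1 << (n_qubits - 1 - target)
    out = list(state)
    for i in range(len(state)):
        if (i & cmask) and not (i & tmask):
            j = i | tmask
            out[i], out[j] = state[j], state[i]
    return out


def expval_z(state: State, qubit: int, n_qubits: int) -> float:
    """Expectation value of Pauli-Z on one qubit (+1 for |0>, -1 for |1>)."""
    mask = 1 << (n_qubits - 1 - qubit)
    return sum(abs(a) ** 2 * (1.0 if (i & mask) == 0 else -1.0) for i, a in enumerate(state))


def variational_layer(features: Sequence[float], weights: Sequence[float]) -> List[float]:
    """Toy PQC: angle encoding, trainable RY rotations, CNOT chain, Z readout."""
    if len(features) != len(weights):
        raise ValueError("expected one trainable weight per qubit")
    n = len(features)
    state: State = [0j] * (2 ** n)
    state[0] = 1 + 0j  # start in |00...0>
    for q, x in enumerate(features):  # encode classical inputs as rotation angles
        state = apply_single(state, ry(x), q, n)
    for q, w in enumerate(weights):  # trainable rotations (the "variational" parameters)
        state = apply_single(state, ry(w), q, n)
    for q in range(n - 1):  # nearest-neighbour CNOT chain creates entanglement
        state = apply_cnot(state, q, q + 1, n)
    return [expval_z(state, q, n) for q in range(n)]


if __name__ == "__main__":
    # A pi/2 rotation on qubit 0 followed by the CNOT yields a Bell state, so both <Z> values are ~0.
    print(variational_layer([math.pi / 2, 0.0], [0.0, 0.0]))
```

In a hybrid model, outputs like these could stand in for a classical dense layer, with the weights trained by a classical optimizer. Varying the rotation gates and the entangling pattern is one way to obtain circuits with different expressibility and entangling capacity.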
