Roman Andriushchenko

Shields to Guarantee Probabilistic Safety in MDPs

May 11, 2026Abstract:Shielding is a prominent model-based technique to ensure safety of autonomous agents. Classical shielding aims to ensure that nothing bad ever happens and comes with strong guarantees about safety and maximal permissiveness. However, shielding systems for probabilistic safety, where something bad is allowed to happen with an acceptable probability, has proven to be more intricate. This paper presents a formal framework that conservatively extends classical shields to probabilistic safety. In this framework, we (i) demonstrate the impossibility of preserving the strong guarantees on safety and permissiveness, (ii) provide natural shields with weaker guarantees, and (iii) introduce offline and online shield constructions ensuring strong safety guarantees. The empirical evaluation highlights the practical advantages of the new shields, as well as their computational feasibility.

\textsc{rfPG}: Robust Finite-Memory Policy Gradients for Hidden-Model POMDPs

May 14, 2025Abstract:Partially observable Markov decision processes (POMDPs) model specific environments in sequential decision-making under uncertainty. Critically, optimal policies for POMDPs may not be robust against perturbations in the environment. Hidden-model POMDPs (HM-POMDPs) capture sets of different environment models, that is, POMDPs with a shared action and observation space. The intuition is that the true model is hidden among a set of potential models, and it is unknown which model will be the environment at execution time. A policy is robust for a given HM-POMDP if it achieves sufficient performance for each of its POMDPs. We compute such robust policies by combining two orthogonal techniques: (1) a deductive formal verification technique that supports tractable robust policy evaluation by computing a worst-case POMDP within the HM-POMDP and (2) subgradient ascent to optimize the candidate policy for a worst-case POMDP. The empirical evaluation shows that, compared to various baselines, our approach (1) produces policies that are more robust and generalize better to unseen POMDPs and (2) scales to HM-POMDPs that consist of over a hundred thousand environments.

Decentralized Planning Using Probabilistic Hyperproperties

Feb 19, 2025

Abstract:Multi-agent planning under stochastic dynamics is usually formalised using decentralized (partially observable) Markov decision processes ( MDPs) and reachability or expected reward specifications. In this paper, we propose a different approach: we use an MDP describing how a single agent operates in an environment and probabilistic hyperproperties to capture desired temporal objectives for a set of decentralized agents operating in the environment. We extend existing approaches for model checking probabilistic hyperproperties to handle temporal formulae relating paths of different agents, thus requiring the self-composition between multiple MDPs. Using several case studies, we demonstrate that our approach provides a flexible and expressive framework to broaden the specification capabilities with respect to existing planning techniques. Additionally, we establish a close connection between a subclass of probabilistic hyperproperties and planning for a particular type of Dec-MDPs, for both of which we show undecidability. This lays the ground for the use of existing decentralized planning tools in the field of probabilistic hyperproperty verification.

Deductive Controller Synthesis for Probabilistic Hyperproperties

Jul 10, 2023Abstract:Probabilistic hyperproperties specify quantitative relations between the probabilities of reaching different target sets of states from different initial sets of states. This class of behavioral properties is suitable for capturing important security, privacy, and system-level requirements. We propose a new approach to solve the controller synthesis problem for Markov decision processes (MDPs) and probabilistic hyperproperties. Our specification language builds on top of the logic HyperPCTL and enhances it with structural constraints over the synthesized controllers. Our approach starts from a family of controllers represented symbolically and defined over the same copy of an MDP. We then introduce an abstraction refinement strategy that can relate multiple computation trees and that we employ to prune the search space deductively. The experimental evaluation demonstrates that the proposed approach considerably outperforms HyperProb, a state-of-the-art SMT-based model checking tool for HyperPCTL. Moreover, our approach is the first one that is able to effectively combine probabilistic hyperproperties with additional intra-controller constraints (e.g. partial observability) as well as inter-controller constraints (e.g. agreements on a common action).

Inductive Synthesis for Probabilistic Programs Reaches New Horizons

Jan 29, 2021

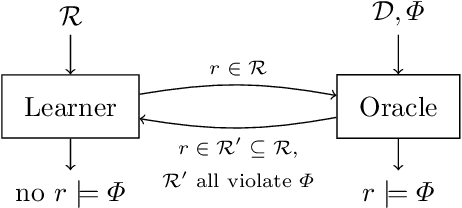

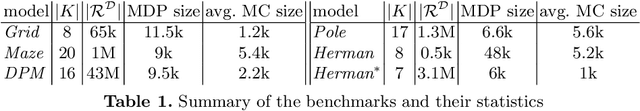

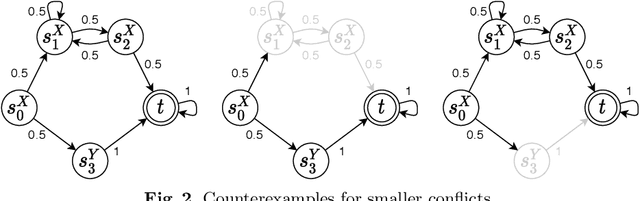

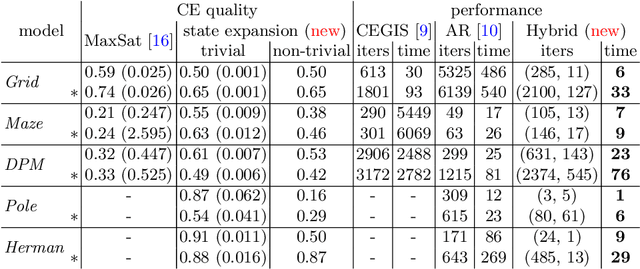

Abstract:This paper presents a novel method for the automated synthesis of probabilistic programs. The starting point is a program sketch representing a finite family of finite-state Markov chains with related but distinct topologies, and a PCTL specification. The method builds on a novel inductive oracle that greedily generates counter-examples (CEs) for violating programs and uses them to prune the family. These CEs leverage the semantics of the family in the form of bounds on its best- and worst-case behaviour provided by a deductive oracle using an MDP abstraction. The method further monitors the performance of the synthesis and adaptively switches between the inductive and deductive reasoning. Our experiments demonstrate that the novel CE construction provides a significantly faster and more effective pruning strategy leading to acceleration of the synthesis process on a wide range of benchmarks. For challenging problems, such as the synthesis of decentralized partially-observable controllers, we reduce the run-time from a day to minutes.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge