Richard Demo Souza

Active IoT User Detection in Near-Field with Location Information

Feb 23, 2026

Abstract:In this paper, we address active user detection (AUD) in near-field Internet of Things (IoT) networks by exploiting prior knowledge of users' locations. We consider a scenario where users are distributed in a semi-circular area within the Rayleigh distance of a multi-antenna base station (BS). We propose that the BS use location estimates of the users to reconstruct their line-of-sight (LoS) channel components, hence assisting the AUD process. For this, the BS combines these reconstructed channels with users' pilot sequences, enhancing the correlation between received signals and active users. We formulate the location-aided AUD as a convex optimization problem, solved via the alternating direction method of multipliers (ADMM). Our proposal has a higher computational complexity compared to the baseline ADMM approach where location information is not used. Moreover, the proposal requires location information of users, which can be readily provided if users are static, or inferred via established localization algorithms if they are mobile. Simulation results compare our proposal against the baseline across varying system parameters, such as number of users, pilot length and LoS component strength. We demonstrate that under perfect location estimation and strong LoS, our proposed method significantly outperforms the baseline. Furthermore, robustness analysis shows that performance gains persist under imperfect location estimation, provided the estimation error remains within bounds determined by the system parameters.
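For readers unfamiliar with the near-field boundary mentioned in the abstract above, the Rayleigh distance is commonly taken as 2D²/λ, where D is the array aperture and λ the wavelength. The sketch below is only an illustration of that textbook formula; the function names and the example array (half-wavelength spacing, 128 elements, 28 GHz) are assumptions and do not come from the paper.

```python
SPEED_OF_LIGHT_MPS = 299_792_458.0


def ula_aperture_m(num_antennas: int, spacing_m: float) -> float:
    """Aperture of a uniform linear array: distance between its end elements."""
    return (num_antennas - 1) * spacing_m


def rayleigh_distance_m(aperture_m: float, wavelength_m: float) -> float:
    """Classical near-field/far-field boundary, 2 * D^2 / lambda."""
    return 2.0 * aperture_m ** 2 / wavelength_m


if __name__ == "__main__":
    # Hypothetical example values, not taken from the paper.
    carrier_hz = 28e9
    wavelength = SPEED_OF_LIGHT_MPS / carrier_hz
    aperture = ula_aperture_m(num_antennas=128, spacing_m=wavelength / 2)
    print(f"Rayleigh distance: {rayleigh_distance_m(aperture, wavelength):.1f} m")
```

Users closer to the BS than this distance see a spherical rather than planar wavefront, which is why location (and not only angle) determines the LoS channel in this regime.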

Wireless Energy Transfer Beamforming Optimization for Intelligent Transmitting Surface

Jul 09, 2025

Abstract:Radio frequency (RF) wireless energy transfer (WET) is a promising technology for powering the growing ecosystem of Internet of Things (IoT) devices using power beacons (PBs). Recent research focuses on designing efficient PB architectures that can support numerous antennas. In this context, PBs equipped with intelligent surfaces present a promising approach, enabling physically large, reconfigurable arrays. Motivated by these advantages, this work aims to minimize the power consumption of a PB equipped with a passive intelligent transmitting surface (ITS) and a collocated digital beamforming-based feeder to charge multiple single-antenna devices. To model the PB's power consumption accurately, we consider power amplifier nonlinearities, ITS control power, and feeder-to-ITS air interface losses. The resulting optimization problem is highly nonlinear and nonconvex due to the high-power amplifier (HPA), the received power constraints at the devices, and the unit-modulus constraint imposed by the phase shifter configuration of the ITS. To tackle this issue, we apply successive convex approximation (SCA) to iteratively solve convex subproblems that jointly optimize the digital precoder and phase configuration. Given SCA's sensitivity to initialization, we propose an algorithm that ensures initialization feasibility while balancing convergence speed and solution quality. We compare the proposed ITS-equipped PB's power consumption against benchmark architectures featuring digital and hybrid analog-digital beamforming. Results demonstrate that the proposed architecture efficiently scales with the number of RF chains and ITS elements. We also show that nonuniform ITS power distribution influences beamforming and can shift a device between near- and far-field regions, even with a constant aperture.

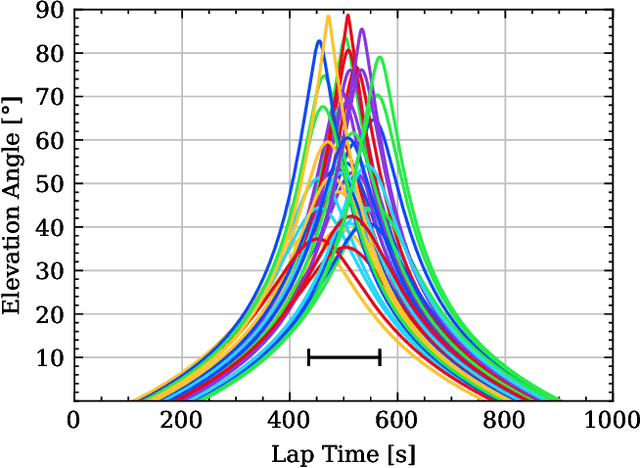

Doppler Estimation and Compensation Techniques in LoRa Direct-to-Satellite Communications

Jun 25, 2025

Abstract:Within the LPWAN framework, the LoRa modulation adopted by LoRaWAN technology has garnered significant interest as a connectivity solution for IoT applications due to its ability to offer low-cost, low-power, and long-range communications. One emerging use case of LoRa is DtS connectivity, which extends coverage to remote areas for supporting IoT operations. The satellite IoT industry mainly prefers LEO because it has lower launch costs and less path loss compared to Geostationary orbit. However, a major drawback of LEO satellites is the impact of the Doppler effect caused by their mobility. Earlier studies have confirmed that the Doppler effect significantly degrades the LoRa DtS performance. In this paper, we propose four frameworks for Doppler estimation and compensation in LoRa DtS connectivity and numerically compare the performance against the ideal scenario without the Doppler effect. Furthermore, we investigate the trade-offs among these frameworks by analyzing the interplay between the spreading factor and other key parameters related to the Doppler effect. The results provide insights into how to achieve robust LoRa configurations for DtS connectivity.
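To give a feel for why the spreading factor matters in the abstract above, the following sketch compares a first-order Doppler shift, f_d = (v/c)·f_c, with the LoRa frequency-bin spacing BW/2^SF. It is not one of the four frameworks proposed in the paper; the orbital speed, carrier frequency and bandwidth are assumed example values, and using the full orbital speed as the radial speed gives only an upper bound.

```python
SPEED_OF_LIGHT_MPS = 299_792_458.0


def doppler_shift_hz(radial_speed_mps: float, carrier_hz: float) -> float:
    """First-order Doppler shift for a given radial speed and carrier."""
    return radial_speed_mps / SPEED_OF_LIGHT_MPS * carrier_hz


def lora_bin_spacing_hz(bandwidth_hz: float, spreading_factor: int) -> float:
    """Spacing between adjacent LoRa symbol frequency bins, BW / 2^SF."""
    return bandwidth_hz / (2 ** spreading_factor)


if __name__ == "__main__":
    # Assumed example values: ~7.5 km/s LEO speed, 868 MHz carrier, 125 kHz BW.
    shift = doppler_shift_hz(7_500.0, 868e6)
    for sf in (7, 9, 12):
        bins = shift / lora_bin_spacing_hz(125e3, sf)
        print(f"SF{sf}: worst-case shift spans about {bins:.0f} bins")
```

Higher spreading factors have narrower bins and longer symbols, so the same frequency offset covers more bins, which is one reason estimation and compensation interact with the choice of spreading factor.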

Federated Learning-Distillation Alternation for Resource-Constrained IoT

May 26, 2025

Abstract:Federated learning (FL) faces significant challenges in Internet of Things (IoT) networks due to device limitations in energy and communication resources, especially when considering the large size of FL models. From an energy perspective, the challenge is aggravated if devices rely on energy harvesting (EH), as energy availability can vary significantly over time, influencing the average number of participating users in each iteration. Additionally, the transmission of large model updates is more susceptible to interference from uncorrelated background traffic in shared wireless environments. As an alternative, federated distillation (FD) reduces communication overhead and energy consumption by transmitting local model outputs, which are typically much smaller than the entire model used in FL. However, this comes at the cost of reduced model accuracy. Therefore, in this paper, we propose FL-distillation alternation (FLDA). In FLDA, devices alternate between FD and FL phases, balancing model information with lower communication overhead and energy consumption per iteration. We consider a multichannel slotted-ALOHA EH-IoT network subject to background traffic/interference. In such a scenario, FLDA demonstrates higher model accuracy than both FL and FD, and achieves faster convergence than FL. Moreover, FLDA achieves target accuracies saving up to 98% in energy consumption, while also being less sensitive to interference, both relative to FL.

Age of Information in Multi-Relay Networks with Maximum Age Scheduling

Mar 20, 2025

Abstract:We propose and evaluate age of information (AoI)-aware multiple access mechanisms for the Internet of Things (IoT) in multi-relay two-hop networks. The network considered comprises end devices (EDs) communicating with a set of relays in ALOHA fashion, with new information packets to be potentially transmitted every time slot. The relays, in turn, forward the collected packets to an access point (AP), the final destination of the information generated by the EDs. More specifically, in this work we investigate the performance of four age-aware algorithms that prioritize older packets to be transmitted, namely max-age matching (MAM), iterative max-age scheduling (IMAS), age-based delayed request (ABDR), and buffered ABDR (B-ABDR). The former two algorithms are adapted into the multi-relay setup from previous research, and achieve satisfactory average AoI and average peak AoI performance, at the expense of a significant amount of information exchange between the relays and the AP. The latter two algorithms are newly proposed to let relays decide which one(s) will transmit in a given time slot, requiring less signaling than the former algorithms. We provide an analytical formulation for the AoI lower bound performance, compare the performance of all algorithms in this set-up, and show that they approach the lower bound. The latter holds especially true for B-ABDR, which approaches the lower bound the most closely, tilting the scale in its favor, as it also requires far less signaling than MAM and IMAS.
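The two metrics named in the abstract above, average AoI and average peak AoI, can be illustrated with a small slotted-time sketch. This is a generic illustration under assumed conventions (age grows by one per slot; a delivery resets the age to the delivered packet's own age), not the MAM, IMAS, ABDR or B-ABDR algorithms; the function name and input format are hypothetical.

```python
from typing import Dict, Optional, Tuple


def aoi_metrics(
    deliveries: Dict[int, int], num_slots: int, initial_age: int = 0
) -> Tuple[float, Optional[float]]:
    """Return (average AoI, average peak AoI) at the destination.

    deliveries maps a slot index to the age of the packet delivered in
    that slot (slots since the packet was generated).
    """
    age = initial_age
    ages = []
    peaks = []
    for slot in range(num_slots):
        age += 1  # information gets one slot older
        if slot in deliveries:
            peaks.append(age)        # peak is the age just before the reset
            age = deliveries[slot]   # reset to the delivered packet's age
        ages.append(age)
    average = sum(ages) / len(ages)
    average_peak = sum(peaks) / len(peaks) if peaks else None
    return average, average_peak


if __name__ == "__main__":
    # Packets delivered in slots 2 and 5, each one slot old on arrival.
    print(aoi_metrics({2: 1, 5: 1}, num_slots=6))
```

Scheduling the source whose information is oldest tends to cut the largest peaks first, which is the intuition behind prioritizing older packets.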

Probabilistic Allocation of Payload Code Rate and Header Copies in LR-FHSS Networks

Oct 07, 2024

Abstract:We evaluate the performance of the LoRaWAN Long-Range Frequency Hopping Spread Spectrum (LR-FHSS) technique using a device-level probabilistic strategy for code rate and header replica allocation. Specifically, we investigate the effects of different header replica and code rate allocations at each end-device, guided by a probability distribution provided by the network server. As a benchmark, we compare the proposed strategy with the standardized LR-FHSS data rates DR8 and DR9. Our numerical results demonstrate that the proposed strategy consistently outperforms the DR8 and DR9 standard data rates across all considered scenarios. Notably, our findings reveal that the optimal distribution rarely includes data rate DR9, while data rate DR8 significantly contributes to the goodput and energy efficiency optimizations.

Reinforcement Learning-Aided NOMA Random Access: An AoI-Based Timeliness Perspective

Oct 07, 2024

Abstract:In this paper, we investigate the age-of-information (AoI) of a power domain non-orthogonal multiple access (NOMA) network, where multiple internet-of-things (IoT) devices transmit to a common gateway in a grant-free random fashion. More specifically, we consider a framed setup composed of multiple time slots, and resort to the $Q$-learning algorithm to properly define, in a distributed manner, the time slot and the power level each IoT device transmits within a frame. In the proposed AoI-QL-NOMA scheme, the $Q$-learning reward is adapted with the aim of minimizing the average AoI of the network, while only requiring a single feedback bit per time slot, on a per-frame basis. Our results show that AoI-QL-NOMA significantly improves the AoI performance compared to some recently proposed schemes, without significantly reducing the network throughput.
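As a rough illustration of how a device could learn a (time slot, power level) pair per frame, the sketch below uses a generic stateless Q-learning update, Q(a) ← Q(a) + α(r − Q(a)), with epsilon-greedy exploration. It is an assumption-laden sketch and not the AoI-QL-NOMA scheme itself: the reward is left as a plain number supplied by the caller, and all function names are hypothetical.

```python
import random
from typing import Dict, Tuple

Action = Tuple[int, int]  # (time slot index, power level index)


def make_q_table(num_slots: int, num_power_levels: int) -> Dict[Action, float]:
    """One Q-value per (slot, power level) pair, initialised to zero."""
    return {(s, p): 0.0 for s in range(num_slots) for p in range(num_power_levels)}


def choose_action(q: Dict[Action, float], epsilon: float, rng: random.Random) -> Action:
    """Epsilon-greedy choice: explore with probability epsilon, else exploit."""
    actions = sorted(q)
    if rng.random() < epsilon:
        return rng.choice(actions)
    return max(actions, key=lambda a: q[a])


def update(q: Dict[Action, float], action: Action, reward: float, alpha: float) -> None:
    """Stateless Q-learning update toward the observed reward."""
    q[action] += alpha * (reward - q[action])


if __name__ == "__main__":
    rng = random.Random(0)
    q = make_q_table(num_slots=4, num_power_levels=2)
    action = choose_action(q, epsilon=0.1, rng=rng)
    # Assumed reward: +1 if the gateway's feedback bit signals success, else -1.
    update(q, action, reward=1.0, alpha=0.5)
    print(action, q[action])
```

Each device keeps its own table and needs only the scalar feedback to update it, which is what makes this kind of scheme distributed.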

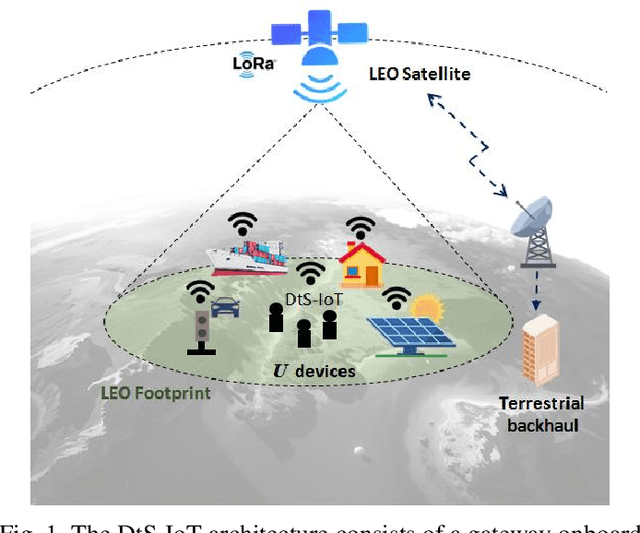

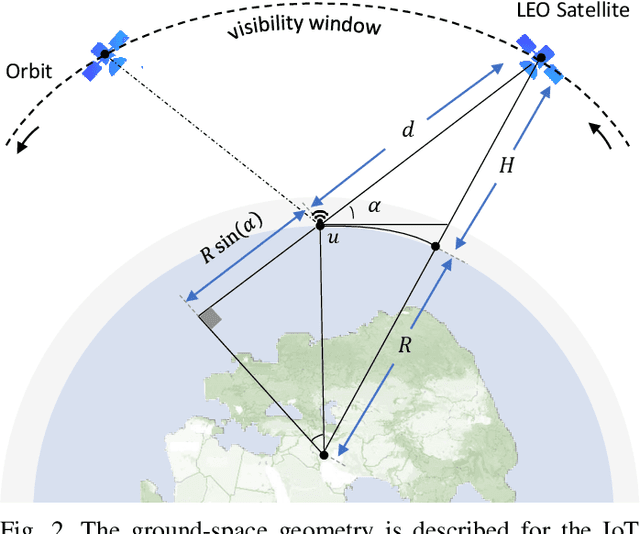

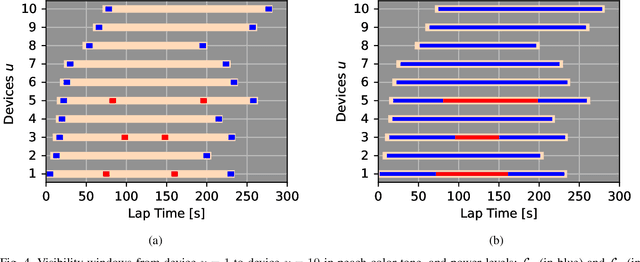

Non-Orthogonal Multiple-Access Strategies for Direct-to-Satellite IoT Networks

Sep 04, 2024

Abstract:Direct-to-Satellite IoT (DtS-IoT) has the potential to support multiple verticals, including agriculture, industry, smart cities, and environmental disaster prevention. This work introduces two novel DtS-IoT schemes using power domain Non-Orthogonal Multiple Access (NOMA) in the uplink with either fixed (FTP) or controlled (CTP) transmit power. We consider that the IoT devices use LoRa technology to transmit data packets to the satellite in orbit, equipped with a Successive Interference Cancellation (SIC)-enabled gateway. We also assume the IoT devices are empowered with a predictor of the satellite orbit. Using real geographic location and trajectory data, we evaluate the performance of the average number of successfully decoded transmissions, goodput (bytes/lap), and energy efficiency (bytes/Joule) as a function of the number of network devices. Numerical results show the trade-off between goodput and energy efficiency for both proposed schemes. Comparing FTP and CTP with regular ALOHA for 100 (600) devices, we find goodput improvements of 65% (29%) and 52% (101%), respectively. Notably, CTP effectively leverages transmission opportunities as the network size increases, outperforming the other strategies. Moreover, CTP shows the best performance in energy efficiency compared to FTP and ALOHA.

On the Spectral Efficiency of Movable and Rotary Access Points under Rician Fading

Aug 15, 2024

Abstract:Multi-User Multiple-Input Multiple-Output (MU-MIMO) is a pivotal technology in present-day wireless communication systems. In such systems, a base station or Access Point (AP) is equipped with multiple antenna elements and serves multiple active devices simultaneously. Nevertheless, most of the works evaluating the performance of MU-MIMO systems consider APs with static antenna arrays, that is, without any movement capability. Recently, the idea of APs and antenna arrays that are able to move has gained traction among the research community. Many works evaluate the communications performance of antenna systems able to move on the horizontal plane. However, such APs require a very bulky, complex and expensive movement system. In this work, we propose a simpler and cheaper alternative: the utilization of rotary APs, i.e., APs that can rotate. We also analyze the performance of a system in which the AP is able to both move and rotate. The movements and/or rotations of the APs are computed in order to maximize the mean per-user achievable spectral efficiency, based on estimates of the locations of the active devices and using particle swarm optimization. We adopt a spatially correlated Rician fading channel model, and evaluate the resulting optimized performance of the different setups in terms of mean per-user achievable spectral efficiencies. Our numerical results show that both the optimal rotations and movements of the APs can provide substantial performance gains when the line-of-sight components of the channel vectors are strong. Moreover, the simpler rotary APs can outperform the movable APs when their movement area is constrained.

Distributed MIMO Networks with Rotary ULAs for Indoor Scenarios under Rician Fading

Jun 27, 2024

Abstract:The Fifth-Generation (5G) wireless communications networks introduced native support for Machine-Type Communications (MTC) use cases. Nevertheless, current 5G networks cannot fully meet the very stringent requirements regarding latency, reliability, and number of connected devices of most MTC use cases. Industry and academia have been working on the evolution from 5G to Sixth Generation (6G) networks. One of the main novelties is adopting Distributed Multiple-Input Multiple-Output (D-MIMO) networks. However, most works studying D-MIMO consider antenna arrays with no movement capabilities, even though some recent works have shown that this could bring substantial performance improvements. In this work, we propose the utilization of Access Points (APs) equipped with Rotary Uniform Linear Arrays (RULAs) for this purpose. Considering a spatially correlated Rician fading model, the optimal angular position of the RULAs is jointly computed by the central processing unit using particle swarm optimization as a function of the position of the active devices. Considering the impact of imperfect positioning estimates, our numerical results show that the RULAs' optimal rotation brings substantial performance gains in terms of mean per-user spectral efficiency. The improvement grows with the strength of the line-of-sight components of the channel vectors. Given the total number of antenna elements, we study the trade-off between the number of APs and the number of antenna elements per AP, revealing an optimal number of APs for the cases of APs equipped with static ULAs and RULAs.
