Rafael F. Schaefer

Frequency Hopping Waveform Design for Secure Integrated Sensing and Communications

Apr 14, 2025Abstract:We introduce a comprehensive approach to enhance the security, privacy, and sensing capabilities of integrated sensing and communications (ISAC) systems by leveraging random frequency agility (RFA) and random pulse repetition interval (PRI) agility (RPA) techniques. The combination of these techniques, which we refer to collectively as random frequency and PRI agility (RFPA), with channel reciprocity-based key generation (CRKG) obfuscates both Doppler frequency and PRIs, significantly hindering the chances that passive adversaries can successfully estimate radar parameters. In addition, a hybrid information embedding method integrating amplitude shift keying (ASK), phase shift keying (PSK), index modulation (IM), and spatial modulation (SM) is incorporated to increase the achievable bit rate of the system significantly. Next, a sparse-matched filter receiver design is proposed to efficiently decode the embedded information with a low bit error rate (BER). Finally, a novel RFPA-based secret generation scheme using CRKG ensures secure code creation without a coordinating authority. The improved range and velocity estimation and reduced clutter effects achieved with the method are demonstrated via the evaluation of the ambiguity function (AF) of the proposed waveforms.

MinGRU-Based Encoder for Turbo Autoencoder Frameworks

Mar 11, 2025

Abstract:Early neural channel coding approaches leveraged dense neural networks with one-hot encodings to design adaptive encoder-decoder pairs, improving block error rate (BLER) and automating the design process. However, these methods struggled with scalability as the size of message sets and block lengths increased. TurboAE addressed this challenge by focusing on bit-sequence inputs rather than symbol-level representations, transforming the scalability issue associated with large message sets into a sequence modeling problem. While recurrent neural networks (RNNs) were a natural fit for sequence processing, their reliance on sequential computations made them computationally expensive and inefficient for long sequences. As a result, TurboAE adopted convolutional network blocks, which were faster to train and more scalable, but lacked the sequential modeling advantages of RNNs. Recent advances in efficient RNN architectures, such as minGRU and minLSTM, and structured state space models (SSMs) like S4 and S6, overcome these limitations by significantly reducing memory and computational overhead. These models enable scalable sequence processing, making RNNs competitive for long-sequence tasks. In this work, we revisit RNNs for Turbo autoencoders by integrating the lightweight minGRU model with a Mamba block from SSMs into a parallel Turbo autoencoder framework. Our results demonstrate that this hybrid design matches the performance of convolutional network-based Turbo autoencoder approaches for short sequences while significantly improving scalability and training efficiency for long block lengths. This highlights the potential of efficient RNNs in advancing neural channel coding for long-sequence scenarios.

Deep Learning-based Codes for Wiretap Fading Channels

Sep 13, 2024

Abstract:The wiretap channel is a well-studied problem in the physical layer security (PLS) literature. Although it is proven that the decoding error probability and information leakage can be made arbitrarily small in the asymptotic regime, further research on finite-blocklength codes is required on the path towards practical, secure communications systems. This work provides the first experimental characterization of a deep learning-based, finite-blocklength code construction for multi-tap fading wiretap channels without channel state information (CSI). In addition to the evaluation of the average probability of error and information leakage, we illustrate the influence of (i) the number of fading taps, (ii) differing variances of the fading coefficients and (iii) the seed selection for the hash function-based security layer.

Building Resilience in Wireless Communication Systems With a Secret-Key Budget

Jul 16, 2024

Abstract:Resilience and power consumption are two important performance metrics for many modern communication systems, and it is therefore important to define, analyze, and optimize them. In this work, we consider a wireless communication system with secret-key generation, in which the secret-key bits are added to and used from a pool of available key bits. We propose novel physical layer resilience metrics for the survivability of such systems. In addition, we propose multiple power allocation schemes and analyze their trade-off between resilience and power consumption. In particular, we investigate and compare constant power allocation, an adaptive analytical algorithm, and a reinforcement learning-based solution. It is shown how the transmit power can be minimized such that a specified resilience is guaranteed. These results can be used directly by designers of such systems to optimize the system parameters for the desired performance in terms of reliability, security, and resilience.

Secret Key Generation Rates for Line of Sight Multipath Channels in the Presence of Eavesdroppers

Apr 25, 2024

Abstract:In this paper, the feasibility of implementing a lightweight key distribution scheme using physical layer security for secret key generation (SKG) is explored. Specifically, we focus on examining SKG with the received signal strength (RSS) serving as the primary source of shared randomness. Our investigation centers on a frequency-selective line-of-sight (LoS) multipath channel, with a particular emphasis on assessing SKG rates derived from the distributions of RSS. We derive the received signal distributions based on how the multipath components resolve at the receiver. The mutual information (MI) is evaluated based on LoS 3GPP channel models using a numerical estimator. We study how the bandwidth, delay spread, and Rician K-factor impact the estimated MI. This MI then serves as a benchmark setting bounds for the SKG rates in our exploration.

Learning End-to-End Channel Coding with Diffusion Models

Sep 21, 2023Abstract:The training of neural encoders via deep learning necessitates a differentiable channel model due to the backpropagation algorithm. This requirement can be sidestepped by approximating either the channel distribution or its gradient through pilot signals in real-world scenarios. The initial approach draws upon the latest advancements in image generation, utilizing generative adversarial networks (GANs) or their enhanced variants to generate channel distributions. In this paper, we address this channel approximation challenge with diffusion models, which have demonstrated high sample quality in image generation. We offer an end-to-end channel coding framework underpinned by diffusion models and propose an efficient training algorithm. Our simulations with various channel models establish that our diffusion models learn the channel distribution accurately, thereby achieving near-optimal end-to-end symbol error rates (SERs). We also note a significant advantage of diffusion models: A robust generalization capability in high signal-to-noise ratio regions, in contrast to GAN variants that suffer from error floor. Furthermore, we examine the trade-off between sample quality and sampling speed, when an accelerated sampling algorithm is deployed, and investigate the effect of the noise scheduling on this trade-off. With an apt choice of noise scheduling, sampling time can be significantly reduced with a minor increase in SER.

Generalized Rainbow Differential Privacy

Sep 11, 2023Abstract:We study a new framework for designing differentially private (DP) mechanisms via randomized graph colorings, called rainbow differential privacy. In this framework, datasets are nodes in a graph, and two neighboring datasets are connected by an edge. Each dataset in the graph has a preferential ordering for the possible outputs of the mechanism, and these orderings are called rainbows. Different rainbows partition the graph of connected datasets into different regions. We show that if a DP mechanism at the boundary of such regions is fixed and it behaves identically for all same-rainbow boundary datasets, then a unique optimal $(\epsilon,\delta)$-DP mechanism exists (as long as the boundary condition is valid) and can be expressed in closed-form. Our proof technique is based on an interesting relationship between dominance ordering and DP, which applies to any finite number of colors and for $(\epsilon,\delta)$-DP, improving upon previous results that only apply to at most three colors and for $\epsilon$-DP. We justify the homogeneous boundary condition assumption by giving an example with non-homogeneous boundary condition, for which there exists no optimal DP mechanism.

Secure Integrated Sensing and Communication

Mar 20, 2023

Abstract:This work considers the problem of mitigating information leakage between communication and sensing in systems jointly performing both operations. Specifically, a discrete memoryless state-dependent broadcast channel model is studied in which (i) the presence of feedback enables a transmitter to convey information, while simultaneously performing channel state estimation; (ii) one of the receivers is treated as an eavesdropper whose state should be estimated but which should remain oblivious to part of the transmitted information. The model abstracts the challenges behind security for joint communication and sensing if one views the channel state as a key attribute, e.g., location. For independent and identically distributed states, perfect output feedback, and when part of the transmitted message should be kept secret, a partial characterization of the secrecy-distortion region is developed. The characterization is exact when the broadcast channel is either physically-degraded or reversely-physically-degraded. The partial characterization is also extended to the situation in which the entire transmitted message should be kept secret. The benefits of a joint approach compared to separation-based secure communication and state-sensing methods are illustrated with binary joint communication and sensing models.

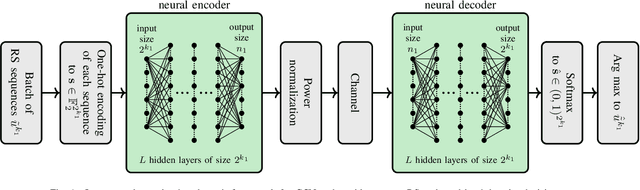

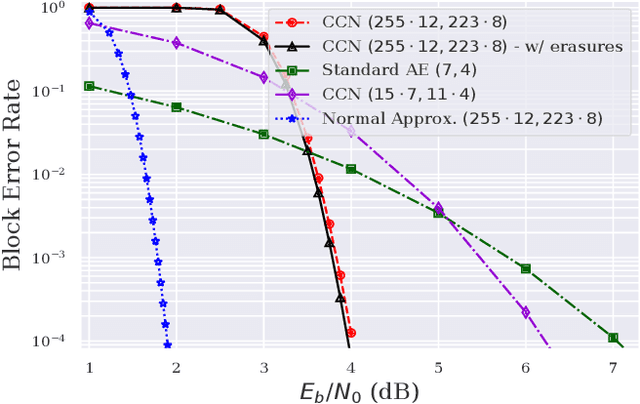

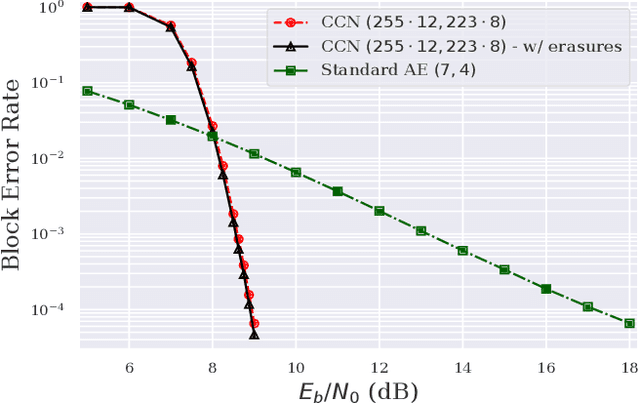

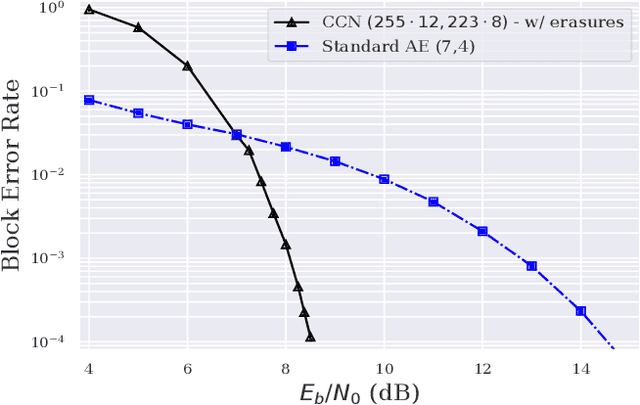

Concatenated Classic and Neural (CCN) Codes: ConcatenatedAE

Sep 04, 2022

Abstract:Small neural networks (NNs) used for error correction were shown to improve on classic channel codes and to address channel model changes. We extend the code dimension of any such structure by using the same NN under one-hot encoding multiple times, which are serially-concatenated with an outer classic code. We design NNs with the same network parameters, where each Reed-Solomon codeword symbol is an input to a different NN. Significant improvements in block error probabilities for an additive Gaussian noise channel as compared to the small neural code are illustrated, as well as robustness to channel model changes.

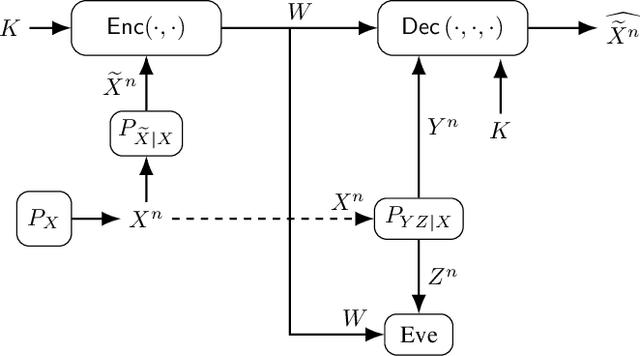

Secure and Private Source Coding with Private Key and Decoder Side Information

May 10, 2022

Abstract:The problem of secure source coding with multiple terminals is extended by considering a remote source whose noisy measurements are the correlated random variables used for secure source reconstruction. The main additions to the problem include 1) all terminals noncausally observe a noisy measurement of the remote source; 2) a private key is available to all legitimate terminals; 3) the public communication link between the encoder and decoder is rate-limited; 4) the secrecy leakage to the eavesdropper is measured with respect to the encoder input, whereas the privacy leakage is measured with respect to the remote source. Exact rate regions are characterized for a lossy source coding problem with a private key, remote source, and decoder side information under security, privacy, communication, and distortion constraints. By replacing the distortion constraint with a reliability constraint, we obtain the exact rate region also for the lossless case. Furthermore, the lossy rate region for scalar discrete-time Gaussian sources and measurement channels is established.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge