Qi Zou

Relating-Up: Advancing Graph Neural Networks through Inter-Graph Relationships

May 07, 2024

Abstract: Graph Neural Networks (GNNs) have excelled in learning from graph-structured data, especially in understanding the relationships within a single graph, i.e., intra-graph relationships. Despite their successes, GNNs are limited by neglecting the context of relationships across graphs, i.e., inter-graph relationships. Recognizing the potential to extend this capability, we introduce Relating-Up, a plug-and-play module that enhances GNNs by exploiting inter-graph relationships. This module incorporates a relation-aware encoder and a feedback training strategy. The former enables GNNs to capture relationships across graphs, enriching relation-aware graph representation through collective context. The latter utilizes a feedback loop mechanism for the recursive refinement of these representations, leveraging insights from the refined inter-graph dynamics to drive the feedback loop. The synergy between these two innovations results in a robust and versatile module. Relating-Up enhances the expressiveness of GNNs, enabling them to encapsulate a wider spectrum of graph relationships with greater precision. Our evaluations across 16 benchmark datasets demonstrate that integrating Relating-Up into GNN architectures substantially improves performance, positioning Relating-Up as a formidable choice for a broad spectrum of graph representation learning tasks.

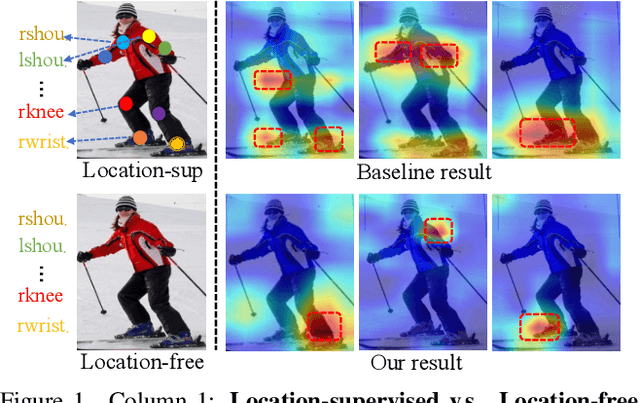

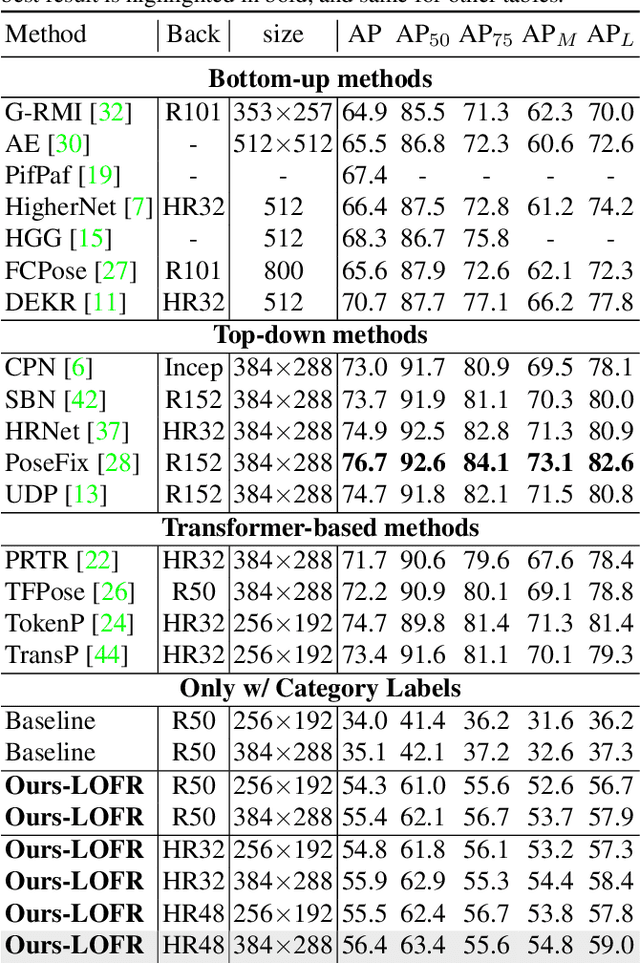

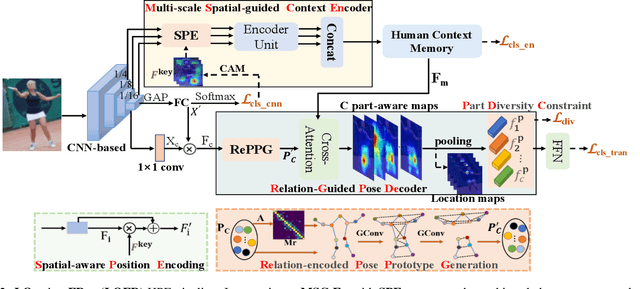

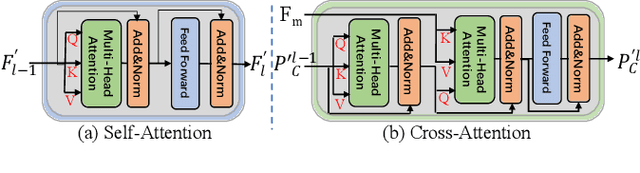

Location-free Human Pose Estimation

May 25, 2022

Abstract: Human pose estimation (HPE) usually requires large-scale training data to reach high performance. However, it is rather time-consuming to collect high-quality and fine-grained annotations for the human body. To alleviate this issue, we revisit HPE and propose a location-free framework without supervision of keypoint locations. We reformulate the regression-based HPE from the perspective of classification. Inspired by the CAM-based weakly-supervised object localization, we observe that coarse keypoint locations can be acquired through part-aware CAMs, but they are unsatisfactory due to the gap between fine-grained HPE and object-level localization. To this end, we propose a customized transformer framework to mine the fine-grained representation of human context, equipped with the structural relation to capture subtle differences among keypoints. Concretely, we design a Multi-scale Spatial-guided Context Encoder to fully capture the global human context while focusing on the part-aware regions and a Relation-encoded Pose Prototype Generation module to encode the structural relations. All of these work together to strengthen the weak supervision from image-level category labels on locations. Our model achieves competitive performance on three datasets when only supervised at the category level and, importantly, it can achieve results comparable to fully-supervised methods with only 25% location labels on MS-COCO and MPII.
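As background for the part-aware CAMs mentioned in the abstract: in standard class activation mapping, the map for one class is the weighted sum of the final convolutional feature maps, using that class's classifier weights. The sketch below illustrates only this general idea in plain Python. The function names, the tiny feature tensor, and the weights are hypothetical and are not taken from the paper, whose transformer framework is not reproduced here.

```python
def class_activation_map(features, weights):
    """Weighted sum of C feature maps (each H x W) with C per-class weights."""
    height, width = len(features[0]), len(features[0][0])
    cam = [[0.0] * width for _ in range(height)]
    for fmap, w in zip(features, weights):
        for y in range(height):
            for x in range(width):
                cam[y][x] += w * fmap[y][x]
    return cam


def coarse_location(cam):
    """Return the (row, col) of the strongest response in the map."""
    best = max((v, (y, x)) for y, row in enumerate(cam) for x, v in enumerate(row))
    return best[1]


# Hypothetical toy input: two 2x2 feature maps and the weights of one "part" class.
features = [
    [[0.0, 1.0], [0.0, 0.0]],
    [[0.0, 0.0], [2.0, 0.0]],
]
weights = [1.0, 0.25]
cam = class_activation_map(features, weights)
print(cam, coarse_location(cam))
```

The peak of such a map gives only a coarse location, which is the gap the abstract says the proposed framework is meant to close.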

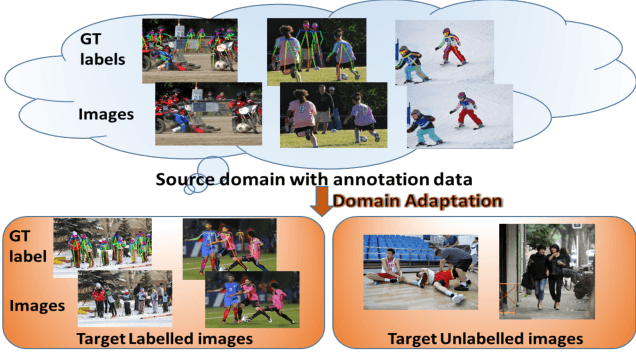

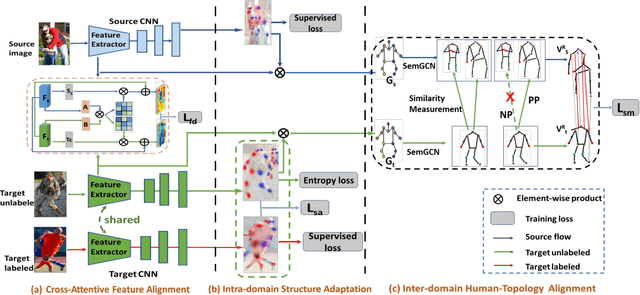

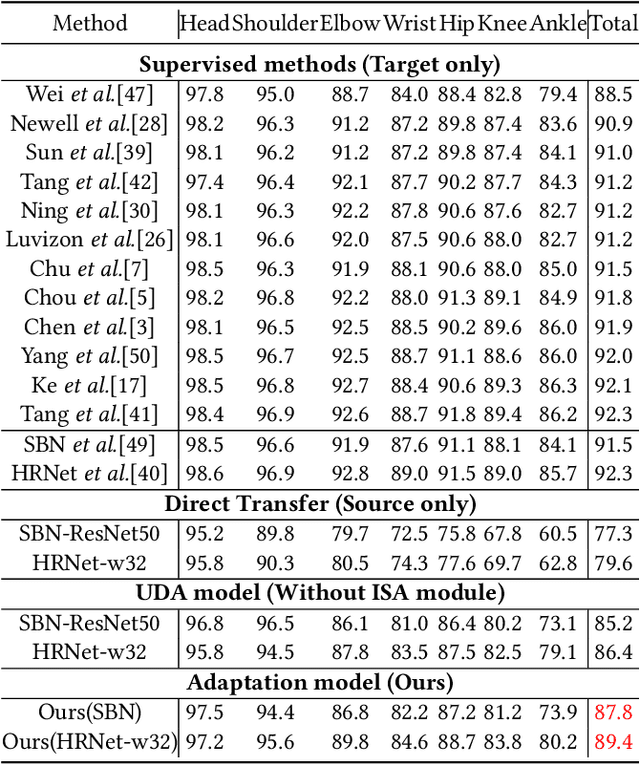

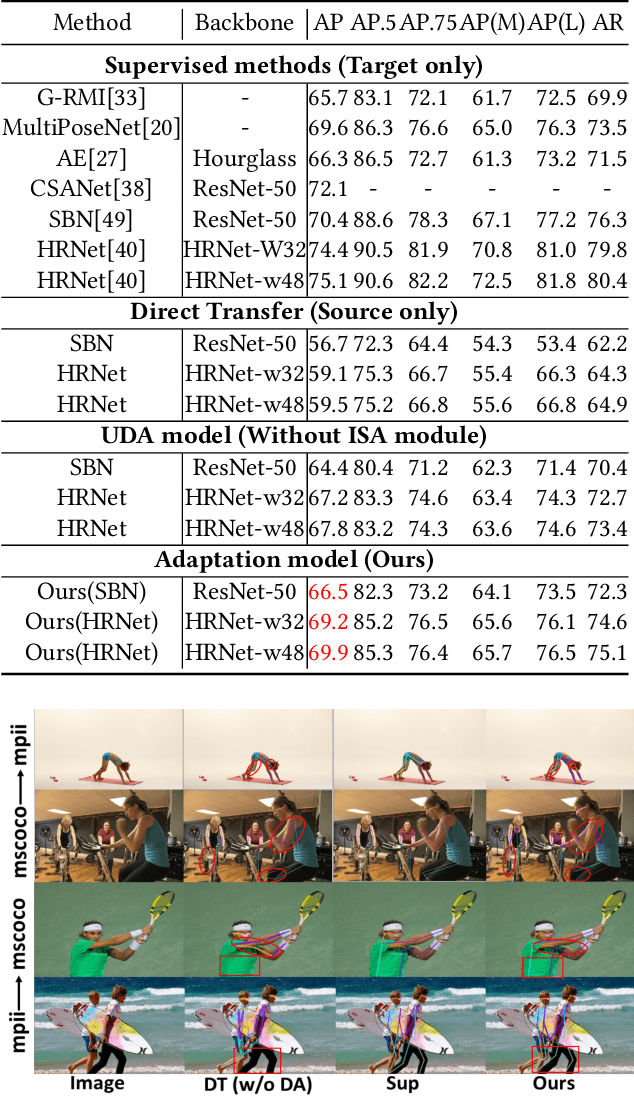

Alleviating Human-level Shift : A Robust Domain Adaptation Method for Multi-person Pose Estimation

Aug 13, 2020

Abstract: Human pose estimation has been widely studied with much focus on supervised learning requiring sufficient annotations. However, in real applications, a pretrained pose estimation model usually needs to be adapted to a novel domain with no labels or sparse labels. Such domain adaptation for 2D pose estimation hasn't been explored. The main reason is that a pose, by nature, has a typical topological structure and needs fine-grained features in local keypoints. Existing adaptation methods, however, do not consider the topological structure of the object of interest and align whole images coarsely. Therefore, we propose a novel domain adaptation method for multi-person pose estimation to conduct the human-level topological structure alignment and fine-grained feature alignment. Our method consists of three modules: Cross-Attentive Feature Alignment (CAFA), Intra-domain Structure Adaptation (ISA) and Inter-domain Human-Topology Alignment (IHTA) module. The CAFA adopts a bidirectional spatial attention module (BSAM) that focuses on fine-grained local feature correlation between two humans to adaptively aggregate consistent features for adaptation. We adopt ISA only in semi-supervised domain adaptation (SSDA) to exploit the corresponding keypoint semantic relationship for reducing the intra-domain bias. Most importantly, we propose an IHTA to learn more domain-invariant human topological representation for reducing the inter-domain discrepancy. We model the human topological structure via a graph convolution network (GCN); passing messages over it allows high-order relations to be considered. This structure-preserving alignment based on GCN benefits inference for occluded or extreme poses. Extensive experiments are conducted on two popular benchmarks and results demonstrate the competency of our method compared with existing supervised approaches.

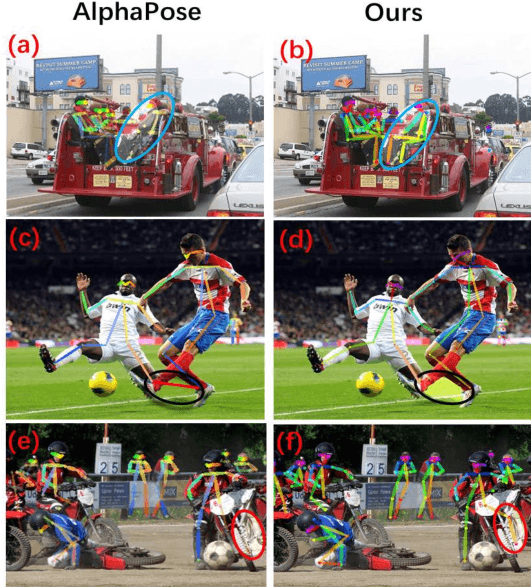

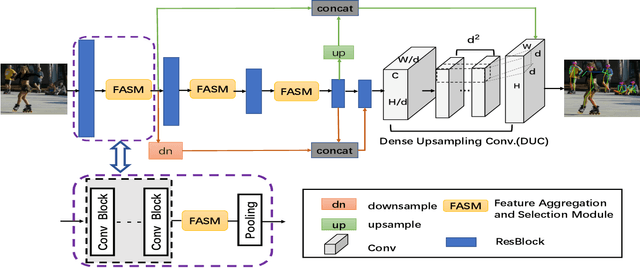

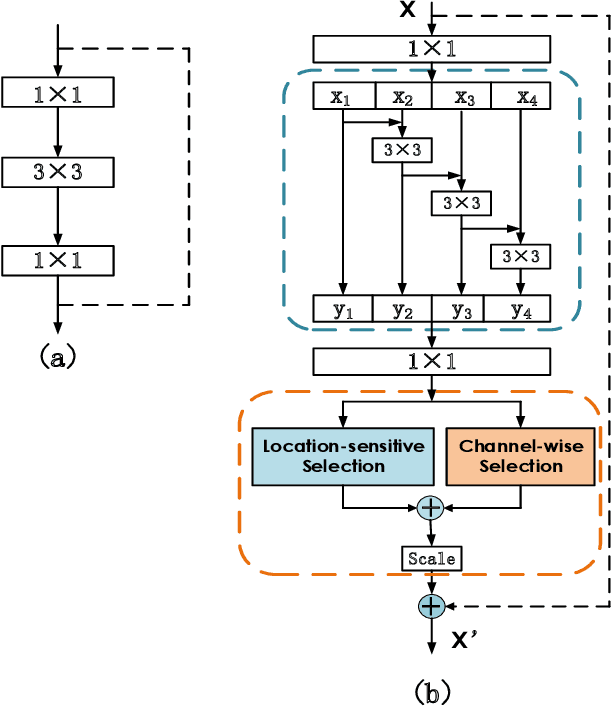

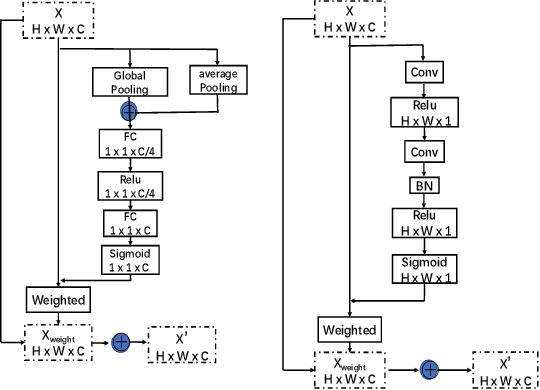

Multi-Person Pose Estimation with Enhanced Feature Aggregation and Selection

Mar 20, 2020

Abstract: We propose a novel Enhanced Feature Aggregation and Selection network (EFASNet) for multi-person 2D human pose estimation. Due to enhanced feature representation, our method handles crowded, cluttered and occluded scenes well. More specifically, a Feature Aggregation and Selection Module (FASM), which constructs hierarchical multi-scale feature aggregation and makes the aggregated features discriminative, is proposed to get more accurate fine-grained representation, leading to more precise joint locations. Then, we perform a simple Feature Fusion (FF) strategy which effectively fuses high-resolution spatial features and low-resolution semantic features to obtain more reliable context information for well-estimated joints. Finally, we build a Dense Upsampling Convolution (DUC) module to generate more precise predictions, which can recover missing joint details that are usually unavailable in the common upsampling process. As a result, the predicted keypoint heatmaps are more accurate. Comprehensive experiments demonstrate that the proposed approach outperforms the state-of-the-art methods and achieves superior performance on three benchmark datasets: the recent large dataset CrowdPose, the COCO keypoint detection dataset and the MPII Human Pose dataset. Our code will be released upon acceptance.
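Dense upsampling convolution is commonly described as predicting r*r low-resolution channels per output map and rearranging them into a single map that is r times larger in each dimension, instead of interpolating. The sketch below shows only that rearrangement step in plain Python, assuming a row-major channel-to-offset ordering. The function name and the toy input are hypothetical, the preceding convolution is omitted, and the details of the EFASNet module may differ.

```python
def dense_upsample_rearrange(channels, r):
    """Rearrange r*r maps of size h x w into one map of size (h*r) x (w*r).

    Assumption: channel c fills the sub-pixel offset (c // r, c % r).
    """
    if len(channels) != r * r:
        raise ValueError("expected r*r input channels")
    h, w = len(channels[0]), len(channels[0][0])
    out = [[0.0] * (w * r) for _ in range(h * r)]
    for c, fmap in enumerate(channels):
        dy, dx = divmod(c, r)
        for y in range(h):
            for x in range(w):
                out[y * r + dy][x * r + dx] = fmap[y][x]
    return out


# Hypothetical toy input: four 1x2 channels, upsampling factor r = 2.
low_res = [[[1, 5]], [[2, 6]], [[3, 7]], [[4, 8]]]
high_res = dense_upsample_rearrange(low_res, 2)
print(high_res)
```

Because every high-resolution pixel comes from its own learned channel, fine detail does not have to be reconstructed by interpolation, which fits the abstract's claim about recovering joint details.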

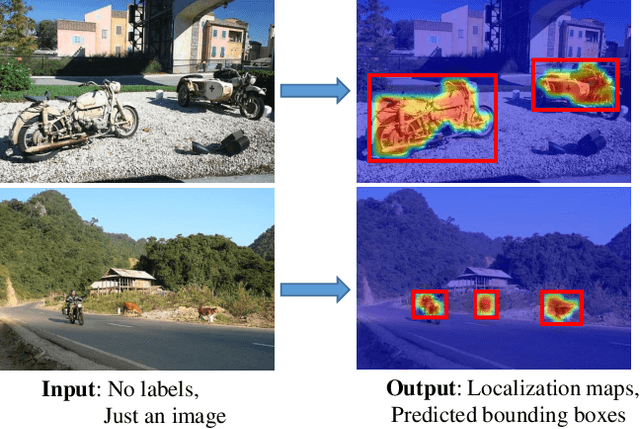

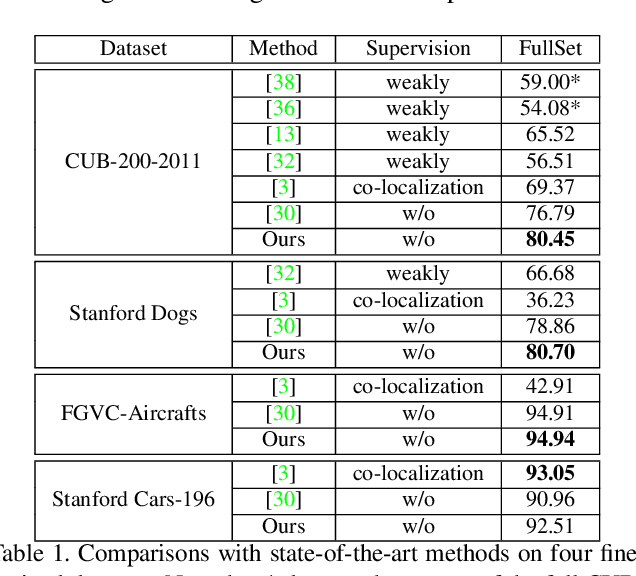

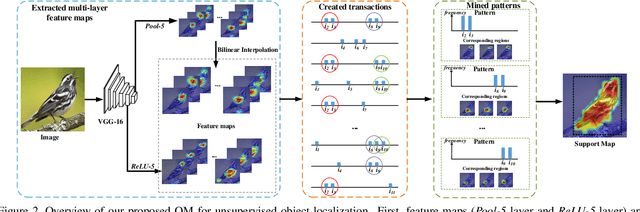

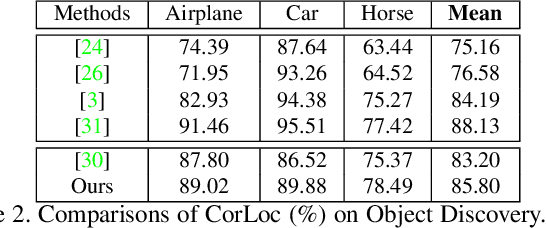

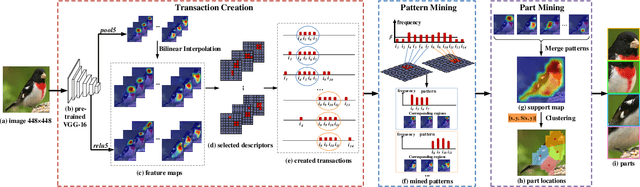

Mining Objects: Fully Unsupervised Object Discovery and Localization From a Single Image

Feb 26, 2019

Abstract: The goal of our work is to discover dominant objects without using any annotations. We focus on performing unsupervised object discovery and localization in a strictly general setting where only a single image is given. This is far more challenging than typical co-localization or weakly-supervised localization tasks. To tackle this problem, we propose a simple but effective pattern mining-based method, called Object Mining (OM), which exploits the advantages of data mining and feature representation of pre-trained convolutional neural networks (CNNs). Specifically, Object Mining first converts the feature maps from a pre-trained CNN model into a set of transactions, and then frequent patterns are discovered from the transaction database through pattern mining techniques. We observe that those discovered patterns, i.e., co-occurrence highlighted regions, typically hold appearance and spatial consistency. Motivated by this observation, we can easily discover and localize possible objects by merging relevant meaningful patterns in an unsupervised manner. Extensive experiments on a variety of benchmarks demonstrate that Object Mining achieves competitive performance compared with the state-of-the-art methods.
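The transaction idea can be illustrated with a small sketch. It assumes, as one plausible reading of the abstract, that each feature-map channel becomes one transaction whose items are the spatial positions with activation above a threshold, so frequent itemsets correspond to positions highlighted together across channels. The function names, the threshold, and the toy data are hypothetical, and the brute-force counting below stands in for whatever pattern mining algorithm the paper actually uses.

```python
from collections import Counter
from itertools import combinations


def feature_maps_to_transactions(feature_maps, threshold):
    """One transaction per channel: the set of (row, col) positions above threshold."""
    return [
        {(y, x) for y, row in enumerate(fmap) for x, v in enumerate(row) if v > threshold}
        for fmap in feature_maps
    ]


def frequent_itemsets(transactions, min_count, max_size=2):
    """Brute-force support counting for itemsets of up to max_size positions."""
    counts = Counter()
    for transaction in transactions:
        items = sorted(transaction)
        for size in range(1, max_size + 1):
            for combo in combinations(items, size):
                counts[combo] += 1
    return {itemset: c for itemset, c in counts.items() if c >= min_count}


# Hypothetical toy input: three 2x2 channels.
maps = [
    [[0.9, 0.1], [0.8, 0.0]],
    [[0.7, 0.0], [0.6, 0.2]],
    [[0.1, 0.9], [0.7, 0.0]],
]
transactions = feature_maps_to_transactions(maps, threshold=0.5)
patterns = frequent_itemsets(transactions, min_count=2)
print(patterns)
```

In this toy case positions (0, 0) and (1, 0) are highlighted together in two of the three channels, so they form a frequent pattern that a later step could merge into a candidate object region.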

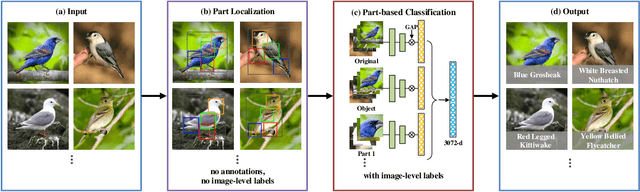

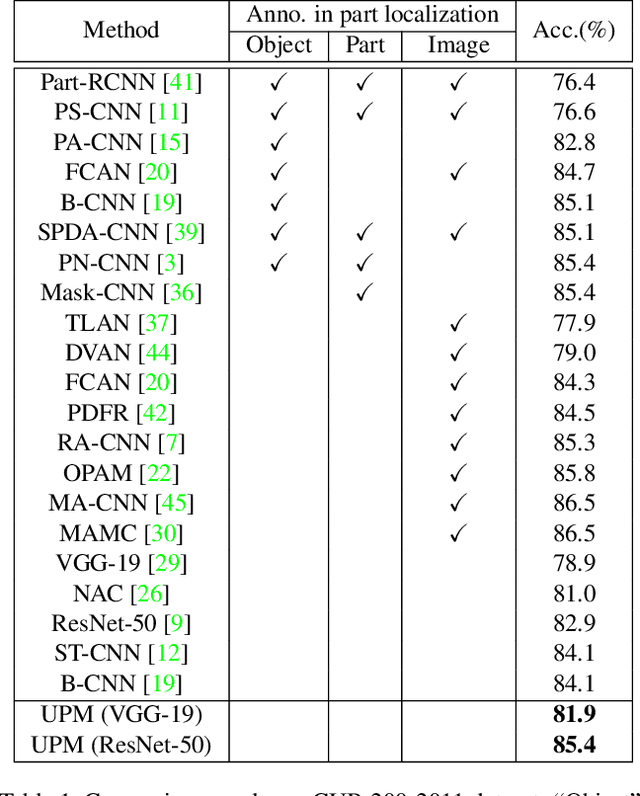

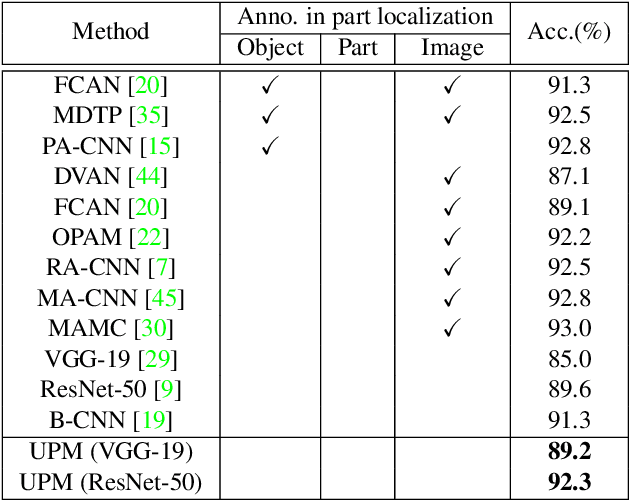

Unsupervised Part Mining for Fine-grained Image Classification

Feb 26, 2019

Abstract: Fine-grained image classification remains challenging due to the large intra-class variance and small inter-class variance. Since the subtle visual differences are only in local regions of discriminative parts among subcategories, part localization is a key issue for fine-grained image classification. Most existing approaches localize objects or parts in an image with object or part annotations, which are expensive and labor-consuming. To tackle this issue, we propose a fully unsupervised part mining (UPM) approach to localize the discriminative parts without even image-level annotations, which largely improves the fine-grained classification performance. We first utilize pattern mining techniques to discover frequent patterns, i.e., co-occurrence highlighted regions, in the feature maps extracted from a pre-trained convolutional neural network (CNN) model. Inspired by the fact that these relevant meaningful patterns typically hold appearance and spatial consistency, we then cluster the mined regions to obtain the cluster centers, and the discriminative parts surrounding the cluster centers are generated. Importantly, no annotations or sophisticated training procedures are used in our proposed part localization approach. Finally, a multi-stream classification network is built for aggregating the original, object-level and part-level features simultaneously. Compared with other state-of-the-art approaches, our UPM approach achieves competitive performance.

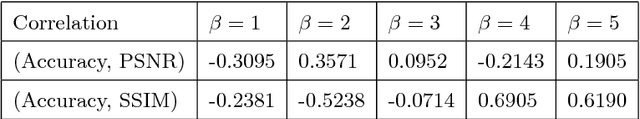

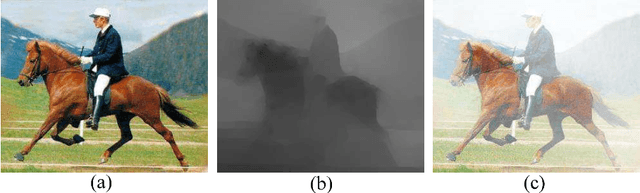

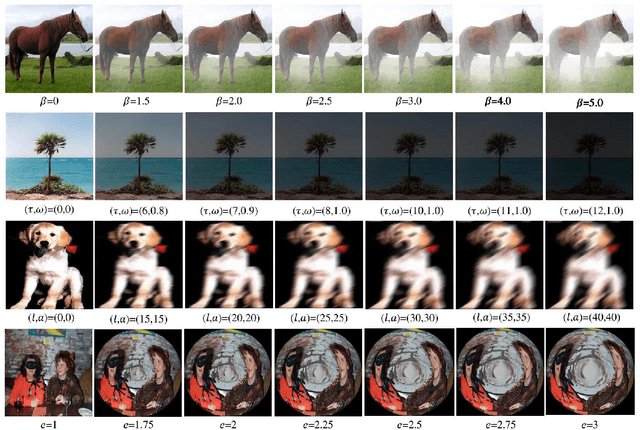

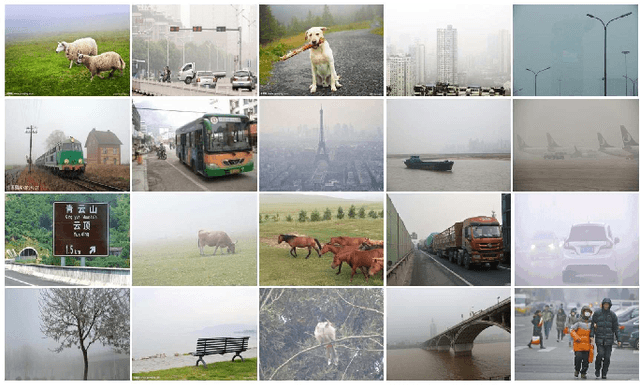

Does Haze Removal Help CNN-based Image Classification?

Oct 12, 2018

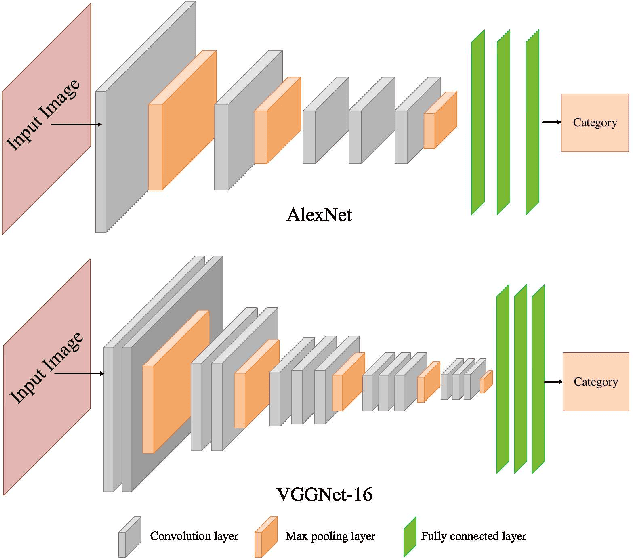

Abstract: Hazy images are common in real scenarios and many dehazing methods have been developed to automatically remove the haze from images. Typically, the goal of image dehazing is to produce clearer images from which human vision can better identify the object and structural details present in the images. When the ground-truth haze-free image is available for a hazy image, quantitative evaluation of image dehazing is usually based on objective metrics, such as Peak Signal-to-Noise Ratio (PSNR) and Structural Similarity (SSIM). However, in many applications, large-scale images are collected not for visual examination by humans. Instead, they are used for many high-level vision tasks, such as automatic classification, recognition and categorization. One fundamental problem here is whether various dehazing methods can produce clearer images that can help improve the performance of the high-level tasks. In this paper, we empirically study this problem in the important task of image classification by using both synthetic and real hazy image datasets. From the experimental results, we find that the existing image-dehazing methods do not improve image-classification performance much and sometimes even reduce it.
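For reference, PSNR compares a restored image with its ground truth through the mean squared error: PSNR = 10 * log10(MAX^2 / MSE), where MAX is the largest possible pixel value. A minimal plain-Python sketch follows, assuming 8-bit grayscale images stored as nested lists. The function names and the toy images are illustrative and not from the paper.

```python
import math


def mean_squared_error(reference, restored):
    """Mean squared pixel difference between two equally sized grayscale images."""
    flat_ref = [p for row in reference for p in row]
    flat_res = [p for row in restored for p in row]
    if len(flat_ref) != len(flat_res):
        raise ValueError("images must have the same number of pixels")
    return sum((a - b) ** 2 for a, b in zip(flat_ref, flat_res)) / len(flat_ref)


def psnr(reference, restored, max_value=255.0):
    """Peak Signal-to-Noise Ratio in dB; infinite when the images are identical."""
    err = mean_squared_error(reference, restored)
    if err == 0:
        return math.inf
    return 10.0 * math.log10(max_value ** 2 / err)


# Hypothetical toy images: every pixel of the restored image is off by one.
clean = [[10, 20], [30, 40]]
dehazed = [[11, 21], [31, 41]]
print(psnr(clean, dehazed))
```

A higher PSNR means the dehazed image is numerically closer to the haze-free reference, which, as the abstract argues, need not translate into better classification accuracy.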

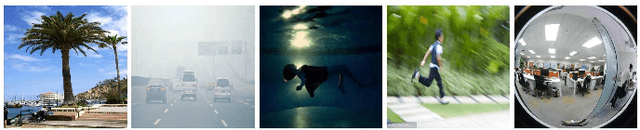

Effects of Image Degradations to CNN-based Image Classification

Oct 12, 2018

Abstract: Just like many other topics in computer vision, image classification has achieved significant progress recently by using deep-learning neural networks, especially Convolutional Neural Networks (CNNs). Most existing works focus on classifying very clear natural images, as evidenced by widely used image databases such as Caltech-256, PASCAL VOCs and ImageNet. However, in many real applications, the acquired images may contain certain degradations that lead to various kinds of blurring, noise, and distortions. One important and interesting problem is the effect of such degradations on the performance of CNN-based image classification. More specifically, we wonder whether image-classification performance drops with each kind of degradation, whether this drop can be avoided by including degraded images in training, and whether existing computer vision algorithms that attempt to remove such degradations can help improve the image-classification performance. In this paper, we empirically study this problem for four kinds of degraded images: hazy images, underwater images, motion-blurred images and fish-eye images. For this study, we synthesize a large number of such degraded images by applying respective physical models to the clear natural images and collect a new hazy image dataset from the Internet. We expect this work to draw more interest from the community to study the classification of degraded images.
