Po-Wen Chang

FAIR Universe Weak Lensing ML Uncertainty Challenge: Handling Uncertainties and Distribution Shifts for Precision Cosmology

Apr 15, 2026

Abstract: Weak gravitational lensing, the correlated distortion of background galaxy shapes by foreground structures, is a powerful probe of the matter distribution in our universe and allows accurate constraints on the cosmological model. In recent years, higher-order statistics and machine learning (ML) techniques have been applied to weak lensing data to extract nonlinear information beyond traditional two-point analysis. However, these methods typically rely on cosmological simulations, which poses several challenges: simulations are computationally expensive, limiting most realistic setups to a low training data regime; inaccurate modeling of systematics in the simulations creates distribution shifts that can bias cosmological parameter constraints; and varying simulation setups across studies make method comparison difficult. To address these difficulties, we present the first weak lensing benchmark dataset with several realistic systematics and launch the FAIR Universe Weak Lensing Machine Learning Uncertainty Challenge. The challenge focuses on measuring the fundamental properties of the universe from weak lensing data with a limited training set and potential distribution shifts, while providing a standardized benchmark for rigorous comparison across methods. Organized in two phases, the challenge will bring together the physics and ML communities to advance methodologies for handling systematic uncertainties, data efficiency, and distribution shifts in ML-based weak lensing analysis, ultimately facilitating the deployment of ML approaches in upcoming weak lensing survey analyses.

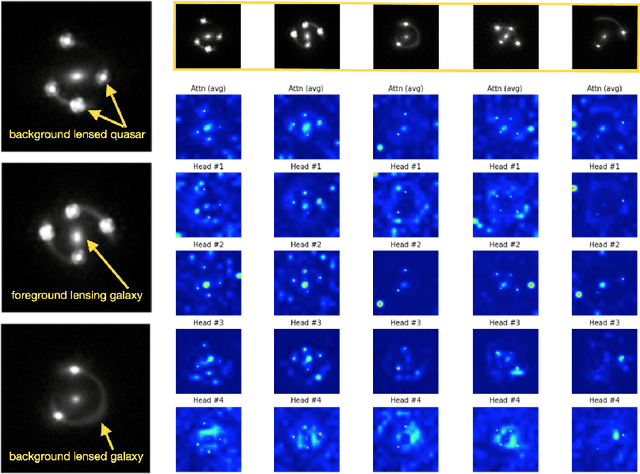

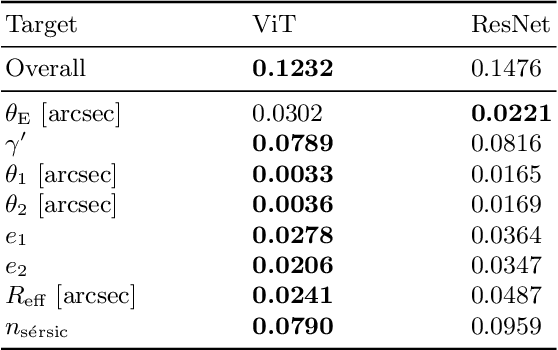

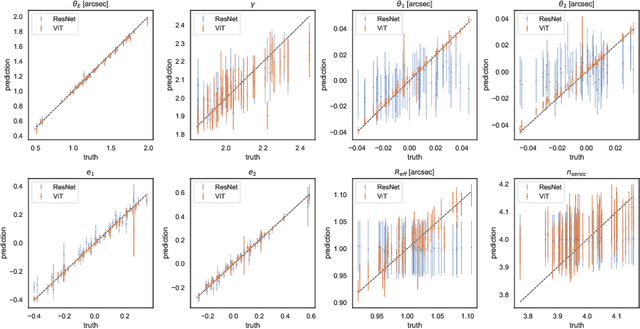

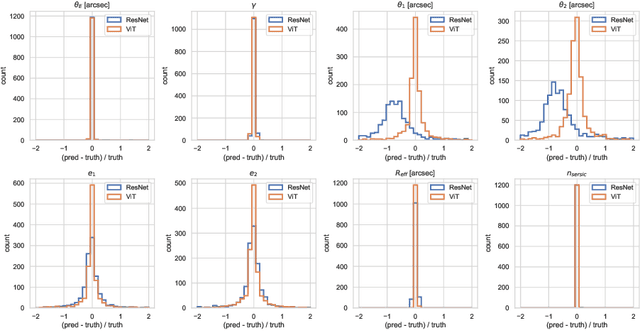

Strong Gravitational Lensing Parameter Estimation with Vision Transformer

Oct 09, 2022

Abstract: Quantifying the parameters and corresponding uncertainties of hundreds of strongly lensed quasar systems holds the key to resolving one of the most important scientific questions: the Hubble constant ($H_{0}$) tension. The commonly used Markov chain Monte Carlo (MCMC) method has been too time-consuming to achieve this goal, yet recent work has shown that convolutional neural networks (CNNs) can be an alternative with a seven-orders-of-magnitude improvement in speed. With 31,200 simulated strongly lensed quasar images, we explore the use of the Vision Transformer (ViT) for simulated strong gravitational lensing for the first time. We show that ViT can reach results competitive with CNNs, and performs particularly well on some lensing parameters, including the most important mass-related parameters such as the lens center $\theta_{1}$ and $\theta_{2}$, the ellipticities $e_1$ and $e_2$, and the radial power-law slope $\gamma'$. With this promising preliminary result, we believe the ViT (or attention-based) network architecture can be an important tool for strong lensing science in the next generation of surveys. Our code and data are open source at https://github.com/kuanweih/strong_lensing_vit_resnet.
