Nouhaila Innan

How Embeddings Shape Graph Neural Networks: Classical vs Quantum-Oriented Node Representations

Apr 16, 2026

Abstract:Node embeddings act as the information interface for graph neural networks, yet their empirical impact is often reported under mismatched backbones, splits, and training budgets. This paper provides a controlled benchmark of embedding choices for graph classification, comparing classical baselines with quantum-oriented node representations under a unified pipeline. We evaluate two classical baselines alongside quantum-oriented alternatives, including a circuit-defined variational embedding and quantum-inspired embeddings computed via graph operators and linear-algebraic constructions. All variants are trained and tested with the same backbone, stratified splits, identical optimization and early stopping, and consistent metrics. Experiments on five different TU datasets and on QM9 converted to classification via target binning show clear dataset dependence: quantum-oriented embeddings yield the most consistent gains on structure-driven benchmarks, while social graphs with limited node attributes remain well served by classical baselines. The study highlights practical trade-offs between inductive bias, trainability, and stability under a fixed training budget, and offers a reproducible reference point for selecting quantum-oriented embeddings in graph learning.

Design Space Exploration of Hybrid Quantum Neural Networks for Chronic Kidney Disease

Apr 15, 2026

Abstract:Hybrid Quantum Neural Networks (HQNNs) have recently emerged as a promising paradigm for near-term quantum machine learning. However, their practical performance strongly depends on design choices such as classical-to-quantum data encoding, quantum circuit architecture, measurement strategy and shots. In this paper, we present a comprehensive design space exploration of HQNNs for Chronic Kidney Disease (CKD) diagnosis. Using a carefully curated and preprocessed clinical dataset, we benchmark 625 different HQNN models obtained by combining five encoding schemes, five entanglement architectures, five measurement strategies, and five different shot settings. To ensure fair and robust evaluation, all models are trained using 10-fold stratified cross-validation and assessed on a test set using a comprehensive set of metrics, including accuracy, area under the curve (AUC), F1-score, and a composite performance score. Our results reveal strong and non-trivial interactions between encoding choices and circuit architectures, showing that high performance does not necessarily require large parameter counts or complex circuits. In particular, we find that compact architectures combined with appropriate encodings (e.g., IQP with Ring entanglement) can achieve the best trade-off between accuracy, robustness, and efficiency. Beyond absolute performance analysis, we also provide actionable insights into how different design dimensions influence learning behavior in HQNNs.
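The count of 625 models follows from the four design dimensions having five options each (5 × 5 × 5 × 5). A minimal sketch of enumerating such a grid is shown below. Only "IQP" and "Ring" are named in the abstract; every other option label, and the function name `build_design_space`, is a hypothetical placeholder.

```python
from itertools import product

# Only "IQP" and "Ring" come from the abstract; all other labels are
# hypothetical placeholders standing in for the unnamed options.
ENCODINGS = ["IQP", "enc_2", "enc_3", "enc_4", "enc_5"]
ENTANGLEMENTS = ["Ring", "ent_2", "ent_3", "ent_4", "ent_5"]
MEASUREMENTS = ["meas_1", "meas_2", "meas_3", "meas_4", "meas_5"]
SHOT_SETTINGS = ["shots_1", "shots_2", "shots_3", "shots_4", "shots_5"]


def build_design_space():
    """Return one config dict per combination of the four design dimensions."""
    return [
        {"encoding": enc, "entanglement": ent, "measurement": meas, "shots": shots}
        for enc, ent, meas, shots in product(
            ENCODINGS, ENTANGLEMENTS, MEASUREMENTS, SHOT_SETTINGS
        )
    ]


configs = build_design_space()
print(len(configs))  # 625
```

Each resulting dictionary could then be handed to a training routine, one model per configuration.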

Quantum vs. Classical Machine Learning: A Benchmark Study for Financial Prediction

Jan 07, 2026

Abstract:In this paper, we present a reproducible benchmarking framework that systematically compares QML models with architecture-matched classical counterparts across three financial tasks: (i) directional return prediction on U.S. and Turkish equities, (ii) live-trading simulation with Quantum LSTMs versus classical LSTMs on the S&P 500, and (iii) realized volatility forecasting using Quantum Support Vector Regression. By standardizing data splits, features, and evaluation metrics, our study provides a fair assessment of when current-generation QML models can match or exceed classical methods. Our results reveal that quantum approaches show performance gains when data structure and circuit design are well aligned. In directional classification, hybrid quantum neural networks surpass the parameter-matched ANN by +3.8 AUC and +3.4 accuracy points on AAPL stock and by +4.9 AUC and +3.6 accuracy points on Turkish stock KCHOL. In live trading, the QLSTM achieves higher risk-adjusted returns in two of four S&P 500 regimes. For volatility forecasting, an angle-encoded QSVR attains the lowest QLIKE on KCHOL and remains within $\sim$0.02-0.04 QLIKE of the best classical kernels on S&P 500 and AAPL. Our benchmarking framework clearly identifies the scenarios where current QML architectures offer tangible improvements and where established classical methods continue to dominate.

Graph-Based Bayesian Optimization for Quantum Circuit Architecture Search with Uncertainty Calibrated Surrogates

Dec 10, 2025

Abstract:Quantum circuit design is a key bottleneck for practical quantum machine learning on complex, real-world data. We present an automated framework that discovers and refines variational quantum circuits (VQCs) using graph-based Bayesian optimization with a graph neural network (GNN) surrogate. Circuits are represented as graphs and mutated and selected via an expected improvement acquisition function informed by surrogate uncertainty with Monte Carlo dropout. Candidate circuits are evaluated with a hybrid quantum-classical variational classifier on the next generation firewall telemetry and network internet of things (NF-ToN-IoT-V2) cybersecurity dataset, after feature selection and scaling for quantum embedding. We benchmark our pipeline against an MLP-based surrogate, random search, and greedy GNN selection. The GNN-guided optimizer consistently finds circuits with lower complexity and competitive or superior classification accuracy compared to all baselines. Robustness is assessed via a noise study across standard quantum noise channels, including amplitude damping, phase damping, thermal relaxation, depolarizing, and readout bit flip noise. The implementation is fully reproducible, with time benchmarking and export of best found circuits, providing a scalable and interpretable route to automated quantum circuit discovery.
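As a rough illustration of how an expected improvement acquisition can use Monte Carlo dropout uncertainty, the sketch below takes several stochastic surrogate predictions for one candidate circuit, treats their mean and standard deviation as a Gaussian predictive distribution, and applies the standard closed-form expected improvement for maximization. The function name, the `xi` exploration margin, and the Gaussian assumption are illustrative choices, not details taken from the paper.

```python
import math
import statistics


def expected_improvement(mc_predictions, best_so_far, xi=0.0):
    """Closed-form EI (maximization) from Monte Carlo dropout samples.

    mc_predictions: surrogate outputs for ONE candidate, each obtained from a
    forward pass with dropout left active (hypothetical inputs here).
    best_so_far: best objective value observed so far.
    xi: optional exploration margin (an assumption, defaults to 0).
    """
    mu = statistics.fmean(mc_predictions)
    sigma = statistics.pstdev(mc_predictions)
    gain = mu - best_so_far - xi
    if sigma == 0.0:
        # No predictive uncertainty: improvement is deterministic.
        return max(gain, 0.0)
    z = gain / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return gain * cdf + sigma * pdf


# Two candidates with the same mean prediction but different spread:
print(expected_improvement([0.6, 0.8], best_so_far=0.7))
print(expected_improvement([0.5, 0.9], best_so_far=0.7))
```

With equal means, the candidate whose dropout samples disagree more receives the larger score, which is how surrogate uncertainty steers the search toward less explored circuits.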

FAQNAS: FLOPs-aware Hybrid Quantum Neural Architecture Search using Genetic Algorithm

Nov 13, 2025

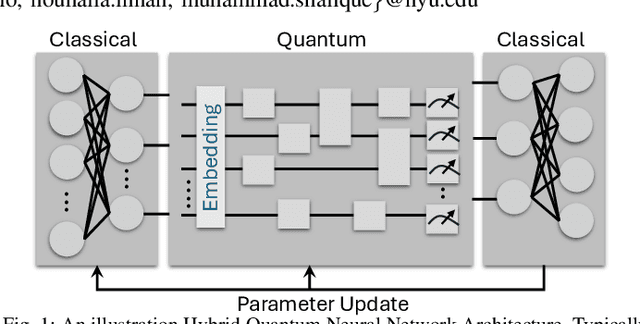

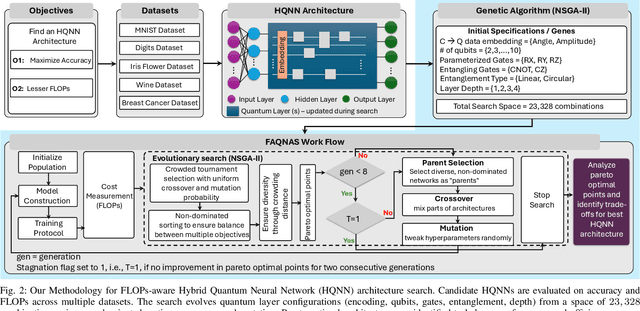

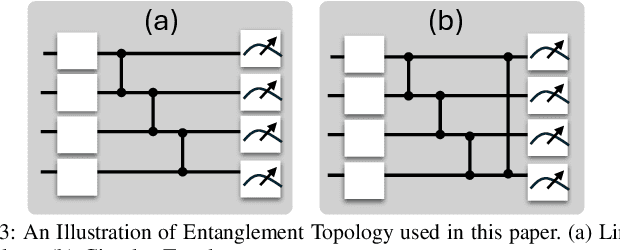

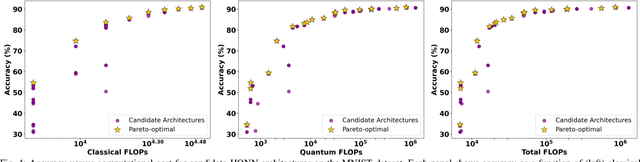

Abstract:Hybrid Quantum Neural Networks (HQNNs), which combine parameterized quantum circuits with classical neural layers, are emerging as promising models in the noisy intermediate-scale quantum (NISQ) era. While quantum circuits are not naturally measured in floating point operations (FLOPs), most HQNNs (in NISQ era) are still trained on classical simulators where FLOPs directly dictate runtime and scalability. Hence, FLOPs represent a practical and viable metric to measure the computational complexity of HQNNs. In this work, we introduce FAQNAS, a FLOPs-aware neural architecture search (NAS) framework that formulates HQNN design as a multi-objective optimization problem balancing accuracy and FLOPs. Unlike traditional approaches, FAQNAS explicitly incorporates FLOPs into the optimization objective, enabling the discovery of architectures that achieve strong performance while minimizing computational cost. Experiments on five benchmark datasets (MNIST, Digits, Wine, Breast Cancer, and Iris) show that quantum FLOPs dominate accuracy improvements, while classical FLOPs remain largely fixed. Pareto-optimal solutions reveal that competitive accuracy can often be achieved with significantly reduced computational cost compared to FLOPs-agnostic baselines. Our results establish FLOPs-awareness as a practical criterion for HQNN design in the NISQ era and as a scalable principle for future HQNN systems.
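To make the notion of Pareto-optimal solutions concrete, here is a minimal sketch of filtering candidate architectures on two objectives, higher accuracy and lower FLOPs. The candidate names and numbers are invented for illustration, and this is a plain dominance filter, not the genetic algorithm used by FAQNAS.

```python
def pareto_front(candidates):
    """Return the non-dominated candidates.

    candidates: list of (name, accuracy, flops) tuples.
    A candidate is dominated if another one is at least as accurate and at
    least as cheap, and strictly better on one of the two objectives.
    """
    front = []
    for name, acc, flops in candidates:
        dominated = any(
            other_acc >= acc
            and other_flops <= flops
            and (other_acc > acc or other_flops < flops)
            for _, other_acc, other_flops in candidates
        )
        if not dominated:
            front.append((name, acc, flops))
    return front


# Hypothetical architectures: (name, accuracy, FLOPs)
archs = [
    ("a", 0.90, 100),
    ("b", 0.85, 50),
    ("c", 0.80, 80),   # dominated by "b"
    ("d", 0.90, 120),  # dominated by "a"
]
print(pareto_front(archs))  # [('a', 0.9, 100), ('b', 0.85, 50)]
```

Candidates on the front represent different accuracy/cost trade-offs; choosing among them depends on the compute budget.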

RobQFL: Robust Quantum Federated Learning in Adversarial Environment

Sep 05, 2025

Abstract:Quantum Federated Learning (QFL) merges privacy-preserving federation with quantum computing gains, yet its resilience to adversarial noise is unknown. We first show that QFL is as fragile as centralized quantum learning. We propose Robust Quantum Federated Learning (RobQFL), embedding adversarial training directly into the federated loop. RobQFL exposes tunable axes: client coverage $\gamma$ (0-100%), perturbation scheduling (fixed-$\varepsilon$ vs $\varepsilon$-mixes), and optimization (fine-tune vs scratch), and distils the resulting $\gamma \times \varepsilon$ surface into two metrics: Accuracy-Robustness Area and Robustness Volume. On 15-client simulations with MNIST and Fashion-MNIST, IID and Non-IID conditions, training only 20-50% clients adversarially boosts $\varepsilon \leq 0.1$ accuracy $\sim$15 pp at $< 2$ pp clean-accuracy cost; fine-tuning adds 3-5 pp. With $\geq$75% coverage, a moderate $\varepsilon$-mix is optimal, while high-$\varepsilon$ schedules help only at 100% coverage. Label-sorted non-IID splits halve robustness, underscoring data heterogeneity as a dominant risk.

HQNN-FSP: A Hybrid Classical-Quantum Neural Network for Regression-Based Financial Stock Market Prediction

Mar 19, 2025

Abstract:Financial time-series forecasting remains a challenging task due to complex temporal dependencies and market fluctuations. This study explores the potential of hybrid quantum-classical approaches to assist in financial trend prediction by leveraging quantum resources for improved feature representation and learning. A custom Quantum Neural Network (QNN) regressor is introduced, designed with a novel ansatz tailored for financial applications. Two hybrid optimization strategies are proposed: (1) a sequential approach where classical recurrent models (RNN/LSTM) extract temporal dependencies before quantum processing, and (2) a joint learning framework that optimizes classical and quantum parameters simultaneously. Systematic evaluation using TimeSeriesSplit, k-fold cross-validation, and predictive error analysis highlights the ability of these hybrid models to integrate quantum computing into financial forecasting workflows. The findings demonstrate how quantum-assisted learning can contribute to financial modeling, offering insights into the practical role of quantum resources in time-series analysis.

QUIET-SR: Quantum Image Enhancement Transformer for Single Image Super-Resolution

Mar 11, 2025

Abstract:Recent advancements in Single-Image Super-Resolution (SISR) using deep learning have significantly improved image restoration quality. However, the high computational cost of processing high-resolution images due to the large number of parameters in classical models, along with the scalability challenges of quantum algorithms for image processing, remains a major obstacle. In this paper, we propose the Quantum Image Enhancement Transformer for Super-Resolution (QUIET-SR), a hybrid framework that extends the Swin transformer architecture with a novel shifted quantum window attention mechanism, built upon variational quantum neural networks. QUIET-SR effectively captures complex residual mappings between low-resolution and high-resolution images, leveraging quantum attention mechanisms to enhance feature extraction and image restoration while requiring a minimal number of qubits, making it suitable for the Noisy Intermediate-Scale Quantum (NISQ) era. We evaluate our framework in MNIST (30.24 PSNR, 0.989 SSIM), FashionMNIST (29.76 PSNR, 0.976 SSIM) and the MedMNIST dataset collection, demonstrating that QUIET-SR achieves PSNR and SSIM scores comparable to state-of-the-art methods while using fewer parameters. These findings highlight the potential of scalable variational quantum machine learning models for SISR, marking a step toward practical quantum-enhanced image super-resolution.

PennyLang: Pioneering LLM-Based Quantum Code Generation with a Novel PennyLane-Centric Dataset

Mar 04, 2025

Abstract:Large Language Models (LLMs) offer remarkable capabilities in code generation, natural language processing, and domain-specific reasoning. Their potential in aiding quantum software development remains underexplored, particularly for the PennyLane framework, a leading platform for hybrid quantum-classical computing. To address this gap, we introduce a novel, high-quality dataset comprising 3,347 PennyLane-specific code samples of quantum circuits and their contextual descriptions, specifically curated to train/fine-tune LLM-based quantum code assistance. Our key contributions are threefold: (1) the automatic creation and open-source release of a comprehensive PennyLane dataset leveraging quantum computing textbooks, official documentation, and open-source repositories; (2) the development of a systematic methodology for data refinement, annotation, and formatting to optimize LLM training efficiency; and (3) a thorough evaluation, based on a Retrieval-Augmented Generation (RAG) framework, demonstrating the effectiveness of our dataset in streamlining PennyLane code generation and improving quantum development workflows. Compared to existing efforts that predominantly focus on Qiskit, our dataset significantly broadens the spectrum of quantum frameworks covered in AI-driven code assistance. By bridging this gap and providing reproducible dataset-creation methodologies, we aim to advance the field of AI-assisted quantum programming, making quantum computing more accessible to both newcomers and experienced developers.

QFAL: Quantum Federated Adversarial Learning

Feb 28, 2025

Abstract:Quantum federated learning (QFL) merges the privacy advantages of federated systems with the computational potential of quantum neural networks (QNNs), yet its vulnerability to adversarial attacks remains poorly understood. This work pioneers the integration of adversarial training into QFL, proposing a robust framework, quantum federated adversarial learning (QFAL), where clients collaboratively defend against perturbations by combining local adversarial example generation with federated averaging (FedAvg). We systematically evaluate the interplay between three critical factors: client count (5, 10, 15), adversarial training coverage (0-100%), and adversarial attack perturbation strength (epsilon = 0.01-0.5), using the MNIST dataset. Our experimental results show that while fewer clients often yield higher clean-data accuracy, larger federations can more effectively balance accuracy and robustness when partially adversarially trained. Notably, even limited adversarial coverage (e.g., 20%-50%) can significantly improve resilience to moderate perturbations, though at the cost of reduced baseline performance. Conversely, full adversarial training (100%) may regain high clean accuracy but is vulnerable under stronger attacks. These findings underscore an inherent trade-off between robust and standard objectives, which is further complicated by quantum-specific factors. We conclude that a carefully chosen combination of client count and adversarial coverage is critical for mitigating adversarial vulnerabilities in QFL. Moreover, we highlight opportunities for future research, including adaptive adversarial training schedules, more diverse quantum encoding schemes, and personalized defense strategies to further enhance the robustness-accuracy trade-off in real-world quantum federated environments.
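The federated averaging step mentioned above can be sketched as a sample-weighted mean of client parameter vectors, which is the standard form of FedAvg. The abstract does not spell out the aggregation weights, so weighting by local dataset size is an assumption here, as are the function name and the toy values.

```python
def fed_avg(client_params, client_sizes):
    """Aggregate client parameter vectors by a sample-weighted average.

    client_params: list of equal-length lists of floats, one per client
        (e.g. flattened QNN rotation angles after local training).
    client_sizes: number of local training samples per client (assumed to be
        the aggregation weight, as in standard FedAvg).
    """
    total = sum(client_sizes)
    dim = len(client_params[0])
    return [
        sum(params[i] * n for params, n in zip(client_params, client_sizes)) / total
        for i in range(dim)
    ]


# Two hypothetical clients; the second holds three times as much data.
global_params = fed_avg([[1.0, 2.0], [3.0, 4.0]], [1, 3])
print(global_params)  # [2.5, 3.5]
```

In an adversarially trained federation, some clients would produce their local parameters from adversarial examples before this aggregation step, while the averaging itself stays unchanged.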
