Nathan Michael

Carnegie Mellon University

Autonomous Cave Surveying with an Aerial Robot

Mar 31, 2020

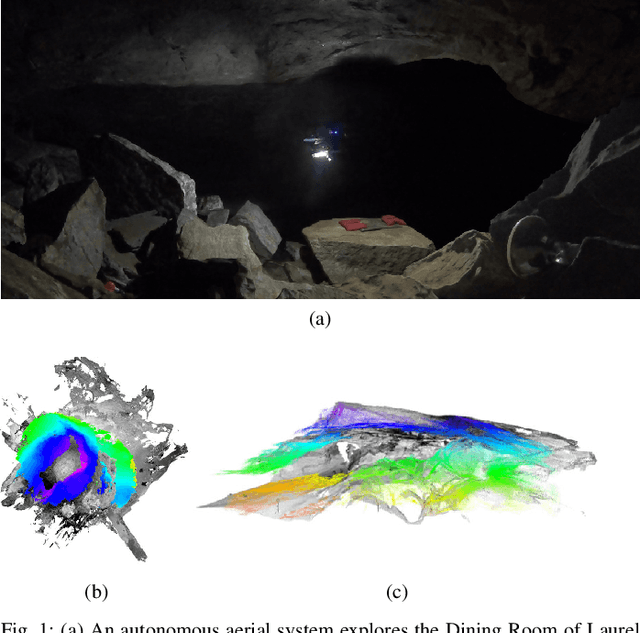

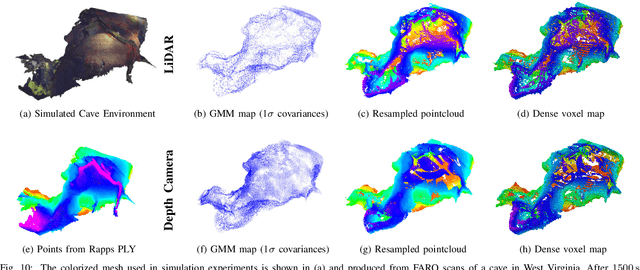

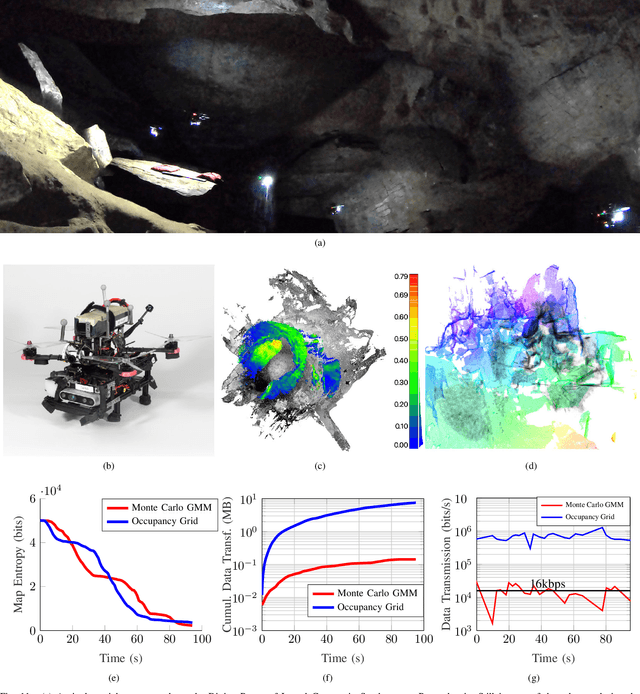

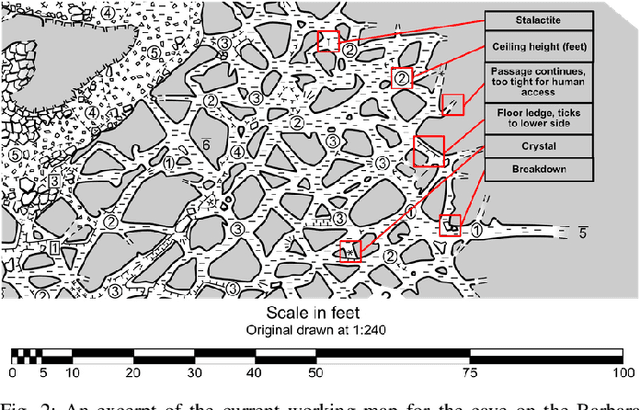

Abstract: This paper presents a method for cave surveying in complete darkness with an autonomous aerial vehicle equipped with a depth camera for mapping, a downward-facing camera for state estimation, and forward and downward lights. Traditional methods of cave surveying are labor-intensive and dangerous due to the risk of hypothermia when collecting data over extended periods of time in cold and damp environments, the risk of injury when operating in darkness in rocky or muddy environments, and the potential structural instability of the subterranean environment. Robots could be leveraged to reduce risk to human surveyors, but undeveloped caves are challenging environments in which to operate due to low-bandwidth or nonexistent communications infrastructure. The potential for communications dropouts motivates autonomy in this context. Because the topography of the environment may not be known a priori, it is advantageous for human operators to receive real-time feedback of high-resolution map data that encodes both large and small passageways. Given this capability, directed exploration, where human operators transmit guidance to the autonomous robot to prioritize certain leads over others, lies within the realm of the possible. Few state-of-the-art, high-resolution perceptual modeling techniques quantify the time to transfer the model across low-bandwidth, high-reliability communications channels such as radio. To bridge this gap in the state of the art, this work compactly represents sensor observations as Gaussian mixture models and maintains a local occupancy grid map for a motion planner that greedily maximizes an information-theoretic objective function. The methodology is extensively evaluated in long-duration simulations on an embedded PC and deployed to an aerial system in Laurel Caverns, a commercially owned and operated cave in Southwestern Pennsylvania, USA.

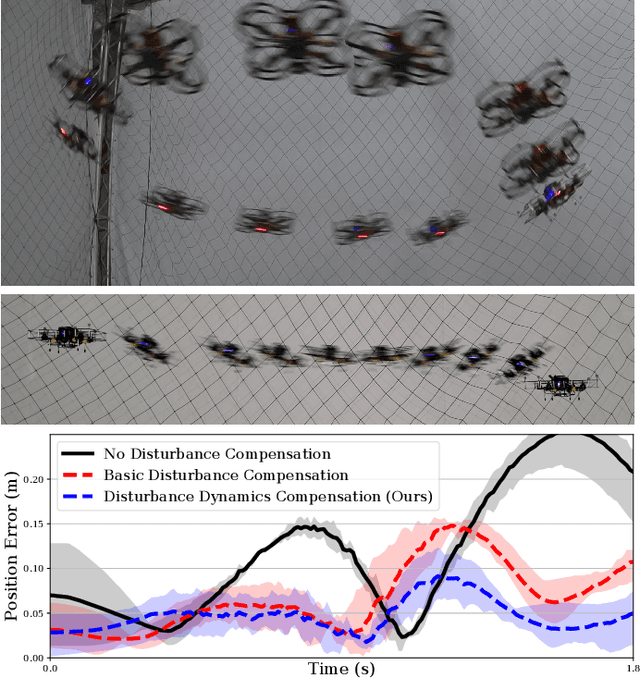

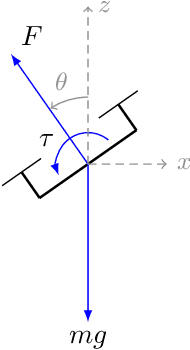

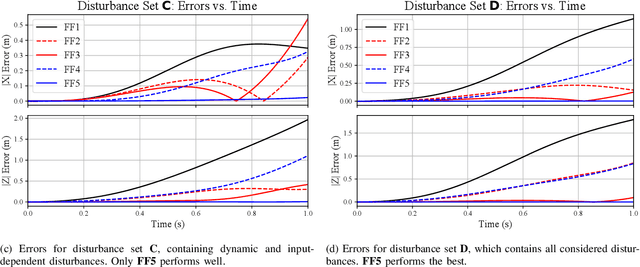

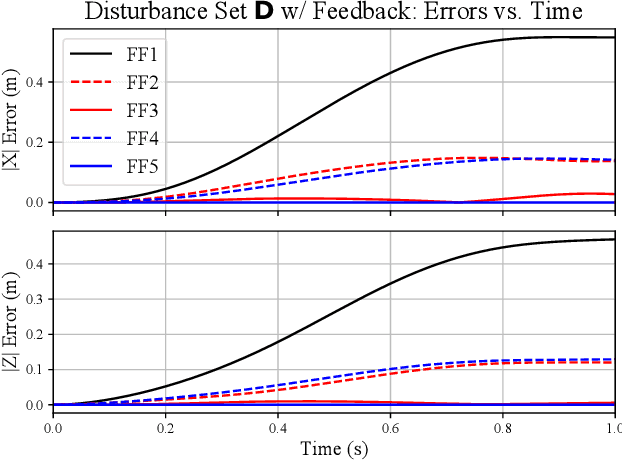

Inverting Learned Dynamics Models for Aggressive Multirotor Control

May 31, 2019

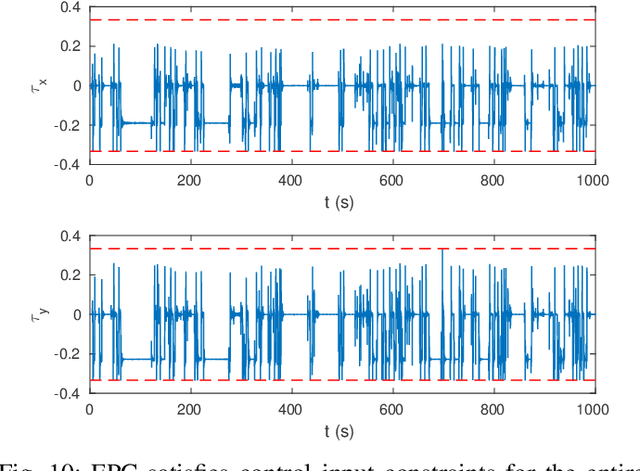

Abstract: We present a control strategy that applies inverse dynamics to a learned acceleration error model for accurate multirotor control input generation. This allows us to retain accurate trajectory and control input generation despite the presence of exogenous disturbances and modeling errors. Although accurate control input generation is traditionally possible when combined with parameter learning-based techniques, we propose a method that can do so while solving the relatively easier non-parametric model learning problem. We show that our technique is able to compensate for a larger class of model disturbances than traditional techniques can and we show reduced tracking error while following trajectories demanding accelerations of more than 7 m/s^2 in multirotor simulation and hardware experiments.

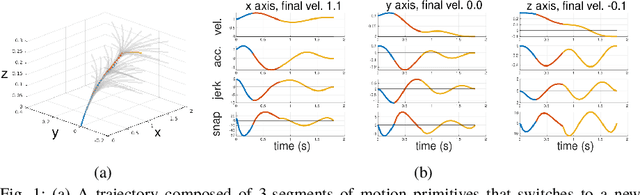

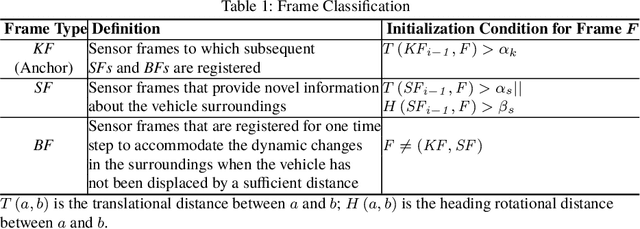

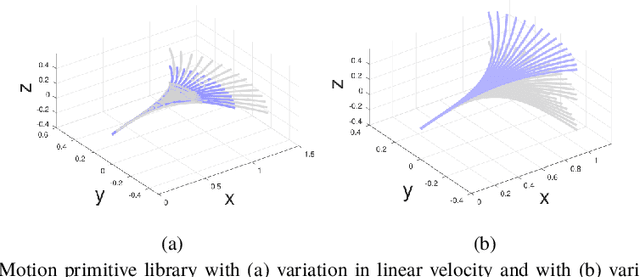

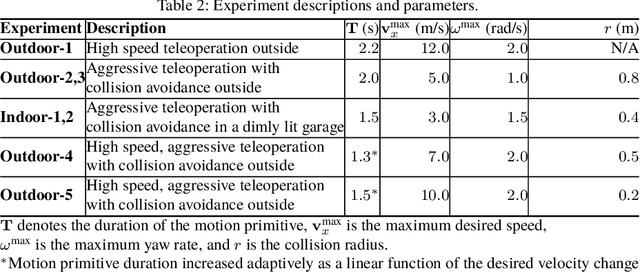

Fast and Agile Vision-Based Flight with Teleoperation and Collision Avoidance on a Multirotor

May 31, 2019

Abstract: We present a multirotor architecture capable of aggressive autonomous flight and collision-free teleoperation in unstructured, GPS-denied environments. The proposed system enables aggressive and safe autonomous flight around clutter by integrating recent advancements in visual-inertial state estimation and teleoperation. Our teleoperation framework maps user inputs onto smooth and dynamically feasible motion primitives. Collision-free trajectories are ensured by querying a locally consistent map that is incrementally constructed from forward-facing depth observations. Our system enables a non-expert operator to safely navigate a multirotor around obstacles at speeds of 10 m/s. We achieve autonomous flights at speeds exceeding 12 m/s and accelerations exceeding 12 m/s^2 in a series of outdoor field experiments that validate our approach.

RaD-VIO: Rangefinder-aided Downward Visual-Inertial Odometry

Oct 19, 2018

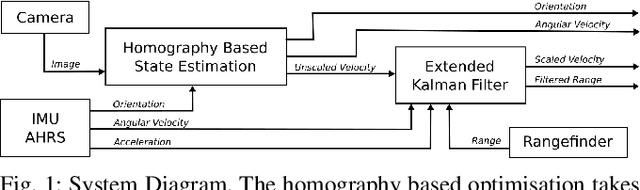

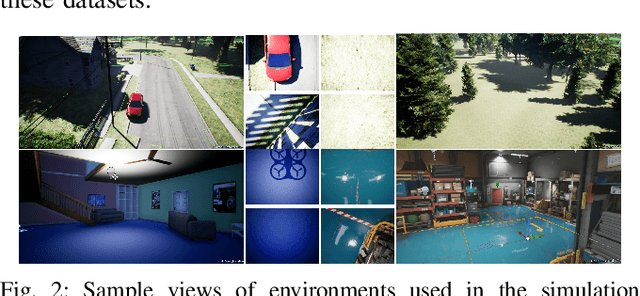

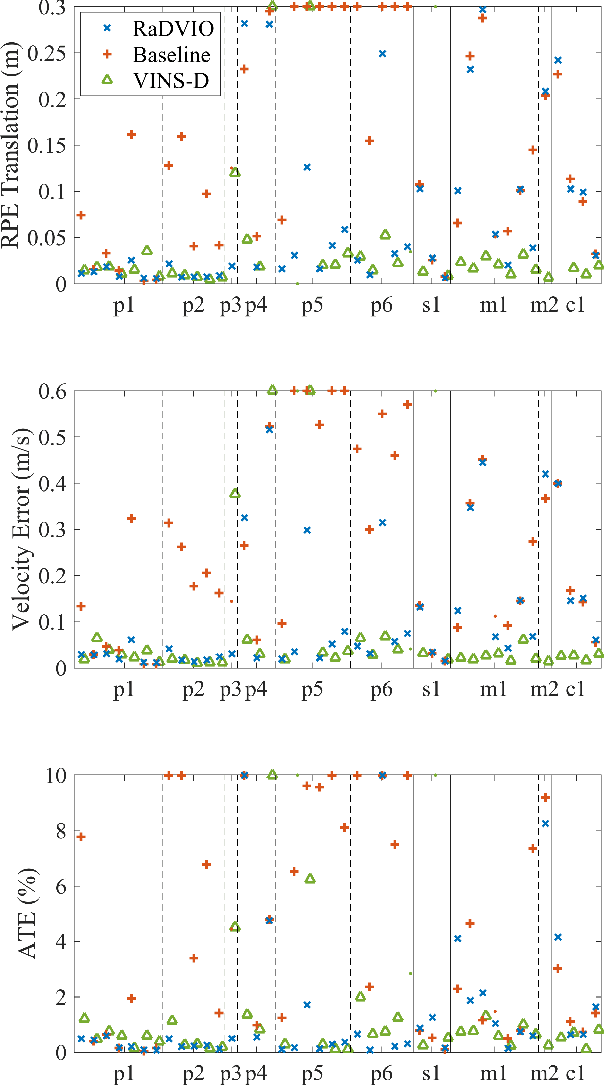

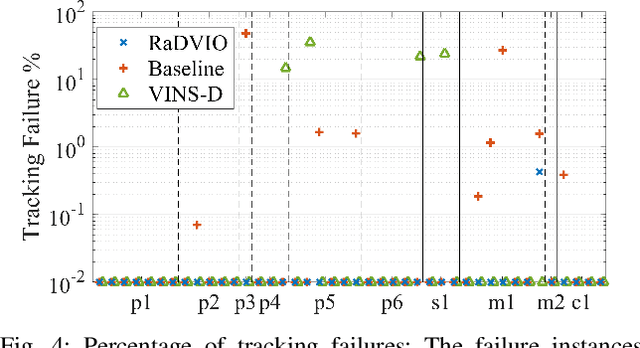

Abstract: State-of-the-art forward-facing monocular visual-inertial odometry algorithms are often brittle in practice, especially whilst dealing with initialisation and motion in directions that render the state unobservable. In such cases, having a reliable complementary odometry algorithm enables robust and resilient flight. Using the common local planarity assumption, we present a fast, dense, and direct frame-to-frame visual-inertial odometry algorithm for downward-facing cameras that minimises a joint cost function involving a homography-based photometric cost and an IMU regularisation term. Via extensive evaluation in a variety of scenarios, we demonstrate performance superior to existing state-of-the-art downward-facing odometry algorithms for Micro Aerial Vehicles (MAVs).

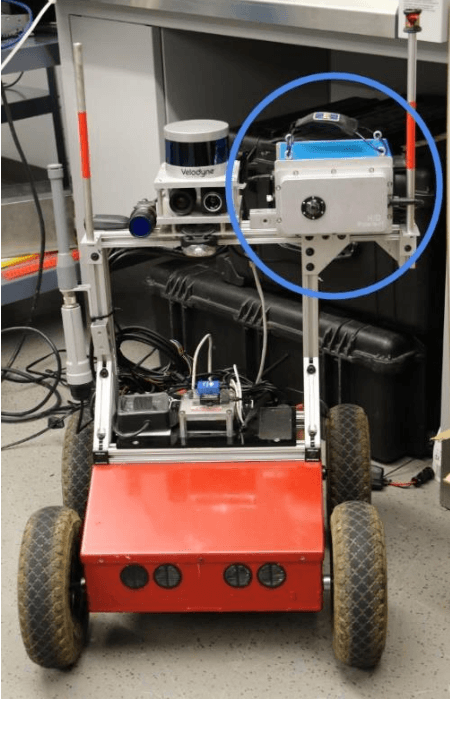

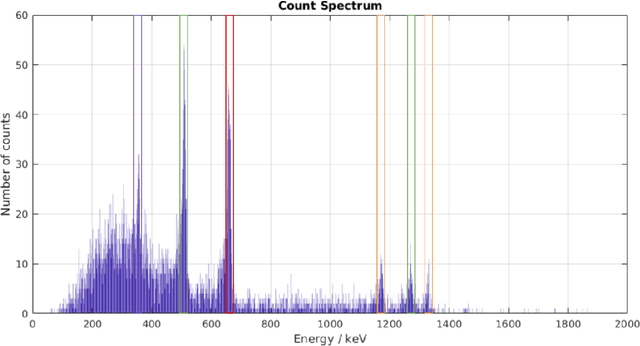

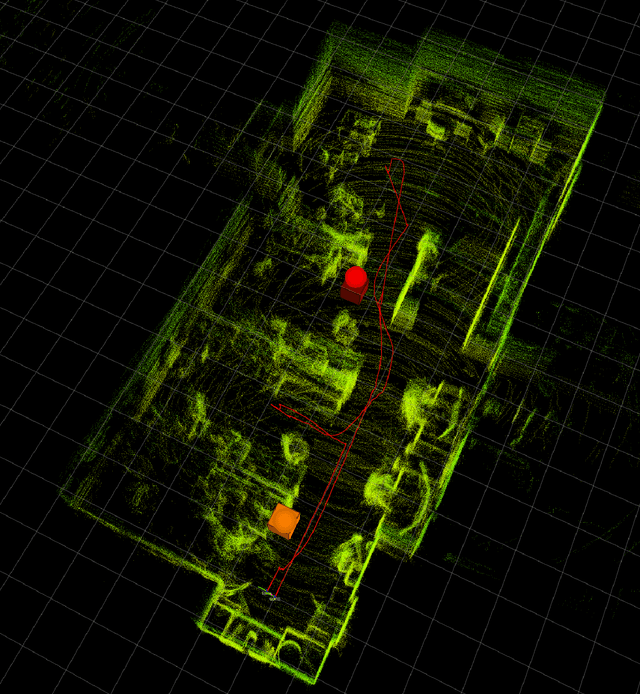

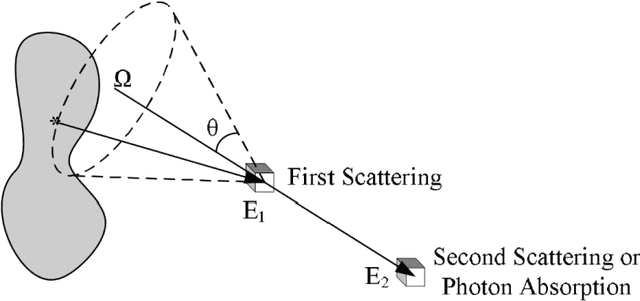

3-D Volumetric Gamma-ray Imaging and Source Localization with a Mobile Robot

Mar 31, 2018

Abstract: To date, radiation detection has largely been a manual inspection process with point sensors such as Geiger-Müller counters and scintillation spectrometers. While their observations of source proximity prove useful, they lack the directional information necessary for efficient source localization and characterization in cluttered environments with multiple radiation sources. The recent commercialization of Compton gamma cameras provides directional information to the broader radiation detection community for the first time. This paper presents the integration of a Compton gamma camera with a self-localizing ground robot for accurate 3D radiation mapping. Using the position and orientation of the robot, radiation images from the gamma camera are accumulated over a traversed path in a shared frame of reference to construct a consistent voxel grid-based radiation map. The peaks of the map at pre-specified energy windows are selected as the source location estimates, which are compared to the ground truth source locations. The proposed approach localizes multiple sources to within an average of 0.2 m in two laboratory environments of 5 x 4 m^2 and 14 x 6 m^2.

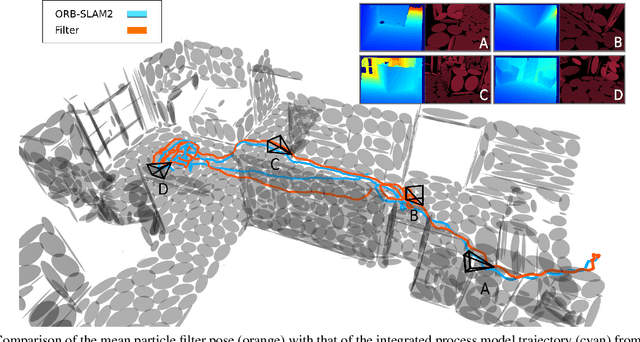

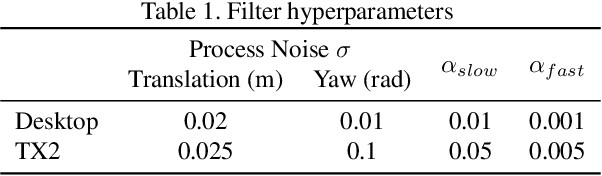

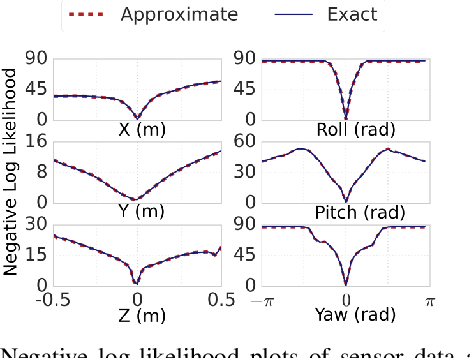

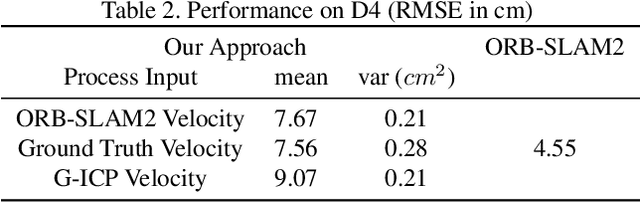

Fast Monte-Carlo Localization on Aerial Vehicles using Approximate Continuous Belief Representations

Mar 29, 2018

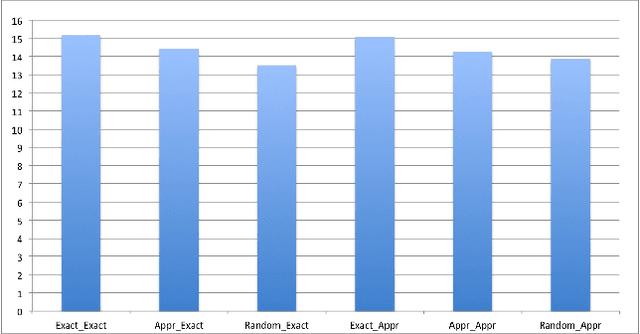

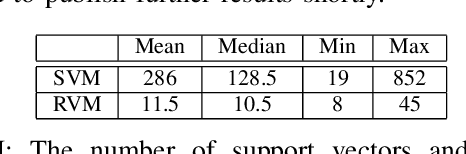

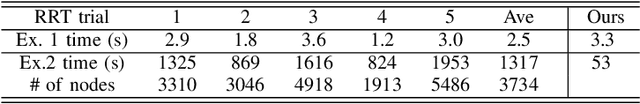

Abstract: Size, weight, and power constrained platforms impose constraints on computational resources that introduce unique challenges in implementing localization algorithms. We present a framework to perform fast localization on such platforms enabled by the compressive capabilities of Gaussian Mixture Model representations of point cloud data. Given raw structural data from a depth sensor and pitch and roll estimates from an on-board attitude reference system, a multi-hypothesis particle filter localizes the vehicle by exploiting the likelihood of the data originating from the mixture model. We analyze this likelihood in the vicinity of the ground truth pose and detail its use in a particle filter-based vehicle localization strategy. We then present results of real-time implementations on a desktop system and an off-the-shelf embedded platform that outperform localization results from running a state-of-the-art algorithm in the same environment.

Proceedings of the 1st International Workshop on Robot Learning and Planning

Oct 08, 2016

Abstract: Proceedings of the 1st International Workshop on Robot Learning and Planning (RLP 2016)
