Mohamed Abouelenien

CliniDial: A Naturally Occurring Multimodal Dialogue Dataset for Team Reflection in Action During Clinical Operation

Jun 15, 2025Abstract:In clinical operations, teamwork can be the crucial factor that determines the final outcome. Prior studies have shown that sufficient collaboration is the key factor that determines the outcome of an operation. To understand how the team practices teamwork during the operation, we collected CliniDial from simulations of medical operations. CliniDial includes the audio data and its transcriptions, the simulated physiology signals of the patient manikins, and how the team operates from two camera angles. We annotate behavior codes following an existing framework to understand the teamwork process for CliniDial. We pinpoint three main characteristics of our dataset, including its label imbalances, rich and natural interactions, and multiple modalities, and conduct experiments to test existing LLMs' capabilities on handling data with these characteristics. Experimental results show that CliniDial poses significant challenges to the existing models, inviting future effort on developing methods that can deal with real-world clinical data. We open-source the codebase at https://github.com/MichiganNLP/CliniDial

Deception Detection from Linguistic and Physiological Data Streams Using Bimodal Convolutional Neural Networks

Nov 18, 2023

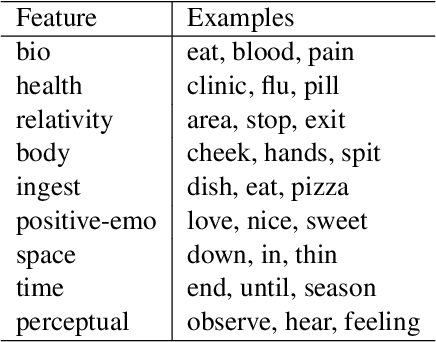

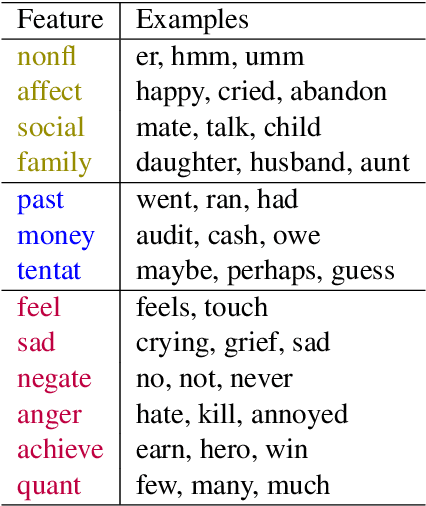

Abstract:Deception detection is gaining increasing interest due to ethical and security concerns. This paper explores the application of convolutional neural networks for the purpose of multimodal deception detection. We use a dataset built by interviewing 104 subjects about two topics, with one truthful and one falsified response from each subject about each topic. In particular, we make three main contributions. First, we extract linguistic and physiological features from this data to train and construct the neural network models. Second, we propose a fused convolutional neural network model using both modalities in order to achieve an improved overall performance. Third, we compare our new approach with earlier methods designed for multimodal deception detection. We find that our system outperforms regular classification methods; our results indicate the feasibility of using neural networks for deception detection even in the presence of limited amounts of data.

MUSER: MUltimodal Stress Detection using Emotion Recognition as an Auxiliary Task

May 17, 2021

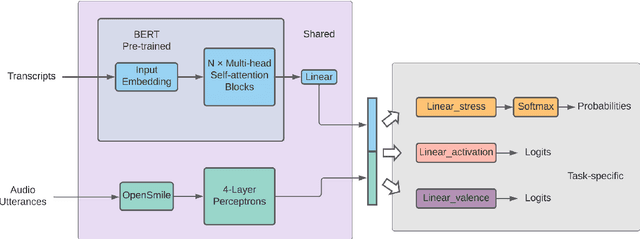

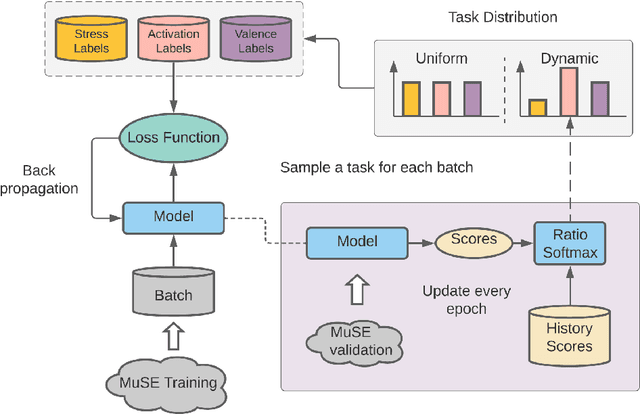

Abstract:The capability to automatically detect human stress can benefit artificial intelligent agents involved in affective computing and human-computer interaction. Stress and emotion are both human affective states, and stress has proven to have important implications on the regulation and expression of emotion. Although a series of methods have been established for multimodal stress detection, limited steps have been taken to explore the underlying inter-dependence between stress and emotion. In this work, we investigate the value of emotion recognition as an auxiliary task to improve stress detection. We propose MUSER -- a transformer-based model architecture and a novel multi-task learning algorithm with speed-based dynamic sampling strategy. Evaluations on the Multimodal Stressed Emotion (MuSE) dataset show that our model is effective for stress detection with both internal and external auxiliary tasks, and achieves state-of-the-art results.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge