Maximilian Arnold

Dynamic Tool Dependency Retrieval for Efficient Function Calling

Dec 23, 2025

Abstract: Function calling agents powered by Large Language Models (LLMs) select external tools to automate complex tasks. On-device agents typically use a retrieval module to select relevant tools, improving performance and reducing context length. However, existing retrieval methods rely on static and limited inputs, failing to capture multi-step tool dependencies and evolving task context. This limitation often introduces irrelevant tools that mislead the agent, degrading efficiency and accuracy. We propose Dynamic Tool Dependency Retrieval (DTDR), a lightweight retrieval method that conditions on both the initial query and the evolving execution context. DTDR models tool dependencies from function calling demonstrations, enabling adaptive retrieval as plans unfold. We benchmark DTDR against state-of-the-art retrieval methods across multiple datasets and LLM backbones, evaluating retrieval precision, downstream task accuracy, and computational efficiency. Additionally, we explore strategies to integrate retrieved tools into prompts. Our results show that dynamic tool retrieval improves function calling success rates by between $23\%$ and $104\%$ compared to state-of-the-art static retrievers.

Neural 5G Indoor Localization with IMU Supervision

Feb 15, 2024

Abstract: Radio signals are well suited for user localization because they are ubiquitous, can operate in the dark, and maintain privacy. Many prior works learn mappings between channel state information (CSI) and position in a fully supervised manner. However, that approach relies on position labels, which are very expensive to acquire. In this work, this requirement is relaxed by using pseudo-labels during deployment, which are calculated from an inertial measurement unit (IMU). We propose practical algorithms for IMU double integration and training of the localization system. We show decimeter-level accuracy on simulated and challenging real data of 5G measurements. Our IMU-supervised method performs similarly to the fully supervised approach but requires much less effort to deploy.
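To make the idea of IMU-derived pseudo-labels concrete, the following is a minimal sketch of naive double integration of acceleration into position. It is not the algorithm proposed in the paper: the function name `double_integrate`, the 1-D setting, the fixed sample interval `dt`, and the trapezoidal rule are all assumptions chosen for illustration.

```python
from typing import List, Sequence


def double_integrate(accel: Sequence[float], dt: float,
                     v0: float = 0.0, p0: float = 0.0) -> List[float]:
    """Integrate 1-D acceleration samples twice with the trapezoidal rule.

    Hypothetical helper for illustration only. Returns one position per
    input sample (an empty list for empty input), starting at p0.
    """
    if not accel:
        return []
    positions = [p0]
    v_prev, p_prev = v0, p0
    for a_prev, a_next in zip(accel, accel[1:]):
        # First integration: acceleration -> velocity.
        v_next = v_prev + 0.5 * (a_prev + a_next) * dt
        # Second integration: velocity -> position.
        p_next = p_prev + 0.5 * (v_prev + v_next) * dt
        positions.append(p_next)
        v_prev, p_prev = v_next, p_next
    return positions


if __name__ == "__main__":
    # Constant acceleration of 2 m/s^2 sampled every 0.1 s for 1 s.
    track = double_integrate([2.0] * 11, dt=0.1)
    print(track[-1])
```

Naive integration of this kind accumulates error quickly: a constant accelerometer bias of 0.01 m/s² alone grows to 0.5 · 0.01 · 60² = 18 m of position error after 60 s, which is why practical schemes need more than this sketch.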

Vision-Assisted Digital Twin Creation for mmWave Beam Management

Jan 31, 2024

Abstract: In the context of communication networks, digital twin technology provides a means to replicate the radio frequency (RF) propagation environment as well as the system behaviour, allowing for a way to optimize the performance of a deployed system based on simulations. One of the key challenges in the application of Digital Twin technology to mmWave systems is the prevalent channel simulators' stringent requirements on the accuracy of the 3D Digital Twin, reducing the feasibility of the technology in real applications. We propose a practical Digital Twin creation pipeline and a channel simulator, relying only on a single mounted camera and position information. We demonstrate the performance benefits compared to methods that do not explicitly model the 3D environment, on downstream sub-tasks in beam acquisition, using the real-world dataset of the DeepSense6G challenge.

Attacking and Defending Deep-Learning-Based Off-Device Wireless Positioning Systems

Nov 15, 2022

Abstract: Localization services for wireless devices play an increasingly important role and a plethora of emerging services and applications already rely on precise position information. Widely used on-device positioning methods, such as the global positioning system, enable accurate outdoor positioning and provide the users with full control over what services are allowed to access location information. To provide accurate positioning indoors or in cluttered urban scenarios without line-of-sight satellite connectivity, powerful off-device positioning systems, which process channel state information (CSI) with deep neural networks, have emerged recently. Such off-device positioning systems inherently link a user's data transmission with its localization, since accurate CSI measurements are necessary for reliable wireless communication -- this not only prevents the users from controlling who can access this information but also enables virtually everyone in the device's range to estimate its location, resulting in serious privacy and security concerns. We propose on-device attacks against off-device wireless positioning systems in multi-antenna orthogonal frequency-division multiplexing systems while minimizing the impact on quality-of-service, and we demonstrate their efficacy using measured datasets for outdoor and indoor scenarios. We also investigate defenses to counter such attack mechanisms, and we discuss the limitations and implications on protecting location privacy in future wireless communication systems.

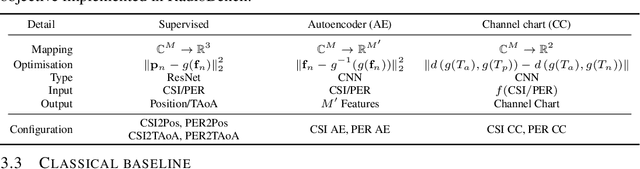

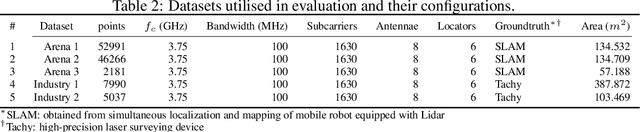

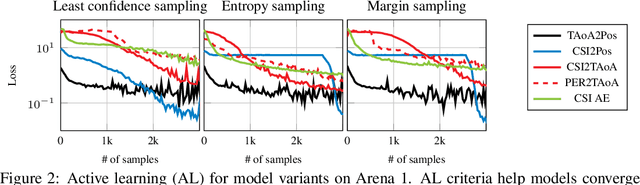

Benchmarking Learnt Radio Localisation under Distribution Shift

Oct 04, 2022

Abstract: Deploying radio frequency (RF) localisation systems invariably entails non-trivial effort, particularly for the latest learning-based breeds. There has been little prior work on characterising and comparing how learnt localiser networks can be deployed in the field under real-world RF distribution shifts. In this paper, we present RadioBench: a suite of 8 learnt localiser nets from the state-of-the-art to study and benchmark their real-world deployability, utilising five novel industry-grade datasets. We train 10k models to analyse the inner workings of these learnt localiser nets and uncover their differing behaviours across three performance axes: (i) learning, (ii) proneness to distribution shift, and (iii) localisation. We use insights gained from this analysis to recommend best practices for the deployability of learning-based RF localisation under practical constraints.

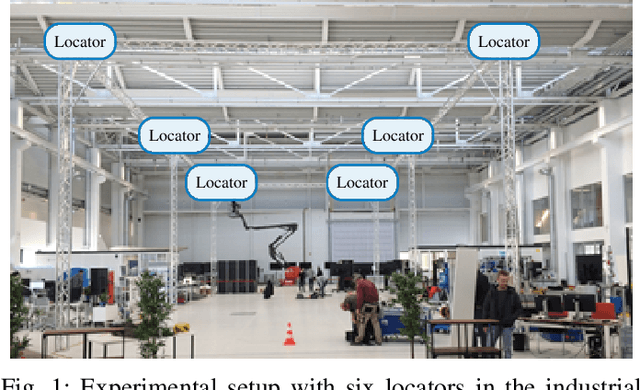

Probabilistic 5G Indoor Positioning Proof of Concept with Outlier Rejection

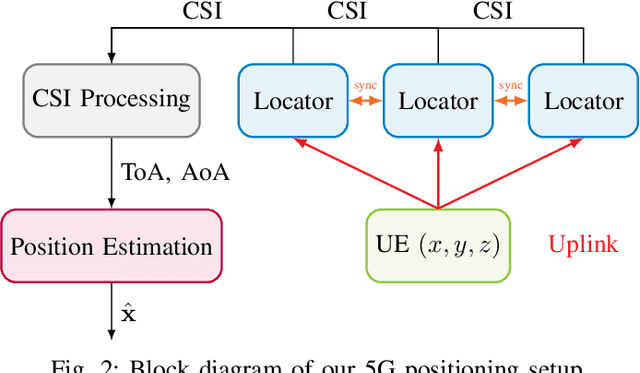

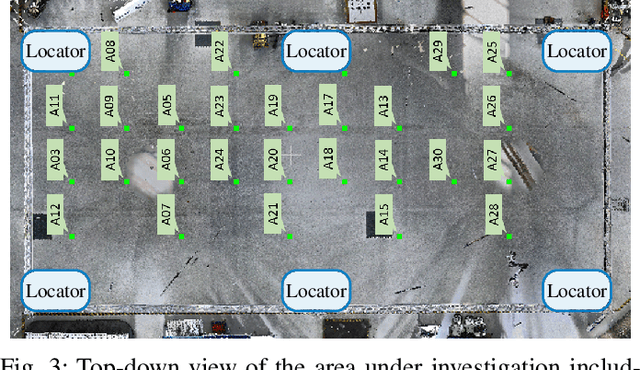

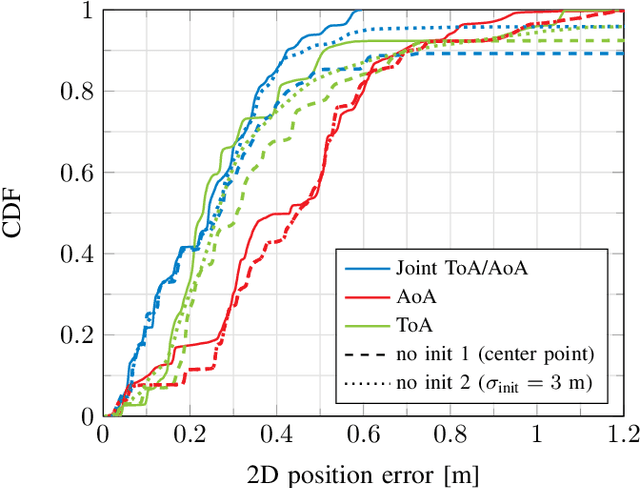

Jul 18, 2022

Abstract: The continuously increasing bandwidth and antenna aperture available in wireless networks have laid the foundation for developing competitive positioning solutions relying on communications standards and hardware. However, poor propagation conditions such as non-line-of-sight (NLOS) and rich multipath still pose many challenges due to outlier measurements that significantly degrade the positioning performance. In this work, we introduce an iterative positioning method that reweights the time of arrival (ToA) and angle of arrival (AoA) measurements originating from multiple locators in order to efficiently remove outliers. In contrast to existing approaches that typically rely on a single locator to set the time reference for the time difference of arrival (TDoA) measurements corresponding to the remaining locators, and whose measurements may be unreliable, the proposed iterative approach does not rely on a single reference locator. The resulting robust position estimate is then used to initialize a computationally efficient gradient search to perform maximum likelihood position estimation. Our proposal is validated with an experimental setup at 3.75 GHz with 5G numerology in an indoor factory scenario, achieving an error of less than 50 cm in 95% of the measurements. To the best of our knowledge, this paper describes the first proof of concept for 5G-based joint ToA and AoA localization.

* Correction of small error in Eq. (1) of accepted 2022 Joint European Conference on Networks and Communications & 6G Summit (EuCNC/6G Summit) paper, which has no impact on the final results

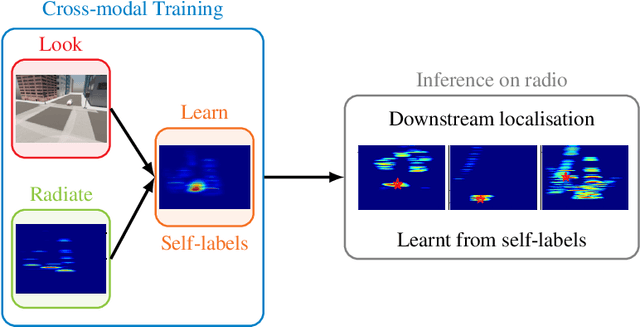

Look, Radiate, and Learn: Self-supervised Localisation via Radio-Visual Correspondence

Jun 13, 2022

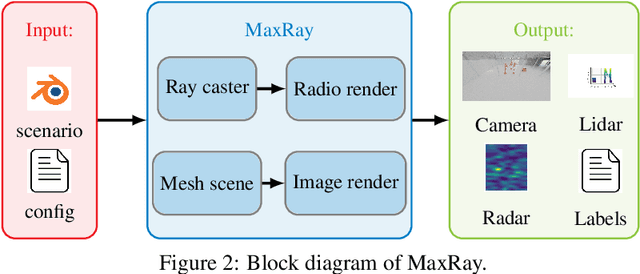

Abstract: Next generation cellular networks will implement radio sensing functions alongside customary communications, thereby enabling unprecedented worldwide sensing coverage outdoors. Deep learning has revolutionised computer vision but has had limited application to radio perception tasks, in part due to lack of systematic datasets and benchmarks dedicated to the study of the performance and promise of radio sensing. To address this gap, we present MaxRay: a synthetic radio-visual dataset and benchmark that facilitate precise target localisation in radio. We further propose to learn to localise targets in radio without supervision by extracting self-coordinates from radio-visual correspondence. We use such self-supervised coordinates to train a radio localiser network. We characterise our performance against a number of state-of-the-art baselines. Our results indicate that accurate radio target localisation can be automatically learned from paired radio-visual data without labels, which is highly relevant to empirical data. This opens the door for vast data scalability and may prove key to realising the promise of robust radio sensing atop a unified perception-communication cellular infrastructure. Dataset will be hosted on IEEE DataPort.

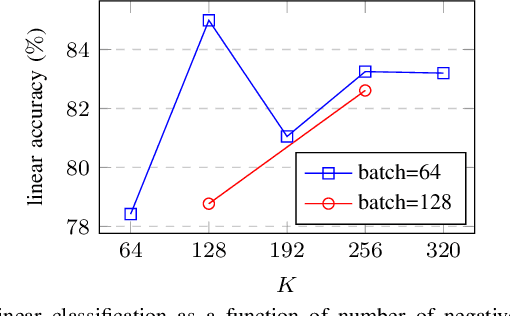

Self-Supervised Radio-Visual Representation Learning for 6G Sensing

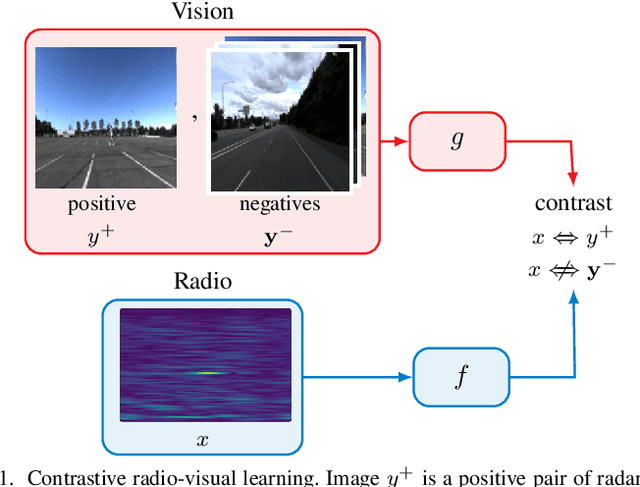

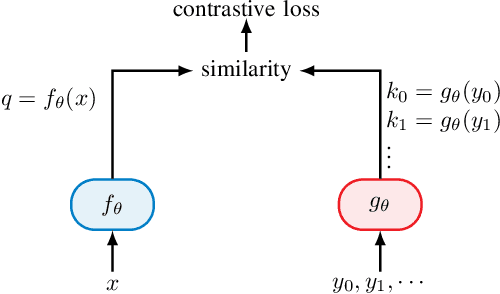

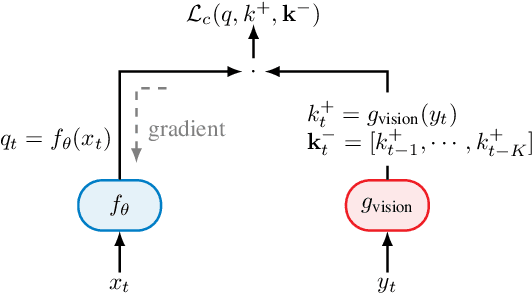

Nov 01, 2021

Abstract: In future 6G cellular networks, a joint communication and sensing protocol will allow the network to perceive the environment, opening the door for many new applications atop a unified communication-perception infrastructure. However, interpreting the sparse radio representation of sensing scenes is challenging, which hinders the potential of these emergent systems. We propose to combine radio and vision to automatically learn a radio-only sensing model with minimal human intervention. We want to build a radio sensing model that can feed on millions of uncurated data points. To this end, we leverage recent advances in self-supervised learning and formulate a new label-free radio-visual co-learning scheme, whereby vision trains radio via cross-modal mutual information. We implement and evaluate our scheme according to the common linear classification benchmark, and report qualitative and quantitative performance metrics. In our evaluation, the representation learnt by radio-visual self-supervision works well for a downstream sensing demonstrator, and outperforms its fully-supervised counterpart when less labelled data is used. This indicates that self-supervised learning could be an important enabler for future scalable radio sensing systems.

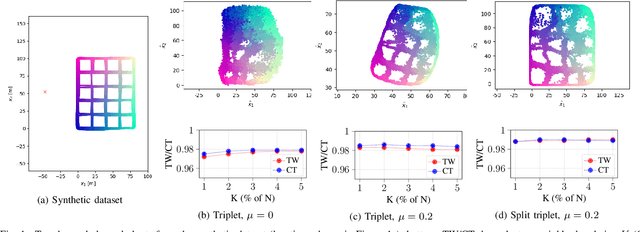

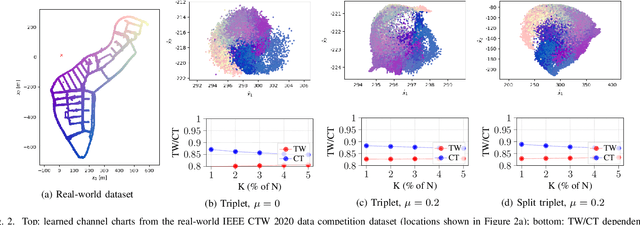

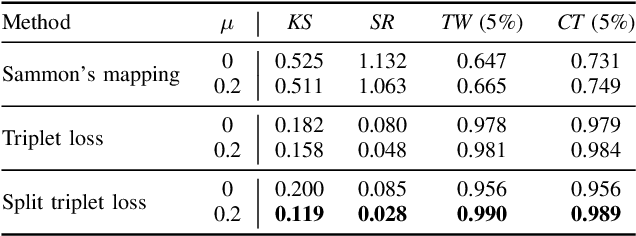

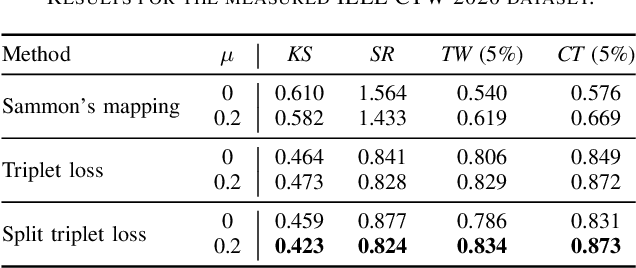

Improving Channel Charting using a Split Triplet Loss and an Inertial Regularizer

Oct 21, 2021

Abstract: Channel charting is an emerging technology that enables self-supervised pseudo-localization of user equipments by performing dimensionality reduction on large channel-state information (CSI) databases that are passively collected at infrastructure base stations or access points. In this paper, we introduce a new dimensionality reduction method specifically designed for channel charting using a novel split triplet loss, which utilizes physical information available during the CSI acquisition process. In addition, we propose a novel regularizer that exploits the physical concept of inertia, which significantly improves the quality of the learned channel charts. We provide an experimental verification of our methods using synthetic and real-world measured CSI datasets, and we demonstrate that our methods are able to outperform the state-of-the-art in channel charting based on the triplet loss.
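For readers unfamiliar with the baseline mentioned above, here is a minimal sketch of the standard triplet loss on low-dimensional embeddings. It does not implement the split triplet loss or the inertial regularizer from the paper; the function names, the Euclidean distance, and the default `margin` are assumptions for illustration, and how anchor, positive, and negative samples are chosen is left to the caller.

```python
import math
from typing import Sequence


def euclidean(u: Sequence[float], v: Sequence[float]) -> float:
    """Euclidean distance between two equal-length embedding vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def triplet_loss(anchor: Sequence[float],
                 positive: Sequence[float],
                 negative: Sequence[float],
                 margin: float = 1.0) -> float:
    """Standard triplet loss: max(0, d(a, p) - d(a, n) + margin).

    The loss is zero once the negative is at least `margin` farther
    from the anchor than the positive is.
    """
    return max(0.0,
               euclidean(anchor, positive)
               - euclidean(anchor, negative)
               + margin)


if __name__ == "__main__":
    # Positive is close to the anchor, negative is far: no penalty.
    print(triplet_loss((0.0, 0.0), (0.0, 1.0), (3.0, 4.0)))
    # Roles swapped: the embedding is penalised.
    print(triplet_loss((0.0, 0.0), (3.0, 4.0), (0.0, 1.0)))
```

Minimising this quantity over many triplets pulls embeddings of similar samples together and pushes dissimilar ones apart, which is the property a channel chart needs.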

* 6 pages, 2 figures

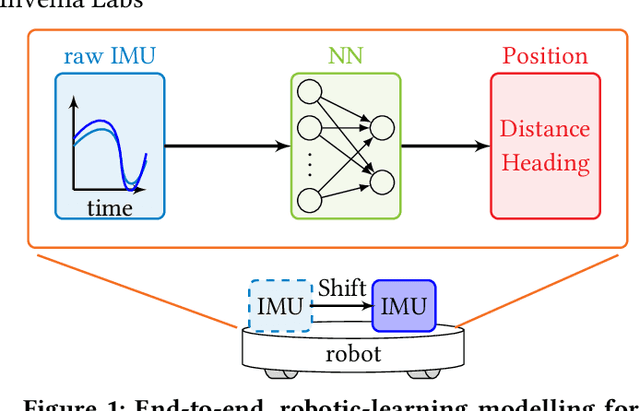

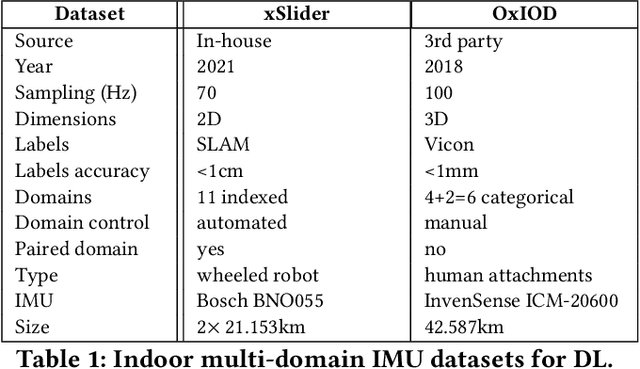

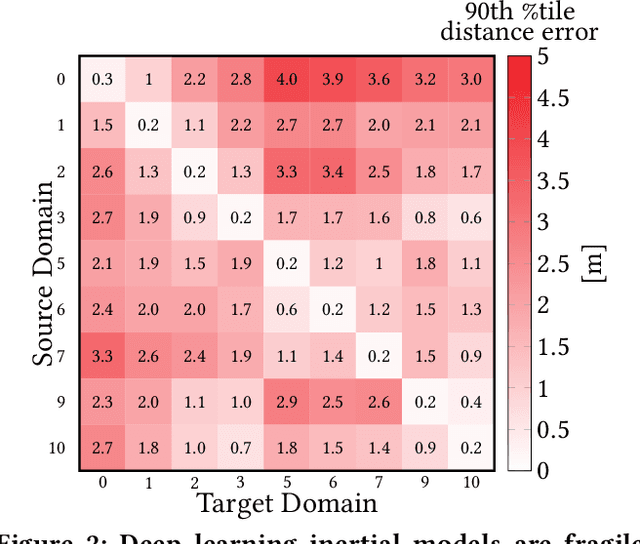

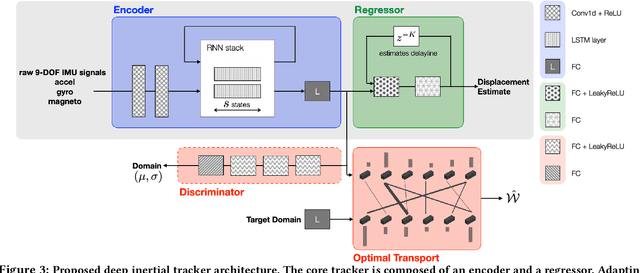

Towards Generalisable Deep Inertial Tracking via Geometry-Aware Learning

Jun 29, 2021

Abstract: Autonomous navigation in uninstrumented and unprepared environments is a fundamental demand for next generation indoor and outdoor location-based services. To bring about such ambition, a suite of collaborative sensing modalities is required in order to sustain performance irrespective of challenging dynamic conditions. Of the many modalities on offer, inertial tracking plays a key role under momentary unfavourable operational conditions owing to its independence of the surrounding environment. However, inertial tracking has traditionally (i) suffered from excessive error growth and (ii) required extensive and cumbersome tuning. Both of these issues have limited the appeal and utility of inertial tracking. In this paper, we present DIT: a novel Deep learning Inertial Tracking system that overcomes prior limitations; namely, by (i) significantly reducing tracking drift and (ii) seamlessly constructing robust and generalisable learned models. DIT describes two core contributions: (i) DIT employs a robotic platform augmented with a mechanical slider subsystem that automatically samples inertial signal variabilities arising from different sensor mounting geometries. We use the platform to curate in-house a 7.2 million sample dataset covering an aggregate distance of 21 kilometres split into 11 indexed sensor mounting geometries. (ii) DIT uses deep learning, optimal transport, and domain adaptation (DA) to create a model which is robust to variabilities in sensor mounting geometry. The overall system synthesises high-performance and generalisable inertial navigation models in an end-to-end, robotic-learning fashion. In our evaluation, DIT outperforms an industrial-grade sensor fusion baseline by 10x (90th percentile) and a state-of-the-art adversarial DA technique by >2.5x in performance (90th percentile) and >10x in training time.
