Maxime Caniot

SoftBank Robotics Europe

Adapted Pepper

Sep 08, 2020

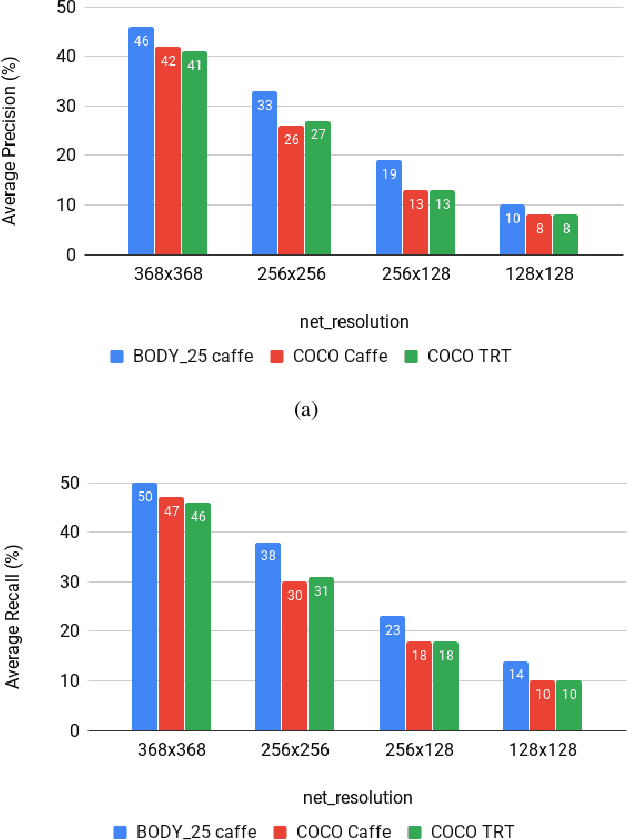

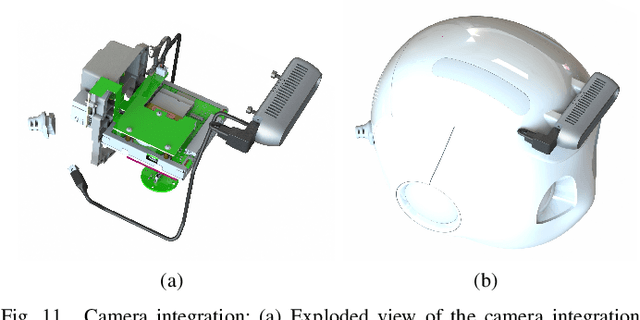

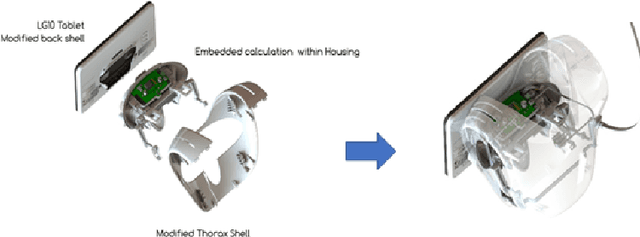

Abstract: One of the main issues in robotics is the lack of embedded computational power. State-of-the-art algorithms that provide a better understanding of the surroundings (object detection, skeleton tracking, etc.) require more and more computational power. The shortfall is more significant in mass-produced robots, whose hardware is difficult to keep in step with the growing computational requirements of these algorithms. Integrating an additional GPU overcomes this limitation. In this paper we introduce a prototype of Pepper with an embedded GPU and an additional 3D camera mounted on the robot's head and plugged into that GPU. This prototype, called Adapted Pepper, was built for the European project MuMMER (MultiModal Mall Entertainment Robot) in order to embed algorithms such as OpenPose and YOLO or to process sensor information and, in all cases, to avoid depending on the network for offloaded computation.

MuMMER: Socially Intelligent Human-Robot Interaction in Public Spaces

Sep 15, 2019

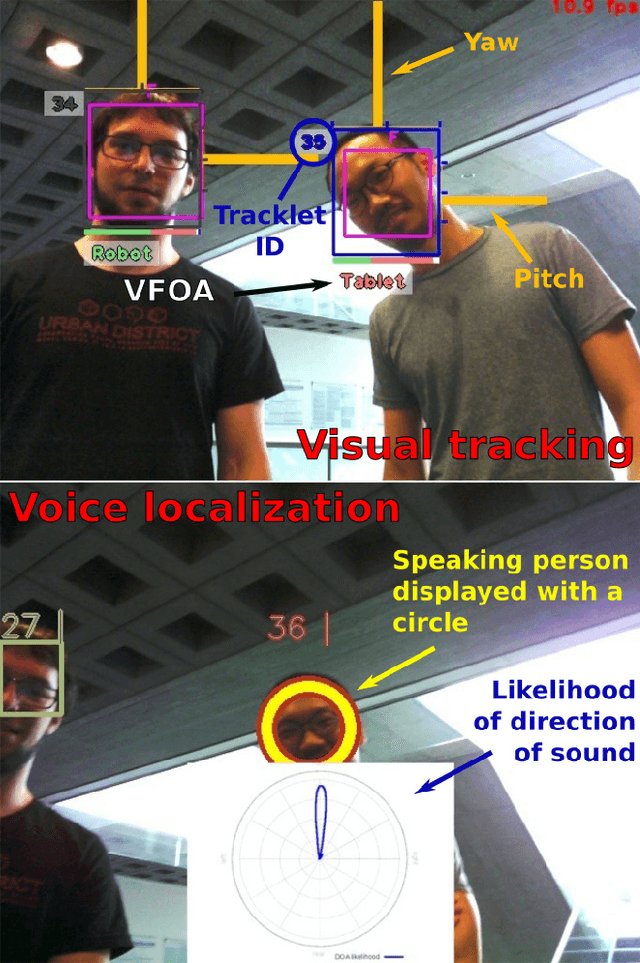

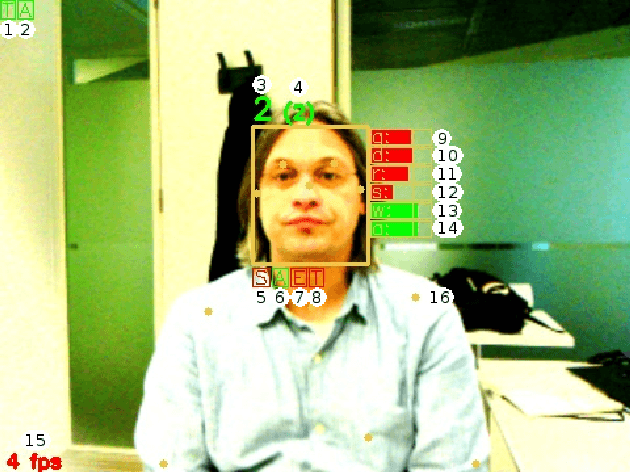

Abstract: In the EU-funded MuMMER project, we have developed a social robot designed to interact naturally and flexibly with users in public spaces such as a shopping mall. We present the latest version of the robot system developed during the project. This system encompasses audio-visual sensing, social signal processing, conversational interaction, perspective taking, geometric reasoning, and motion planning. It successfully combines all these components in an overarching framework using the Robot Operating System (ROS) and has been deployed to a shopping mall in Finland, interacting with customers. In this paper, we describe the system components, their interplay, and the resulting robot behaviours and scenarios provided at the shopping mall.

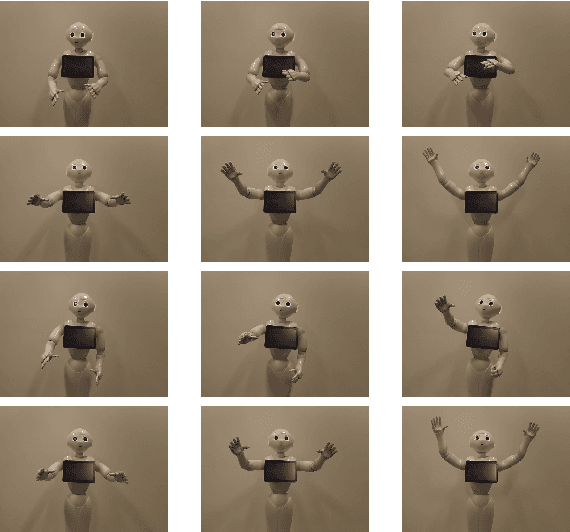

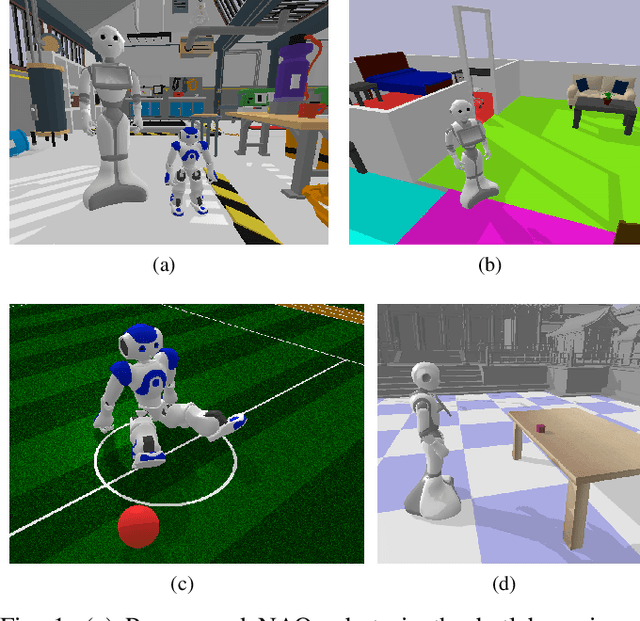

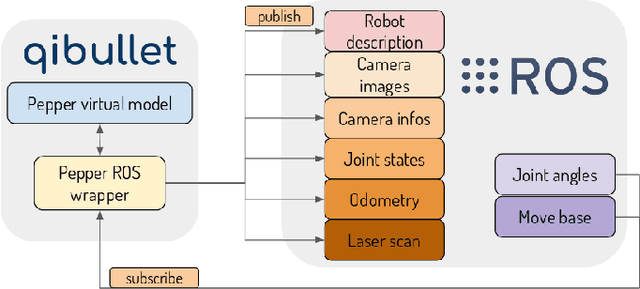

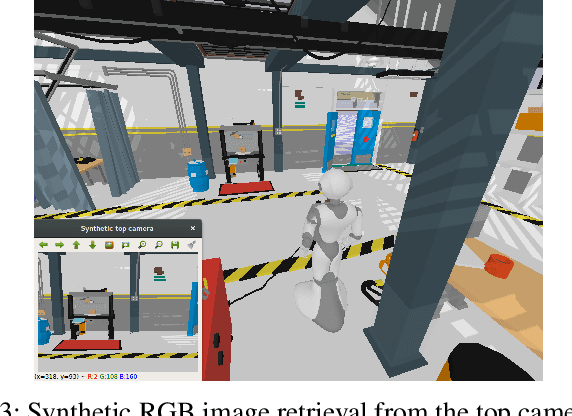

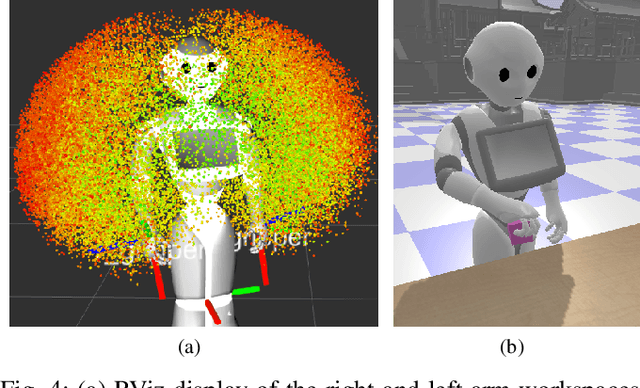

qiBullet, a Bullet-based simulator for the Pepper and NAO robots

Sep 04, 2019

Abstract: The Pepper and NAO robots are widely used for in-store advertising and education, but also as robotic platforms for research purposes. Their presence in the academic field is reflected in various publications, multiple collaborative projects, and their role as the standard platforms of two different RoboCup leagues. Developing for humanoid robots, gathering data with them, and training them can be tedious: iteratively repeating specific tasks can present risks for the robots, and some environments can be difficult to set up. Software tools that simulate complex environments and the dynamics of robots can alleviate that problem by making it possible to perform these processes on virtual models. One current drawback of the Pepper and NAO platforms is the lack of a physically accurate simulation tool for testing scenarios that involve repetitive movements and contacts with the environment on a virtual robot. In this paper, we introduce the qiBullet simulation tool, which uses the Bullet physics engine to provide such a solution for the Pepper and NAO robots.
