Kensuke Harada

Osaka University, AIST

Multi-Pen Robust Robotic 3D Drawing Using Closed-Loop Planning

Oct 01, 2020

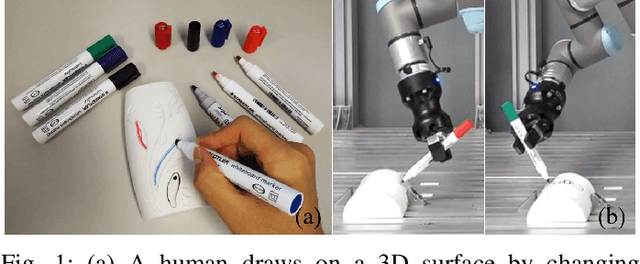

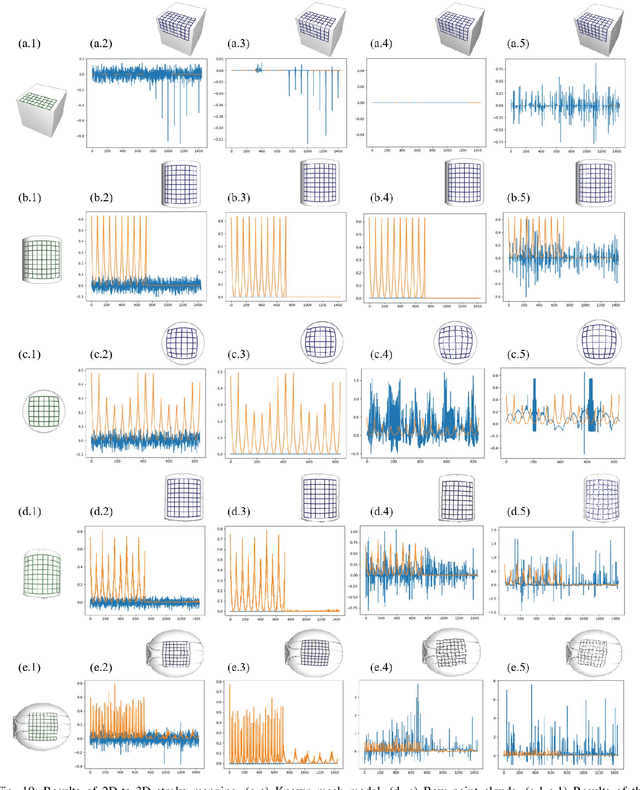

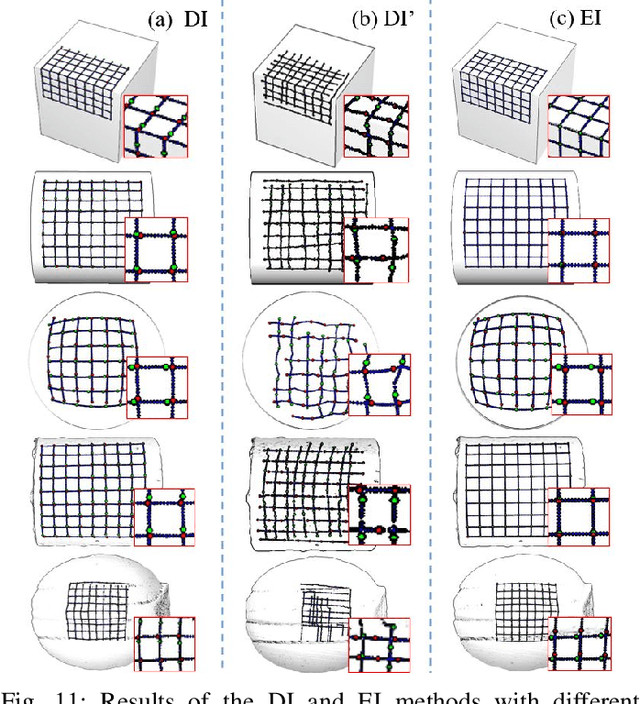

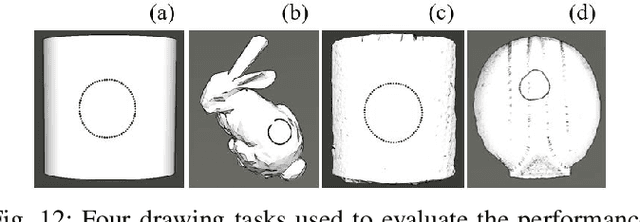

Abstract: This paper develops a flexible and robust robotic system for autonomous drawing on 3D surfaces. The system takes 2D drawing strokes and a 3D target surface (mesh or point clouds) as input. It maps the 2D strokes onto the 3D surface and generates a robot motion to draw the mapped strokes using visual recognition, grasp pose reasoning, and motion planning. The system is flexible compared to conventional robotic drawing systems as we do not fix drawing tools to the end of a robot arm. Instead, a robot selects drawing tools using a vision system and holds drawing tools for painting using its hand. Meanwhile, with the flexibility, the system has high robustness thanks to the following crafts: First, a high-quality mapping method is developed to minimize deformation in the strokes. Second, visual detection is used to re-estimate the drawing tool's pose before executing each drawing motion. Third, force control is employed to avoid noisy visual detection and calibration, and ensure a firm touch between the pen tip and a target surface. Fourth, error detection and recovery are implemented to deal with unexpected problems. The planning and executions are performed in a closed-loop manner until the strokes are successfully drawn. We evaluate the system and analyze the necessity of the various crafts using different real-world tasks. The results show that the proposed system is flexible and robust to generate a robot motion from picking and placing the pens to successfully drawing 3D strokes on given surfaces.

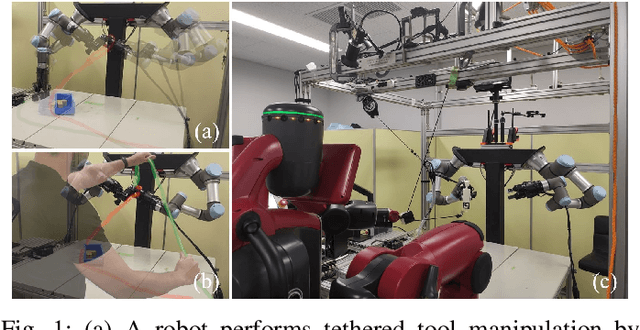

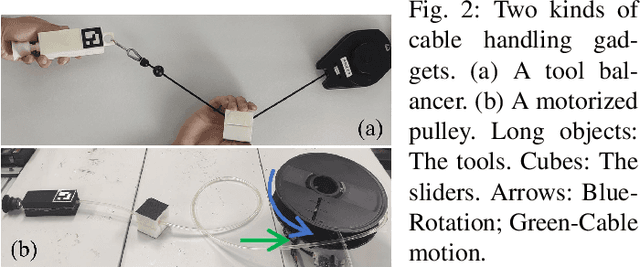

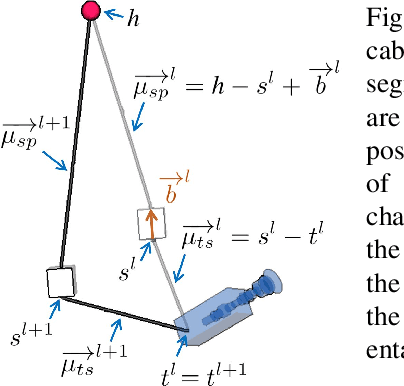

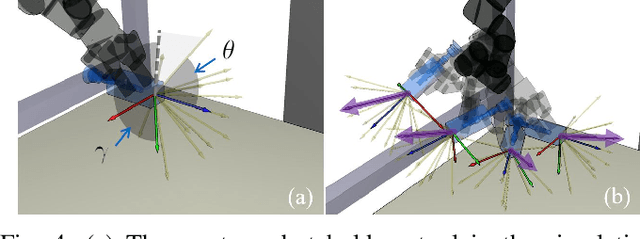

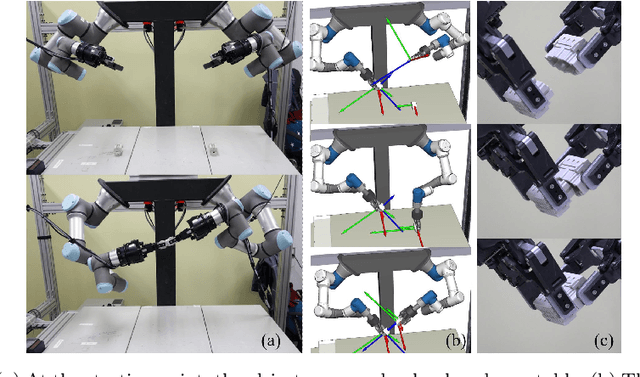

Four-Arm Collaboration: Two Dual-Arm Robots Work Together to Maneuver Tethered Tools

Sep 29, 2020

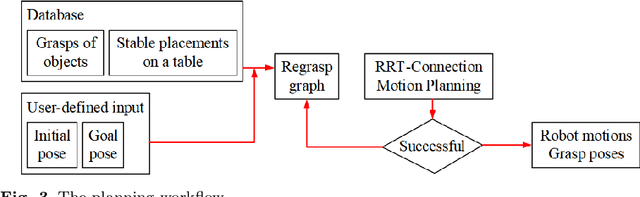

Abstract: In this paper, we present a planner for a master dual-arm robot to manipulate tethered tools with an assistant dual-arm robot's help. The assistant robot provides assistance to the master robot by manipulating the tool cable and avoiding collisions. The provided assistance allows the master robot to perform tool placements on the robot workspace table to regrasp the tool, which would typically fail since the tool cable tension may change the tool positions. It also allows the master robot to perform tool handovers, which would normally cause entanglements or collisions with the cable and the environment without the assistance. Simulations and real-world experiments are performed to validate the proposed planner.

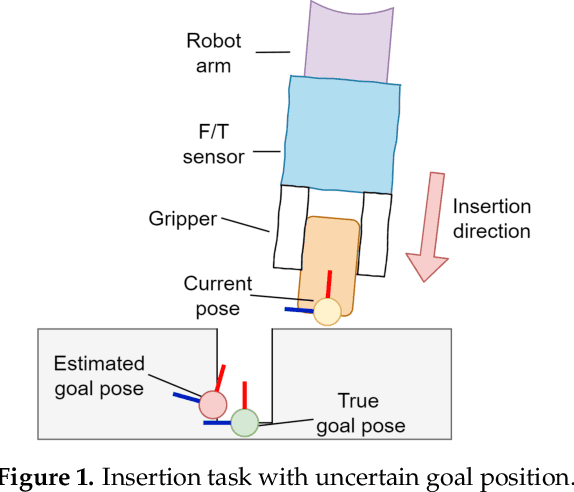

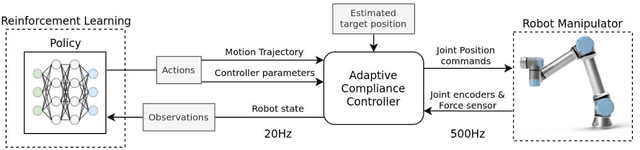

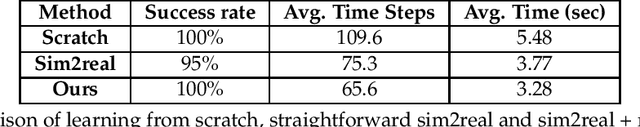

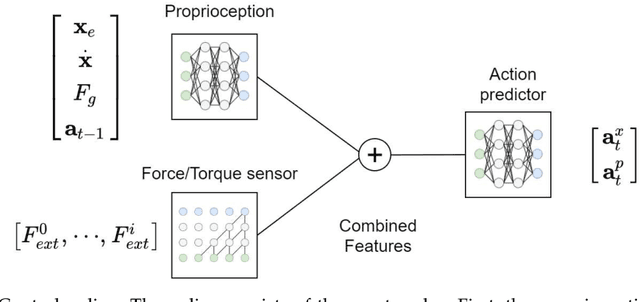

Variable Compliance Control for Robotic Peg-in-Hole Assembly: A Deep Reinforcement Learning Approach

Sep 25, 2020

Abstract: Industrial robot manipulators are playing a more significant role in modern manufacturing industries. Though peg-in-hole assembly is a common industrial task which has been extensively researched, safely solving complex high-precision assembly in an unstructured environment remains an open problem. Reinforcement Learning (RL) methods have been proven successful in solving manipulation tasks autonomously. However, RL is still not widely adopted on real robotic systems because working with real hardware entails additional challenges, especially when using position-controlled manipulators. The main contribution of this work is a learning-based method to solve peg-in-hole tasks with position uncertainty of the hole. We propose the use of an off-policy model-free reinforcement learning method and bootstrap the training speed by using several transfer learning techniques (sim2real) and domain randomization. Our proposed learning framework for position-controlled robots was extensively evaluated on contact-rich insertion tasks in a variety of environments.

A Mechanical Screwing Tool for 2-Finger Parallel Grippers -- Design, Optimization, and Manipulation Policies

Jun 18, 2020

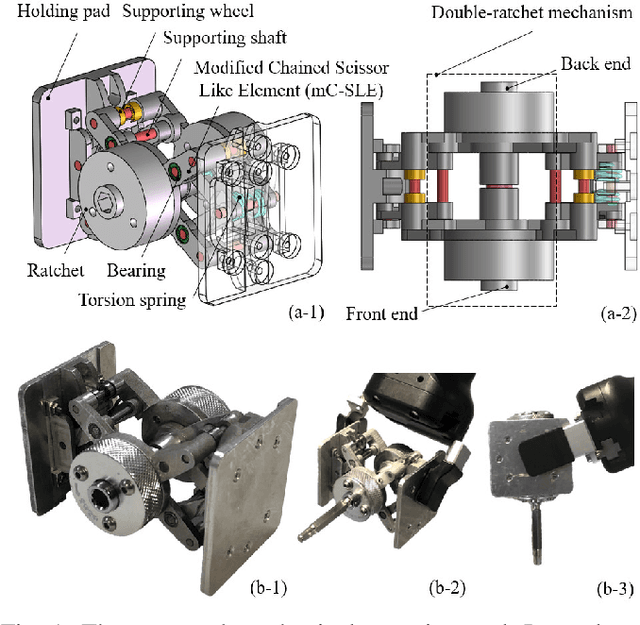

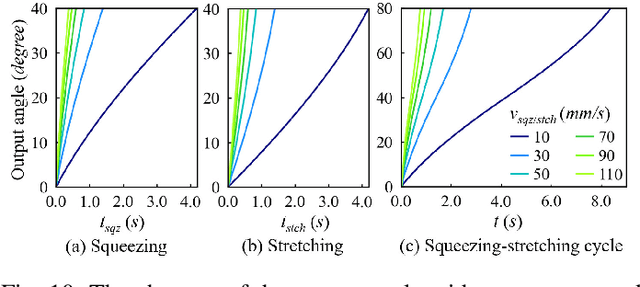

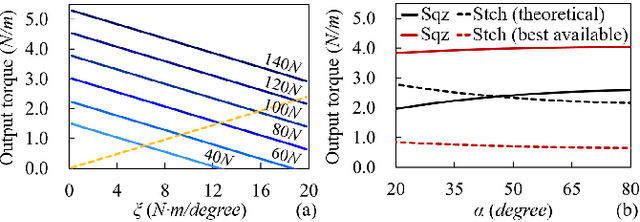

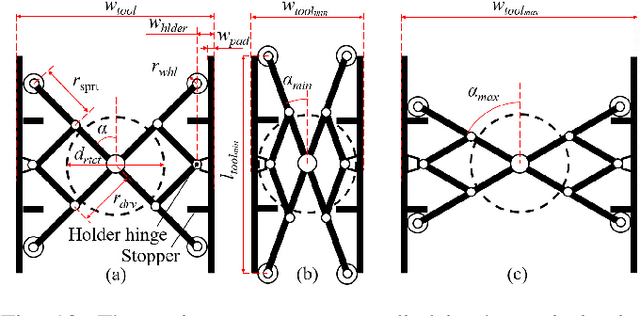

Abstract: This paper develops a mechanical tool as well as its manipulation policies for 2-finger parallel robotic grippers. It primarily focuses on a mechanism that converts the gripping motion of 2-finger parallel grippers into a continuous rotation to realize tasks like fastening screws. The essential structure of the tool comprises a Scissor-Like Element (SLE) mechanism and a double-ratchet mechanism. They together convert repeated linear motion into continuous rotating motion. At the joints of the SLE mechanism, elastic elements are attached to provide resisting force for holding the tool as well as for producing torque output when a gripper releases the tool. The tool is entirely mechanical, allowing robots to use the tool without any peripherals and power supply. The paper presents the details of the tool design, optimizes its dimensions and effective stroke lengths, and studies the contacts and forces to achieve stable grasping and screwing. Besides the design, the paper develops manipulation policies for the tool. The policies include visual recognition, picking-up and manipulation, and exchanging tooltips. The developed tool produces clockwise rotation at the front end and counter-clockwise rotation at the back end. Various tooltips can be installed at both ends. Robots may employ the developed manipulation policies to exchange the tooltips and rotating directions following the needs of specific fastening or loosening tasks. Robots can also reorient the tool using pick-and-place or handover, and move the tool to work poses using the policies. The designed tool, together with the developed manipulation policies, is analyzed and verified in several real-world applications. The tool is small, cordless, convenient, and has good robustness and adaptability.

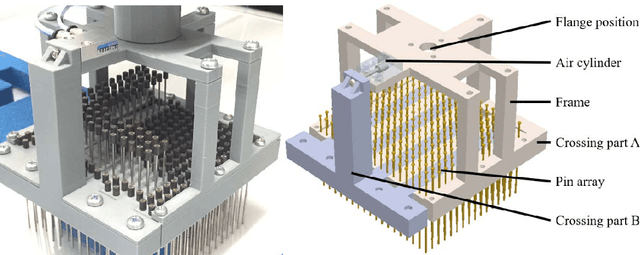

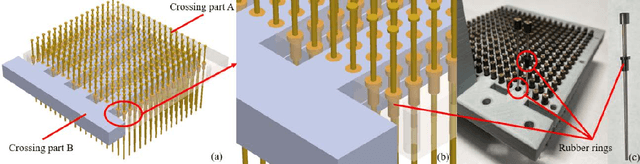

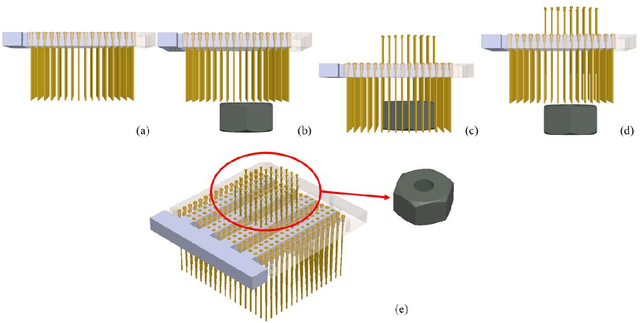

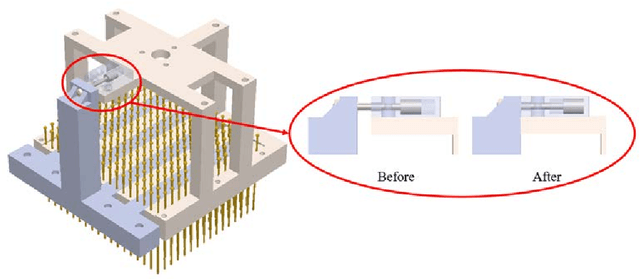

Development of a Shape-memorable Adaptive Pin Array Fixture

May 20, 2020

Abstract: This paper proposes an adaptive pin-array fixture. The key idea of this research is to use the shape-memorable mechanism of a pin array to fix multiple differently shaped parts with a common pin configuration. The clamping area consists of a matrix of passively slidable pins that conform themselves to the contour of the target object. Vertical motion of the pins enables the fixture to encase the profile of the object. The shape-memorable mechanism is realized by the combination of the rubber bush and fixing mechanism of a pin. Several physical peg-in-hole tasks are conducted to verify the feasibility of the fixture.

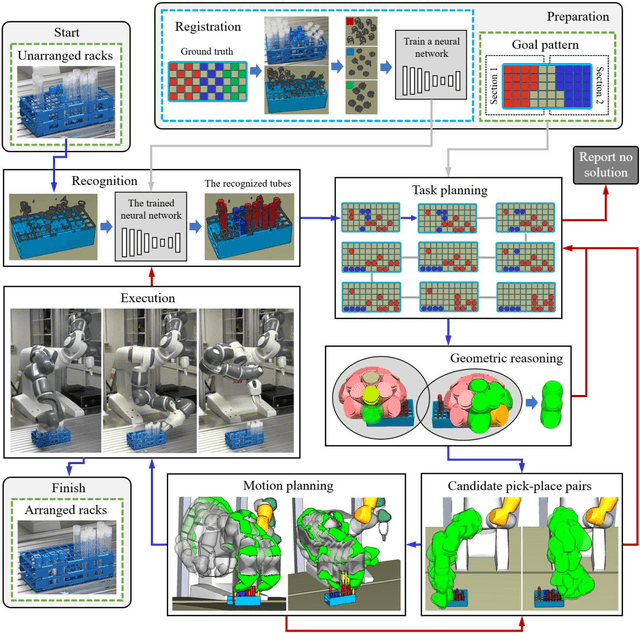

Arranging Test Tubes in Racks Using Combined Task and Motion Planning

May 07, 2020

Abstract: The paper develops a robotic manipulation system to address the pressing need for handling a large number of test tubes in clinical examination and to replace or reduce human labor. It presents the technical details of the system, which separates and arranges test tubes in racks with the help of 3D vision and artificial intelligence (AI) reasoning/planning. The developed system only requires a person to put a rack with mixed and non-arranged tubes in front of a robot. The robot autonomously performs recognition, reasoning, planning, manipulation, etc., and returns a rack with separated and arranged tubes. The system is simple to use and requires no expert knowledge in robotics. We expect such a system to play an important role in helping manage public health and hope similar systems could be extended to other clinical manipulation like handling mixers and pipettes in the future.

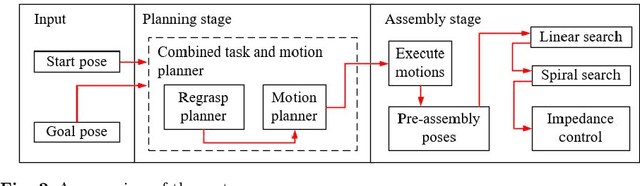

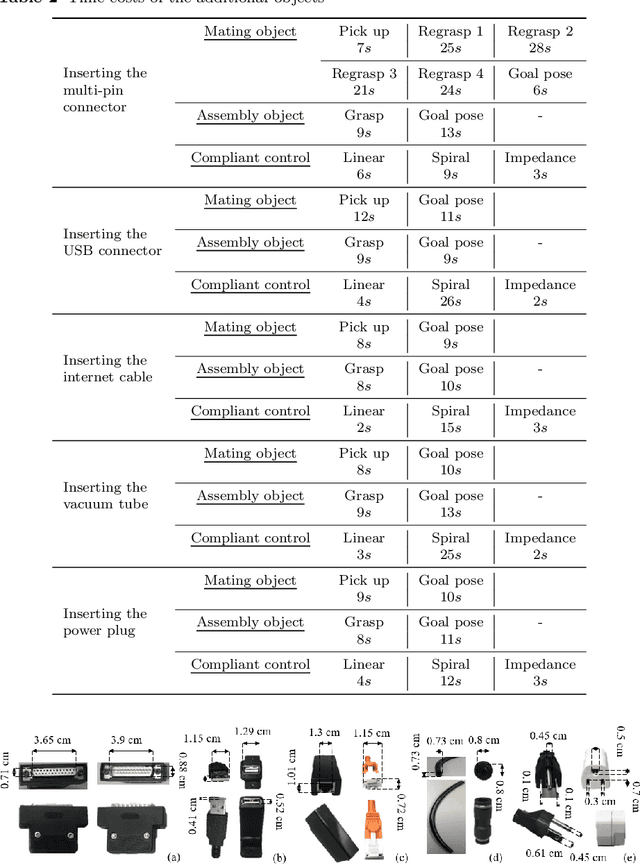

Integrating Combined Task and Motion Planning with Compliant Control

Mar 26, 2020

Abstract: Planning a motion for inserting pegs remains an open problem. The difficulty lies in both the inevitable errors in the grasps of a robotic hand and absolute precision problems in robot joint motors. This paper proposes an integral method to solve the problem. The method uses combined task and motion planning to plan the grasps and motion for a dual-arm robot to pick up the objects and move them to assembly poses. Then, it controls the dual-arm robot using a compliant strategy (a combination of linear search, spiral search, and impedance control) to finish up the insertion. The method is implemented on a dual-arm Universal Robots 3 robot. Six objects, including a connector with fifteen peg-in-hole pairs for detailed analysis and five other objects with different contours of pegs and holes for additional validation, were tested by the robot. Experimental results show reasonable force-torque signal changes and end-effector position changes. The proposed method exhibits high robustness and high fidelity in successfully conducting planned peg-in-hole tasks.
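The spiral search mentioned in the abstract can be illustrated with a minimal sketch. The abstract does not give the search parameters or the spiral shape, so the Archimedean spiral, the function name `spiral_waypoints`, and the `pitch`, `max_radius`, and `step` arguments below are all assumptions made for illustration. The sketch only generates lateral offsets around the nominal hole position; on a real robot each offset would be visited while pressing down under compliant control until the peg drops into the hole.

```python
import math


def spiral_waypoints(pitch, max_radius, step):
    """Return (x, y) offsets along an Archimedean spiral.

    Hypothetical helper: the spiral is r = pitch * theta / (2 * pi), so the
    radius grows by `pitch` per turn. Points are spaced roughly `step` apart
    along the curve and the search stops once the radius exceeds `max_radius`.
    """
    points = [(0.0, 0.0)]  # start at the nominal hole position
    theta = 0.0
    while True:
        r = pitch * theta / (2 * math.pi)
        # Arc length is about r * d_theta, so pick d_theta = step / r.
        # Near the center r is tiny, so clamp the divisor to avoid huge jumps.
        theta += step / max(r, step)
        r = pitch * theta / (2 * math.pi)
        if r > max_radius:
            break
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points


if __name__ == "__main__":
    # Assumed values in meters: 1 mm pitch, 5 mm search radius, 0.5 mm spacing.
    for x, y in spiral_waypoints(0.001, 0.005, 0.0005)[:5]:
        print(f"{x:+.5f} {y:+.5f}")
```

A pitch smaller than the hole clearance keeps neighboring turns close enough that the peg tip cannot pass over the hole without crossing it; that choice is a general property of spiral searches rather than something stated in the abstract.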

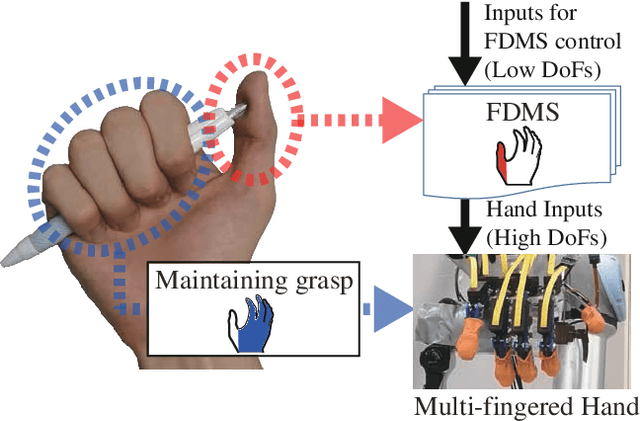

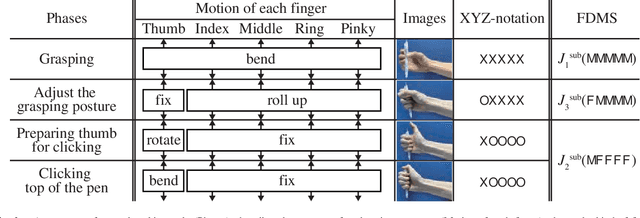

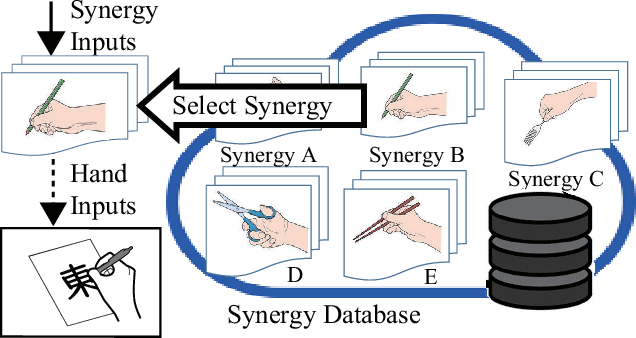

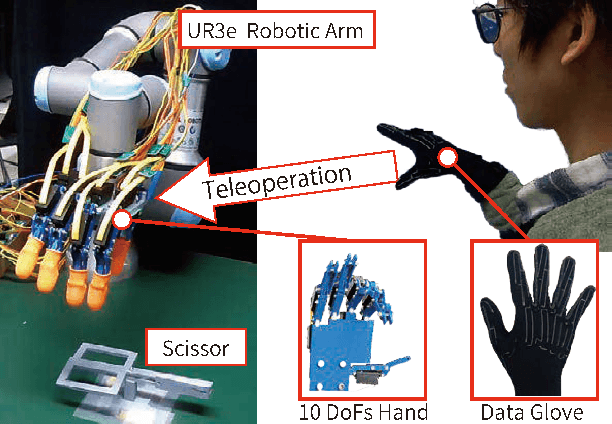

Functionally Divided Manipulation Synergy for Controlling Multi-fingered Hands

Mar 26, 2020

Abstract: Synergy supplies a practical approach for expressing various postures of a multi-fingered hand. However, a conventional synergy defined for reproducing grasping postures cannot perform general-purpose tasks expected for a multi-fingered hand. Locking the position of particular fingers is essential for a multi-fingered hand to manipulate an object. When using conventional synergy-based control to manipulate an object, which requires locking some fingers, the coordination of joints is heavily restricted, decreasing the dexterity of the hand. We propose a functionally divided manipulation synergy (FDMS) method, which provides a synergy-based control to achieve both dimensionality reduction and in-hand manipulation. In FDMS, first, we define the function of each finger of the hand as either "manipulation" or "fixed." Then, we apply synergy control only to the fingers having the manipulation function, so that dexterous manipulations can be realized with few control inputs. The effectiveness of our proposed approach is experimentally verified.
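The idea of applying synergy control only to the "manipulation" fingers can be sketched as follows. The abstract does not say how the synergies are computed, so this sketch assumes a PCA basis obtained by SVD over recorded postures, which is a common choice and not necessarily the authors' method. The function names `fit_synergies` and `fdms_command`, the joint indexing, and the random example data are hypothetical.

```python
import numpy as np


def fit_synergies(postures, n_synergies):
    """Fit a PCA synergy basis (assumed technique) to joint postures.

    postures: array of shape (n_samples, n_manip_joints).
    Returns the mean posture and the first `n_synergies` principal directions.
    """
    mean = postures.mean(axis=0)
    _, _, vt = np.linalg.svd(postures - mean, full_matrices=False)
    return mean, vt[:n_synergies]


def fdms_command(inputs, mean, basis, manip_idx, locked_posture):
    """Build a full joint command from a few synergy inputs.

    Joints of "fixed" fingers keep their values from `locked_posture`;
    only the joints listed in `manip_idx` are driven by the synergies.
    """
    q = np.array(locked_posture, dtype=float)
    q[manip_idx] = mean + np.asarray(inputs) @ basis
    return q


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    n_joints = 12                      # assumed hand with 12 joints
    manip_idx = np.arange(0, 8)        # assumed: first 8 joints manipulate
    data = rng.normal(size=(50, n_joints))
    mean, basis = fit_synergies(data[:, manip_idx], n_synergies=2)
    locked = np.full(n_joints, 0.3)    # posture held by the fixed fingers
    print(fdms_command([0.5, -0.2], mean, basis, manip_idx, locked))
```

Fitting the basis on the manipulation joints alone means the two control inputs never disturb the locked fingers, which is the separation the abstract describes.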

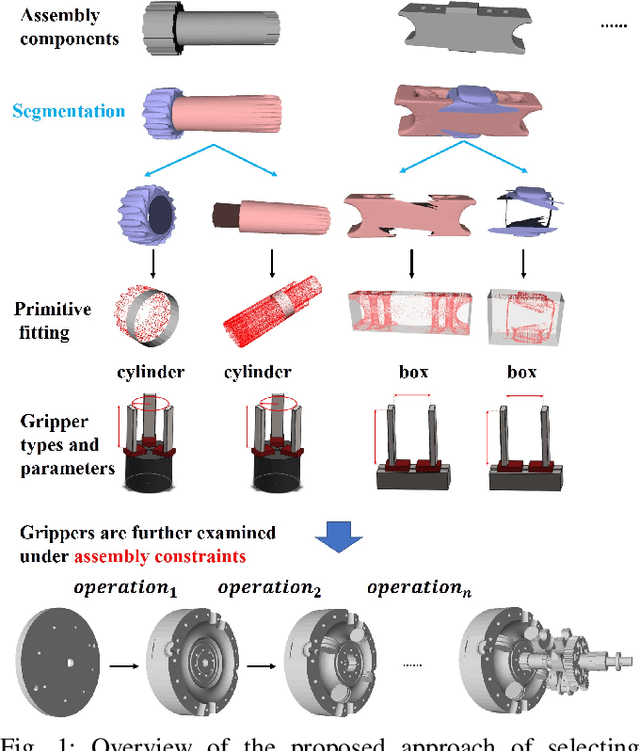

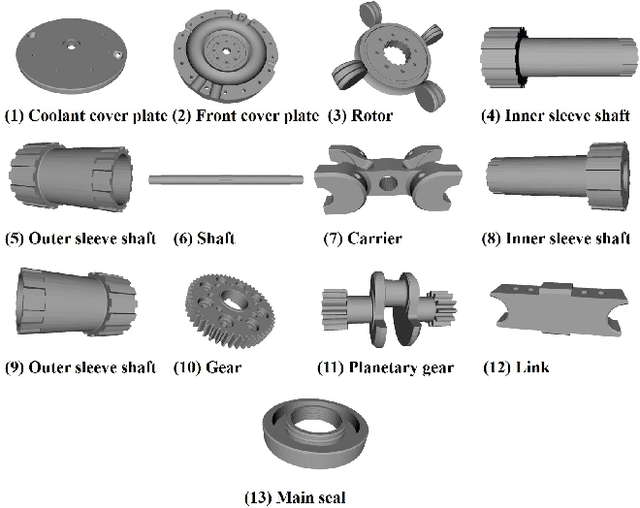

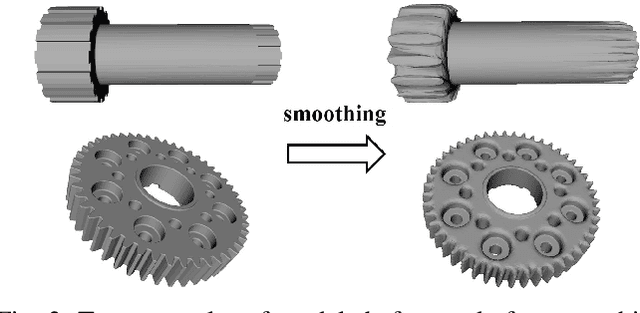

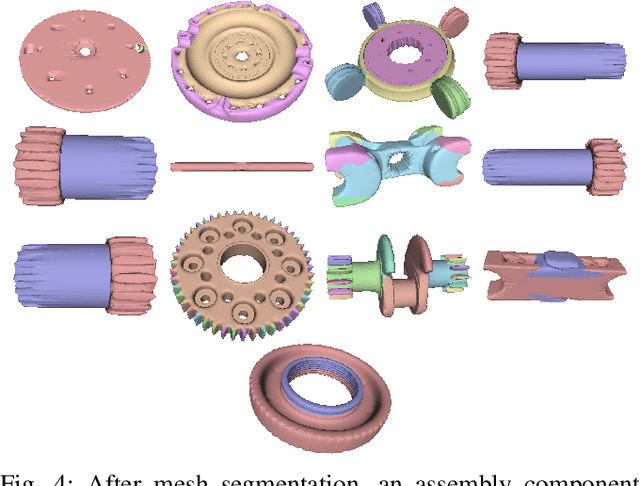

Selecting and Designing Grippers for an Assembly Task in a Structured Approach

Mar 09, 2020

Abstract: In this paper, we present a structured approach to selecting and designing a set of grippers for an assembly task. Compared to current experience-based gripper design methods, our approach accelerates the design process by automatically generating a set of initial design options on gripper type and parameters according to the CAD models of assembly components. We use mesh segmentation techniques to segment the assembly components and fit the segmented parts with shape primitives. According to the predefined correspondence between primitive shape and gripper type, suitable gripper types and parameters can be selected and extracted from the fitted shape primitives. Then, considering the assembly constraints, applicable gripper types and parameters can be filtered from the initial options. Among the applicable gripper configurations, we further minimize the required number of grippers for performing the assembly task by exploring grippers that are able to handle multiple assembly components during the assembly. Finally, the feasibility of the designed grippers is experimentally verified by assembling a part of an industrial product.

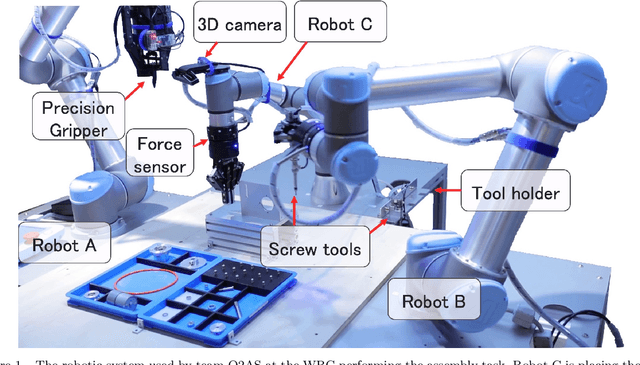

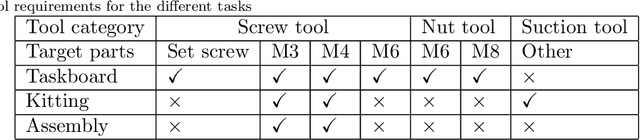

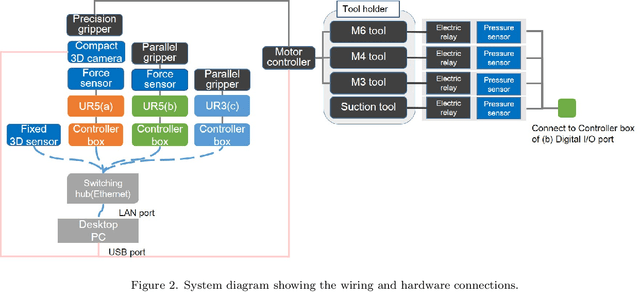

Team O2AS at the World Robot Summit 2018: An Approach to Robotic Kitting and Assembly Tasks using General Purpose Grippers and Tools

Mar 05, 2020

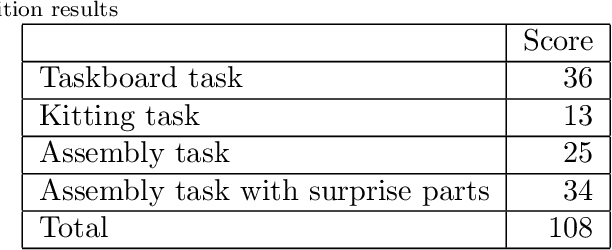

Abstract: We propose a versatile robotic system for kitting and assembly tasks which uses no jigs or commercial tool changers. Instead of specialized end effectors, it uses its two-finger grippers to grasp and hold tools to perform subtasks such as screwing and suctioning. A third gripper is used as a precision picking and centering tool, and uses in-built passive compliance to compensate for small position errors and uncertainty. A novel grasp point detection for bin picking is described for the kitting task, using a single depth map. Using the proposed system we competed in the Assembly Challenge of the Industrial Robotics Category of the World Robot Challenge at the World Robot Summit 2018, obtaining 4th place and the SICE award for lean design and versatile tool use. We show the effectiveness of our approach through experiments performed during the competition.
