Joshua Roe

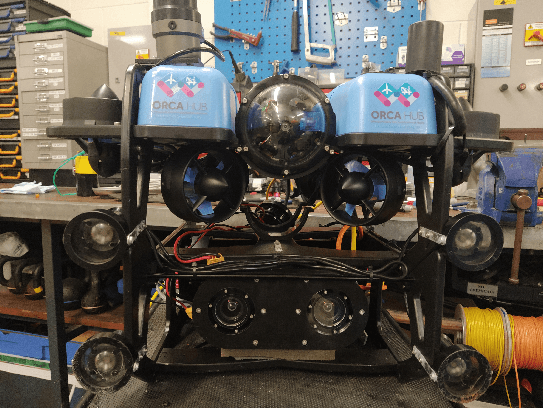

From market-ready ROVs to low-cost AUVs

Aug 12, 2021

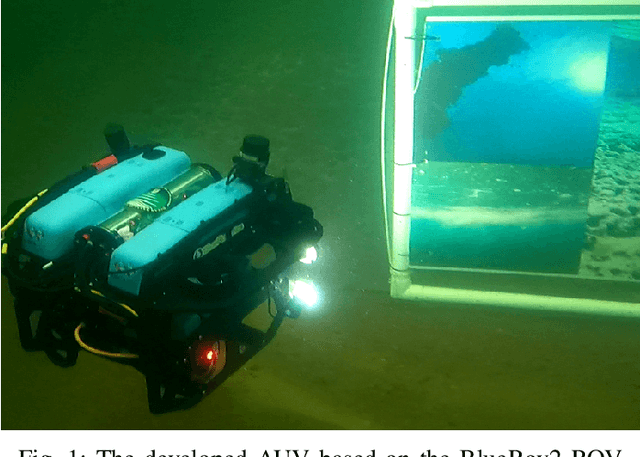

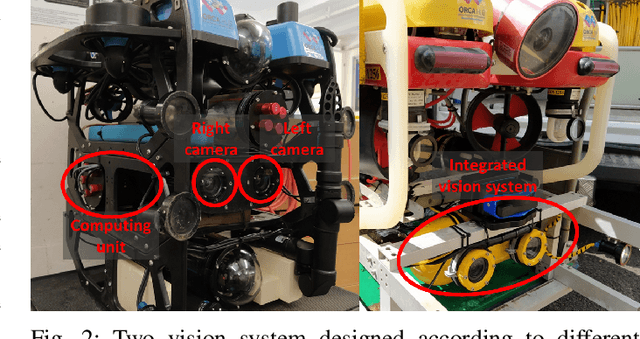

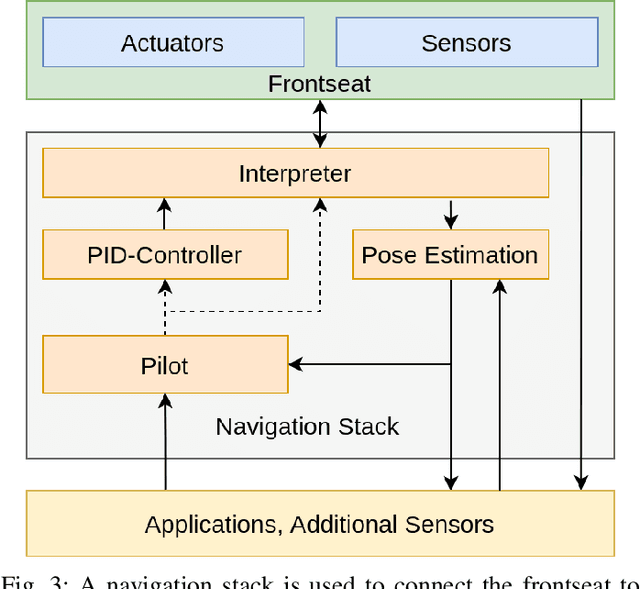

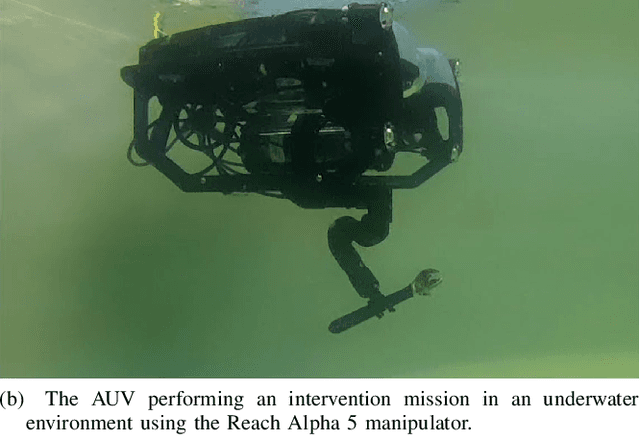

Abstract: Autonomous Underwater Vehicles (AUVs) are becoming increasingly important for different types of industrial applications. The generally high cost of AUVs restricts access to them and, in turn, advances in research and technological development. However, recent advances have led to lower-cost, commercially available Remotely Operated Vehicles (ROVs), which present a platform that can be enhanced to enable a high degree of autonomy, similar to that of a high-end AUV. In this article, we present how a low-cost commercial-off-the-shelf ROV can be used as a foundation for developing versatile and affordable AUVs. We introduce the hardware modifications required to obtain a system capable of autonomous operations, as well as the necessary software modules. Additionally, we present a set of use cases exhibiting the versatility of the developed platform for intervention and mapping tasks.

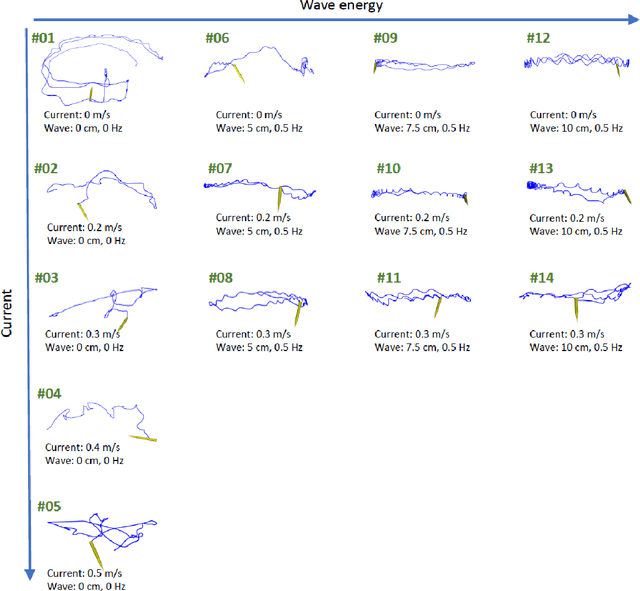

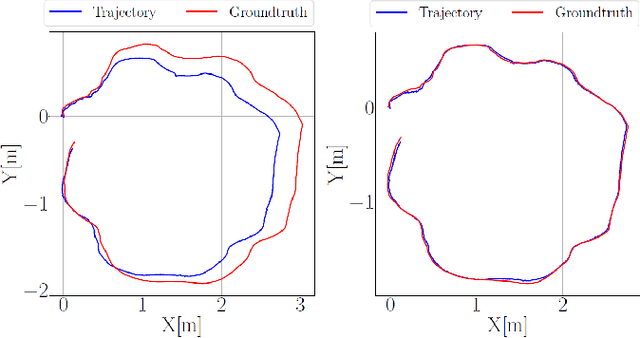

Underwater inspection and intervention dataset

Jul 28, 2021

Abstract: This paper presents a novel dataset for the development of visual navigation and simultaneous localisation and mapping (SLAM) algorithms as well as for underwater intervention tasks. It differs from existing datasets in that it contains ground truth for the vehicle's position, captured by an underwater motion tracking system. The dataset contains distortion-free and rectified stereo images along with the calibration parameters of the stereo camera setup. Furthermore, the experiments were performed and recorded in a controlled environment where currents and waves could be generated, allowing the dataset to cover a wide range of conditions, from calm water to waves and currents of significant strength.

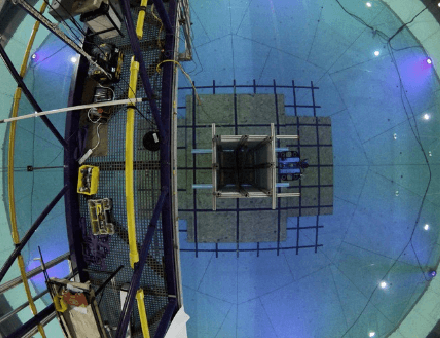

Symbiotic System Design for Safe and Resilient Autonomous Robotics in Offshore Wind Farms

Jan 23, 2021

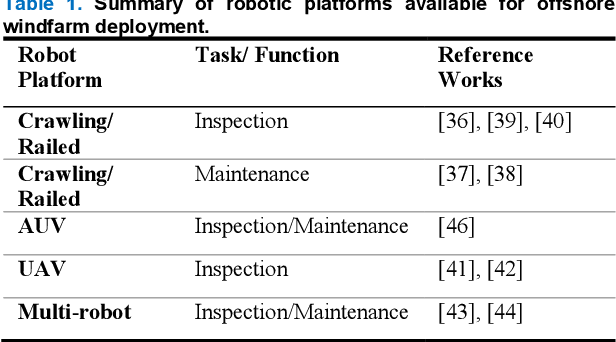

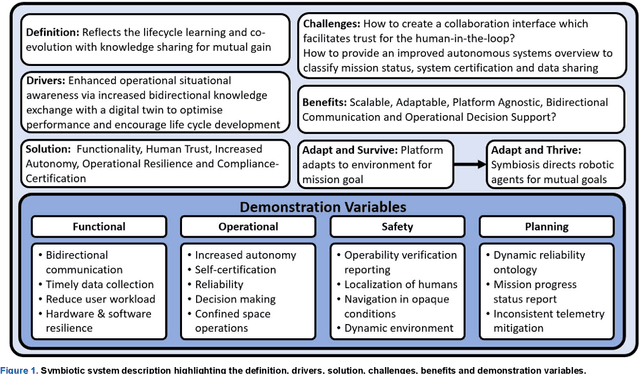

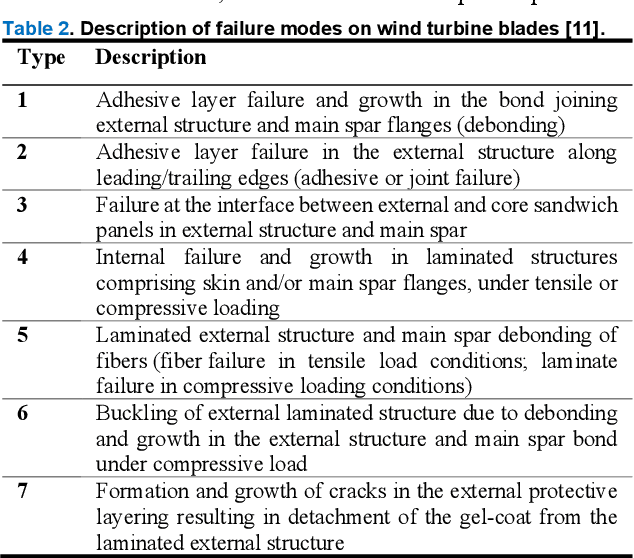

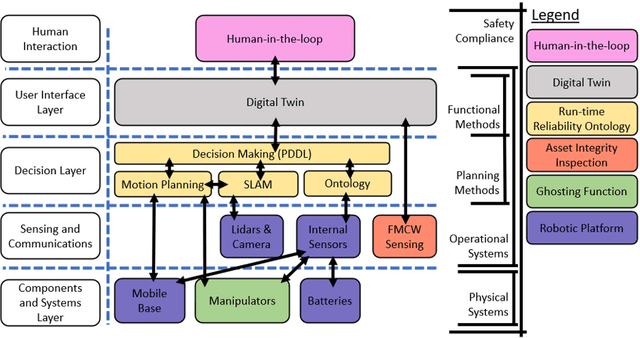

Abstract: To reduce Operation and Maintenance (O&M) costs on offshore wind farms, wherein 80% of the O&M cost relates to deploying personnel, the offshore wind sector looks to robotics and Artificial Intelligence (AI) for solutions. Barriers to Beyond Visual Line of Sight (BVLOS) robotics include operational safety compliance and resilience, inhibiting the commercialization of autonomous services offshore. To address safety and resilience challenges, we propose a symbiotic system, reflecting the lifecycle learning and co-evolution with knowledge sharing for mutual gain of robotic platforms and remote human operators. Our methodology enables the run-time verification of safety, reliability and resilience during autonomous missions. We synchronize digital models of the robot, environment and infrastructure, and integrate front-end analytics and bidirectional communication for autonomous adaptive mission planning and situation reporting to a remote operator. A reliability ontology for the deployed robot, based on our holistic hierarchical-relational model, supports computationally efficient platform data analysis. We analyze the mission status and diagnostics of critical sub-systems within the robot to provide automatic updates to our run-time reliability ontology, enabling faults to be translated into failure modes for decision making during the mission. We demonstrate an asset inspection mission within a confined space and employ millimeter-wave sensing to enhance situational awareness by detecting the presence of obscured personnel, thereby mitigating risk. Our results demonstrate that a symbiotic system provides an enhanced resilience capability to BVLOS missions. A symbiotic system addresses the operational challenges and reprioritization of autonomous mission objectives. This advances the technology required to achieve fully trustworthy autonomous systems.
