Jinha Kim

Swish-T : Enhancing Swish Activation with Tanh Bias for Improved Neural Network Performance

Jul 02, 2024

Abstract:We propose the Swish-T family, an enhancement of the existing non-monotonic activation function Swish. Swish-T is defined by adding a Tanh bias to the original Swish function. This modification creates a family of Swish-T variants, each designed to excel in different tasks, showcasing specific advantages depending on the application context. The Tanh bias allows for broader acceptance of negative values during initial training stages, offering a smoother non-monotonic curve than the original Swish. We ultimately propose the Swish-T$_{\textbf{C}}$ function, while Swish-T and Swish-T$_{\textbf{B}}$, byproducts of Swish-T$_{\textbf{C}}$, also demonstrate satisfactory performance. Furthermore, our ablation study shows that using Swish-T$_{\textbf{C}}$ as a non-parametric function can still achieve high performance. The superiority of the Swish-T family has been empirically demonstrated across various models and benchmark datasets, including MNIST, Fashion MNIST, SVHN, CIFAR-10, and CIFAR-100. The code is publicly available at "https://github.com/ictseoyoungmin/Swish-T-pytorch".

Can Contrastive Learning Refine Embeddings

Apr 11, 2024

Abstract:Recent advancements in contrastive learning have revolutionized self-supervised representation learning and achieved state-of-the-art performance on benchmark tasks. While most existing methods focus on applying contrastive learning to input data modalities such as images, natural language sentences, or networks, they overlook the potential of utilizing outputs from previously trained encoders. In this paper, we introduce SIMSKIP, a novel contrastive learning framework that specifically refines input embeddings for downstream tasks. Unlike traditional unsupervised learning approaches, SIMSKIP takes advantage of the output embeddings of encoder models as its input. Through theoretical analysis, we provide evidence that applying SIMSKIP does not result in larger upper bounds on downstream task errors than those of the original embeddings, which serve as SIMSKIP's input. Experimental results on various open datasets demonstrate that the embeddings produced by SIMSKIP improve performance on downstream tasks.

Reversed Image Signal Processing and RAW Reconstruction. AIM 2022 Challenge Report

Oct 20, 2022

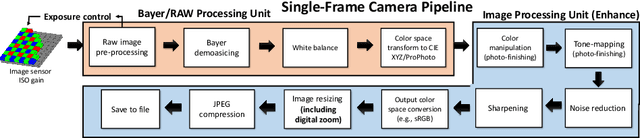

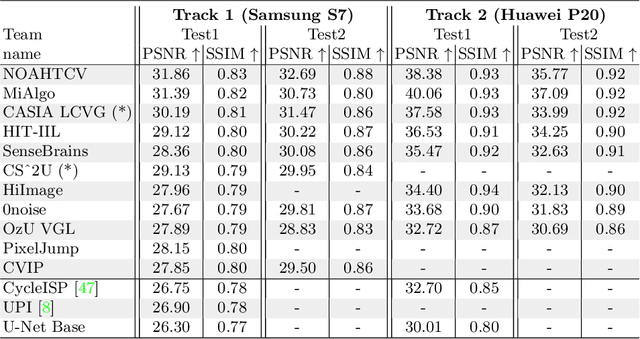

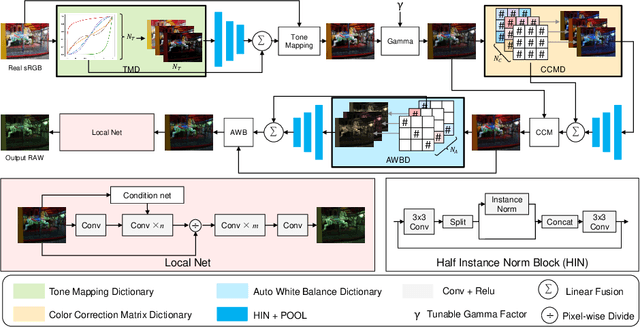

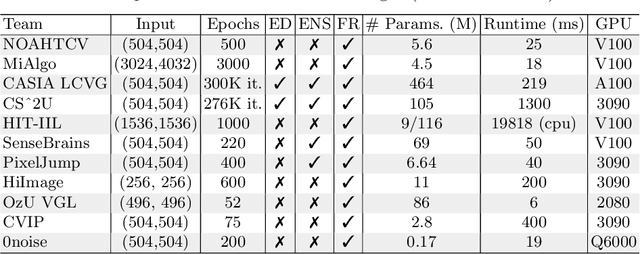

Abstract:Cameras capture sensor RAW images and transform them into pleasant RGB images, suitable for the human eyes, using their integrated Image Signal Processor (ISP). Numerous low-level vision tasks operate in the RAW domain (e.g. image denoising, white balance) due to its linear relationship with the scene irradiance, wide-range of information at 12bits, and sensor designs. Despite this, RAW image datasets are scarce and more expensive to collect than the already large and public RGB datasets. This paper introduces the AIM 2022 Challenge on Reversed Image Signal Processing and RAW Reconstruction. We aim to recover raw sensor images from the corresponding RGBs without metadata and, by doing this, "reverse" the ISP transformation. The proposed methods and benchmark establish the state-of-the-art for this low-level vision inverse problem, and generating realistic raw sensor readings can potentially benefit other tasks such as denoising and super-resolution.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge