Ilker Hacihaliloglu

XTinyU-Net: Training-Free U-Net Scaling via Initialization-Time Sensitivity

May 10, 2026

Abstract: While U-Net architectures remain the gold standard for medical image segmentation, their deployment in resource-constrained environments demands aggressive model compression. However, finding an optimally efficient configuration is computationally prohibitive, typically requiring exhaustive train-and-evaluate cycles to find the smallest model that maintains peak performance. In this paper, we introduce a training-free selection framework to automatically identify ultralightweight, dataset-specific U-Net configurations directly at initialization. We observe that systematically scaling down U-Net channel width induces a sharp transition from a stable performance plateau to representational capacity collapse. To pinpoint this boundary without training, we propose a Jacobian-based sensitivity metric that scores discrete, width-capped U-Net variants using a small set of unlabeled images. By analyzing the total variation of this sensitivity curve, we isolate the smallest stable configuration, which we denote as XTinyU-Net. Evaluated across six diverse medical datasets within the nnU-Net framework, XTinyU-Net achieves segmentation accuracy comparable to the heavy nnU-Net baseline with 400x-1600x fewer parameters, and outperforms contemporary lightweight architectures while utilizing 5x-72x fewer parameters. Code is publicly accessible on https://github.com/alvinkimbowa/nntinyunet.git.
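As a rough illustration of selecting the smallest stable configuration from a sensitivity curve, the sketch below accumulates the total variation of per-width scores from the widest variant downward and keeps the narrowest width reached before a chosen fraction of that variation has accumulated. This is not the authors' procedure: the function name `smallest_stable_width`, the threshold `tol`, and the example scores are all assumptions made for illustration, and computing the Jacobian-based scores themselves is out of scope here.

```python
import numpy as np

def smallest_stable_width(widths, scores, tol=0.1):
    """Pick the narrowest width still on the plateau of a sensitivity curve.

    widths: candidate channel-width caps (any order)
    scores: one sensitivity score per width (hypothetical inputs)
    tol:    hypothetical fraction of the curve's total variation that may
            accumulate before the curve is considered to have left the plateau
    """
    order = np.argsort(widths)[::-1]               # widest variant first
    w = np.asarray(widths)[order]
    s = np.asarray(scores, dtype=float)[order]

    steps = np.abs(np.diff(s))                     # |s[i+1] - s[i]|
    total = steps.sum()                            # total variation of the curve
    if total == 0:
        return int(w[-1])                          # flat curve: every width is stable

    # Normalized cumulative variation, starting at 0 for the widest variant.
    cum = np.concatenate([[0.0], np.cumsum(steps)]) / total
    stable = np.flatnonzero(cum <= tol)            # a prefix, since cum is non-decreasing
    return int(w[stable[-1]])

# Made-up scores: flat for widths 64..8, then rising sharply.
widths = [64, 32, 16, 8, 4, 2]
scores = [1.00, 1.01, 0.99, 1.02, 2.50, 6.00]
print(smallest_stable_width(widths, scores))
```

With these made-up scores, almost all of the variation sits in the last two steps, so the rule stops at the last width before the curve starts to climb.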

MonoUNet: A Robust Tiny Neural Network for Automated Knee Cartilage Segmentation on Point-of-Care Ultrasound Devices

Apr 09, 2026

Abstract: Objective: To develop a robust and compact deep learning model for automated knee cartilage segmentation on point-of-care ultrasound (POCUS) devices. Methods: We propose MonoUNet, an ultra-compact U-Net consisting of (i) an aggressively reduced backbone with an asymmetric decoder, (ii) a trainable monogenic block that extracts multi-scale local phase features, and (iii) a gated feature injection mechanism that integrates these features into the encoder stages to reduce sensitivity to variations in ultrasound image appearance and improve robustness across devices. MonoUNet was evaluated on a multi-site, multi-device knee cartilage ultrasound dataset acquired using cart-based, portable, and handheld POCUS devices. Results: Overall, MonoUNet outperformed existing lightweight segmentation models, with average Dice scores ranging from 92.62% to 94.82% and mean average surface distance (MASD) values between 0.133 mm and 0.254 mm. MonoUNet reduces the number of parameters by 10x-700x and computational cost by 14x-2000x relative to existing lightweight models. MonoUNet cartilage outcomes showed excellent reliability and agreement with the manual outcomes: intraclass correlation coefficient (ICC$_{2,k}$) = 0.96 and bias = 2.00% (0.047 mm) for average thickness, and ICC$_{2,k}$ = 0.99 and bias = 0.80% (0.328 a.u.) for echo intensity. Conclusion: Incorporating trainable local phase features improves the robustness of highly compact neural networks for knee cartilage segmentation across varying acquisition settings and could support scalable ultrasound-based assessment and monitoring of knee osteoarthritis using POCUS devices. The code is publicly available at https://github.com/alvinkimbowa/monounet.
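For readers unfamiliar with the Dice score reported above, a minimal sketch of the standard definition for binary masks follows: twice the overlap divided by the sum of the two mask sizes. The function name `dice_score` and the handling of two empty masks are assumptions made here, not details taken from the paper or its code.

```python
import numpy as np

def dice_score(pred, target):
    """Dice coefficient between two binary masks: 2|A ∩ B| / (|A| + |B|)."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)

    intersection = np.logical_and(pred, target).sum()
    denom = pred.sum() + target.sum()
    if denom == 0:
        # Assumed convention: two empty masks count as perfect agreement.
        return 1.0
    return 2.0 * intersection / denom

# Toy 2x2 masks: the prediction marks two pixels, the reference marks one of them.
pred = [[1, 1], [0, 0]]
target = [[1, 0], [0, 0]]
print(dice_score(pred, target))  # 2 * 1 / (2 + 1)
```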

When Minor Edits Matter: LLM-Driven Prompt Attack for Medical VLM Robustness in Ultrasound

Mar 22, 2026

Abstract: Ultrasound is widely used in clinical practice due to its portability, cost-effectiveness, safety, and real-time imaging capabilities. However, image acquisition and interpretation remain highly operator dependent, motivating the development of robust AI-assisted analysis methods. Vision-language models (VLMs) have recently demonstrated strong multimodal reasoning capabilities and competitive performance in medical image analysis, including ultrasound. However, emerging evidence highlights significant concerns about their trustworthiness. In particular, adversarial robustness is critical because Med-VLMs operate via natural-language instructions, rendering prompt formulation a realistic and practically exploitable point of vulnerability. Small variations (typos, shorthand, underspecified requests, or ambiguous wording) can meaningfully shift model outputs. We propose a scalable adversarial evaluation framework that leverages a large language model (LLM) to generate clinically plausible adversarial prompt variants via "humanized" rewrites and minimal edits that mimic routine clinical communication. Using ultrasound multiple-choice question answering benchmarks, we systematically assess the vulnerability of SOTA Med-VLMs to these attacks, examine how attacker LLM capacity influences attack success, analyze the relationship between attack success and model confidence, and identify consistent failure patterns across models. Our results highlight realistic robustness gaps that must be addressed for safe clinical translation. Code will be released publicly following the review process.

Representation-Level Adversarial Regularization for Clinically Aligned Multitask Thyroid Ultrasound Assessment

Mar 22, 2026

Abstract: Thyroid ultrasound is the first-line exam for assessing thyroid nodules and determining whether biopsy is warranted. In routine reporting, radiologists produce two coupled outputs: a nodule contour for measurement and a TI-RADS risk category based on sonographic criteria. Yet both contouring style and risk grading vary across readers, creating inconsistent supervision that can degrade standard learning pipelines. In this paper, we address this workflow with a clinically guided multitask framework that jointly predicts the nodule mask and TI-RADS category within a single model. To ground risk prediction in clinically meaningful evidence, we guide the classification embedding using a compact TI-RADS aligned radiomics target during training, while preserving complementary deep features for discriminative performance. However, under annotator variability, naive multitask optimization often fails not because the tasks are unrelated, but because their gradients compete within the shared representation. To make this competition explicit and controllable, we introduce RLAR, a representation-level adversarial gradient regularizer. Rather than performing parameter-level gradient surgery, RLAR uses each task's normalized adversarial direction in latent space as a geometric probe of task sensitivity and penalizes excessive angular alignment between task-specific adversarial directions. On a public TI-RADS dataset, our clinically guided multitask model with RLAR consistently improves risk stratification while maintaining segmentation quality compared to single-task training and conventional multitask baselines. Code and pretrained models will be released.
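One way to picture a penalty on angular alignment between two task-specific directions is a hinge on their cosine similarity, sketched below. This is only an assumed form for illustration: the abstract does not state how RLAR measures or thresholds alignment, and the function name `alignment_penalty`, the `margin` value, and the use of raw latent-space gradients as the directions are all hypothetical.

```python
import numpy as np

def alignment_penalty(g_seg, g_cls, margin=0.5, eps=1e-12):
    """Hinge penalty on the cosine between two normalized direction vectors.

    g_seg, g_cls: per-task gradients with respect to a shared latent vector
                  (hypothetical stand-ins for the adversarial directions)
    margin:       hypothetical cosine value above which alignment is penalized
    """
    g_seg = np.asarray(g_seg, dtype=float)
    g_cls = np.asarray(g_cls, dtype=float)

    # Normalize so that only the angle between the directions matters.
    d_seg = g_seg / (np.linalg.norm(g_seg) + eps)
    d_cls = g_cls / (np.linalg.norm(g_cls) + eps)

    cos = float(np.dot(d_seg, d_cls))
    return max(0.0, cos - margin)      # zero unless alignment exceeds the margin

print(alignment_penalty([1.0, 0.0], [0.0, 1.0]))  # orthogonal directions: no penalty
print(alignment_penalty([1.0, 0.0], [2.0, 0.0]))  # same direction: close to 1 - margin
```

In a training loop, a term of this kind would typically be added to the task losses with a weighting coefficient; that weighting is likewise an assumption and not something the abstract specifies.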

CENet: Context Enhancement Network for Medical Image Segmentation

May 23, 2025

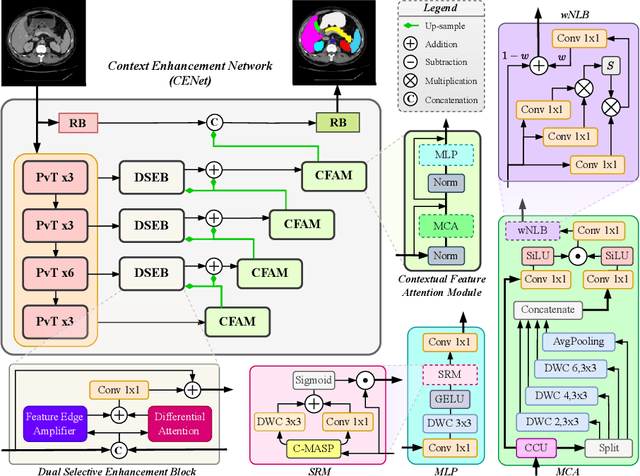

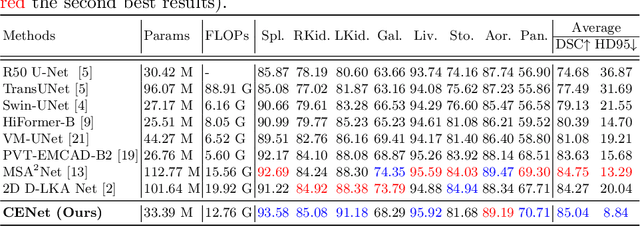

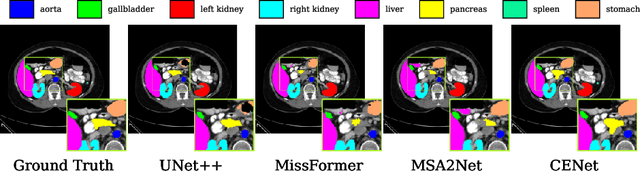

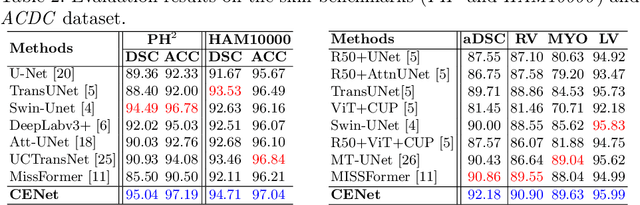

Abstract: Medical image segmentation, particularly in multi-domain scenarios, requires precise preservation of anatomical structures across diverse representations. While deep learning has advanced this field, existing models often struggle with accurate boundary representation, variability in organ morphology, and information loss during downsampling, limiting their accuracy and robustness. To address these challenges, we propose the Context Enhancement Network (CENet), a novel segmentation framework featuring two key innovations. First, the Dual Selective Enhancement Block (DSEB) integrated into skip connections enhances boundary details and improves the detection of smaller organs in a context-aware manner. Second, the Context Feature Attention Module (CFAM) in the decoder employs a multi-scale design to maintain spatial integrity, reduce feature redundancy, and mitigate overly enhanced representations. Extensive evaluations on both radiology and dermoscopic datasets demonstrate that CENet outperforms state-of-the-art (SOTA) methods in multi-organ segmentation and boundary detail preservation, offering a robust and accurate solution for complex medical image analysis tasks. The code is publicly available at https://github.com/xmindflow/cenet.

AllMetrics: A Unified Python Library for Standardized Metric Evaluation and Robust Data Validation in Machine Learning

May 21, 2025

Abstract: Machine learning (ML) models rely heavily on consistent and accurate performance metrics to evaluate and compare their effectiveness. However, existing libraries often suffer from fragmentation, inconsistent implementations, and insufficient data validation protocols, leading to unreliable results. Existing libraries have often been developed independently and without adherence to a unified standard, particularly concerning the specific tasks they aim to support. As a result, each library tends to adopt its own conventions for metric computation, input/output formatting, error handling, and data validation protocols. This lack of standardization leads to both implementation differences (ID) and reporting differences (RD), making it difficult to compare results across frameworks or ensure reliable evaluations. To address these issues, we introduce AllMetrics, an open-source unified Python library designed to standardize metric evaluation across diverse ML tasks, including regression, classification, clustering, segmentation, and image-to-image translation. The library implements class-specific reporting for multi-class tasks through configurable parameters to cover all use cases, while incorporating task-specific parameters to resolve metric computation discrepancies across implementations. We applied the library to datasets from domains such as healthcare, finance, and real estate and compared its outputs with Python, MATLAB, and R components to identify which yield similar results. AllMetrics combines a modular Application Programming Interface (API) with robust input validation mechanisms to ensure reproducibility and reliability in model evaluation. This paper presents the design principles, architectural components, and empirical analyses demonstrating the library's ability to mitigate evaluation errors and to enhance the trustworthiness of ML workflows.

Pathobiological Dictionary Defining Pathomics and Texture Features: Addressing Understandable AI Issues in Personalized Liver Cancer; Dictionary Version LCP1.0

May 20, 2025

Abstract: Artificial intelligence (AI) holds strong potential for medical diagnostics, yet its clinical adoption is limited by a lack of interpretability and generalizability. This study introduces the Pathobiological Dictionary for Liver Cancer (LCP1.0), a practical framework designed to translate complex Pathomics and Radiomics Features (PF and RF) into clinically meaningful insights aligned with existing diagnostic workflows. QuPath and PyRadiomics, standardized according to IBSI guidelines, were used to extract 333 imaging features from hepatocellular carcinoma (HCC) tissue samples, including 240 PF-based cell detection/intensity, 74 RF-based texture, and 19 RF-based first-order features. Expert-defined ROIs from the public dataset excluded artifact-prone areas, and features were aggregated at the case level. Their relevance to the WHO grading system was assessed using multiple classifiers linked with feature selectors. The resulting dictionary was validated by 8 experts in oncology and pathology. In collaboration with 10 domain experts, we developed a Pathobiological dictionary of imaging features such as PFs and RFs. In our study, the Variable Threshold feature selection algorithm combined with the SVM model achieved the highest accuracy (0.80, P-value less than 0.05), selecting 20 key features, primarily clinical and pathomics traits such as Centroid, Cell Nucleus, and Cytoplasmic characteristics. These features, particularly nuclear and cytoplasmic, were strongly associated with tumor grading and prognosis, reflecting atypia indicators like pleomorphism, hyperchromasia, and cellular orientation. The LCP1.0 provides a clinically validated bridge between AI outputs and expert interpretation, enhancing model transparency and usability. Aligning AI-derived features with clinical semantics supports the development of interpretable, trustworthy diagnostic tools for liver cancer pathology.

Impact of Data Patterns on Biotype identification Using Machine Learning

Mar 15, 2025

Abstract: Background: Patient stratification in brain disorders remains a significant challenge, despite advances in machine learning and multimodal neuroimaging. Automated machine learning algorithms have been widely applied for identifying patient subtypes (biotypes), but results have been inconsistent across studies. These inconsistencies are often attributed to algorithmic limitations, yet an overlooked factor may be the statistical properties of the input data. This study investigates the contribution of data patterns to algorithm performance by leveraging synthetic brain morphometry data as an exemplar. Methods: Four widely used algorithms (SuStaIn, HYDRA, SmileGAN, and SurrealGAN) were evaluated using multiple synthetic pseudo-patient datasets designed to include varying numbers and sizes of clusters and degrees of complexity of morphometric changes. Ground truth, representing predefined clusters, allowed for the evaluation of performance accuracy across algorithms and datasets. Results: SuStaIn failed to process datasets with more than 17 variables, highlighting computational inefficiencies. HYDRA was able to perform individual-level classification in multiple datasets, with no clear pattern explaining its failures. SmileGAN and SurrealGAN outperformed other algorithms in identifying variable-based disease patterns, but these patterns could not provide individual-level classification. Conclusions: Dataset characteristics significantly influence algorithm performance, often more than algorithmic design. The findings emphasize the need for rigorous validation using synthetic data before real-world application and highlight the limitations of current clustering approaches in capturing the heterogeneity of brain disorders. These insights extend beyond neuroimaging and have implications for machine learning applications in biomedical research.

Mono2D: A Trainable Monogenic Layer for Robust Knee Cartilage Segmentation on Out-of-Distribution 2D Ultrasound Data

Mar 12, 2025

Abstract: Automated knee cartilage segmentation using point-of-care ultrasound devices and deep-learning networks has the potential to enhance the management of knee osteoarthritis. However, segmentation algorithms often struggle with domain shifts caused by variations in ultrasound devices and acquisition parameters, limiting their generalizability. In this paper, we propose Mono2D, a monogenic layer that extracts multi-scale, contrast- and intensity-invariant local phase features using trainable bandpass quadrature filters. This layer mitigates domain shifts, improving generalization to out-of-distribution domains. Mono2D is integrated before the first layer of a segmentation network, and its parameters are jointly trained alongside the network's parameters. We evaluated Mono2D on a multi-domain 2D ultrasound knee cartilage dataset for single-source domain generalization (SSDG). Our results demonstrate that Mono2D outperforms other SSDG methods in terms of Dice score and mean average surface distance. To further assess its generalizability, we evaluate Mono2D on a multi-site prostate MRI dataset, where it continues to outperform other SSDG methods, highlighting its potential to improve domain generalization in medical imaging. Nevertheless, further evaluation on diverse datasets is still necessary to assess its clinical utility.
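To make the idea of contrast- and intensity-invariant local phase concrete, the sketch below computes a single-scale monogenic local phase with a fixed log-Gabor bandpass filter and the Riesz transform in the frequency domain. It is a textbook-style illustration, not the Mono2D layer: the filters here are fixed rather than trainable, only one scale is used, and the function name `monogenic_local_phase` and the parameters `f0` and `sigma_ratio` are assumptions.

```python
import numpy as np

def monogenic_local_phase(img, f0=0.1, sigma_ratio=0.55):
    """Single-scale local phase from the monogenic signal of a 2D image.

    f0:          assumed center frequency of the log-Gabor filter (cycles/pixel)
    sigma_ratio: assumed bandwidth parameter of the log-Gabor filter
    """
    img = np.asarray(img, dtype=float)
    rows, cols = img.shape

    # Frequency grids (cycles per pixel) and their radius.
    u = np.fft.fftfreq(cols)[None, :]
    v = np.fft.fftfreq(rows)[:, None]
    radius = np.sqrt(u**2 + v**2)
    radius[0, 0] = 1.0                       # avoid log(0) and division by zero at DC

    # Log-Gabor bandpass filter with zero response at DC.
    log_gabor = np.exp(-(np.log(radius / f0) ** 2) / (2 * np.log(sigma_ratio) ** 2))
    log_gabor[0, 0] = 0.0

    F = np.fft.fft2(img)
    even = np.real(np.fft.ifft2(F * log_gabor))                         # bandpassed image
    r1 = np.real(np.fft.ifft2(F * log_gabor * (-1j * u / radius)))      # Riesz component 1
    r2 = np.real(np.fft.ifft2(F * log_gabor * (-1j * v / radius)))      # Riesz component 2

    odd = np.sqrt(r1**2 + r2**2)
    return np.arctan2(odd, even)             # local phase in [0, pi]

rng = np.random.default_rng(0)
image = rng.random((32, 32))
phase = monogenic_local_phase(image)
# Rescaling contrast or shifting intensity should leave the phase essentially unchanged.
print(np.allclose(phase, monogenic_local_phase(3.0 * image + 5.0), atol=1e-6))
```

The invariance follows from the construction: a constant offset only changes the DC term, which the bandpass filter removes, and a positive gain scales the even and odd parts equally, so their ratio is unchanged.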

Influence of High-Performance Image-to-Image Translation Networks on Clinical Visual Assessment and Outcome Prediction: Utilizing Ultrasound to MRI Translation in Prostate Cancer

Jan 30, 2025

Abstract: Purpose: This study examines the core traits of image-to-image translation (I2I) networks, focusing on their effectiveness and adaptability in everyday clinical settings. Methods: We analyzed data from 794 patients diagnosed with prostate cancer (PCa), using ten prominent 2D/3D I2I networks to convert ultrasound (US) images into MRI scans. We also introduced a new analysis of Radiomic features (RF) via the Spearman correlation coefficient to explore whether networks with high performance (SSIM>85%) could detect subtle RFs. Seven invited physicians further examined the synthetic images. As a final evaluation, we investigated the improvements achieved by using the synthetic MRI data with two traditional machine learning methods and one deep learning method. Results: In quantitative assessment, the 2D-Pix2Pix network substantially outperformed the other 7 networks, with an average SSIM~0.855. The RF analysis revealed that 76 out of 186 RFs were identified using the 2D-Pix2Pix algorithm alone, although half of the RFs were lost during the translation process. A detailed qualitative review by 7 medical doctors noted a deficiency in low-level feature recognition in I2I tasks. Furthermore, the study found that synthesized image-based classification outperformed US image-based classification with an average accuracy and AUC~0.93. Conclusion: This study showed that while 2D-Pix2Pix outperformed cutting-edge networks in low-level feature discovery and overall error and similarity metrics, it still requires improvement in low-level feature performance, as highlighted by Group 3. Further, the study found that synthetic image-based classification outperformed original US image-based methods.
