Hongzhi Yu

Clinically Interpretable Sepsis Early Warning via LLM-Guided Simulation of Temporal Physiological Dynamics

Apr 22, 2026

Abstract: Timely and interpretable early warning of sepsis remains a major clinical challenge because physiological deterioration follows complex temporal dynamics. Traditional data-driven models often provide accurate yet opaque predictions, which limits physicians' confidence and clinical applicability. To address this limitation, we propose a Large Language Model (LLM)-guided temporal simulation framework that explicitly models physiological trajectories before disease onset to enable clinically interpretable prediction. The framework consists of a spatiotemporal feature extraction module that captures dynamic dependencies among multivariate vital signs, a Medical Prompt-as-Prefix module that embeds clinical reasoning cues into LLMs, and an agent-based post-processing component that constrains predictions within physiologically plausible ranges. By first simulating the evolution of key physiological indicators and then classifying sepsis onset, our model offers transparent prediction mechanisms that align with clinical judgment. Evaluated on the MIMIC-IV and eICU databases, the proposed method achieves AUC scores of 0.861 to 0.903 across prediction horizons ranging from 24 to 4 hours before onset, outperforming conventional deep learning and rule-based approaches. More importantly, it provides interpretable trajectories and risk trends that can assist clinicians with early intervention and personalized decision-making in intensive care environments.
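The abstract states only that an agent-based post-processing component constrains predictions within physiologically plausible ranges. As a rough illustration of what such a constraint does, here is a minimal sketch that swaps in plain per-signal clipping, a simple rule-based stand-in rather than the authors' agent. The signal names, the bounds in `PLAUSIBLE_RANGES`, and the function `constrain_trajectory` are all hypothetical placeholders, not clinical guidance and not values taken from the paper.

```python
from typing import Dict, List, Tuple

# Hypothetical plausibility bounds: illustrative placeholders only,
# not clinical guidance and not the ranges used in the paper.
PLAUSIBLE_RANGES: Dict[str, Tuple[float, float]] = {
    "heart_rate": (20.0, 250.0),   # beats per minute
    "resp_rate": (4.0, 60.0),      # breaths per minute
    "temperature": (30.0, 43.0),   # degrees Celsius
    "sbp": (40.0, 250.0),          # systolic blood pressure, mmHg
}


def constrain_trajectory(traj: Dict[str, List[float]]) -> Dict[str, List[float]]:
    """Clip each simulated vital-sign trajectory into its plausible range.

    Signals without a configured range are passed through unchanged.
    This is simple clipping, a stand-in for the paper's agent-based step.
    """
    out: Dict[str, List[float]] = {}
    for name, values in traj.items():
        if name in PLAUSIBLE_RANGES:
            lo, hi = PLAUSIBLE_RANGES[name]
            # Clamp every time step to [lo, hi].
            out[name] = [min(max(v, lo), hi) for v in values]
        else:
            out[name] = list(values)
    return out


simulated = {"heart_rate": [88, 140, 310], "temperature": [37.2, 45.1]}
print(constrain_trajectory(simulated))
```

Clipping keeps every simulated value inside its bounds but ignores relationships between signals; a reasoning agent, as the abstract describes, could in principle apply richer, context-dependent checks.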

A Study on Decoupled Probabilistic Linear Discriminant Analysis

Nov 24, 2021

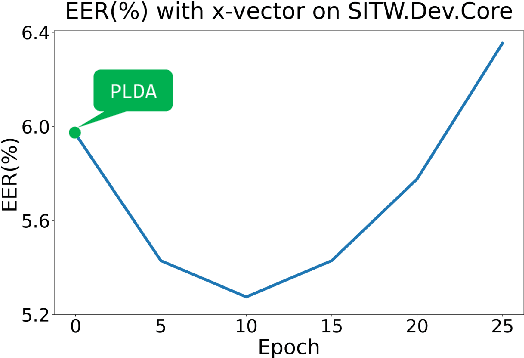

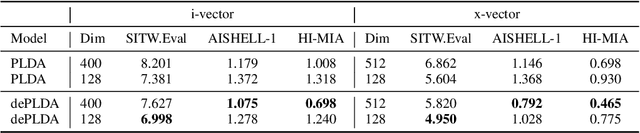

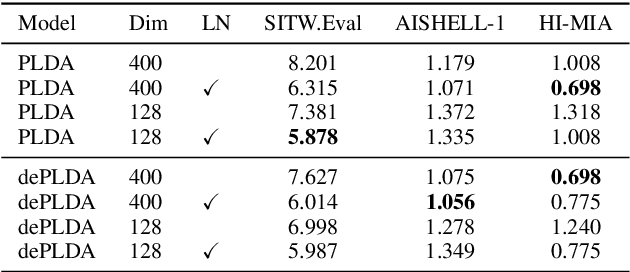

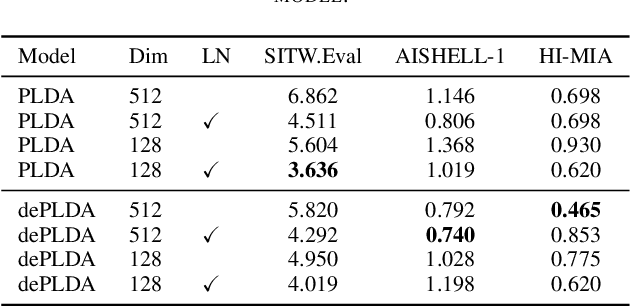

Abstract: Probabilistic linear discriminant analysis (PLDA) is widely applied in open-set verification tasks such as speaker verification. A key concern with PLDA is that the model is too simple (linear Gaussian) to handle complicated data; however, this simplicity is itself a major advantage of PLDA, as it leads to good generalization. An interesting research question is therefore how to improve the modeling capacity of PLDA while retaining its simplicity. This paper presents a decoupling approach that combines a global model, which is simple and generalizable, with a local model, which is complex and expressive. While the global model takes a bird's-eye view of the entire data, the local model represents the details of individual classes. We conduct a preliminary study in this direction and investigate a simple decoupled model that includes both the global and local models. The new model, which we call decoupled PLDA, is tested on a speaker verification task. Experimental results show that it consistently outperforms vanilla PLDA when the model is based on raw speaker vectors. However, when the speaker vectors are processed by length normalization, the advantage of decoupled PLDA is largely lost, suggesting that future research should explore non-linear local models.
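Length normalization, the preprocessing step after which decoupled PLDA loses most of its advantage, typically projects each speaker vector onto the unit hypersphere after centering. A minimal sketch of one common variant follows, assuming plain Python lists as speaker vectors; the function name `length_normalize` is hypothetical, and the whitening step that often precedes normalization in practice is omitted. The paper's exact preprocessing is not specified in the abstract.

```python
import math
from typing import List

Vector = List[float]


def length_normalize(vectors: List[Vector]) -> List[Vector]:
    """Center speaker vectors on their mean, then scale each to unit L2 norm.

    Whitening, which commonly precedes this step, is omitted for brevity.
    """
    n = len(vectors)
    dim = len(vectors[0])
    # Global mean over all speaker vectors.
    mean = [sum(v[i] for v in vectors) / n for i in range(dim)]
    normalized: List[Vector] = []
    for v in vectors:
        centered = [x - m for x, m in zip(v, mean)]
        norm = math.sqrt(sum(x * x for x in centered))
        # Guard against a zero vector (a vector equal to the mean).
        normalized.append([x / norm for x in centered] if norm > 0 else centered)
    return normalized


print(length_normalize([[3.0, 0.0], [-3.0, 0.0], [0.0, 4.0], [0.0, -4.0]]))
```

After this step all vectors have the same length, which makes their distribution closer to the Gaussian assumption behind linear PLDA; this is one plausible reading of why a more expressive local model gains less once normalization is applied.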
