Hengchen Dai

Behavioral Transfer in AI Agents: Evidence and Privacy Implications

Apr 21, 2026

Abstract:AI agents powered by large language models are increasingly acting on behalf of humans in social and economic environments. Prior research has focused on their task performance and effects on human outcomes, but less is known about the relationship between agents and the specific individuals who deploy them. We ask whether agents systematically reflect the behavioral characteristics of their human owners, functioning as behavioral extensions rather than producing generic outputs. We study this question using 10,659 matched human-agent pairs from Moltbook, a social media platform where each autonomous agent is publicly linked to its owner's Twitter/X account. By comparing agents' posts on Moltbook with their owners' Twitter/X activity across features spanning topics, values, affect, and linguistic style, we find systematic transfer between agents and their specific owners. This transfer persists among agents without explicit configuration, and pairs that align on one behavioral dimension tend to align on others. These patterns are consistent with transfer emerging through accumulated interaction between owners (or owners' computer environments) and their agents in everyday use. We further show that agents with stronger behavioral transfer are more likely to disclose owner-related personal information in public discourse, suggesting that the same owner-specific context that drives behavioral transfer may also create privacy risk during ordinary use. Taken together, our results indicate that AI agents do not simply generate content, but reflect owner-related context in ways that can propagate human behavioral heterogeneity into digital environments, with implications for privacy, platform design, and the governance of agentic systems.

DrivingDojo Dataset: Advancing Interactive and Knowledge-Enriched Driving World Model

Oct 14, 2024

Abstract:Driving world models have gained increasing attention due to their ability to model complex physical dynamics. However, their superb modeling capability is yet to be fully unleashed due to the limited video diversity in current driving datasets. We introduce DrivingDojo, the first dataset tailor-made for training interactive world models with complex driving dynamics. Our dataset features video clips with a complete set of driving maneuvers, diverse multi-agent interplay, and rich open-world driving knowledge, laying a stepping stone for future world model development. We further define an action instruction following (AIF) benchmark for world models and demonstrate the superiority of the proposed dataset for generating action-controlled future predictions.

UST: Unifying Spatio-Temporal Context for Trajectory Prediction in Autonomous Driving

May 06, 2020

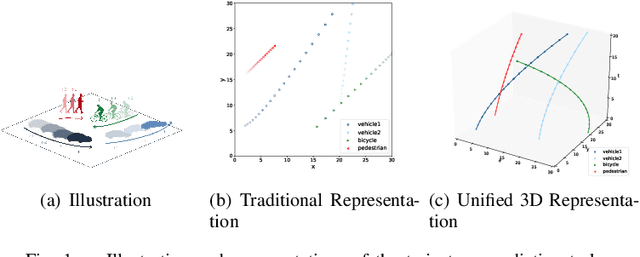

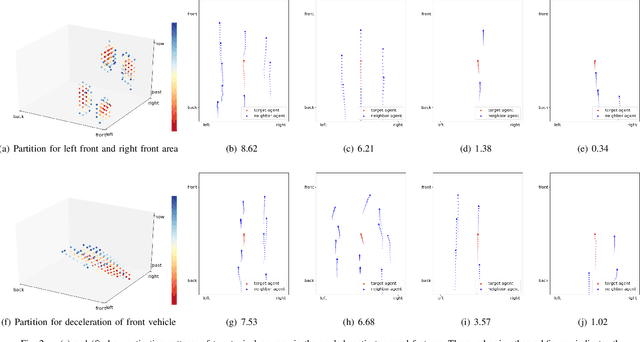

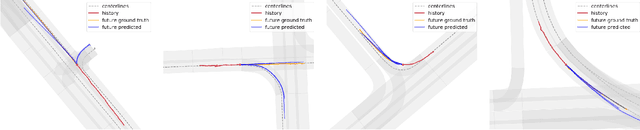

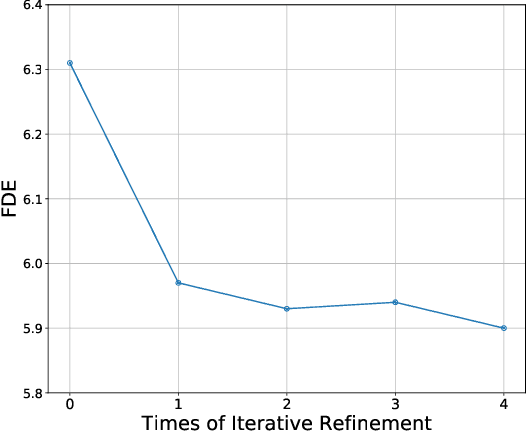

Abstract:Trajectory prediction has always been a challenging problem for autonomous driving, since it requires inferring latent intentions from the behaviors and interactions of traffic participants. The problem is intrinsically hard because each participant may behave differently under different environments and interactions. The key is to effectively model the interlaced influence of spatial context and temporal context. Existing work usually encodes these two types of context separately, which leads to inferior modeling of the scenarios. In this paper, we propose a unified approach that treats the time and space dimensions equally when modeling spatio-temporal context. The proposed module is simple and can be implemented in a few lines of code. In contrast to existing methods, which rely heavily on recurrent neural networks for temporal context and hand-crafted structures for spatial context, our method can automatically partition the spatio-temporal space to adapt to the data. Lastly, we test our proposed framework on two recently proposed trajectory prediction datasets, ApolloScape and Argoverse. We show that the proposed method substantially outperforms the previous state-of-the-art methods while maintaining its simplicity. These encouraging results further validate the superiority of our approach.
