Guojie Li

A Comparative Investigation of Thermodynamic Structure-Informed Neural Networks

Mar 26, 2026Abstract:Physics-informed neural networks (PINNs) offer a unified framework for solving both forward and inverse problems of differential equations, yet their performance and physical consistency strongly depend on how governing laws are incorporated. In this work, we present a systematic comparison of different thermodynamic structure-informed neural networks by incorporating various thermodynamics formulations, including Newtonian, Lagrangian, and Hamiltonian mechanics for conservative systems, as well as the Onsager variational principle and extended irreversible thermodynamics for dissipative systems. Through comprehensive numerical experiments on representative ordinary and partial differential equations, we quantitatively evaluate the impact of these formulations on accuracy, physical consistency, noise robustness, and interpretability. The results show that Newtonian-residual-based PINNs can reconstruct system states but fail to reliably recover key physical and thermodynamic quantities, whereas structure-preserving formulation significantly enhances parameter identification, thermodynamic consistency, and robustness. These findings provide practical guidance for principled design of thermodynamics-consistency model, and lay the groundwork for integrating more general nonequilibrium thermodynamic structures into physics-informed machine learning.

GroupKAN: Rethinking Nonlinearity with Grouped Spline-based KAN Modeling for Efficient Medical Image Segmentation

Nov 07, 2025

Abstract:Medical image segmentation requires models that are accurate, lightweight, and interpretable. Convolutional architectures lack adaptive nonlinearity and transparent decision-making, whereas Transformer architectures are hindered by quadratic complexity and opaque attention mechanisms. U-KAN addresses these challenges using Kolmogorov-Arnold Networks, achieving higher accuracy than both convolutional and attention-based methods, fewer parameters than Transformer variants, and improved interpretability compared to conventional approaches. However, its O(C^2) complexity due to full-channel transformations limits its scalability as the number of channels increases. To overcome this, we introduce GroupKAN, a lightweight segmentation network that incorporates two novel, structured functional modules: (1) Grouped KAN Transform, which partitions channels into G groups for multivariate spline mappings, reducing complexity to O(C^2/G), and (2) Grouped KAN Activation, which applies shared spline-based mappings within each channel group for efficient, token-wise nonlinearity. Evaluated on three medical benchmarks (BUSI, GlaS, and CVC), GroupKAN achieves an average IoU of 79.80 percent, surpassing U-KAN by +1.11 percent while requiring only 47.6 percent of the parameters (3.02M vs 6.35M), and shows improved interpretability.

Every Step Evolves: Scaling Reinforcement Learning for Trillion-Scale Thinking Model

Oct 21, 2025

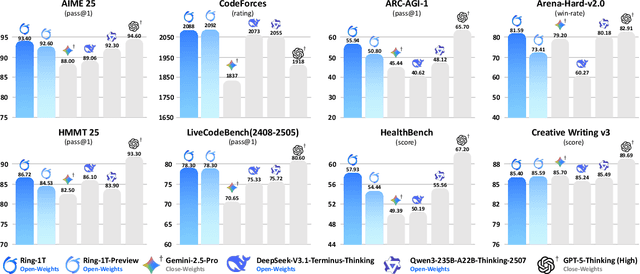

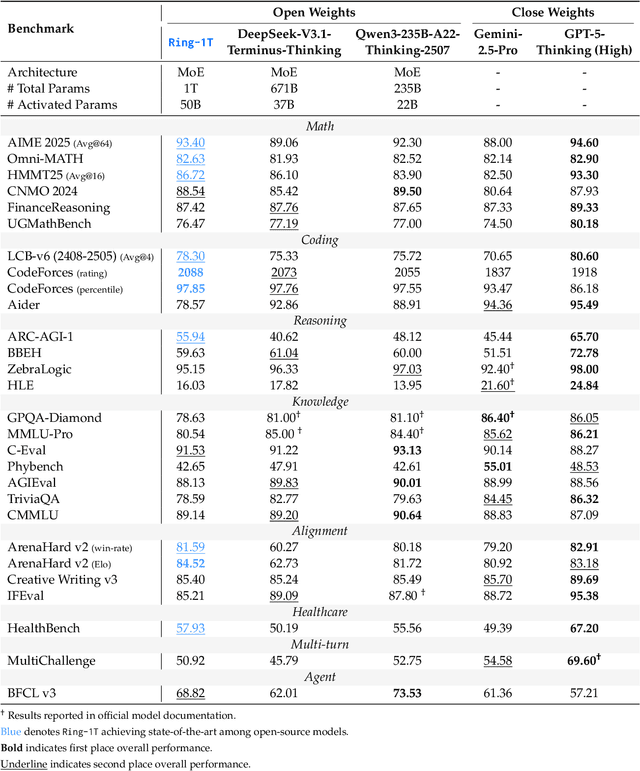

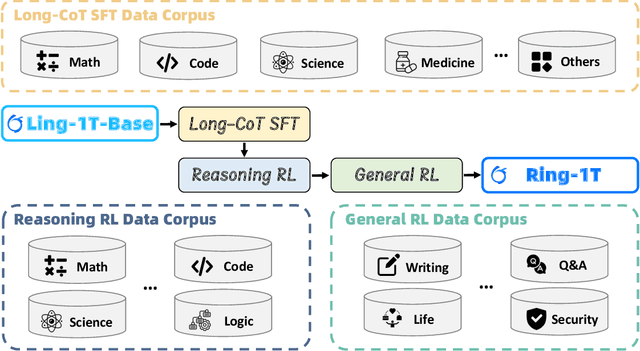

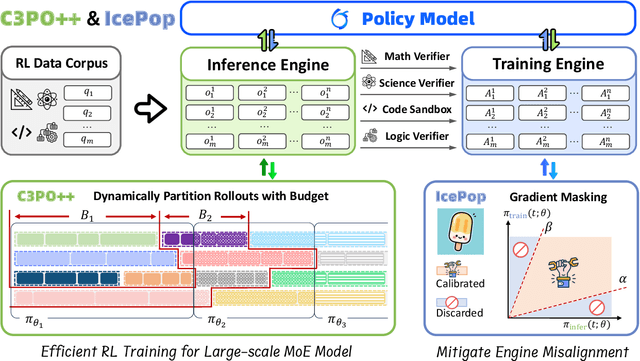

Abstract:We present Ring-1T, the first open-source, state-of-the-art thinking model with a trillion-scale parameter. It features 1 trillion total parameters and activates approximately 50 billion per token. Training such models at a trillion-parameter scale introduces unprecedented challenges, including train-inference misalignment, inefficiencies in rollout processing, and bottlenecks in the RL system. To address these, we pioneer three interconnected innovations: (1) IcePop stabilizes RL training via token-level discrepancy masking and clipping, resolving instability from training-inference mismatches; (2) C3PO++ improves resource utilization for long rollouts under a token budget by dynamically partitioning them, thereby obtaining high time efficiency; and (3) ASystem, a high-performance RL framework designed to overcome the systemic bottlenecks that impede trillion-parameter model training. Ring-1T delivers breakthrough results across critical benchmarks: 93.4 on AIME-2025, 86.72 on HMMT-2025, 2088 on CodeForces, and 55.94 on ARC-AGI-v1. Notably, it attains a silver medal-level result on the IMO-2025, underscoring its exceptional reasoning capabilities. By releasing the complete 1T parameter MoE model to the community, we provide the research community with direct access to cutting-edge reasoning capabilities. This contribution marks a significant milestone in democratizing large-scale reasoning intelligence and establishes a new baseline for open-source model performance.

MEP-Net: Generating Solutions to Scientific Problems with Limited Knowledge by Maximum Entropy Principle

Dec 03, 2024

Abstract:Maximum entropy principle (MEP) offers an effective and unbiased approach to inferring unknown probability distributions when faced with incomplete information, while neural networks provide the flexibility to learn complex distributions from data. This paper proposes a novel neural network architecture, the MEP-Net, which combines the MEP with neural networks to generate probability distributions from moment constraints. We also provide a comprehensive overview of the fundamentals of the maximum entropy principle, its mathematical formulations, and a rigorous justification for its applicability for non-equilibrium systems based on the large deviations principle. Through fruitful numerical experiments, we demonstrate that the MEP-Net can be particularly useful in modeling the evolution of probability distributions in biochemical reaction networks and in generating complex distributions from data.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge