Chris Atkeson

Learning Deformable Object Manipulation Using Task-Level Iterative Learning Control

Feb 24, 2026Abstract:Dynamic manipulation of deformable objects is challenging for humans and robots because they have infinite degrees of freedom and exhibit underactuated dynamics. We introduce a Task-Level Iterative Learning Control method for dynamic manipulation of deformable objects. We demonstrate this method on a non-planar rope manipulation task called the flying knot. Using a single human demonstration and a simplified rope model, the method learns directly on hardware without reliance on large amounts of demonstration data or massive amounts of simulation. At each iteration, the algorithm constructs a local inverse model of the robot and rope by solving a quadratic program to propagate task-space errors into action updates. We evaluate performance across 7 different kinds of ropes, including chain, latex surgical tubing, and braided and twisted ropes, ranging in thicknesses of 7--25mm and densities of 0.013--0.5 kg/m. Learning achieves a 100\% success rate within 10 trials on all ropes. Furthermore, the method can successfully transfer between most rope types in approximately 2--5 trials. https://flying-knots.github.io

Using Memory-Based Learning to Solve Tasks with State-Action Constraints

Mar 08, 2023

Abstract:Tasks where the set of possible actions depend discontinuously on the state pose a significant challenge for current reinforcement learning algorithms. For example, a locked door must be first unlocked, and then the handle turned before the door can be opened. The sequential nature of these tasks makes obtaining final rewards difficult, and transferring information between task variants using continuous learned values such as weights rather than discrete symbols can be inefficient. Our key insight is that agents that act and think symbolically are often more effective in dealing with these tasks. We propose a memory-based learning approach that leverages the symbolic nature of constraints and temporal ordering of actions in these tasks to quickly acquire and transfer high-level information. We evaluate the performance of memory-based learning on both real and simulated tasks with approximately discontinuous constraints between states and actions, and show our method learns to solve these tasks an order of magnitude faster than both model-based and model-free deep reinforcement learning methods.

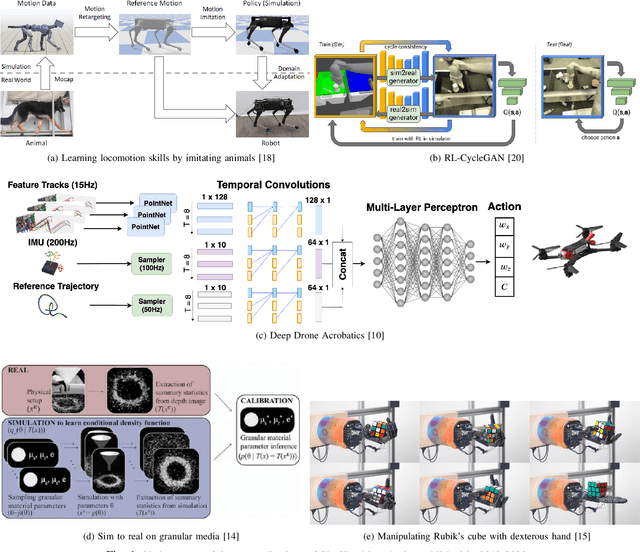

Perspectives on Sim2Real Transfer for Robotics: A Summary of the R:SS 2020 Workshop

Dec 07, 2020

Abstract:This report presents the debates, posters, and discussions of the Sim2Real workshop held in conjunction with the 2020 edition of the "Robotics: Science and System" conference. Twelve leaders of the field took competing debate positions on the definition, viability, and importance of transferring skills from simulation to the real world in the context of robotics problems. The debaters also joined a large panel discussion, answering audience questions and outlining the future of Sim2Real in robotics. Furthermore, we invited extended abstracts to this workshop which are summarized in this report. Based on the workshop, this report concludes with directions for practitioners exploiting this technology and for researchers further exploring open problems in this area.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge