Challenger Mishra

Discovering mathematical concepts through a multi-agent system

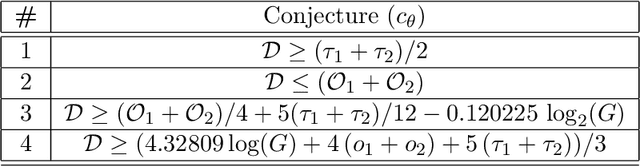

Mar 04, 2026

Abstract:Mathematical concepts emerge through an interplay of processes, including experimentation, efforts at proof, and counterexamples. In this paper, we present a new multi-agent model for computational mathematical discovery based on this observation. Our system, conceived with research in mind, poses its own conjectures and then attempts to prove them, making decisions informed by this feedback and an evolving data distribution. Inspired by the history of Euler's conjecture for polyhedra and an open challenge in the literature, we benchmark with the task of autonomously recovering the concept of homology from polyhedral data and knowledge of linear algebra. Our system completes this learning problem. Most importantly, the experiments are ablations, statistically testing the value of the complete dynamic and controlling for experimental setup. They support our main claim: that the optimisation of the right combination of local processes can lead to surprisingly well-aligned notions of mathematical interestingness.

Hermitian Yang--Mills connections on general vector bundles: geometry and physical Yukawa couplings

Dec 11, 2025

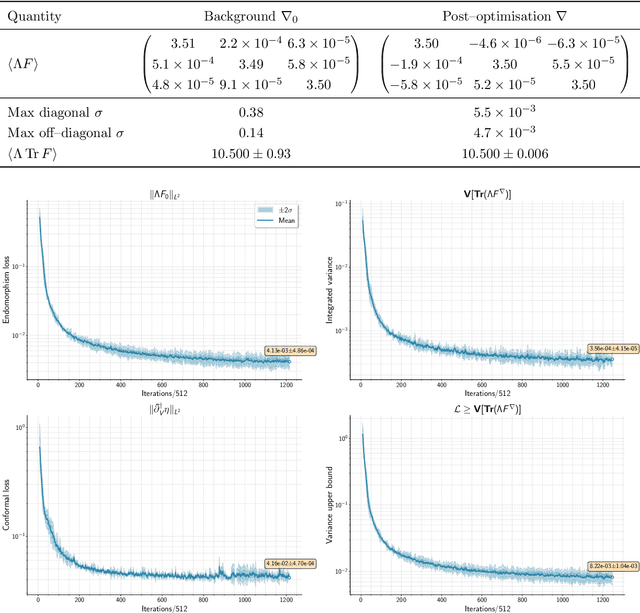

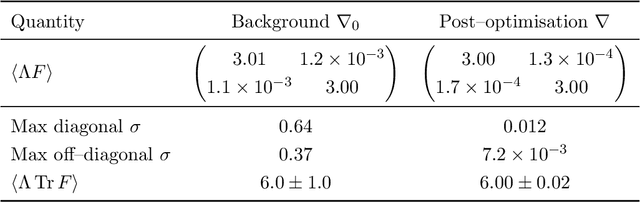

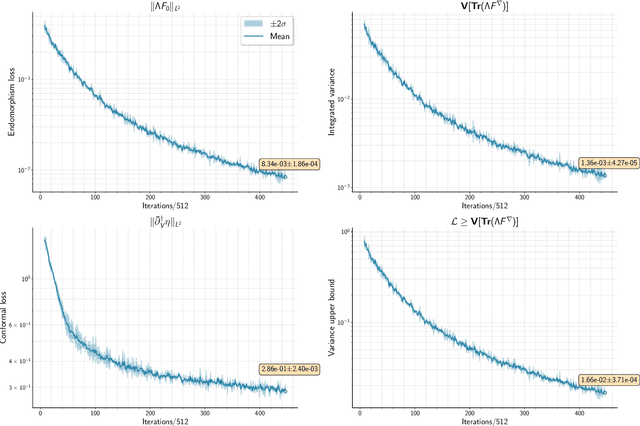

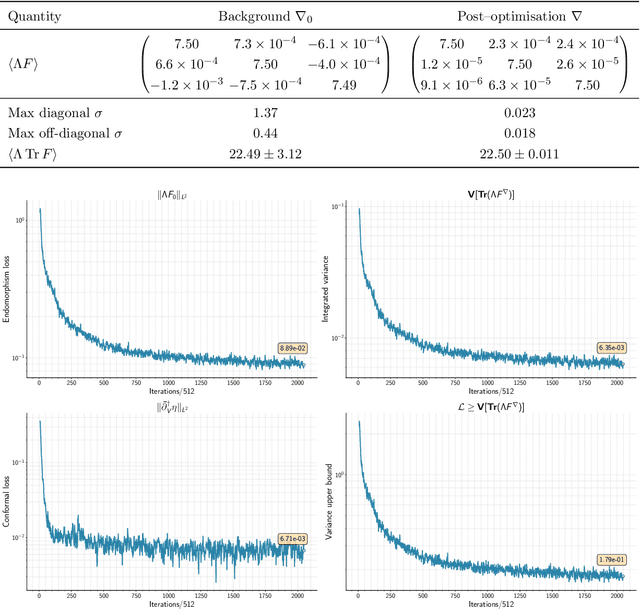

Abstract:We compute solutions to the Hermitian Yang-Mills equations on holomorphic vector bundles $V$ via an alternating optimisation procedure founded on geometric machine learning. The proposed method is fully general with respect to the rank and structure group of $V$, requiring only the ability to enumerate a basis of global sections for a given bundle. This enables us to compute the physically normalised Yukawa couplings in a broad class of heterotic string compactifications. Using this method, we carry out this computation in full for a heterotic compactification incorporating a gauge bundle with non-Abelian structure group.

A Matter of Interest: Understanding Interestingness of Math Problems in Humans and Language Models

Nov 11, 2025

Abstract:The evolution of mathematics has been guided in part by interestingness. From researchers choosing which problems to tackle next, to students deciding which ones to engage with, people's choices are often guided by judgments about how interesting or challenging problems are likely to be. As AI systems, such as LLMs, increasingly participate in mathematics with people -- whether for advanced research or education -- it becomes important to understand how well their judgments align with human ones. Our work examines this alignment through two empirical studies of human and LLM assessment of mathematical interestingness and difficulty, spanning a range of mathematical experience. We study two groups: participants from a crowdsourcing platform and International Math Olympiad competitors. We show that while many LLMs appear to broadly agree with human notions of interestingness, they mostly do not capture the distribution observed in human judgments. Moreover, most LLMs only somewhat align with why humans find certain math problems interesting, showing weak correlation with human-selected interestingness rationales. Together, our findings highlight both the promises and limitations of current LLMs in capturing human interestingness judgments for mathematical AI thought partnerships.

Symbolic Approximations to Ricci-flat Metrics Via Extrinsic Symmetries of Calabi-Yau Hypersurfaces

Dec 27, 2024

Abstract:Ever since Yau's non-constructive existence proof of Ricci-flat metrics on Calabi-Yau manifolds, finding their explicit construction remains a major obstacle to development of both string theory and algebraic geometry. Recent computational approaches employ machine learning to create novel neural representations for approximating these metrics, offering high accuracy but limited interpretability. In this paper, we analyse machine learning approximations to flat metrics of Fermat Calabi-Yau n-folds and some of their one-parameter deformations in three dimensions in order to discover their new properties. We formalise cases in which the flat metric has more symmetries than the underlying manifold, and prove that these symmetries imply that the flat metric admits a surprisingly compact representation for certain choices of complex structure moduli. We show that such symmetries uniquely determine the flat metric on certain loci, for which we present an analytic form. We also incorporate our theoretical results into neural networks to achieve state-of-the-art reductions in Ricci curvature for multiple Calabi-Yau manifolds. We conclude by distilling the ML models to obtain for the first time closed form expressions for K\"ahler metrics with near-zero scalar curvature.

cymyc -- Calabi-Yau Metrics, Yukawas, and Curvature

Oct 25, 2024

Abstract:We introduce \texttt{cymyc}, a high-performance Python library for numerical investigation of the geometry of a large class of string compactification manifolds and their associated moduli spaces. We develop a well-defined geometric ansatz to numerically model tensor fields of arbitrary degree on a large class of Calabi-Yau manifolds. \texttt{cymyc} includes a machine learning component which incorporates this ansatz to model tensor fields of interest on these spaces by finding an approximate solution to the system of partial differential equations they should satisfy.

Calabi-Yau metrics through Grassmannian learning and Donaldson's algorithm

Oct 15, 2024

Abstract:Motivated by recent progress in the problem of numerical K\"ahler metrics, we survey machine learning techniques in this area, discussing both advantages and drawbacks. We then revisit the algebraic ansatz pioneered by Donaldson. Inspired by his work, we present a novel approach to obtaining Ricci-flat approximations to K\"ahler metrics, applying machine learning within a `principled' framework. In particular, we use gradient descent on the Grassmannian manifold to identify an efficient subspace of sections for calculation of the metric. We combine this approach with both Donaldson's algorithm and learning on the $h$-matrix itself (the latter method being equivalent to gradient descent on the fibre bundle of Hermitian metrics on the tautological bundle over the Grassmannian). We implement our methods on the Dwork family of threefolds, commenting on the behaviour at different points in moduli space. In particular, we observe the emergence of nontrivial local minima as the moduli parameter is increased.

Learning to be Simple

Dec 08, 2023

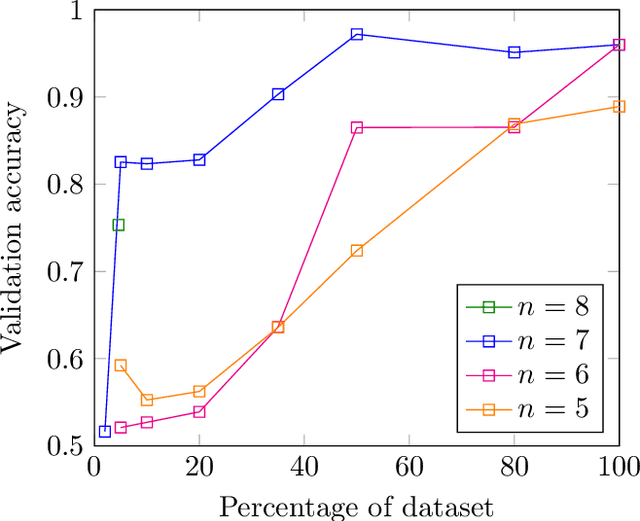

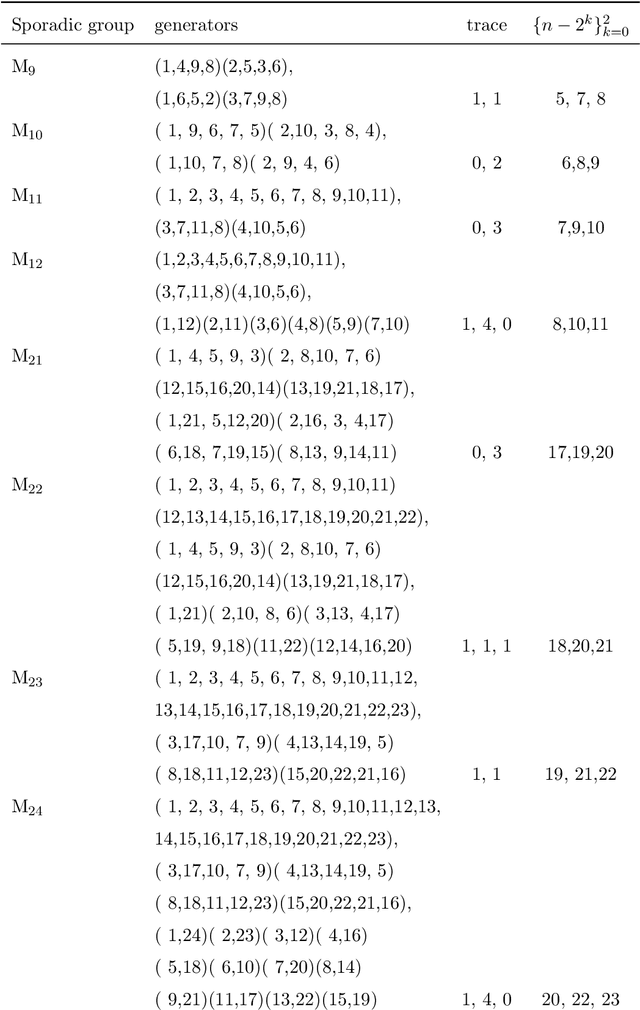

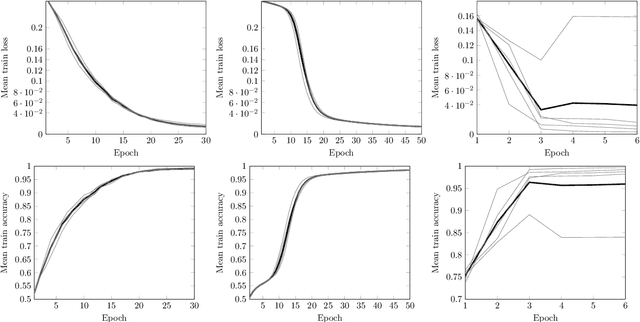

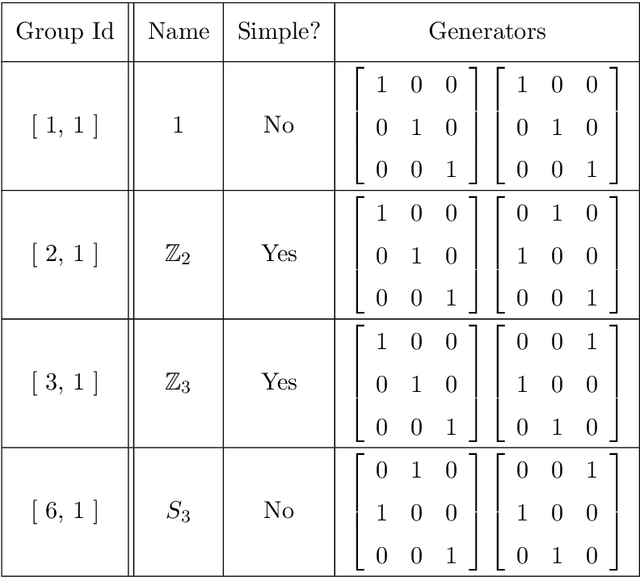

Abstract:In this work we employ machine learning to understand structured mathematical data involving finite groups and derive a theorem about necessary properties of generators of finite simple groups. We create a database of all 2-generated subgroups of the symmetric group on n-objects and conduct a classification of finite simple groups among them using shallow feed-forward neural networks. We show that this neural network classifier can decipher the property of simplicity with varying accuracies depending on the features. Our neural network model leads to a natural conjecture concerning the generators of a finite simple group. We subsequently prove this conjecture. This new toy theorem comments on the necessary properties of generators of finite simple groups. We show this explicitly for a class of sporadic groups for which the result holds. Our work further makes the case for a machine motivated study of algebraic structures in pure mathematics and highlights the possibility of generating new conjectures and theorems in mathematics with the aid of machine learning.

Mathematical conjecture generation using machine intelligence

Jun 12, 2023

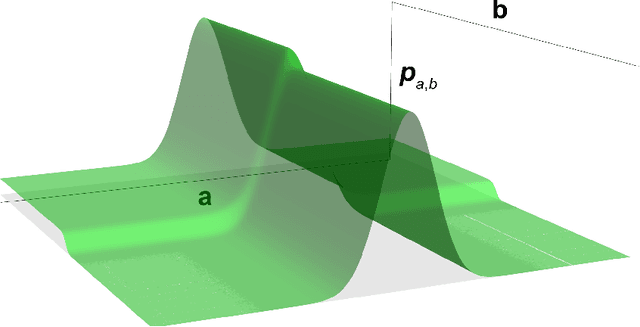

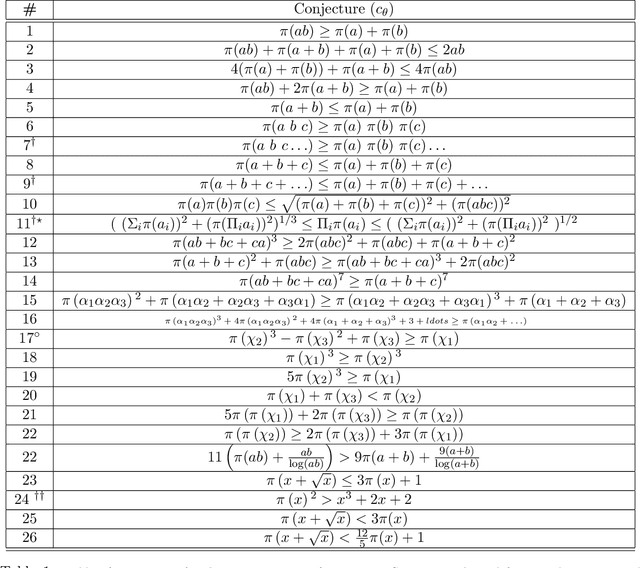

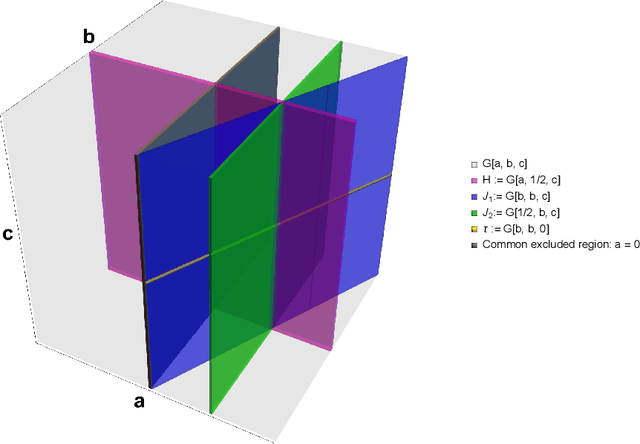

Abstract:Conjectures have historically played an important role in the development of pure mathematics. We propose a systematic approach to finding abstract patterns in mathematical data, in order to generate conjectures about mathematical inequalities, using machine intelligence. We focus on strict inequalities of type f < g and associate them with a vector space. By geometrising this space, which we refer to as a conjecture space, we prove that this space is isomorphic to a Banach manifold. We develop a structural understanding of this conjecture space by studying linear automorphisms of this manifold and show that this space admits several free group actions. Based on these insights, we propose an algorithmic pipeline to generate novel conjectures using geometric gradient descent, where the metric is informed by the invariances of the conjecture space. As proof of concept, we give a toy algorithm to generate novel conjectures about the prime counting function and diameters of Cayley graphs of non-abelian simple groups. We also report private communications with colleagues in which some conjectures were proved, and highlight that some conjectures generated using this procedure are still unproven. Finally, we propose a pipeline of mathematical discovery in this space and highlight the importance of domain expertise in this pipeline.

A physics-informed search for metric solutions to Ricci flow, their embeddings, and visualisation

Nov 30, 2022

Abstract:Neural networks with PDEs embedded in their loss functions (physics-informed neural networks) are employed as function approximators to find solutions to the Ricci flow (a curvature based evolution) of Riemannian metrics. A general method is developed and applied to the real torus. The validity of the solution is verified by comparing the time evolution of scalar curvature with that found using a standard PDE solver, which decreases to a constant value of 0 on the whole manifold. We also consider certain solitonic solutions to the Ricci flow equation in two real dimensions. We create visualisations of the flow by utilising an embedding into $\mathbb{R}^3$. Snapshots of highly accurate numerical evolution of the toroidal metric over time are reported. We provide guidelines on applications of this methodology to the problem of determining Ricci-flat Calabi--Yau metrics in the context of String theory, a long-standing problem in complex geometry.

Machine Learned Calabi--Yau Metrics and Curvature

Nov 17, 2022

Abstract:Finding Ricci-flat (Calabi--Yau) metrics is a long-standing problem in geometry with deep implications for string theory and phenomenology. A new attack on this problem uses neural networks to engineer approximations to the Calabi--Yau metric within a given K\"ahler class. In this paper we investigate numerical Ricci-flat metrics over smooth and singular K3 surfaces and Calabi--Yau threefolds. Using these Ricci-flat metric approximations for the Cefal\'u and Dwork family of quartic twofolds and the Dwork family of quintic threefolds, we study characteristic forms on these geometries. Using persistent homology, we show that high curvature regions of the manifolds form clusters near the singular points, but also elsewhere. For our neural network approximations, we observe a Bogomolov--Yau type inequality $3c_2 \geq c_1^2$ and observe an identity when our geometries have isolated $A_1$ type singularities. We sketch a proof that $\chi(X~\smallsetminus~\mathrm{Sing}\,{X}) + 2~|\mathrm{Sing}\,{X}| = 24$ also holds for our numerical approximations.
