Athresh Karanam

A Unified Framework for Human-Allied Learning of Probabilistic Circuits

May 03, 2024

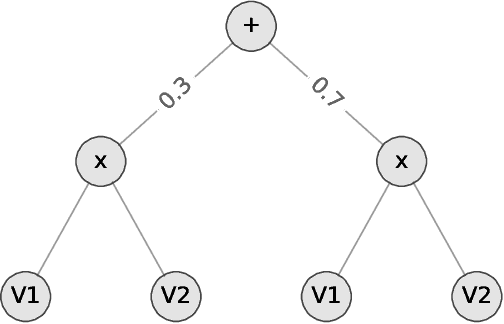

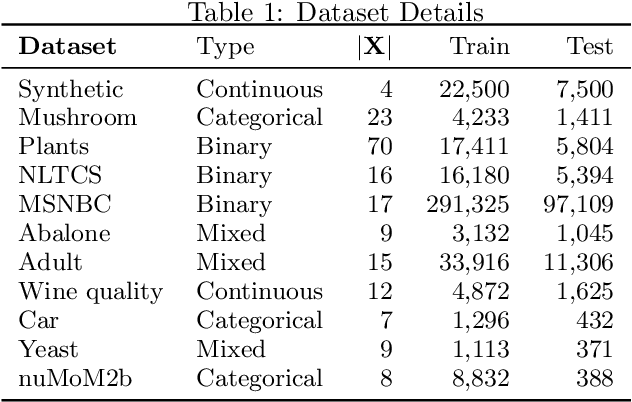

Abstract: Probabilistic Circuits (PCs) have emerged as an efficient framework for representing and learning complex probability distributions. Nevertheless, the existing body of research on PCs predominantly concentrates on data-driven parameter learning, often neglecting the potential of knowledge-intensive learning, a particular issue in data-scarce/knowledge-rich domains such as healthcare. To bridge this gap, we propose a novel unified framework that can systematically integrate diverse domain knowledge into the parameter learning process of PCs. Experiments on several benchmarks as well as real-world datasets show that our proposed framework can both effectively and efficiently leverage domain knowledge to achieve superior performance compared to purely data-driven learning approaches.

Explaining Deep Tractable Probabilistic Models: The sum-product network case

Oct 19, 2021

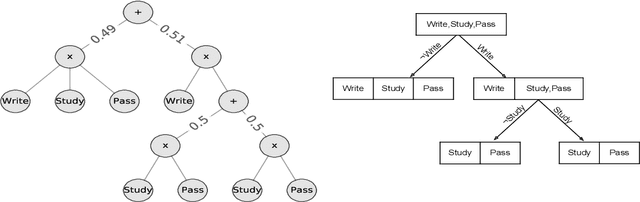

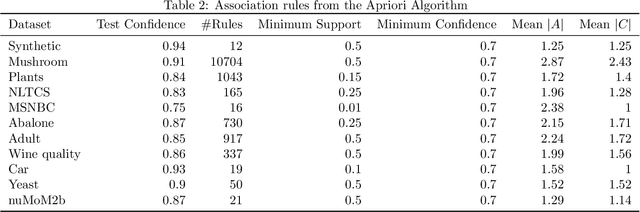

Abstract: We consider the problem of explaining a tractable deep probabilistic model, the Sum-Product Network (SPN). To this effect, we define the notion of a context-specific independence tree (CSI-tree) and present an iterative algorithm that converts an SPN to a CSI-tree. The resulting CSI-tree is both interpretable and explainable to the domain expert. To further compress the tree, we approximate the CSIs by fitting a supervised classifier. Our extensive empirical evaluations on synthetic, standard, and real-world clinical data sets demonstrate that the resulting models exhibit superior explainability without loss in performance.

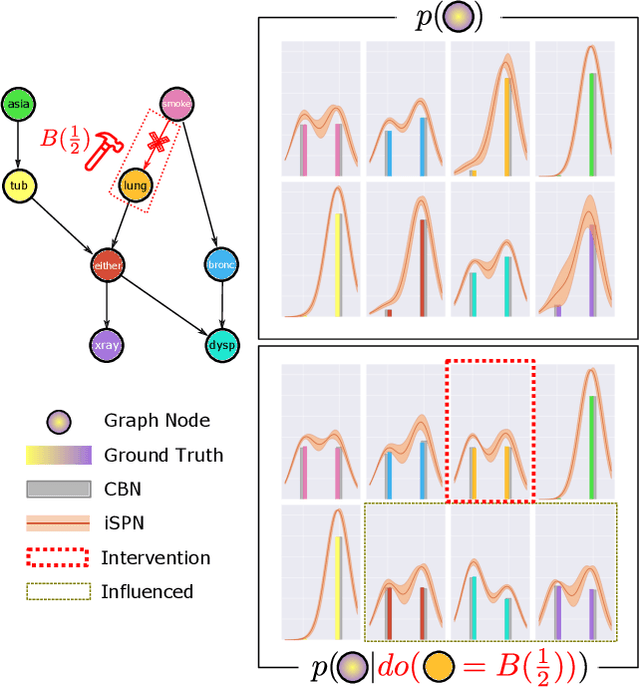

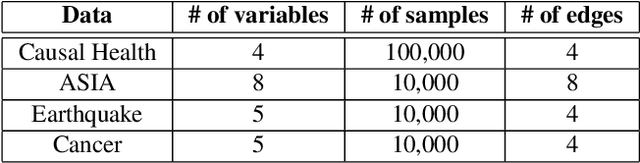

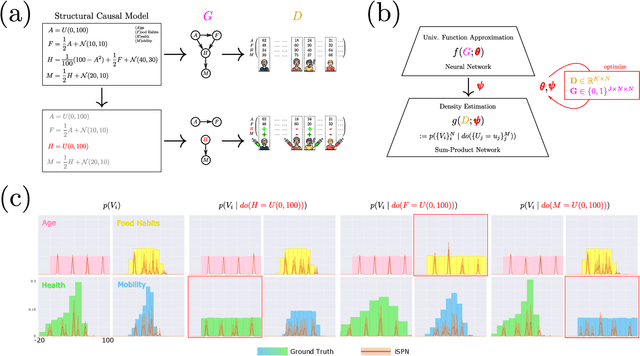

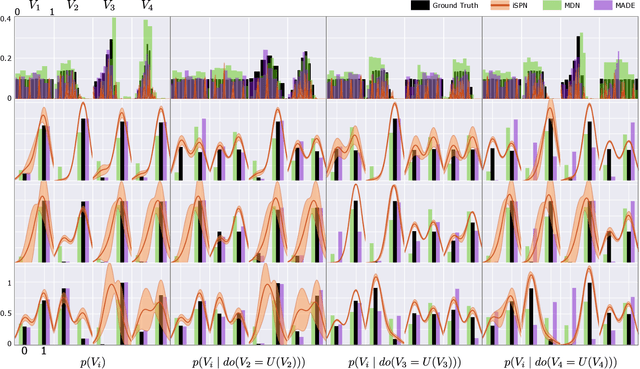

Interventional Sum-Product Networks: Causal Inference with Tractable Probabilistic Models

Feb 23, 2021

Abstract: While probabilistic models are an important tool for studying causality, doing so suffers from the intractability of inference. As a step towards tractable causal models, we consider the problem of learning interventional distributions using sum-product networks (SPNs) that are over-parameterized by gate functions, e.g., neural networks. Providing an arbitrarily intervened causal graph as input, effectively subsuming Pearl's do-operator, the gate function predicts the parameters of the SPN. The resulting interventional SPNs are motivated and illustrated by a structural causal model themed around personal health. Our empirical evaluation on three benchmark data sets as well as a synthetic health data set clearly demonstrates that interventional SPNs indeed are both expressive in modelling and flexible in adapting to the interventions.
