Aoyu Liu

ExpoCM: Exposure-Aware One-Step Generative Single-Image HDR Reconstruction

May 04, 2026

Abstract: Single-image HDR reconstruction aims to recover high dynamic range radiance from a single low dynamic range (LDR) input, but remains highly ill-posed due to detail saturation in over-exposed regions and noise amplification in under-exposed areas. While recent diffusion-based approaches offer powerful generative priors, they often overlook the exposure-dependent nature of the degradation and incur substantial computational costs from iterative sampling. To address these challenges, we propose ExpoCM, a novel one-step generative HDR reconstruction framework that reformulates HDR reconstruction as a Probability Flow ODE (PF-ODE) and constructs exposure-aware consistency trajectories via exposure-dependent perturbations. Specifically, a soft exposure mask is first constructed to separate the LDR image into over-, under-, and well-exposed regions. Based on this partition, region-conditioned consistency trajectories are designed to hallucinate saturated details, suppress noise in dark regions, and preserve reliable structures within a single, distillation-free inference step. To further enhance perceptual quality, we introduce an Exposure-guided Luminance-Chromaticity Loss in the CIE $\text{L}^*\text{a}^*\text{b}^*$ space, which assigns exposure-aware weights to luminance and chromaticity components, effectively mitigating brightness bias and color drift. Extensive experiments on the HDR-REAL, HDR-EYE, and AIM2025 benchmarks demonstrate that ExpoCM achieves state-of-the-art fidelity and perceptual accuracy, while enabling over 400$\times$ and 20$\times$ faster inference compared to DDPM (1000 steps) and DDIM (50 steps), respectively.
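The abstract names two exposure-dependent components without giving their formulas: a soft mask that splits the LDR input into over-, under-, and well-exposed regions, and a loss that weights luminance (L*) and chromaticity (a*, b*) errors per region. The Python sketch below illustrates one possible construction under stated assumptions; it is not the authors' formulation. The sigmoid thresholds `t_over` and `t_under`, the sharpness `k`, the per-region weights in `REGION_WEIGHTS`, and all function names are hypothetical, and predictions are assumed to be tone-mapped to a [0, 1] display range before the loss is applied. Only the sRGB-to-L*a*b* conversion follows standard (D65) formulas.

```python
import math

# Hypothetical per-region weights (lambda_L, lambda_ab); NOT values from the paper.
REGION_WEIGHTS = {"over": (2.0, 1.0), "under": (1.0, 2.0), "well": (1.0, 1.0)}


def srgb_to_linear(c):
    """Inverse sRGB transfer function (IEC 61966-2-1)."""
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def rgb_to_lab(r, g, b):
    """Convert one sRGB pixel to CIE L*a*b* under a D65 white point."""
    rl, gl, bl = (srgb_to_linear(v) for v in (r, g, b))
    # Linear sRGB -> CIE XYZ (D65 primaries)
    x = 0.4124 * rl + 0.3576 * gl + 0.1805 * bl
    y = 0.2126 * rl + 0.7152 * gl + 0.0722 * bl
    z = 0.0193 * rl + 0.1192 * gl + 0.9505 * bl
    xn, yn, zn = 0.95047, 1.0, 1.08883  # D65 reference white

    def f(t):
        d = 6 / 29
        return t ** (1 / 3) if t > d ** 3 else t / (3 * d ** 2) + 4 / 29

    fx, fy, fz = f(x / xn), f(y / yn), f(z / zn)
    return 116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz)


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def soft_exposure_mask(r, g, b, t_over=0.9, t_under=0.1, k=40.0):
    """Hypothetical soft (over, under, well) weights for one LDR pixel; they sum to 1."""
    luma = 0.2126 * r + 0.7152 * g + 0.0722 * b  # Rec. 709 luma of the LDR pixel
    w_over = sigmoid(k * (luma - t_over))    # approaches 1 near saturation
    w_under = sigmoid(k * (t_under - luma))  # approaches 1 in dark regions
    w_well = (1.0 - w_over) * (1.0 - w_under)
    total = w_over + w_under + w_well  # normalize so the three weights sum to 1
    return w_over / total, w_under / total, w_well / total


def exposure_lab_loss(pred, target, ldr, weights=REGION_WEIGHTS):
    """Mean exposure-weighted L* and a*b* error over lists of (r, g, b) pixels.

    pred/target are assumed tone-mapped to [0, 1]; ldr supplies the region masks.
    """
    total = 0.0
    for p, t, x in zip(pred, target, ldr):
        lp, ap, bp = rgb_to_lab(*p)
        lt, at, bt = rgb_to_lab(*t)
        d_lum = abs(lp - lt)                     # luminance (L*) error
        d_chroma = math.hypot(ap - at, bp - bt)  # chromaticity (a*, b*) error
        for m, region in zip(soft_exposure_mask(*x), ("over", "under", "well")):
            lam_l, lam_ab = weights[region]
            total += m * (lam_l * d_lum + lam_ab * d_chroma)
    return total / len(pred)
```

Sigmoid edges rather than hard thresholds make the region weights vary smoothly with brightness, so pixels near a boundary are shared between regions instead of switching abruptly. In this sketch the mask is computed from the fixed LDR input, so it acts purely as a per-pixel weighting of the two error terms.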

Spatial-Temporal Interactive Dynamic Graph Convolution Network for Traffic Forecasting

May 19, 2022

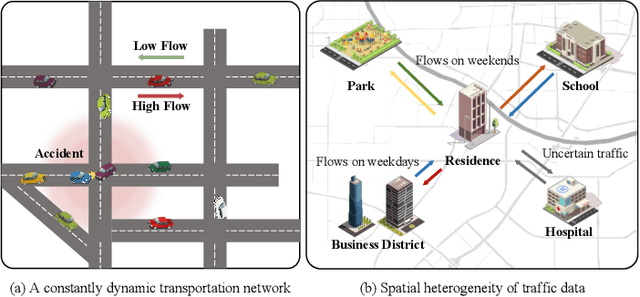

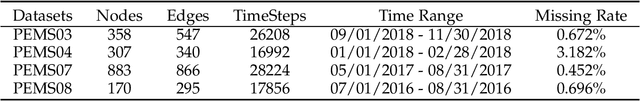

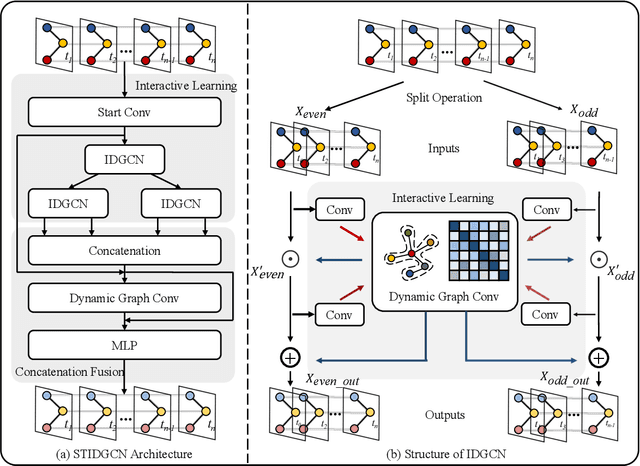

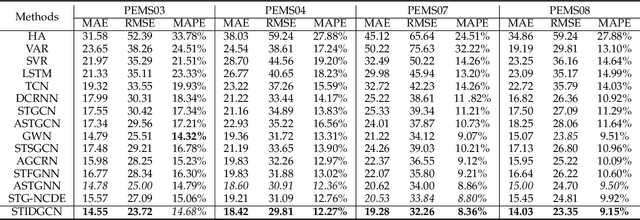

Abstract: Accurate traffic forecasting is essential for smart cities to achieve traffic flow control, route planning, and detection. Although many spatial-temporal methods have been proposed, they fall short in capturing the spatial-temporal dependence of traffic data synchronously. In addition, most methods ignore the dynamically changing correlations between road network nodes that arise as traffic data changes. To address these challenges, we propose a neural network-based Spatial-Temporal Interactive Dynamic Graph Convolutional Network (STIDGCN) for traffic forecasting. In STIDGCN, we propose an interactive dynamic graph convolution structure, which first divides the sequences at intervals and then captures the spatial-temporal dependence of the traffic data simultaneously through an interactive learning strategy, enabling effective long-term prediction. We also propose a novel dynamic graph convolution module consisting of a graph generator and a fusion graph convolution. The module uses the input traffic data and the pre-defined graph structure to generate a graph structure, then fuses it with a defined adaptive adjacency matrix; this fills in the pre-defined graph structure and simulates the generation of dynamic associations between nodes in the road network. Extensive experiments on four real-world traffic flow datasets demonstrate that STIDGCN outperforms state-of-the-art baselines.
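The abstract describes the dynamic graph convolution module only at a high level. The Python sketch below shows one plausible reading, and every concrete choice in it is an assumption rather than the paper's design: "divides the sequences at intervals" is taken as an even/odd split of time steps; the graph generator scores node pairs by the negative squared distance between their input series; the adaptive adjacency matrix uses the common softmax(ReLU(E1 E2^T)) form over learned node embeddings; and fusion is an element-wise max with the pre-defined graph followed by a convex mix controlled by a hypothetical `alpha`. All function names are hypothetical.

```python
import math


def split_at_intervals(seq):
    """Split a time series into even- and odd-indexed subsequences (assumed reading)."""
    return seq[0::2], seq[1::2]


def softmax_rows(m):
    """Row-wise softmax so every row of the matrix sums to 1."""
    out = []
    for row in m:
        top = max(row)  # subtract the row max for numerical stability
        exps = [math.exp(v - top) for v in row]
        total = sum(exps)
        out.append([v / total for v in exps])
    return out


def generate_graph(x):
    """Hypothetical graph generator: x[i] is node i's recent traffic series.

    Nodes with similar recent traffic receive larger edge weights.
    """
    n = len(x)
    scores = [[-sum((a - b) ** 2 for a, b in zip(x[i], x[j])) for j in range(n)]
              for i in range(n)]
    return softmax_rows(scores)


def adaptive_adjacency(e1, e2):
    """Assumed adaptive matrix softmax(ReLU(E1 E2^T)) from node embeddings."""
    scores = [[max(0.0, sum(a * b for a, b in zip(u, v))) for v in e2] for u in e1]
    return softmax_rows(scores)


def fuse_graphs(a_pre, a_gen, a_adp, alpha=0.5):
    """Fill the pre-defined graph with generated edges, then mix with the adaptive one."""
    n = len(a_pre)
    filled = [[max(a_pre[i][j], a_gen[i][j]) for j in range(n)] for i in range(n)]
    normed = []
    for row in filled:  # row-normalize so the filled graph averages over neighbours
        s = sum(row)
        normed.append([v / s for v in row])
    return [[alpha * normed[i][j] + (1 - alpha) * a_adp[i][j] for j in range(n)]
            for i in range(n)]


def graph_conv(a, h):
    """One propagation step H' = A H: each node aggregates its neighbours' features."""
    return [[sum(a[i][j] * h[j][k] for j in range(len(h))) for k in range(len(h[0]))]
            for i in range(len(a))]
```

Taking the element-wise max keeps every pre-defined road link while adding generated ones, which is one way to read "filling" the pre-defined graph; an additive or gated fusion would be equally consistent with the abstract. Because each fused row sums to 1, `graph_conv` computes a weighted average of neighbour features.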