Alina Zare

Histogram Layers for Texture Analysis

Jan 16, 2020

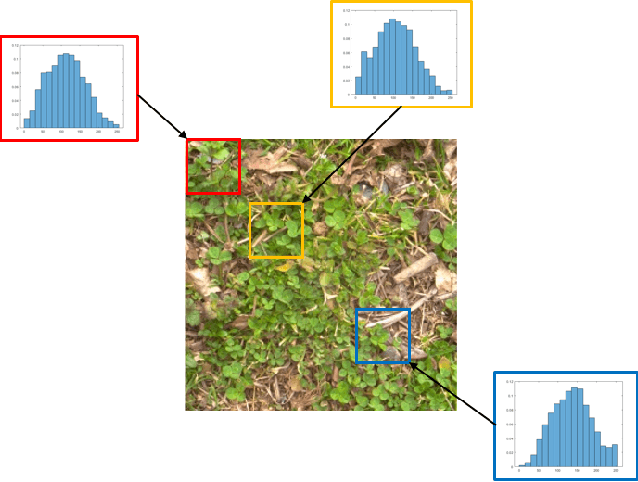

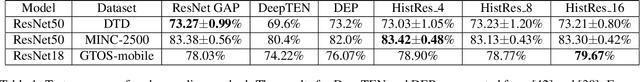

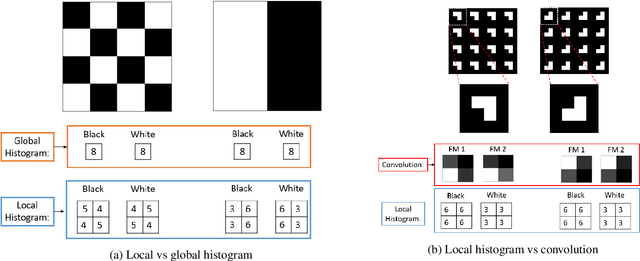

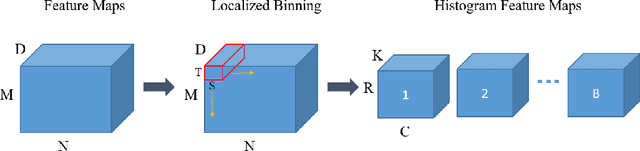

Abstract: We present a histogram layer for artificial neural networks (ANNs). An essential aspect of texture analysis is the extraction of features that describe the distribution of values in local spatial regions. The proposed histogram layer leverages the spatial distribution of features for texture analysis, and its parameters are estimated during backpropagation. We compare our method with state-of-the-art texture encoding methods such as the Deep Encoding Pooling Network (DEP) and the Deep Texture Encoding Network (DeepTEN) on three texture datasets: (1) the Describable Texture Dataset (DTD); (2) an extension of the ground terrain in outdoor scenes dataset (GTOS-mobile); and (3) a subset of the Materials in Context (MINC-2500) dataset. Results indicate that including the proposed histogram layer improves performance. The source code for the histogram layer is publicly available.
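One way to picture a differentiable histogram layer is with soft bin memberships: each feature value votes for every bin through a radial basis function (RBF) of its distance to the bin center, and the votes are averaged over local spatial windows. The sketch below assumes RBF bins with parameters `centers` and `widths` (the quantities a network would learn by backpropagation) and non-overlapping square windows; the function name and array shapes are hypothetical, not the released code.

```python
import numpy as np

def soft_histogram(x, centers, widths, window):
    """Soft, differentiable histogram of a 2-D feature map (hypothetical sketch).

    x:       (H, W) feature map
    centers: (B,) bin centers (learnable parameters in a network)
    widths:  (B,) bin widths (learnable parameters in a network)
    window:  side length of the non-overlapping local windows
    Returns  (B, H // window, W // window) average bin memberships per window.
    """
    # RBF membership of every value in every bin: exp(-(width * (x - center))^2)
    diff = x[None, :, :] - centers[:, None, None]
    votes = np.exp(-(widths[:, None, None] * diff) ** 2)
    # Average the memberships within each local window (ragged edges dropped)
    B, H, W = votes.shape
    h, w = H // window, W // window
    votes = votes[:, : h * window, : w * window]
    return votes.reshape(B, h, window, w, window).mean(axis=(2, 4))

x = np.array([[0.0, 0.0, 1.0, 1.0],
              [0.0, 0.0, 1.0, 1.0]])
hist = soft_histogram(x, centers=np.array([0.0, 1.0]),
                      widths=np.array([10.0, 10.0]), window=2)
```

Here the left window falls entirely in the bin centered at 0 and the right window in the bin centered at 1. In a network the same computation can be expressed with convolutions and average pooling so that automatic differentiation supplies gradients for the centers and widths; numpy is used only to show the forward pass.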

Peanut Maturity Classification using Hyperspectral Imagery

Oct 25, 2019

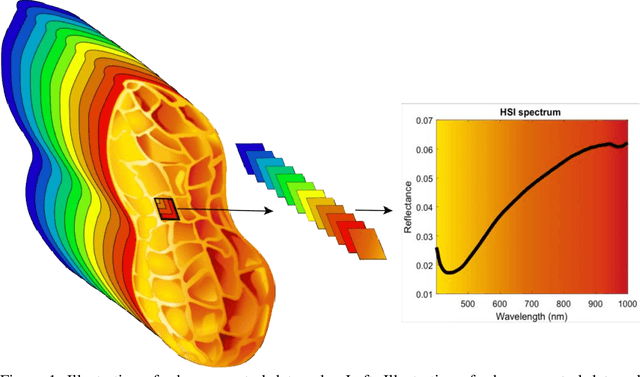

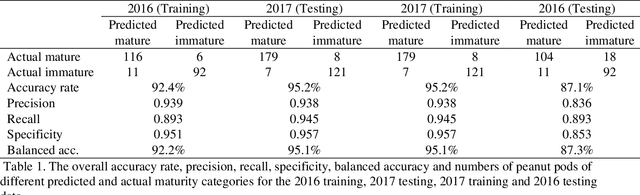

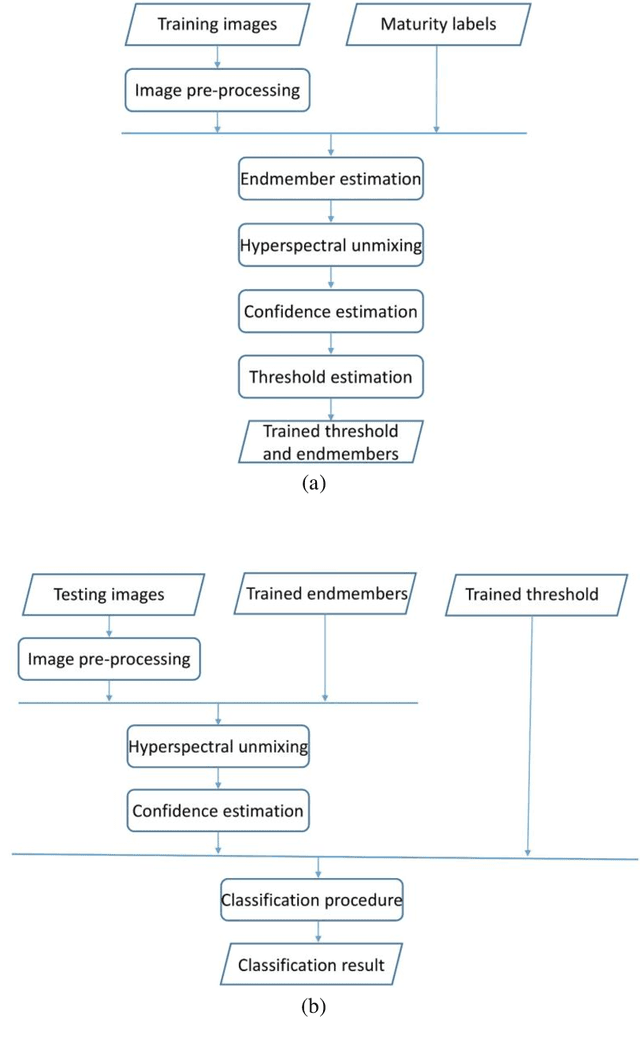

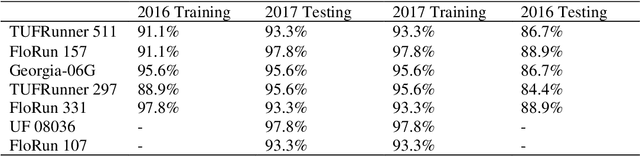

Abstract: Seed maturity in peanut (Arachis hypogaea L.) determines economic return to a producer because of its impact on seed weight (yield), and it critically influences seed vigor and other quality characteristics. During seed development, the inner mesocarp layer of the pericarp (hull) transitions in color from white to black as the seed matures. The maturity assessment process involves removing the exocarp of the hull and visually sorting the mesocarp color into classes ranging from immature (white, yellow, orange) to mature (brown and black). This visual color classification is time-consuming because the exocarp must be removed manually. In addition, the visual classification process relies on human assessment of colors, which leads to large variability in color classification from observer to observer. A more objective, digital imaging approach to peanut maturity is needed, ideally one that does not require removing the hull's exocarp. This study examined the use of a hyperspectral imaging (HSI) process to determine pod maturity with intact pericarps. The HSI method leveraged spectral differences between mature and immature pods within a classification algorithm to identify mature and immature pods. The results showed high classification accuracy that was consistent across samples from different years and cultivars. In addition, the proposed method was capable of estimating a continuous-valued, pixel-level maturity value for individual peanut pods, providing a valuable tool for seed quality research. This new method addresses the labor intensity and subjective error that affect all current methods of peanut maturity determination.
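The abstract does not specify the classifier. As a purely hypothetical illustration of how a continuous, pixel-level maturity value can be produced and rolled up to a pod-level label, the sketch projects each pixel spectrum onto the line between an assumed mean immature spectrum (score 0) and an assumed mean mature spectrum (score 1). The reference spectra, the 0.5 threshold, and the function names are all assumptions, not the method used in the study.

```python
import numpy as np

def maturity_scores(pixels, immature_mean, mature_mean):
    """Hypothetical continuous maturity score in [0, 1] for each pixel.

    pixels: (N, bands) spectra from one pod; *_mean: (bands,) reference spectra.
    Each spectrum is projected onto the segment from the immature reference
    (score 0) to the mature reference (score 1), then clipped to [0, 1].
    """
    direction = mature_mean - immature_mean
    t = (pixels - immature_mean) @ direction / (direction @ direction)
    return np.clip(t, 0.0, 1.0)

def pod_maturity(pixels, immature_mean, mature_mean, threshold=0.5):
    """Average the pixel scores over a pod and threshold them (assumed rule)."""
    score = float(maturity_scores(pixels, immature_mean, mature_mean).mean())
    return score, score >= threshold

immature = np.array([0.2, 0.4, 0.6])   # made-up 3-band reference spectra
mature = np.array([0.6, 0.4, 0.2])
pod = np.array([[0.6, 0.4, 0.2], [0.5, 0.4, 0.3]])
score, is_mature = pod_maturity(pod, immature, mature)
```

The per-pixel scores give a maturity map within each pod, while the averaged score supports a single mature/immature decision.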

Multi-Target Multiple Instance Learning for Hyperspectral Target Detection

Sep 07, 2019

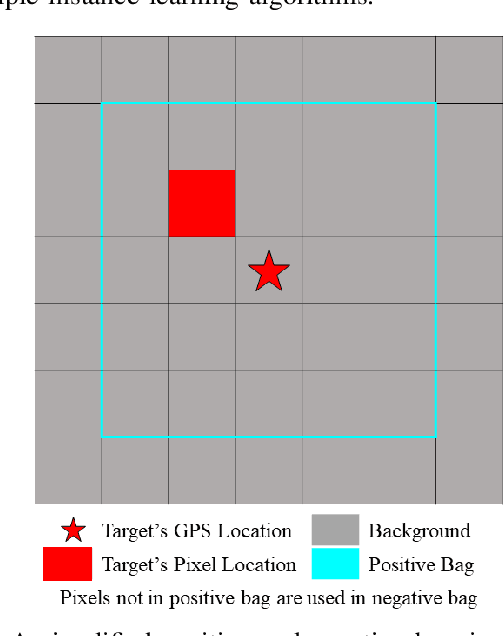

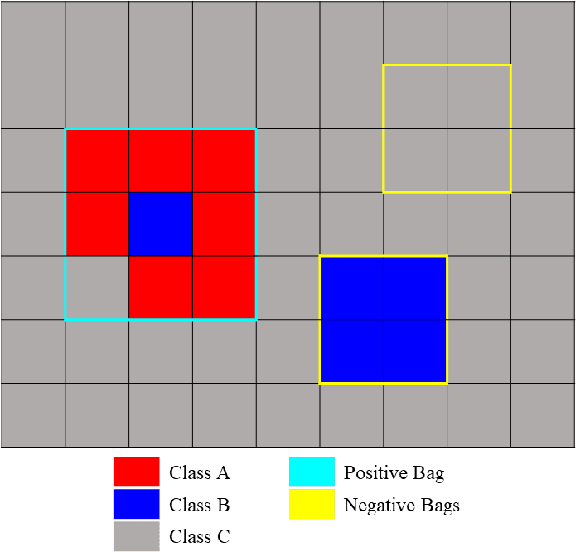

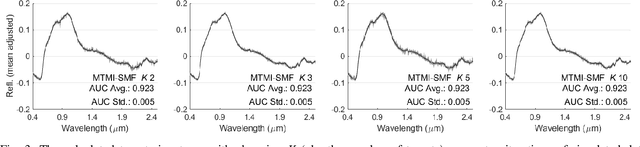

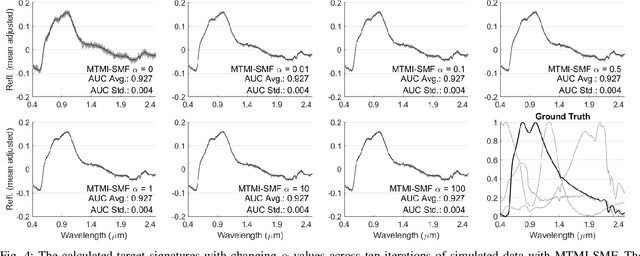

Abstract: In remote sensing, it is often difficult to acquire or collect a large, accurately labeled dataset. This difficulty stems from several issues, including but not limited to the study site's spatial area and accessibility, errors in global positioning system (GPS) measurements, and mixed pixels caused by an image's spatial resolution. An approach with two variations is proposed that estimates multiple target signatures from mixed training samples with imprecise labels: the Multi-Target Multiple Instance Adaptive Cosine Estimator (Multi-Target MI-ACE) and the Multi-Target Multiple Instance Spectral Match Filter (Multi-Target MI-SMF). The proposed methods address the problems above by directly considering the multiple-instance, imprecisely labeled dataset, and they learn a dictionary of target signatures that optimizes detection against a background using the Adaptive Cosine Estimator (ACE) and Spectral Match Filter (SMF). The algorithms have two primary steps: initialization and optimization. The initialization process determines diverse target representatives, while the optimization process simultaneously updates the target representatives to maximize detection and learns the optimal number of signatures to describe the target class. Three experiments were designed to test the proposed algorithms: a simulated hyperspectral dataset, the MUUFL Gulfport hyperspectral dataset collected over the University of Southern Mississippi-Gulfpark Campus, and the AVIRIS hyperspectral dataset collected over Santa Barbara County, California. Both simulated and real hyperspectral target detection experiments show that the proposed algorithms are effective at learning target signatures and performing target detection.
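For reference, the two detectors named above score a pixel x against a target signature s using background mean μ and covariance Σ. The signed ACE is sᵀΣ⁻¹(x−μ) / (√(sᵀΣ⁻¹s) · √((x−μ)ᵀΣ⁻¹(x−μ))), and the SMF is sᵀΣ⁻¹(x−μ) / √(sᵀΣ⁻¹s). The sketch below implements both and, as an assumption about how a learned dictionary is applied, scores each pixel by its best-matching signature. The function names and the max rule are illustrative choices, not taken from the paper.

```python
import numpy as np

def background_stats(background):
    """Mean and inverse covariance of background spectra, shape (N, bands)."""
    mu = background.mean(axis=0)
    cov_inv = np.linalg.inv(np.cov(background, rowvar=False))
    return mu, cov_inv

def ace(X, s, mu, cov_inv):
    """Signed ACE score of each pixel in X (N, bands) for signature s (bands,)."""
    Xc = X - mu
    num = Xc @ cov_inv @ s
    s_norm = np.sqrt(s @ cov_inv @ s)
    x_norm = np.sqrt(np.einsum("ij,jk,ik->i", Xc, cov_inv, Xc))
    return num / (s_norm * x_norm)

def smf(X, s, mu, cov_inv):
    """Spectral matched filter score of each pixel."""
    return (X - mu) @ cov_inv @ s / np.sqrt(s @ cov_inv @ s)

def multi_target(detector, X, signatures, mu, cov_inv):
    """Assumed combination rule: keep each pixel's best-matching signature."""
    return np.max([detector(X, s, mu, cov_inv) for s in signatures], axis=0)

rng = np.random.default_rng(0)
mu, cov_inv = background_stats(rng.normal(size=(500, 3)))
s1, s2 = np.array([1.0, 0.0, 0.0]), np.array([0.0, 1.0, 0.0])
pixels = np.vstack([mu + 2 * s1, mu + 3 * s2])   # each pixel matches one signature
scores = multi_target(ace, pixels, [s1, s2], mu, cov_inv)
```

ACE is scale invariant, so a pixel lying exactly along one signature (after removing the background mean) scores 1 regardless of its magnitude; the SMF score grows with that magnitude instead.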

Investigation of Initialization Strategies for the Multiple Instance Adaptive Cosine Estimator

Apr 30, 2019

Abstract: Sensors that use electromagnetic induction (EMI) to excite a response in conducting bodies have long been investigated for subsurface explosive hazard detection. In particular, EMI sensors have been used to discriminate between different types of objects and to detect objects with low metal content. One successful, previously investigated approach is the Multiple Instance Adaptive Cosine Estimator (MI-ACE). In this paper, a number of new initialization techniques for MI-ACE are proposed and evaluated in terms of performance and speed. The cross-validated learned signatures, as well as the learned background statistics, are used with the Adaptive Cosine Estimator (ACE) to generate confidence maps, which are clustered into alarms. Alarms are scored against ground truth, and the initialization approaches are compared.
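To make "initialization" concrete, here is one simple strategy, offered as an assumption and not necessarily one of the techniques evaluated in the paper: whiten all data with background statistics, try every instance from the positive bags as a starting signature, and keep the one that maximizes an MI-ACE-style objective (mean of the best ACE score per positive bag minus mean ACE over negative instances). All function names and the synthetic data are hypothetical.

```python
import numpy as np

def whiten(X, mu, cov):
    """Whiten spectra with background statistics and scale rows to unit length,
    so ACE against a unit-length whitened signature is a dot product."""
    evals, evecs = np.linalg.eigh(cov)
    Z = (X - mu) @ evecs / np.sqrt(evals)
    return Z / np.linalg.norm(Z, axis=1, keepdims=True)

def mi_objective(s, pos_bags, neg):
    """Mean best ACE score per positive bag minus mean ACE on negatives."""
    return np.mean([np.max(bag @ s) for bag in pos_bags]) - np.mean(neg @ s)

def greedy_init(pos_bags, neg):
    """Try each positive-bag instance as the starting signature; keep the best."""
    candidates = np.vstack(pos_bags)
    scores = [mi_objective(c, pos_bags, neg) for c in candidates]
    return candidates[int(np.argmax(scores))]

rng = np.random.default_rng(1)
background = rng.normal(size=(300, 10))
mu, cov = background.mean(axis=0), np.cov(background, rowvar=False)
target = mu + 4.0 * np.ones(10)            # hypothetical target response
# Each positive bag: five background-like instances plus one target instance
pos_bags = [whiten(np.vstack([rng.normal(size=(5, 10)), target]), mu, cov)
            for _ in range(5)]
s0 = greedy_init(pos_bags, whiten(background, mu, cov))
```

This exhaustive search costs one objective evaluation per positive instance, which is the kind of speed versus quality trade-off an initialization comparison has to weigh.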

Comparison of Possibilistic Fuzzy Local Information C-Means and Possibilistic K-Nearest Neighbors for Synthetic Aperture Sonar Image Segmentation

Apr 01, 2019

Abstract: Synthetic aperture sonar (SAS) can generate high-resolution images of the seafloor, and segmentation algorithms can then be used to partition these images into different seafloor environments. In this paper, we compare two possibilistic segmentation approaches. Possibilistic approaches can detect novel or outlier environments as well as well-known classes. The Possibilistic Fuzzy Local Information C-Means (PFLICM) algorithm has previously been applied to segment SAS imagery. Additionally, the Possibilistic K-Nearest Neighbors (PKNN) algorithm has been used in other domains such as landmine detection and hyperspectral imagery. Specifically, we compare the segmentation performance of a semi-supervised approach using PFLICM and a supervised method using PKNN. We include final segmentation results on multiple SAS images and a quantitative assessment of each algorithm.
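The key property of a possibilistic classifier is that class memberships (typicalities) are not forced to sum to one, so a sample far from every known class can be flagged as novel. The sketch below is a simple possibilistic variant of k-NN, assumed for illustration; the PKNN formulation used in the paper may differ. Typicality decays exponentially with the mean distance to the k nearest training samples of each class, and `eta`, `min_typicality`, and the function names are hypothetical parameters.

```python
import numpy as np

def typicalities(x, train_X, train_y, k=3, eta=1.0):
    """Possibilistic typicality of sample x for each class, in (0, 1].

    Typicality decays with the mean distance from x to its k nearest training
    samples of that class. Values are not normalized across classes, so a
    sample far from every class scores low everywhere.
    """
    classes = np.unique(train_y)
    typ = np.empty(len(classes))
    for i, c in enumerate(classes):
        dists = np.linalg.norm(train_X[train_y == c] - x, axis=1)
        typ[i] = np.exp(-np.sort(dists)[:k].mean() / eta)
    return classes, typ

def assign(x, train_X, train_y, k=3, eta=1.0, min_typicality=0.1):
    """Return the most typical class, or None when x looks like a novel class."""
    classes, typ = typicalities(x, train_X, train_y, k, eta)
    return None if typ.max() < min_typicality else int(classes[np.argmax(typ)])

# Two toy "seafloor environments" in a 2-D feature space
train_X = np.array([[0, 0], [0, 1], [1, 0], [10, 10], [10, 11], [11, 10]], float)
train_y = np.array([0, 0, 0, 1, 1, 1])
```

For example, `assign(np.array([0.2, 0.2]), train_X, train_y)` returns class 0, while a point such as `(50, -50)` has low typicality for both classes and is returned as `None`, the outlier case that a probabilistic k-NN would instead force into one of the known classes.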

Comparison of Hand-held WEMI Target Detection Algorithms

Mar 22, 2019

Abstract: Wide-band electromagnetic induction (WEMI) sensors have been used for a number of years in subsurface detection of explosive hazards. While WEMI sensors have proven effective at localizing objects exhibiting large magnetic responses, detecting objects lacking or containing very low amounts of conductive material can be challenging. In this paper, we compare the detection performance of a number of target detection algorithms from the literature. In the comparison, methods are tested on two real-world data sets: one containing relatively low amounts of ground noise, and the other exhibiting highly magnetic soil interference. Results are quantitatively evaluated through receiver operating characteristic (ROC) curves and are used to highlight the strengths and weaknesses of the compared approaches in hand-held explosive hazard detection.
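An ROC curve is traced by sweeping a detection threshold from high to low and recording, at each step, the fraction of true targets detected (probability of detection) against the fraction of non-targets alarmed on (probability of false alarm). The sketch assumes one confidence per scored alarm with a binary target label; explosive-hazard studies often plot false alarm rate per unit area instead, which would change only the x-axis normalization. Function names are illustrative.

```python
import numpy as np

def roc_curve(confidences, labels):
    """Return (p_fa, p_d) arrays traced by lowering a detection threshold.

    confidences: detector output per alarm; labels: 1 for a true target, 0 otherwise.
    Tied confidences are broken by input order (a simplification).
    """
    order = np.argsort(-np.asarray(confidences, float), kind="stable")
    hits = np.asarray(labels)[order].astype(bool)
    p_d = np.concatenate([[0.0], np.cumsum(hits) / hits.sum()])
    p_fa = np.concatenate([[0.0], np.cumsum(~hits) / (~hits).sum()])
    return p_fa, p_d

def area_under(p_fa, p_d):
    """Area under the ROC curve by the trapezoid rule."""
    return float(np.sum(np.diff(p_fa) * (p_d[1:] + p_d[:-1]) / 2))

p_fa, p_d = roc_curve([0.9, 0.8, 0.7, 0.6], [1, 0, 1, 0])
```

In this toy example the strongest alarm is a target but the second is a false alarm, giving an area of 0.75; a detector that ranked both targets first would reach 1.0.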

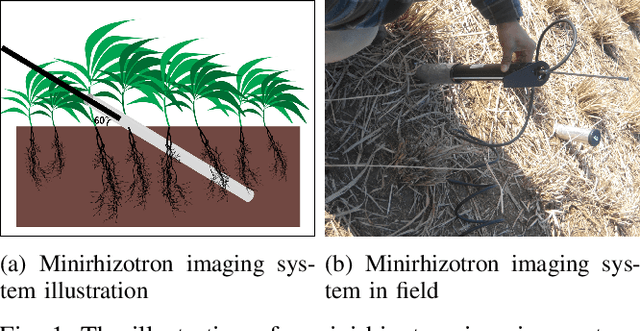

Overcoming Small Minirhizotron Datasets Using Transfer Learning

Mar 22, 2019

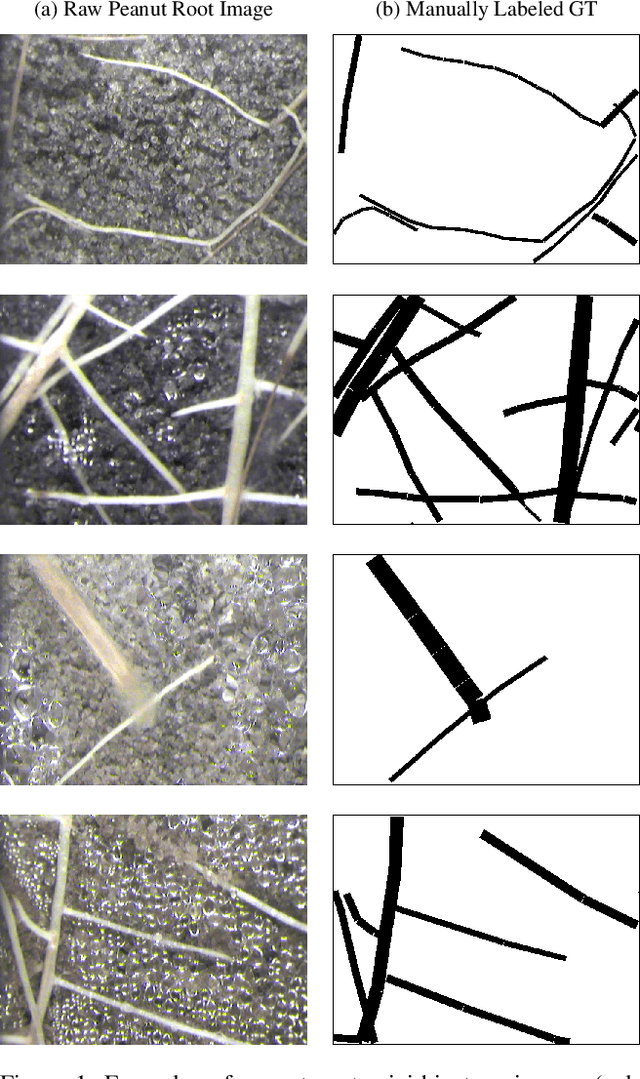

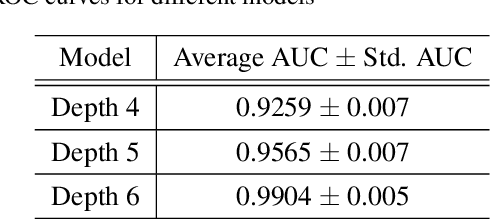

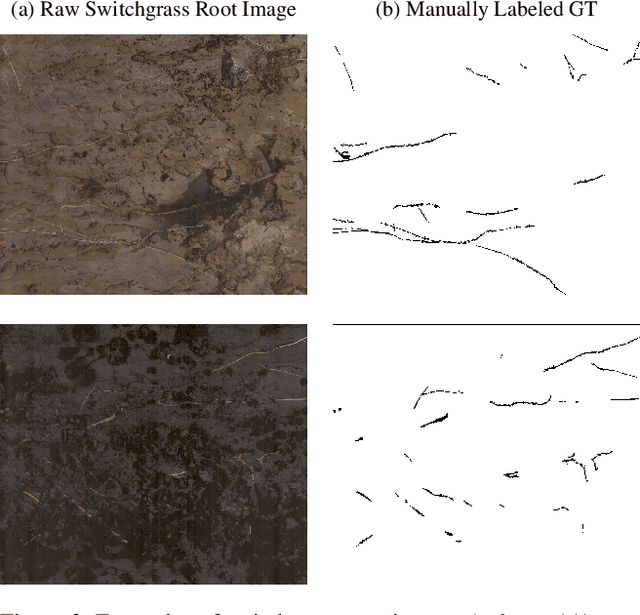

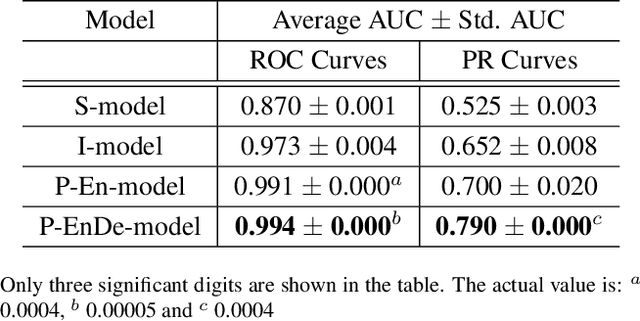

Abstract: Minirhizotron technology is widely used for studying the development of roots. Such systems collect visible-wavelength color imagery of plant roots in situ by scanning an imaging system within a clear tube driven into the soil. Automated analysis of root systems could facilitate new scientific discoveries that would be critical to addressing the world's pressing food, resource, and climate issues. A key component of automated analysis of plant roots from imagery is the automated pixel-level segmentation of roots from their surrounding soil. Supervised learning techniques appear to be an appropriate tool for this challenge given varying local soil and root conditions; however, the lack of sufficient annotated training data is a major limitation because manual labeling is error-prone and time-consuming. In this paper, we investigate the use of deep neural networks based on the U-net architecture for automated, precise pixel-wise root segmentation in minirhizotron imagery. We compiled two minirhizotron image datasets for this study: one with 17,550 peanut root images and another with 28 switchgrass root images. Both datasets were paired with manually labeled ground truth masks. We trained three neural networks with different architectures on the larger peanut root dataset to explore the effect of network depth on segmentation performance. To tackle the more limited switchgrass root dataset, we showed that models initialized with features pre-trained on the peanut dataset and then fine-tuned on the switchgrass dataset can improve segmentation performance significantly. We obtained 99% segmentation accuracy in switchgrass imagery using only 21 training images. We also observed that features pre-trained on a closely related but moderately sized dataset like our peanut dataset are more effective than features pre-trained on the large but unrelated ImageNet dataset.
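Pixel-wise segmentation is usually scored by comparing a predicted binary mask against the ground truth mask. The abstract does not define its accuracy measure, so the sketch below assumes plain pixel accuracy and adds a root-class intersection-over-union (IoU) for comparison; the function name and toy masks are hypothetical.

```python
import numpy as np

def segmentation_scores(pred, truth):
    """Pixel accuracy and root-class intersection-over-union (IoU) for
    binary masks where 1 marks root pixels and 0 marks soil."""
    pred, truth = np.asarray(pred, bool), np.asarray(truth, bool)
    accuracy = float((pred == truth).mean())
    union = np.logical_or(pred, truth).sum()
    iou = float(np.logical_and(pred, truth).sum() / union) if union else 1.0
    return accuracy, iou

truth = np.zeros((10, 10), dtype=int)
truth[0, :2] = 1                       # two root pixels in a 100-pixel image
all_soil = np.zeros_like(truth)        # a prediction that finds no roots
acc, iou = segmentation_scores(all_soil, truth)
```

Because roots typically occupy a small fraction of each image, a prediction containing no roots at all can still reach high pixel accuracy (0.98 in this toy case) while its root IoU is 0, which is why an overlap score is a useful companion to accuracy for this task.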

Complex Scene Classification of PolSAR Imagery based on a Self-paced Learning Approach

Mar 18, 2019

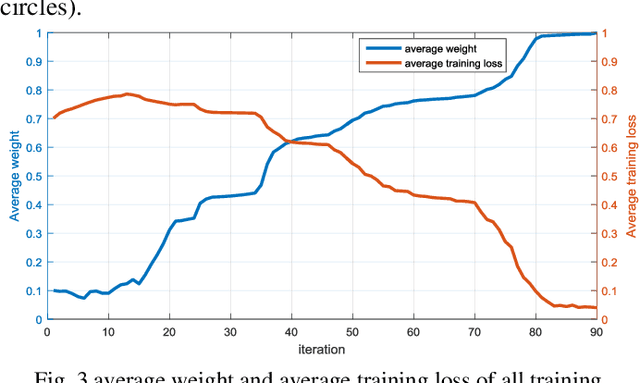

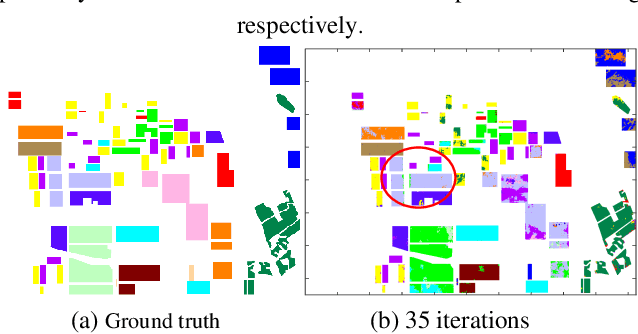

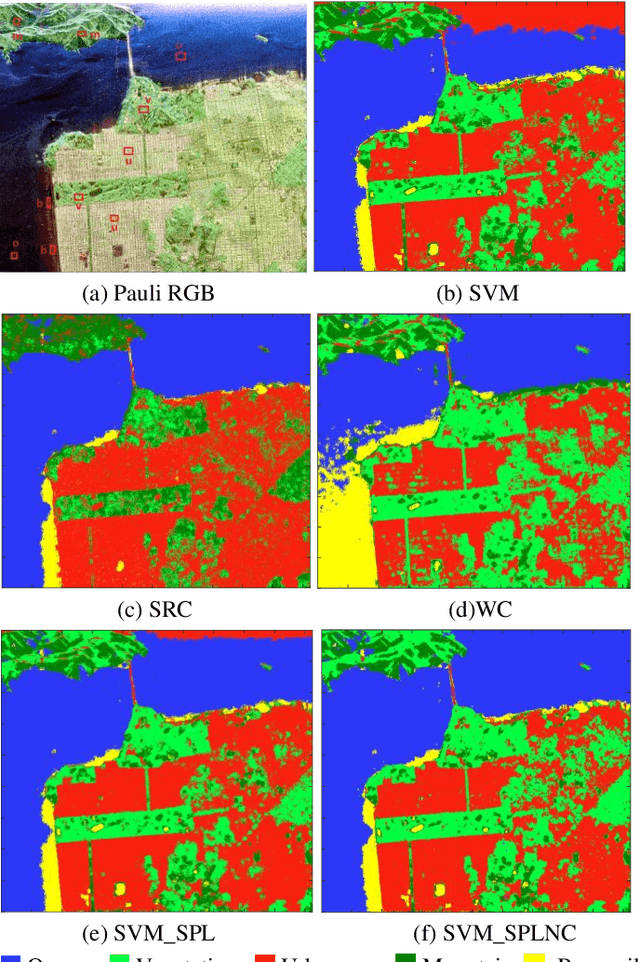

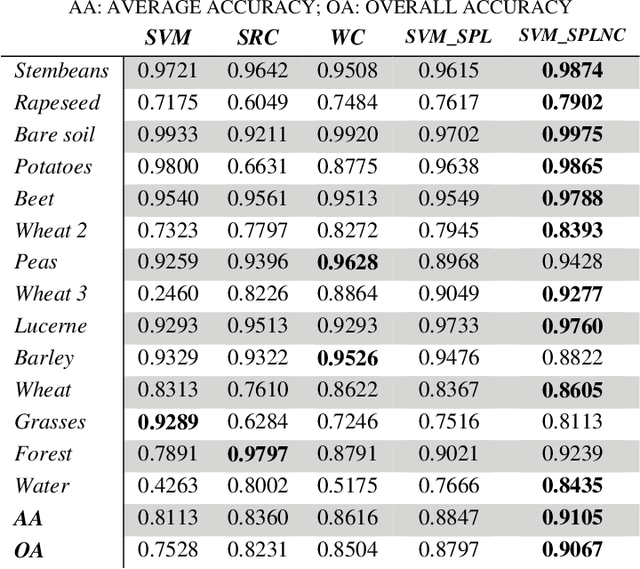

Abstract: Existing polarimetric synthetic aperture radar (PolSAR) image classification methods cannot achieve satisfactory performance on complex scenes characterized by several types of land cover with significant levels of noise or similar scattering properties across land cover types. Hence, we propose a supervised classification method aimed at constructing a classifier based on self-paced learning (SPL). SPL has been demonstrated to be effective at dealing with complex data while providing classifier. In this paper, a novel Support Vector Machine (SVM) algorithm based on SPL with neighborhood constraints (SVM_SPLNC) is proposed. The proposed method uses the easiest samples first to obtain an initial parameter vector. Then, more complex samples are gradually incorporated to update the parameter vector iteratively. Moreover, neighborhood constraints are introduced during the training process to further improve performance. Experimental results on three real PolSAR images show that the proposed method performs well on complex scenes.
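The easy-to-hard schedule described above can be sketched as an alternation: select the samples whose current hinge loss is below a threshold `lam`, refit a linear SVM on them starting from the current parameters, then raise `lam` so harder samples join in later rounds. This is a minimal sketch under stated assumptions: the SVM is trained by plain subgradient descent, a brief fit on all data serves as the warm start used to rank easiness, and the neighborhood constraints of SVM_SPLNC are not modeled. All names and schedule values are hypothetical.

```python
import numpy as np

def hinge_losses(w, b, X, y):
    """Per-sample hinge loss; y holds labels in {-1, +1}."""
    return np.maximum(0.0, 1.0 - y * (X @ w + b))

def train_svm(X, y, w, b, C=1.0, epochs=200, lr=0.01):
    """Linear SVM by subgradient descent on the primal, starting from (w, b)."""
    for _ in range(epochs):
        active = y * (X @ w + b) < 1          # samples violating the margin
        grad_w = w - C * (y[active][:, None] * X[active]).sum(axis=0)
        grad_b = -C * y[active].sum()
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b

def self_paced_svm(X, y, lam=0.5, growth=1.5, rounds=5):
    """Alternate between selecting easy samples (loss < lam) and refitting on
    them, raising lam each round so harder samples are admitted gradually."""
    # Assumed warm start: a brief fit on all data, used only to rank easiness
    w, b = train_svm(X, y, np.zeros(X.shape[1]), 0.0, epochs=20)
    easy = np.ones(len(y), dtype=bool)
    for _ in range(rounds):
        easy = hinge_losses(w, b, X, y) < lam
        if len(np.unique(y[easy])) == 2:      # need both classes to fit an SVM
            w, b = train_svm(X[easy], y[easy], w, b)
        lam *= growth
    return w, b, easy

X = np.array([[3, 3], [4, 3], [3, 4], [-3, -3], [-4, -3], [-3, -4]], float)
y = np.array([1, 1, 1, -1, -1, -1], float)
w, b, easy = self_paced_svm(X, y)
```

Each refit starts from the previous parameter vector, so later, harder samples update the existing classifier rather than replacing it, mirroring the iterative update the abstract describes.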

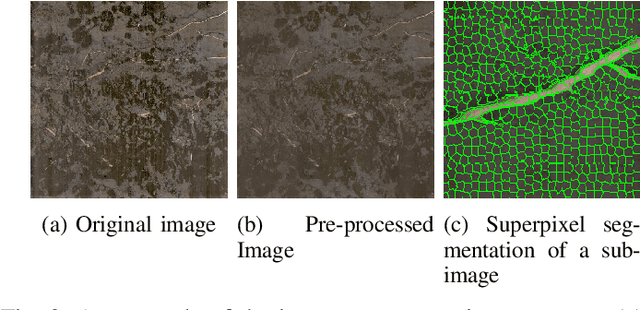

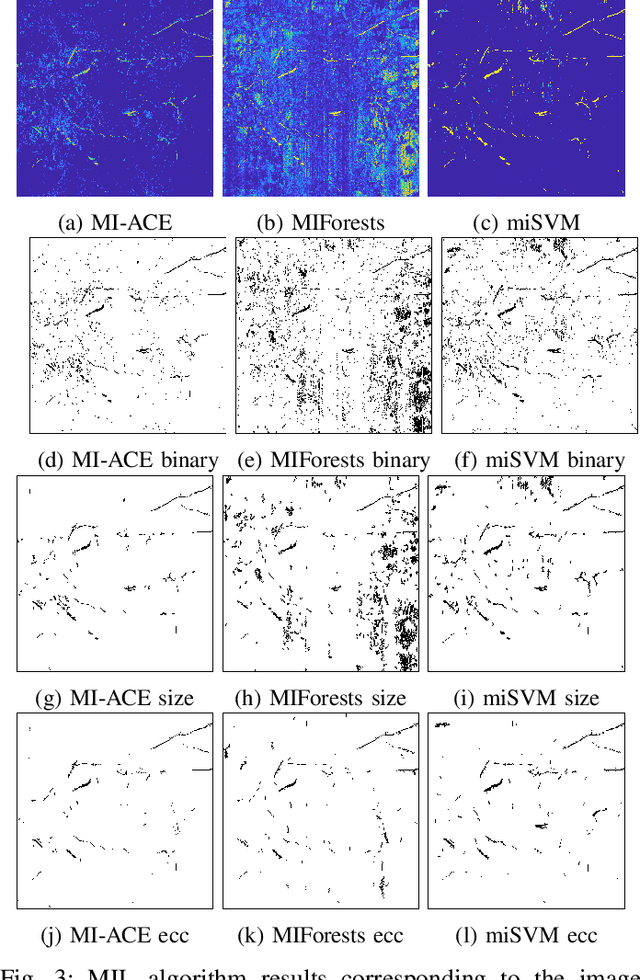

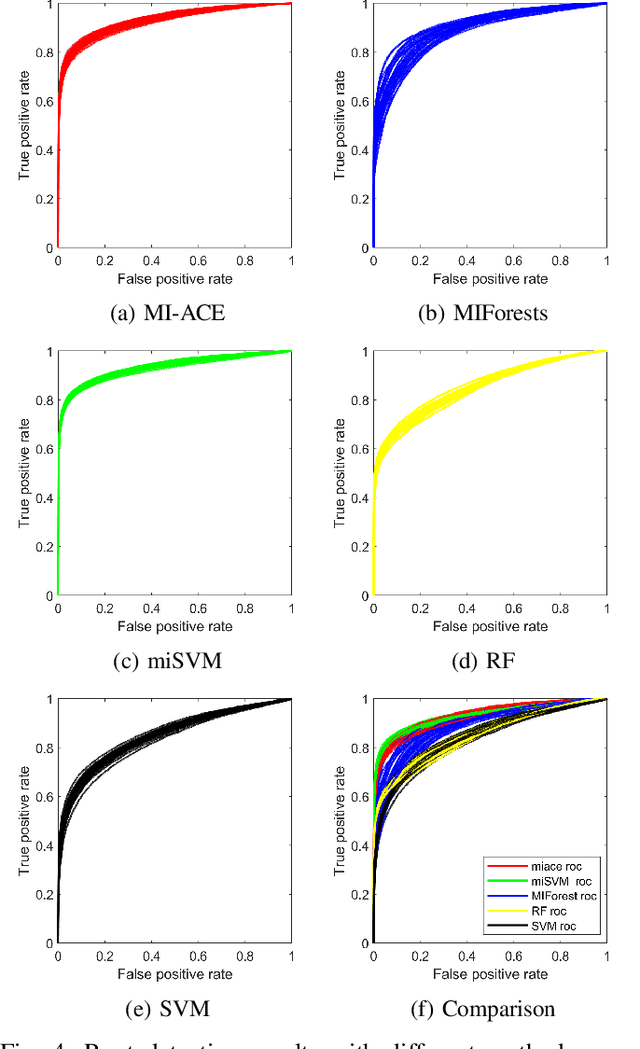

Root Identification in Minirhizotron Imagery with Multiple Instance Learning

Mar 11, 2019

Abstract: In this paper, we propose multiple instance learning (MIL) algorithms that automatically perform root detection and segmentation in minirhizotron imagery using only image-level labels. Root and soil characteristics vary from location to location; thus, supervised machine learning approaches that are trained with local data provide the best ability to identify and segment roots in minirhizotron imagery. However, labeling roots for training data (or otherwise) is an extremely tedious and time-consuming task. This paper aims to address this problem by labeling data at the image level (rather than at the individual root or root pixel level) and training algorithms to perform individual root pixel-level segmentation using MIL strategies. Three MIL methods (MI-ACE, miSVM, MIForests) were applied to root detection and compared to non-MIL approaches. The results show that MIL methods improve root segmentation in challenging minirhizotron imagery and reduce the labeling burden. In our results, miSVM outperformed the other methods. The MI-ACE algorithm was a close second, with the added advantages that it learned an interpretable root signature, which identified the traits used to distinguish roots from soil, and that it did not require parameter selection.
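miSVM turns image-level labels into pixel-level ones by alternating two steps: fit an instance-level SVM, then re-impute instance labels inside positive bags from the SVM scores, forcing every positive bag to keep at least one positive instance while negative bags stay entirely negative. The sketch below follows that heuristic with an assumed subgradient-descent linear SVM; here each bag stands for an image and each instance for a pixel feature vector, and all function names and toy data are hypothetical.

```python
import numpy as np

def train_svm(X, y, C=1.0, epochs=300, lr=0.01):
    """Linear SVM by subgradient descent on the primal objective."""
    w, b = np.zeros(X.shape[1]), 0.0
    for _ in range(epochs):
        active = y * (X @ w + b) < 1
        w = w - lr * (w - C * (y[active][:, None] * X[active]).sum(axis=0))
        b = b + lr * C * y[active].sum()
    return w, b

def mi_svm(bags, bag_labels, max_iter=10):
    """miSVM-style heuristic: alternate between fitting an instance-level SVM
    and re-imputing instance labels inside positive bags."""
    bag_labels = np.asarray(bag_labels, float)
    X = np.vstack(bags)
    bag_id = np.concatenate([np.full(len(bag), i) for i, bag in enumerate(bags)])
    y = bag_labels[bag_id].copy()             # start: instances inherit bag labels
    for _ in range(max_iter):
        w, b = train_svm(X, y)
        scores = X @ w + b
        new_y = np.where(scores > 0, 1.0, -1.0)
        new_y[bag_labels[bag_id] < 0] = -1.0  # negative bags stay all negative
        for i in np.flatnonzero(bag_labels > 0):
            idx = np.flatnonzero(bag_id == i)
            if not (new_y[idx] > 0).any():    # each positive bag keeps a positive
                new_y[idx[np.argmax(scores[idx])]] = 1.0
        if np.array_equal(new_y, y):          # labels stable: converged
            break
        y = new_y
    return w, b, y, bag_id

# Toy "images": root-like pixels near (2, 2), soil-like pixels near (-2, -2)
bags = [np.array([[2.0, 2.0], [-2.0, -2.0]]),
        np.array([[2.5, 2.0], [-2.0, -2.5]]),
        np.array([[-2.0, -2.0], [-2.5, -2.0], [-3.0, -2.5]]),
        np.array([[-2.0, -3.0], [-3.0, -2.0], [-2.5, -2.5]])]
w, b, inst_y, bag_id = mi_svm(bags, [1, 1, -1, -1])
```

Only the bag (image) labels are supplied; the returned instance labels are the pixel-level segmentation the method infers.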

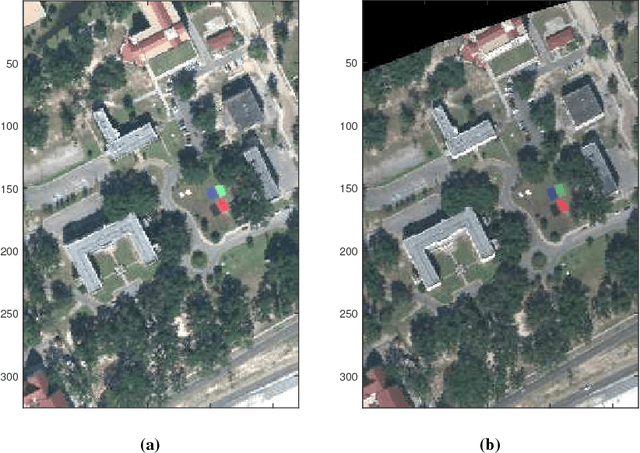

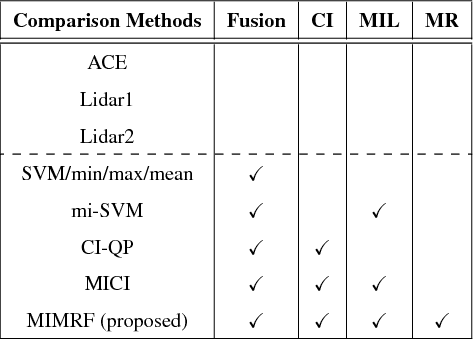

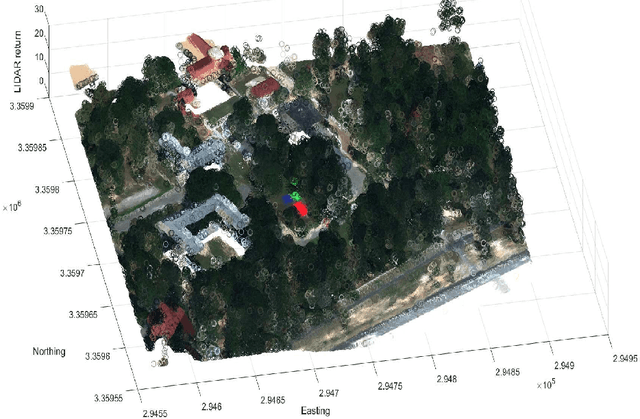

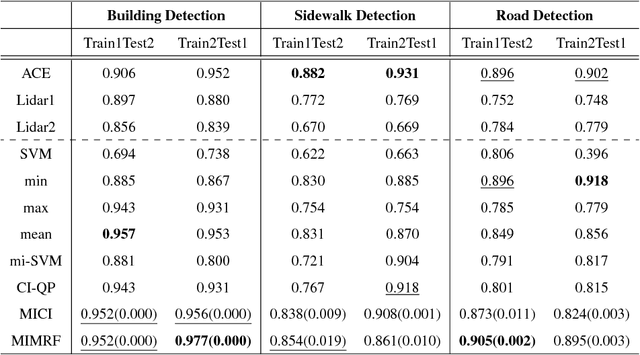

Multi-Resolution Multi-Modal Sensor Fusion For Remote Sensing Data With Label Uncertainty

May 02, 2018

Abstract: In remote sensing, each sensor can provide complementary or reinforcing information, so it is valuable to fuse outputs from multiple sensors to boost overall performance. Previous supervised fusion methods often require accurate labels for each pixel in the training data. However, in many remote sensing applications, pixel-level labels are difficult or infeasible to obtain. In addition, outputs from multiple sensors may have different resolutions or modalities (such as rasterized hyperspectral imagery versus LiDAR 3D point clouds). This paper presents a Multiple Instance Multi-Resolution Fusion (MIMRF) framework that can fuse multi-resolution and multi-modal sensor outputs while learning from ambiguously and imprecisely labeled training data. Experiments were conducted on the MUUFL Gulfport hyperspectral and LiDAR data set and a remotely sensed soybean and weed data set. Results show improved, consistent performance on scene understanding and agricultural applications compared to traditional fusion methods.
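The abstract does not name the aggregation operator; to our understanding MIMRF builds on the discrete Choquet integral, so treat that as an assumption. Given confidences h from m sensors sorted in decreasing order, h₍₁₎ ≥ … ≥ h₍ₘ₎, and a fuzzy measure g over subsets of sensors, the Choquet integral is Σₖ h₍ₖ₎ [g(Aₖ) − g(Aₖ₋₁)], where Aₖ holds the k most confident sensors and g(A₀) = 0. The sketch below evaluates this for a single fused output; learning g from bag-level labels and handling mismatched resolutions are not shown, and the names and measure values are hypothetical.

```python
import numpy as np

def choquet(h, g):
    """Discrete Choquet integral of sensor outputs h w.r.t. fuzzy measure g.

    h: confidences from m sensors, shape (m,)
    g: dict mapping frozenset of sensor indices -> measure value; assumed
       monotone, with g[frozenset()] == 0 and g[all sensors] == 1.
    """
    total, prev, subset = 0.0, 0.0, set()
    for idx in np.argsort(h)[::-1]:          # sensors by decreasing confidence
        subset.add(int(idx))
        g_cur = g[frozenset(subset)]
        total += h[idx] * (g_cur - prev)     # weight = increase in the measure
        prev = g_cur
    return total

g = {frozenset(): 0.0, frozenset({0}): 0.3,
     frozenset({1}): 0.5, frozenset({0, 1}): 1.0}
fused = choquet(np.array([0.8, 0.2]), g)     # 0.8 * 0.3 + 0.2 * 0.7
```

The measure controls how sensors interact: an additive g reproduces a weighted average, while setting every nonempty subset to 1 turns the integral into the maximum of the inputs, so learning g lets the fusion move between such behaviors.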
