Akshay Jain

Phase-Only Positioning in Distributed MIMO Under Phase Impairments: AP Selection Using Deep Learning

Feb 04, 2026

Abstract: Carrier phase positioning (CPP) can enable cm-level accuracy in next-generation wireless systems, and recent literature shows that accuracy remains high even with phase-only measurements in distributed MIMO (D-MIMO). However, the impact of phase synchronization errors on such systems remains insufficiently explored. To address this gap, we first show that the proposed hyperbola intersection method achieves highly accurate positioning even in the presence of phase synchronization errors, provided it is trained on data that reflect such impairments. We then introduce a deep learning (DL)-based D-MIMO antenna point (AP) selection framework that ensures high-precision localization under phase synchronization errors. Simulation results show that the proposed framework improves positioning accuracy compared to prior-art methods while reducing inference complexity by approximately 19.7%.

Failure Tolerant Phase-Only Indoor Positioning via Deep Learning

Aug 20, 2025

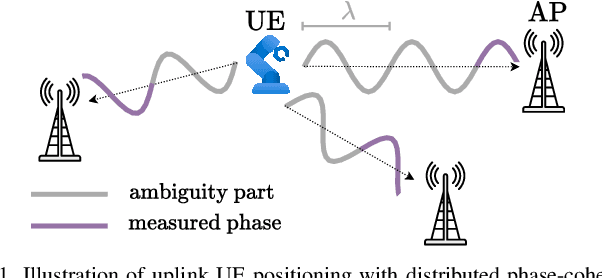

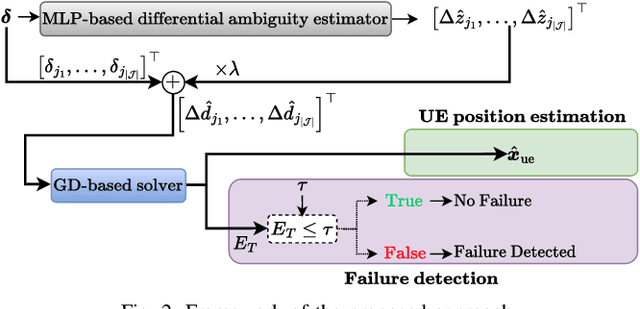

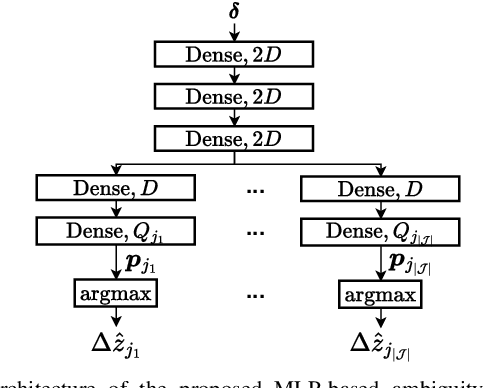

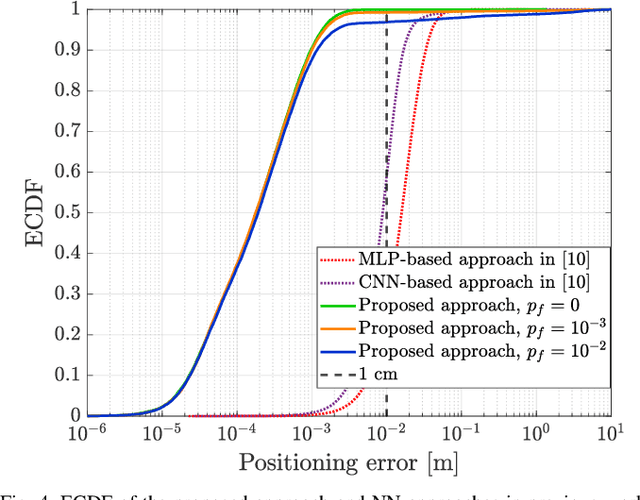

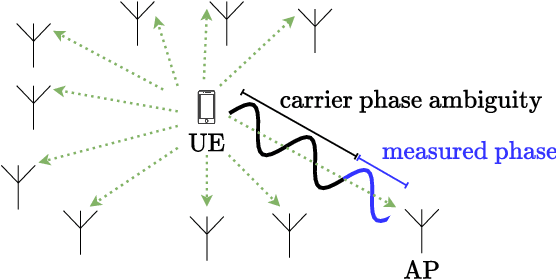

Abstract: High-precision localization is becoming a crucial asset for next-generation wireless systems. Carrier phase positioning (CPP) enables sub-meter to centimeter-level accuracy and is gaining interest in 5G-Advanced standardization. While CPP typically complements time-of-arrival (ToA) measurements, recent literature has introduced a phase-only positioning approach for distributed antenna/MIMO systems that has minimal bandwidth requirements and uses deep learning (DL) under ideal hardware assumptions. In more practical scenarios, however, antenna failures can severely degrade performance. In this paper, we address the challenging phase-only positioning task and propose a new DL-based localization approach that harnesses the so-called hyperbola intersection principle and clearly outperforms previous methods. Additionally, we propose a processing and learning mechanism that is robust to antenna element failures. Our results show that the proposed DL model achieves robust and accurate positioning despite antenna impairments, demonstrating the viability of data-driven, impairment-tolerant phase-only positioning mechanisms. A comprehensive set of numerical results demonstrates large improvements in localization accuracy over prior-art methods.
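
The hyperbola intersection principle can be illustrated with a small brute-force sketch. The wrapped phase difference between two APs fixes their range difference d_i - d_j only up to an integer number of wavelengths, so each AP pair constrains the UE to a family of hyperbola branches with the two APs as foci; the position is where the families of all pairs intersect. This is an illustration under stated assumptions, not the authors' DL model: the 3.5 GHz carrier, the four-AP layout in a 2 m x 2 m area, the noise-free phases, and the exhaustive grid search are all hypothetical choices.

```python
import itertools
import math

# Hypothetical setup (not from the abstract): 3.5 GHz carrier, four APs at the
# corners of a 2 m x 2 m area, noise-free phase measurements.
WAVELENGTH = 299_792_458.0 / 3.5e9
APS = [(0.0, 0.0), (2.0, 0.0), (0.0, 2.0), (2.0, 2.0)]
PAIRS = list(itertools.combinations(range(len(APS)), 2))


def range_differences(p):
    """d_i - d_j for every AP pair; each value picks one hyperbola branch with foci AP_i, AP_j."""
    d = [math.dist(p, ap) for ap in APS]
    return [d[i] - d[j] for i, j in PAIRS]


def measure_phase_differences(p):
    """Wrapped phase differences: the integer number of wavelengths is lost."""
    return [(2 * math.pi * rd / WAVELENGTH) % (2 * math.pi) for rd in range_differences(p)]


def hyperbola_cost(p, measured):
    """Zero only if p lies on some hyperbola of every pair's family (their intersection).

    cos() ignores whole turns, so every integer ambiguity is accepted equally.
    """
    return sum(
        1.0 - math.cos(2 * math.pi * rd / WAVELENGTH - m)
        for rd, m in zip(range_differences(p), measured)
    )


def locate(measured, step=0.01, size=2.0):
    """Exhaustive grid search over [0, size]^2 for the best hyperbola intersection."""
    n = int(round(size / step)) + 1
    grid = ((ix * step, iy * step) for ix in range(n) for iy in range(n))
    return min(grid, key=lambda p: hyperbola_cost(p, measured))


true_pos = (73 * 0.01, 121 * 0.01)  # placed on the grid for an exact, noise-free check
estimate = locate(measure_phase_differences(true_pos))
print("true:", true_pos, "estimate:", estimate)
```

Because one pair's hyperbolas repeat every fraction of a wavelength, the cost surface has many near-zero local minima; with noisy phases or fewer APs a search like this can settle on a wrong intersection, which illustrates why phase-only positioning is hard.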

Phase-Only Positioning: Overcoming Integer Ambiguity Challenge through Deep Learning

Jun 09, 2025

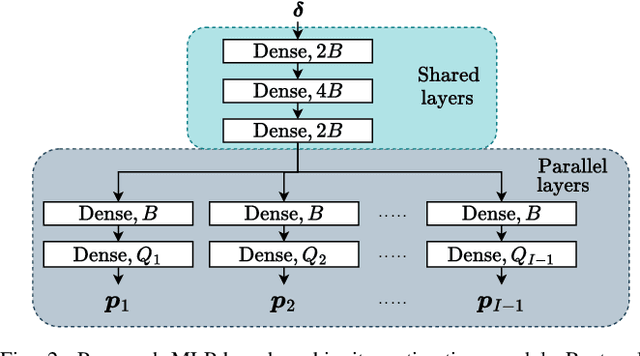

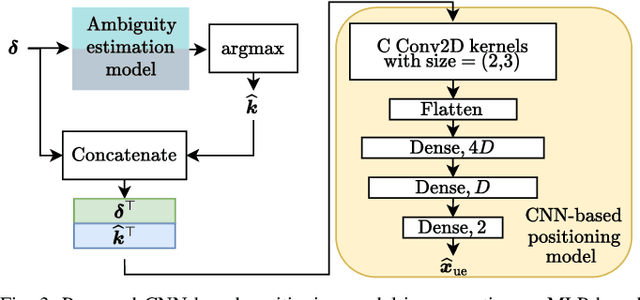

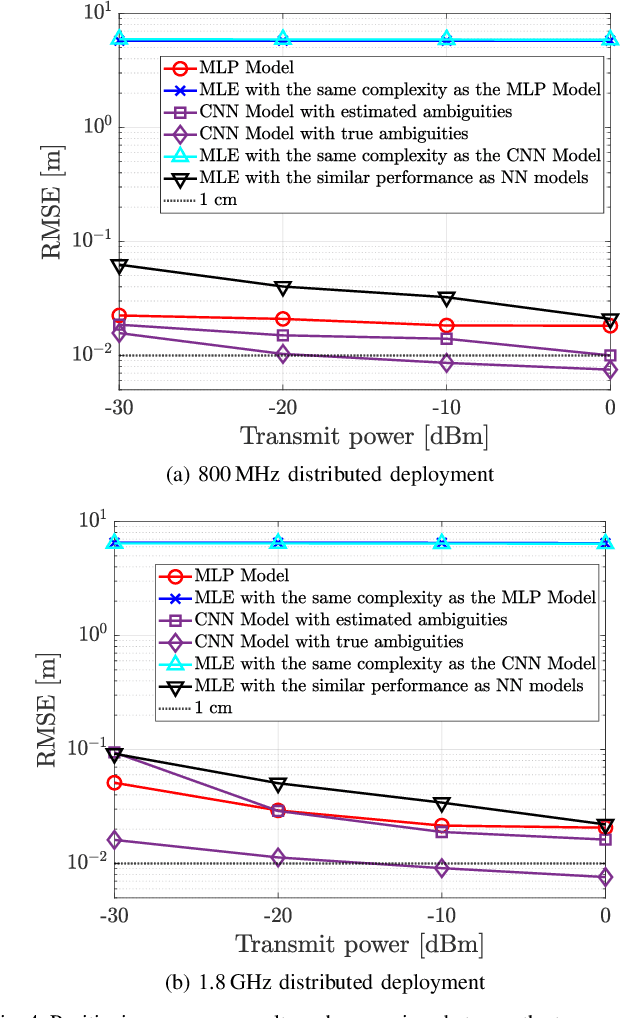

Abstract: This paper investigates uplink carrier phase positioning (CPP) in a cell-free (CF) or distributed antenna system context, assuming the challenging case where only phase measurements are used as observations. In general, CPP can achieve sub-meter to centimeter-level accuracy but is challenged by the integer ambiguity problem. In this work, we propose two deep learning approaches for phase-only positioning that overcome the integer ambiguity challenge. The first directly uses phase measurements, while the second first estimates the integer ambiguities and then combines them with the phase measurements for improved accuracy. Our numerical results show that, depending on the approach and parameter configuration, inference complexity is reduced by two to three orders of magnitude compared to a maximum likelihood baseline. This emphasizes the potential of the developed deep learning solutions for efficient and precise positioning in future CF 6G systems.
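
To make the integer ambiguity concrete: a noise-free carrier phase only reveals the AP-UE distance modulo one wavelength, d = (φ/2π + N)λ, with the integer N unknown. The minimal sketch below assumes a hypothetical 3.5 GHz carrier (λ ≈ 8.57 cm) and lists every distance consistent with a single phase reading; it illustrates the problem only and is not either of the proposed DL approaches.

```python
import math

# Hypothetical numbers for illustration only: a 3.5 GHz carrier.
C = 299_792_458.0          # speed of light, m/s
FC = 3.5e9                 # assumed carrier frequency, Hz
WAVELENGTH = C / FC        # about 0.0857 m


def wrapped_phase(distance_m):
    """Noise-free carrier phase in [0, 2*pi): only distance modulo one wavelength survives."""
    return (2 * math.pi * distance_m / WAVELENGTH) % (2 * math.pi)


def candidate_distances(phase_rad, max_distance_m):
    """All d = (phase / (2*pi) + N) * wavelength with integer N >= 0 and d <= max_distance_m."""
    frac = phase_rad / (2 * math.pi)
    n_max = int(max_distance_m / WAVELENGTH)
    candidates = ((frac + n) * WAVELENGTH for n in range(n_max + 1))
    return [d for d in candidates if d <= max_distance_m]


true_distance = 4.321      # metres, hypothetical AP-UE distance
phi = wrapped_phase(true_distance)
cands = candidate_distances(phi, max_distance_m=5.0)
best = min(cands, key=lambda d: abs(d - true_distance))
print(f"{len(cands)} candidates; closest to the truth: {best:.4f} m")
```

Within 5 m, a single phase reading already leaves several dozen equally valid candidates spaced λ apart, which is why phase-only positioning must combine many APs or estimate N explicitly, as the second approach does.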

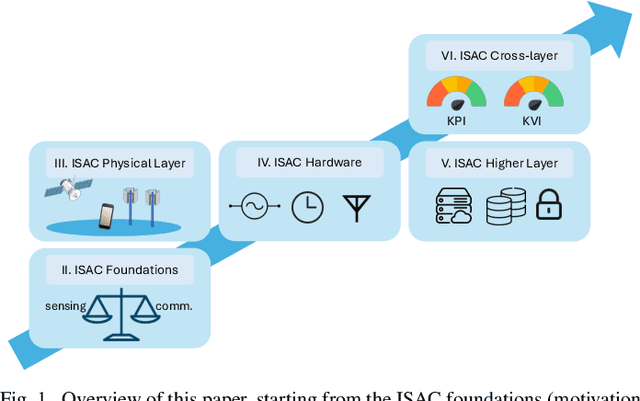

Cross-layer Integrated Sensing and Communication: A Joint Industrial and Academic Perspective

May 16, 2025

Abstract: Integrated sensing and communication (ISAC) enables radio systems to simultaneously sense and communicate with their environment. This paper, developed within the Hexa-X-II project funded by the European Union, presents a comprehensive cross-layer vision for ISAC in 6G networks, integrating insights from physical-layer design, hardware architectures, AI-driven intelligence, and protocol-level innovations. We begin by revisiting the foundational principles of ISAC, highlighting synergies and trade-offs between sensing and communication across different integration levels. Enabling technologies, such as multiband operation, massive and distributed MIMO, non-terrestrial networks, reconfigurable intelligent surfaces, and machine learning, are analyzed in conjunction with hardware considerations including waveform design, synchronization, and full-duplex operation. To bridge implementation and system-level evaluation, we introduce a quantitative cross-layer framework linking design parameters to key performance and value indicators. By synthesizing perspectives from both academia and industry, this paper outlines how deeply integrated ISAC can transform 6G into a programmable and context-aware platform supporting applications from reliable wireless access to autonomous mobility and digital twinning.

Non-Uniform Illumination Attack for Fooling Convolutional Neural Networks

Sep 05, 2024

Abstract: Convolutional Neural Networks (CNNs) have made remarkable strides; however, they remain vulnerable, particularly to minor image perturbations that humans can easily recognize. This weakness, exploited by so-called 'attacks', underscores the limited robustness of CNNs and the need for research into strengthening their resistance to such manipulations. This study introduces a novel Non-Uniform Illumination (NUI) attack technique, in which images are subtly altered using varying NUI masks. Extensive experiments are conducted on widely accepted datasets, including CIFAR10, TinyImageNet, and CalTech256, focusing on image classification with 12 different NUI attack models. The resilience of VGG, ResNet, MobileNetV3-small, and InceptionV3 models against NUI attacks is evaluated. Our results show a substantial decline in the CNN models' classification accuracy when subjected to NUI attacks, indicating their vulnerability to non-uniform illumination. To mitigate this, a defense strategy is proposed that adds NUI-attacked images, generated through the new NUI transformation, to the training set. The results demonstrate a significant improvement in CNN model performance on perturbed images affected by NUI attacks. This strategy seeks to bolster CNN models' resilience against NUI attacks.
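
As a rough illustration of what a non-uniform illumination mask does, the sketch below darkens an image along a linear brightness ramp and clips it to the valid range. The ramp shape, the `strength` and `angle_deg` parameters, and the function name `nui_attack` are hypothetical; the abstract does not specify the 12 NUI attack models.

```python
import numpy as np


def nui_attack(image, strength=0.4, angle_deg=30.0):
    """Hypothetical NUI perturbation: scale brightness by a linear ramp.

    image: float array in [0, 1], shape (H, W) or (H, W, C).
    strength: fraction of brightness removed at the darkest corner (assumed parameter).
    angle_deg: direction of the illumination gradient (assumed parameter).
    """
    h, w = image.shape[:2]
    yy, xx = np.mgrid[0:h, 0:w]
    theta = np.deg2rad(angle_deg)
    ramp = xx * np.cos(theta) + yy * np.sin(theta)
    ramp = (ramp - ramp.min()) / (ramp.max() - ramp.min() + 1e-12)  # rescale to [0, 1]
    mask = 1.0 - strength * ramp          # 1 at the bright corner, 1 - strength at the dark one
    if image.ndim == 3:
        mask = mask[..., np.newaxis]      # same mask for every colour channel
    return np.clip(image * mask, 0.0, 1.0)


rng = np.random.default_rng(0)
img = rng.random((32, 32, 3))             # stand-in for a CIFAR10-sized RGB image
attacked = nui_attack(img)
```

For a defense of the kind described above, one could append `nui_attack` outputs with randomly drawn parameters to the training set; how the paper samples its masks is not stated in the abstract.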

Downlink Power Control based UE-Sided Initial Access for Tactical 5G NR

May 22, 2024

Abstract: Communication technologies play a crucial role on battlefields. They are an indispensable part of any tactical response, whether at the battlefront or inland. Such scenarios require communication technologies that are versatile, scalable, cost-effective, and stealthy. While multiple studies and past products have tried to address these requirements, none has been able to meet all four simultaneously. Hence, in this paper, we propose a tactical solution based on the versatile, scalable, and cost-effective 5G NR system. Our focus is on the initial-access phase, which is subject to a high probability of detection by an eavesdropper. To address this issue, we propose modifications to how the user equipment (UE) performs initial access that lower the probability of detection without affecting standards compliance and without requiring any modifications to the UE chipset implementation. Further, we demonstrate that a simple downlink power control algorithm reduces the probability of detection at an eavesdropper. The result is a 5G NR-based initial-access method that improves stealthiness compared with a vanilla 5G NR implementation.

Demystifying 5G NR Downlink Synchronization for Tactical Networks

May 21, 2024

Abstract: 5G NR is touted as an attractive candidate for tactical networks owing to its versatility, scalability, and low cost. However, tactical networks need to be stealthy, meaning that an adversary should not be able to detect or intercept the tactical communication. In this paper, we investigate the stealthiness of 5G NR by examining the probability with which an adversary that monitors the downlink synchronization signals can detect the presence of the network. We simulate a single-cell, single-eavesdropper scenario and evaluate the probability with which the eavesdropper can detect the synchronization signal block when using either a correlator or an energy detector. We show that this probability is close to 100%, suggesting that out-of-the-box 5G is not suitable for a tactical network. We then propose methods that lower this probability without affecting the performance of a legitimate tactical UE.
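
The two eavesdropper detectors named above can be compared with a small Monte Carlo sketch. The energy detector needs no knowledge of the signal, while the correlator coherently matches a known sequence, which typically makes it far more sensitive at low SNR. The sketch uses a random unit-modulus reference as a hypothetical stand-in for the actual 5G NR synchronization sequences, a flat AWGN channel, and arbitrary sample count, trial count, and false-alarm rate; it is not the paper's simulation setup.

```python
import cmath
import math
import random


def awgn(n, rng):
    """Unit-power complex white Gaussian noise (variance 1/2 per I/Q component)."""
    s = math.sqrt(0.5)
    return [complex(rng.gauss(0.0, s), rng.gauss(0.0, s)) for _ in range(n)]


def energy_stat(x, ref):
    """Energy detector: total received energy; needs no knowledge of the signal (ref unused)."""
    return sum(abs(v) ** 2 for v in x)


def correlator_stat(x, ref):
    """Correlator: coherent match against a known reference sequence."""
    return abs(sum(v * r.conjugate() for v, r in zip(x, ref))) ** 2


def detection_probability(stat, snr_db, n=64, trials=1000, pfa=0.01, seed=0):
    """Monte Carlo P_d at a fixed false-alarm rate; all parameters are illustrative."""
    rng = random.Random(seed)
    # Hypothetical stand-in for a synchronization sequence: unit modulus, random phases.
    ref = [cmath.exp(1j * rng.uniform(0.0, 2 * math.pi)) for _ in range(n)]
    # Threshold: empirical (1 - pfa) quantile of the statistic with noise only.
    h0 = sorted(stat(awgn(n, rng), ref) for _ in range(trials))
    threshold = h0[min(trials - 1, int((1 - pfa) * trials))]
    amp = 10 ** (snr_db / 20)  # per-sample SNR relative to unit noise power
    hits = sum(
        stat([amp * r + w for r, w in zip(ref, awgn(n, rng))], ref) > threshold
        for _ in range(trials)
    )
    return hits / trials


for snr in (-10.0, 0.0):
    print(f"SNR {snr:+.0f} dB: energy P_d = {detection_probability(energy_stat, snr):.2f}, "
          f"correlator P_d = {detection_probability(correlator_stat, snr):.2f}")
```

With these settings one would expect both detectors to fire almost always at 0 dB and the correlator to stay well ahead of the energy detector at -10 dB, consistent with the abstract's finding that an unmodified synchronization signal is easy to detect.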

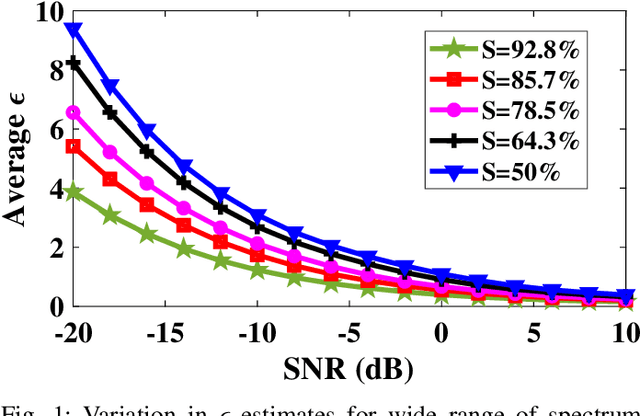

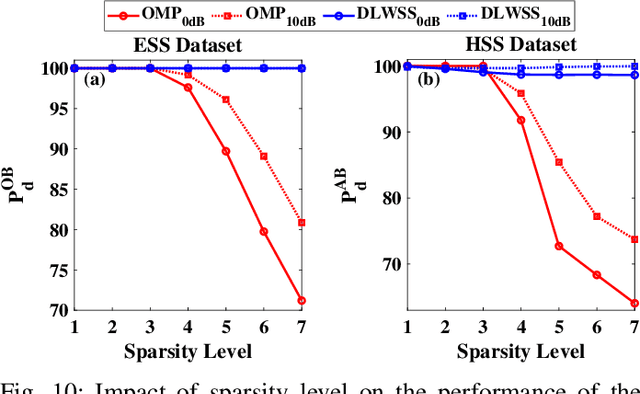

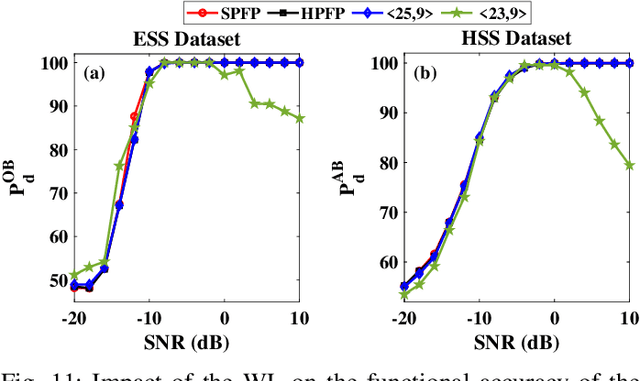

Hardware Software Co-design of Statistical and Deep Learning Frameworks for Wideband Sensing on Zynq System on Chip

Sep 06, 2022

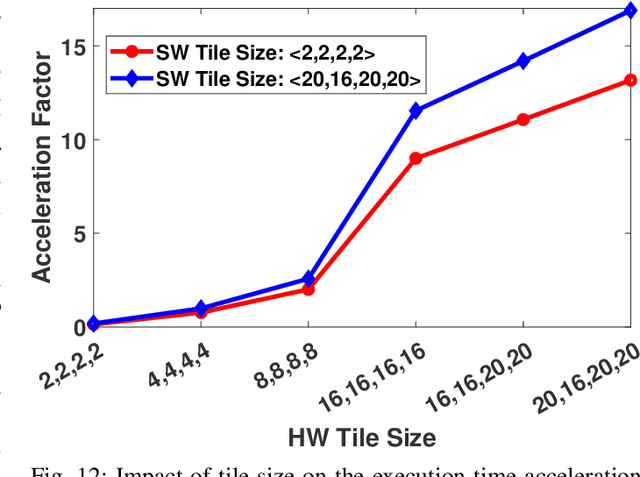

Abstract: With the introduction of spectrum sharing and heterogeneous services in next-generation networks, base stations need to sense the wideband spectrum and identify the spectrum resources to meet the quality-of-service, bandwidth, and latency constraints. Sub-Nyquist sampling (SNS) enables digitization of sparse wideband spectrum without needing Nyquist-rate analog-to-digital converters. However, SNS demands additional signal processing algorithms for spectrum reconstruction, such as the well-known orthogonal matching pursuit (OMP) algorithm, which is also widely used in other compressed sensing applications. The first contribution of this work is an efficient mapping of the OMP algorithm onto the Zynq system-on-chip (ZSoC), which consists of an ARM processor and an FPGA. Experimental analysis shows a significant degradation in OMP performance for sparse spectrum. Also, OMP needs prior knowledge of the spectrum sparsity. As the second contribution, we address these challenges via deep-learning-based architectures and efficiently map them onto the ZSoC platform. Via hardware-software co-design, we consider different versions of the proposed architecture, obtained by partitioning between software (ARM processor) and hardware (FPGA). Resource, power, and execution time comparisons for given memory constraints and a wide range of word lengths are presented for these architectures.
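
OMP itself is a standard greedy algorithm, so a generic NumPy sketch can show why it needs the sparsity level up front. This is a textbook floating-point version, not the paper's ZSoC/FPGA mapping; the matrix sizes, the random Gaussian sensing matrix, and the occupied bins in the usage example are hypothetical.

```python
import numpy as np


def omp(A, y, sparsity, tol=1e-10):
    """Orthogonal matching pursuit: greedily find a `sparsity`-sparse x with A @ x ~= y.

    `sparsity` must be supplied by the caller, i.e. OMP needs the sparsity level
    in advance.
    """
    n = A.shape[1]
    dtype = np.result_type(A, y)
    support = []
    coef = np.zeros(0, dtype=dtype)
    residual = y.astype(dtype)
    for _ in range(sparsity):
        if np.linalg.norm(residual) <= tol:
            break  # y is already explained
        # 1) Matching step: column most correlated with the current residual.
        k = int(np.argmax(np.abs(A.conj().T @ residual)))
        if k in support:
            break  # numerical safeguard
        support.append(k)
        # 2) Orthogonal step: least-squares refit on all selected columns.
        coef, *_ = np.linalg.lstsq(A[:, support], y, rcond=None)
        # 3) Residual is now orthogonal to every selected column.
        residual = y - A[:, support] @ coef
    x = np.zeros(n, dtype=dtype)
    x[support] = coef
    return x


# Hypothetical example: 128 frequency bins, 64 compressed measurements, 3 occupied bins.
rng = np.random.default_rng(0)
A = rng.standard_normal((64, 128)) / np.sqrt(64)
x_true = np.zeros(128)
x_true[[5, 40, 97]] = [1.0, -0.8, 1.5]
x_hat = omp(A, A @ x_true, sparsity=3)
print("recovered support:", np.flatnonzero(np.abs(x_hat) > 1e-6))
```

The `sparsity` argument is exactly the prior knowledge the abstract points to: set it too low and occupied bins are missed; set it too high and, with noisy measurements, the extra iterations fit noise.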

On the Enabling of Multi-user Communications with Reconfigurable Intelligent Surfaces

Jun 12, 2021

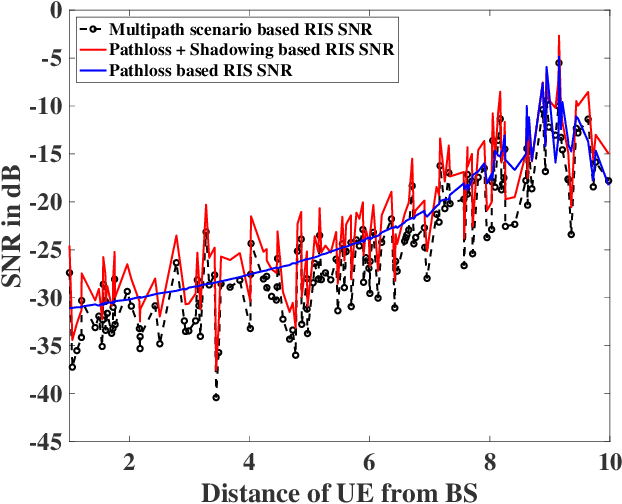

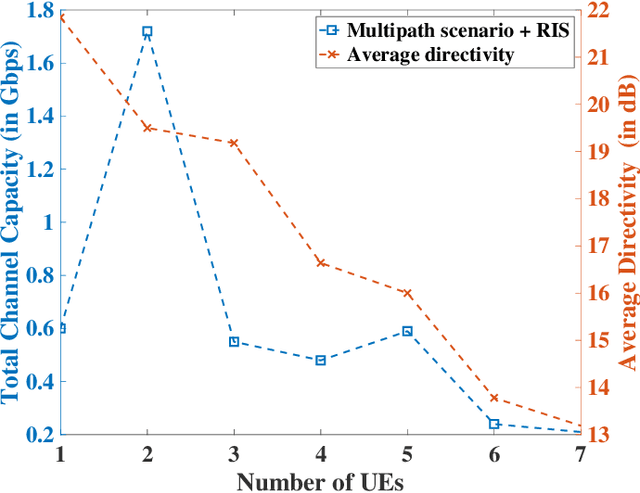

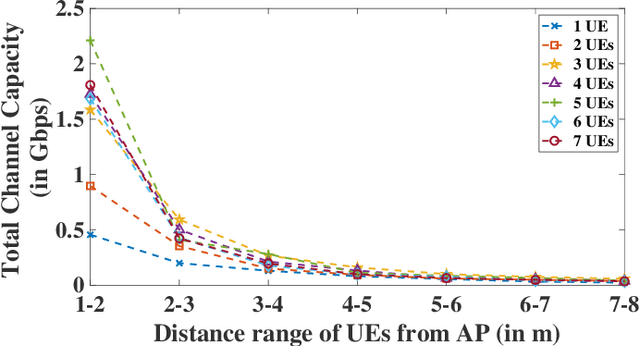

Abstract: A Reconfigurable Intelligent Surface (RIS) composed of programmable actuators is a promising technology, thanks to its capability to manipulate electromagnetic (EM) wavefronts. In particular, RISs have the potential to provide significant performance improvements for wireless networks. To do so, however, the reflection coefficients of the unit cells in the RIS must be properly configured. RISs are sophisticated platforms, so their design and fabrication complexity might be uneconomical for single-user scenarios, whereas an RIS that can serve multiple users justifies the cost. For the first time, we propose an efficient reconfiguration technique that provides a multi-beam radiation pattern. Thanks to the analytical model, the reconfiguration profile is readily available, in contrast to time-consuming optimization techniques. The outcome can pave the way for commercial use of multi-user communication in beyond-5G networks. We analyze the performance of our proposed RIS technology in indoor and outdoor scenarios, given the broadcast mode of operation. These scenarios encompass some of the most challenging conditions that wireless networks encounter. We show that our proposed technique provides sufficient gains in the observed channel capacity when the users are close to the RIS in the indoor office environment. Further, we report an increase of more than one order of magnitude in system throughput in the outdoor environment. The results show that an RIS able to communicate with multiple users can give wireless networks great capacity.

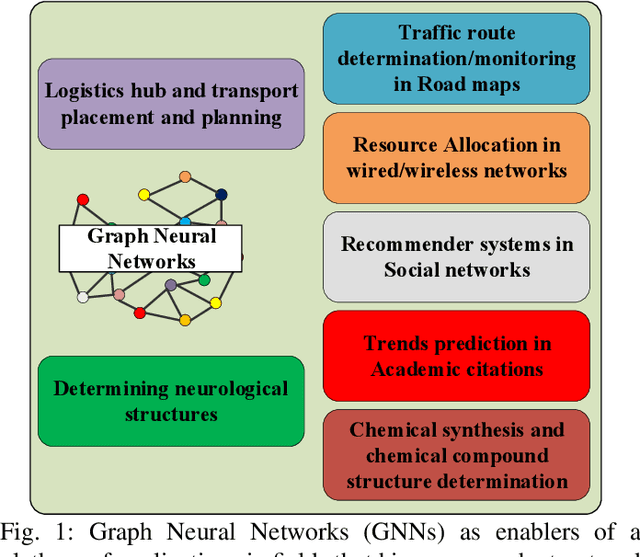

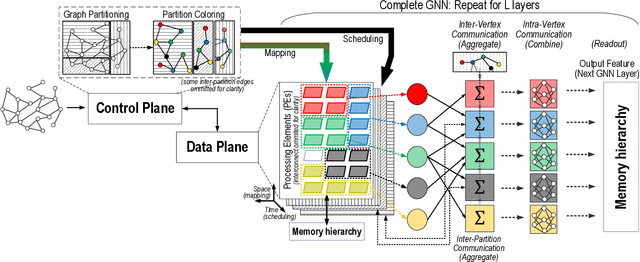

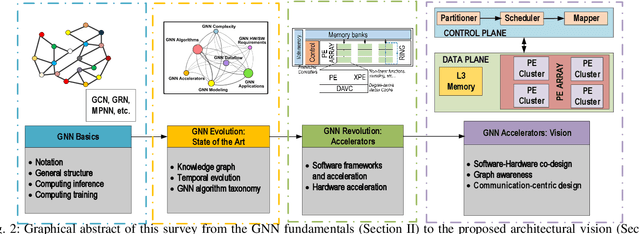

Computing Graph Neural Networks: A Survey from Algorithms to Accelerators

Sep 30, 2020

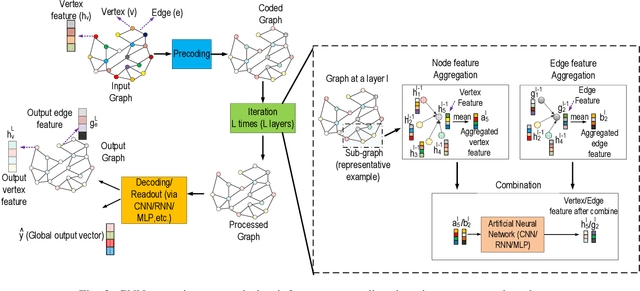

Abstract: Graph Neural Networks (GNNs) have exploded onto the machine learning scene in recent years owing to their capability to model and learn from graph-structured data. Such an ability has strong implications in a wide variety of fields whose data are inherently relational, for which conventional neural networks do not perform well. Indeed, as recent reviews can attest, research in the area of GNNs has grown rapidly and has led to the development of a variety of GNN algorithm variants as well as to the exploration of groundbreaking applications in chemistry, neurology, electronics, and communication networks, among others. At the current stage of research, however, the efficient processing of GNNs is still an open challenge for several reasons. Besides their novelty, GNNs are hard to compute due to their dependence on the input graph, their combination of dense and very sparse operations, and the need to scale to huge graphs in some applications. In this context, this paper aims to make two main contributions. On the one hand, it reviews the field of GNNs from the perspective of computing. This includes a brief tutorial on GNN fundamentals, an overview of the evolution of the field in the last decade, and a summary of the operations carried out in the multiple phases of different GNN algorithm variants. On the other hand, it provides an in-depth analysis of current software and hardware acceleration schemes, from which a hardware-software, graph-aware, and communication-centric vision for GNN accelerators is distilled.
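
The mix of graph-dependent sparse operations and dense operations mentioned above is visible even in a single layer. The NumPy sketch below shows one common variant (mean aggregation with a self-loop, followed by a shared linear transform and ReLU, in the spirit of GCN/GraphSAGE-style layers); the function name and toy graph are hypothetical, and real frameworks and accelerators implement these phases with their own optimized kernels.

```python
import numpy as np


def mean_aggregation_layer(edges, features, weight):
    """One message-passing layer: sparse neighbour aggregation, then a dense transform.

    edges: int array of shape (E, 2) with (source, destination) node indices.
    features: (N, F_in) node features; weight: (F_in, F_out) learnable matrix.
    """
    num_nodes = features.shape[0]
    src, dst = edges[:, 0], edges[:, 1]
    # Sparse, graph-dependent phase: each node sums its own and its in-neighbours'
    # features (self-loop included), then takes the mean.
    agg = features.astype(float)
    count = np.ones(num_nodes)
    np.add.at(agg, dst, features[src])
    np.add.at(count, dst, 1.0)
    agg /= count[:, np.newaxis]
    # Dense phase: one shared matrix multiply and ReLU, independent of graph structure.
    return np.maximum(agg @ weight, 0.0)


# Hypothetical toy graph: a directed 3-cycle 0 -> 1 -> 2 -> 0 with one-hot features.
edges = np.array([[0, 1], [1, 2], [2, 0]])
out = mean_aggregation_layer(edges, np.eye(3), np.eye(3))
print(out)
```

The `np.add.at` scatter is irregular and follows the edge list, while `agg @ weight` is a regular dense product; an accelerator has to serve both patterns efficiently.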
