Advait Bhat

Reactive Writers: How Co-Writing with AI Changes How We Engage with Ideas

Mar 11, 2026

Abstract:Emerging experimental evidence shows that writing with AI assistance can change both the views people express in writing and the opinions they hold afterwards. Yet, we lack substantive understanding of procedural and behavioral changes in co-writing with AI that underlie the observed opinion-shaping power of AI writing tools. We conducted a mixed-methods study, combining retrospective interviews with 19 participants about their AI co-writing experience with a quantitative analysis tracing engagement with ideas and opinions in 1,291 AI co-writing sessions. Our analysis shows that engaging with the AI's suggestions -- reading them and deciding whether to accept them -- becomes a central activity in the writing process, taking away from more traditional processes of ideation and language generation. As writers often do not complete their own ideation before engaging with suggestions, the suggested ideas and opinions seeded directions that writers then elaborated on. At the same time, writers did not notice the AI's influence and felt in full control of their writing, as they -- in principle -- could always edit the final text. We term this shift Reactive Writing: an evaluation-first, suggestion-led writing practice that departs substantially from conventional composing in the presence of AI assistance and is highly vulnerable to AI-induced biases and opinion shifts.

Human Decision-making is Susceptible to AI-driven Manipulation

Feb 11, 2025

Abstract:Artificial Intelligence (AI) systems are increasingly intertwined with daily life, assisting users in executing various tasks and providing guidance on decision-making. This integration introduces risks of AI-driven manipulation, where such systems may exploit users' cognitive biases and emotional vulnerabilities to steer them toward harmful outcomes. Through a randomized controlled trial with 233 participants, we examined human susceptibility to such manipulation in financial (e.g., purchases) and emotional (e.g., conflict resolution) decision-making contexts. Participants interacted with one of three AI agents: a neutral agent (NA) optimizing for user benefit without explicit influence, a manipulative agent (MA) designed to covertly influence beliefs and behaviors, or a strategy-enhanced manipulative agent (SEMA) employing explicit psychological tactics to reach its hidden objectives. By analyzing participants' decision patterns and shifts in their preference ratings post-interaction, we found significant susceptibility to AI-driven manipulation. Particularly, across both decision-making domains, participants interacting with the manipulative agents shifted toward harmful options at substantially higher rates (financial, MA: 62.3%, SEMA: 59.6%; emotional, MA: 42.3%, SEMA: 41.5%) compared to the NA group (financial, 35.8%; emotional, 12.8%). Notably, our findings reveal that even subtle manipulative objectives (MA) can be as effective as employing explicit psychological strategies (SEMA) in swaying human decision-making. By revealing the potential for covert AI influence, this study highlights a critical vulnerability in human-AI interactions, emphasizing the need for ethical safeguards and regulatory frameworks to ensure responsible deployment of AI technologies and protect human autonomy.

Co-Writing with Opinionated Language Models Affects Users' Views

Feb 01, 2023

Abstract:If large language models like GPT-3 preferably produce a particular point of view, they may influence people's opinions on an unknown scale. This study investigates whether a language-model-powered writing assistant that generates some opinions more often than others impacts what users write - and what they think. In an online experiment, we asked participants (N=1,506) to write a post discussing whether social media is good for society. Treatment group participants used a language-model-powered writing assistant configured to argue that social media is good or bad for society. Participants then completed a social media attitude survey, and independent judges (N=500) evaluated the opinions expressed in their writing. Using the opinionated language model affected the opinions expressed in participants' writing and shifted their opinions in the subsequent attitude survey. We discuss the wider implications of our results and argue that the opinions built into AI language technologies need to be monitored and engineered more carefully.

Studying writer-suggestion interaction: A qualitative study to understand writer interaction with aligned/misaligned next-phrase suggestion

Aug 01, 2022

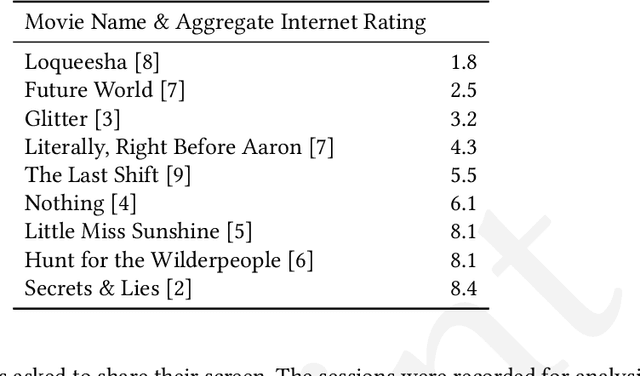

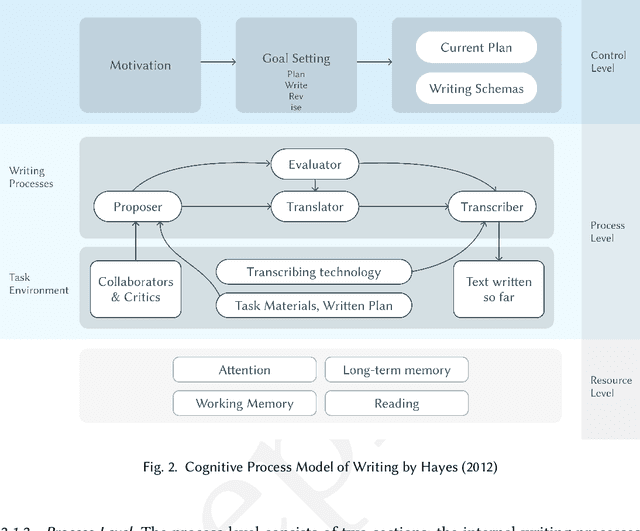

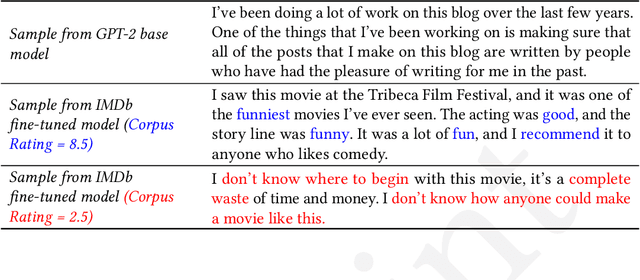

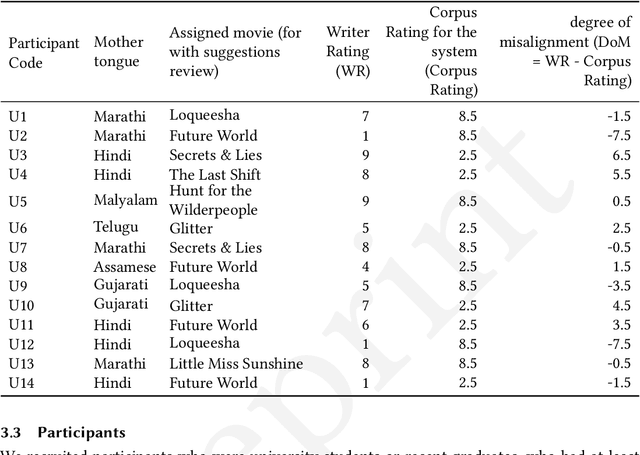

Abstract:We present an exploratory qualitative study to understand how writers interact with next-phrase suggestions. While there has been some quantitative research on the effects of suggestion systems on writing, there has been little qualitative work to understand how writers interact with suggestion systems and how these systems affect their writing process, specifically for non-native English writers. We conducted a study where amateur writers were asked to write two movie reviews each, one without suggestions and one with. We found that writers interact with next-phrase suggestions in various complex ways: they are able to abstract multiple parts of the suggestions and incorporate them within their writing, even when they disagree with the suggestion as a whole. The suggestion system also had various effects on the writing process, contributing to different aspects of it in unique ways. We propose a model of writer-suggestion interaction for writing with GPT-2 for a movie review writing task, describe ways in which the model can be used for future research, and outline opportunities for research and design.
